Perceptron Classifier Explanation Pdf

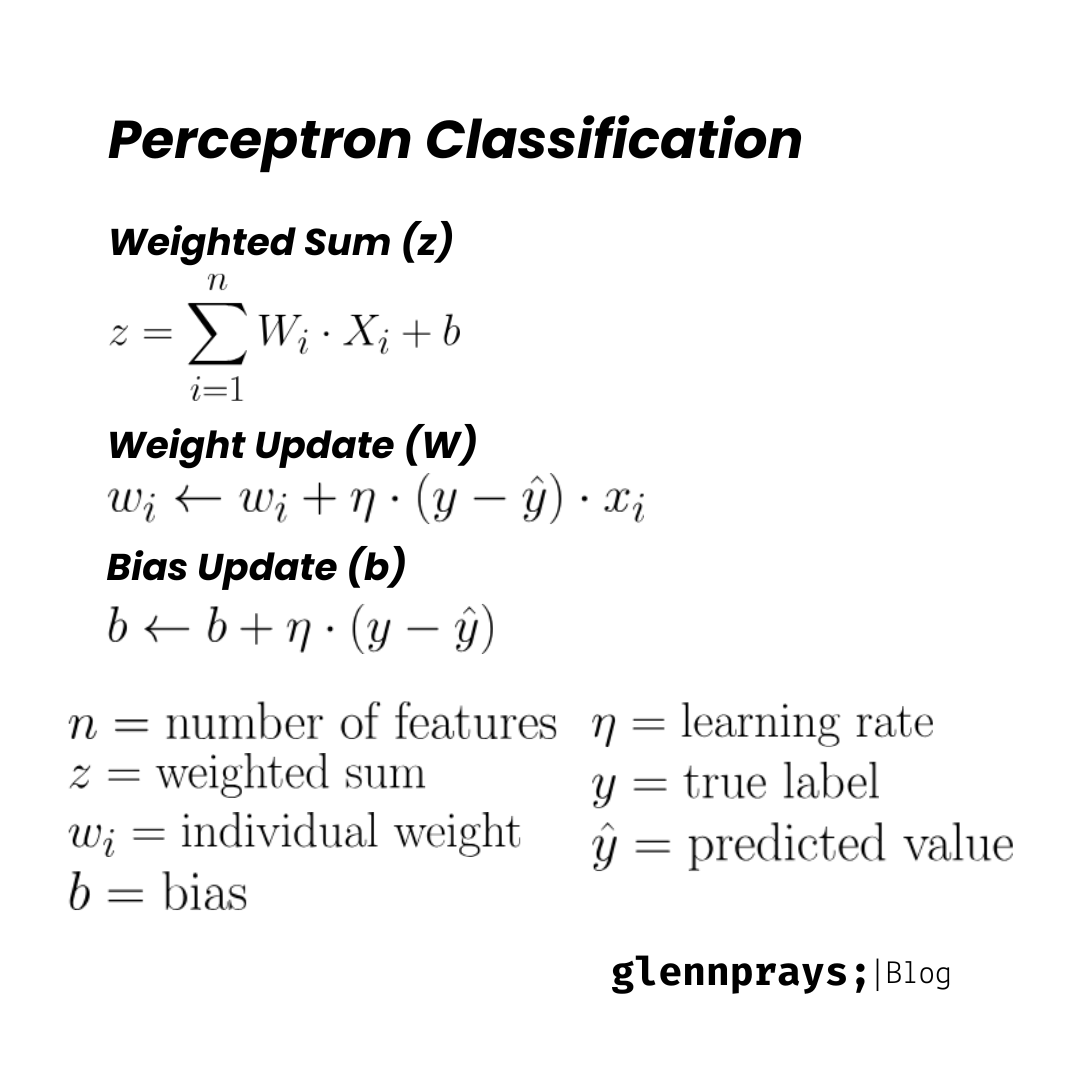

Formal theories of logical reasoning, grammar, and other higher mental faculties compel us to think of the mind as a machine for rule-based manipulation of highly structured arrays of symbols.

In a perceptron model, we consider a hyperplane with normal vector w (referred to as the classification plane) and classify each instance x according to which side of the plane it lies on.
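The side-of-the-plane rule can be sketched in a few lines of Python. This is a minimal illustration, not code from any of the quoted sources: the function name `predict`, the optional bias `b`, and the convention that points lying exactly on the plane are labeled -1 are all assumptions made here.

```python
from typing import Sequence


def predict(w: Sequence[float], x: Sequence[float], b: float = 0.0) -> int:
    """Return +1 or -1 depending on the side of the plane w.x + b = 0 that x lies on."""
    # The sign of the dot product with the normal vector tells us the side.
    activation = sum(wi * xi for wi, xi in zip(w, x)) + b
    # Assumption: points exactly on the plane (activation == 0) are labeled -1.
    return 1 if activation > 0 else -1


# Hypothetical normal vector and two test points on opposite sides of the plane.
w = [1.0, -1.0]
print(predict(w, [2.0, 0.5]))  # w.x = 1.5, positive side
print(predict(w, [0.5, 2.0]))  # w.x = -1.5, negative side
```

Only the sign of the activation matters, so rescaling w by any positive constant leaves every prediction unchanged.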

Perceptron convergence theorem: for any finite set of linearly separable labeled examples, the perceptron learning algorithm halts after a finite number of iterations. In other words, after finitely many iterations the algorithm yields a vector w that classifies all the examples correctly.

Theorem. With the initial vector w^(0) = 0, for any data set D satisfying the above assumptions, the perceptron algorithm produces a vector w^(k) that classifies every example correctly after at most a finite number of updates k.

In the same way that linear regression learns the slope parameters to best fit the data points, the perceptron learns the parameters that best separate the instances. The perceptron (1957) is a predecessor of deep networks: it separates two classes of objects using a linear threshold classifier.
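The learning algorithm referred to above can be sketched as follows. This is a minimal version of the standard perceptron update rule, written under assumptions not stated in the text: labels are +1 or -1, a bias term `b` is learned alongside `w`, and the names `train_perceptron` and `max_epochs` are hypothetical. The epoch cap is there because the loop would never stop on data that is not linearly separable.

```python
def train_perceptron(examples, max_epochs=1000):
    """Train on a list of (x, y) pairs with y in {+1, -1}.

    Returns (w, b, converged), where converged is True if a full pass
    over the data produced no mistakes.
    """
    dim = len(examples[0][0])
    w = [0.0] * dim  # start from the zero vector, as in the theorem
    b = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in examples:
            activation = sum(wi * xi for wi, xi in zip(w, x)) + b
            if y * activation <= 0:  # misclassified, or exactly on the plane
                # Move the plane toward classifying (x, y) correctly.
                w = [wi + y * xi for wi, xi in zip(w, x)]
                b += y
                mistakes += 1
        if mistakes == 0:
            return w, b, True
    return w, b, False


# Logical AND is linearly separable, so training halts with no mistakes.
and_data = [([0.0, 0.0], -1), ([0.0, 1.0], -1), ([1.0, 0.0], -1), ([1.0, 1.0], 1)]
w, b, converged = train_perceptron(and_data)
print(w, b, converged)
```

On data with no separating plane, such as XOR, the same function returns `converged = False` once `max_epochs` is exhausted.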

Instructors: Ashish Goel and Reza Zadeh, Stanford University. Lecture 9, 9/26/2014. Scribed by Adrien Fallou. Outline: the perceptron algorithm; stochastic gradient descent. This lecture starts the series on machine learning. We'll focus on supervised learning and classification.

Linear classification: the perceptron. These slides were assembled by Byron Boots, with only minor modifications from Eric Eaton’s slides and grateful acknowledgement to the many others who made their course materials freely available online.

How do humans learn? The brain performs certain computations (e.g., pattern recognition, perception, and motor control) many times faster than the fastest digital computer in existence today.

A simple guarantee for the perceptron is a mistake bound: if there exists a parameter vector w∗ that classifies all of our examples correctly, then the perceptron algorithm makes at most a bounded number of mistakes before it finds some parameter vector that also classifies them all correctly.
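The mistake bound described above can be checked empirically on a toy data set. The sketch below makes several assumptions: it uses the classical form of the bound, (R / γ)², where R is the largest example norm and γ is the smallest margin of the examples with respect to a known separator w∗; it uses a perceptron without a bias term, started from the zero vector; and the data, `w_star`, and function names are hypothetical. The lecture notes quoted here may state the bound differently.

```python
import math


def count_mistakes(examples, max_epochs=10_000):
    """Run a bias-free perceptron from w = 0 and return the total number of updates."""
    w = [0.0] * len(examples[0][0])
    total = 0
    for _ in range(max_epochs):
        mistakes_this_pass = 0
        for x, y in examples:
            if y * sum(wi * xi for wi, xi in zip(w, x)) <= 0:
                w = [wi + y * xi for wi, xi in zip(w, x)]
                mistakes_this_pass += 1
        total += mistakes_this_pass
        if mistakes_this_pass == 0:  # a clean pass: every example is correct
            break
    return total


def classical_bound(examples, w_star):
    """Compute (R / gamma)^2 for a separator w_star that classifies every example correctly."""
    norm = math.sqrt(sum(v * v for v in w_star))
    radius = max(math.sqrt(sum(v * v for v in x)) for x, _ in examples)
    margin = min(y * sum(wi * xi for wi, xi in zip(w_star, x)) / norm
                 for x, y in examples)
    return (radius / margin) ** 2


# Hypothetical separable data and a known separator through the origin.
examples = [([2.0, 1.0], 1), ([1.0, 3.0], 1), ([-1.0, -2.0], -1), ([-3.0, -1.0], -1)]
w_star = [1.0, 1.0]
print(count_mistakes(examples), "<=", classical_bound(examples, w_star))
```

The bound depends only on the geometry of the data (R and γ), not on the number of examples or the order in which they are visited.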
