Patterns GPU Uncoalesced Memory Transfer

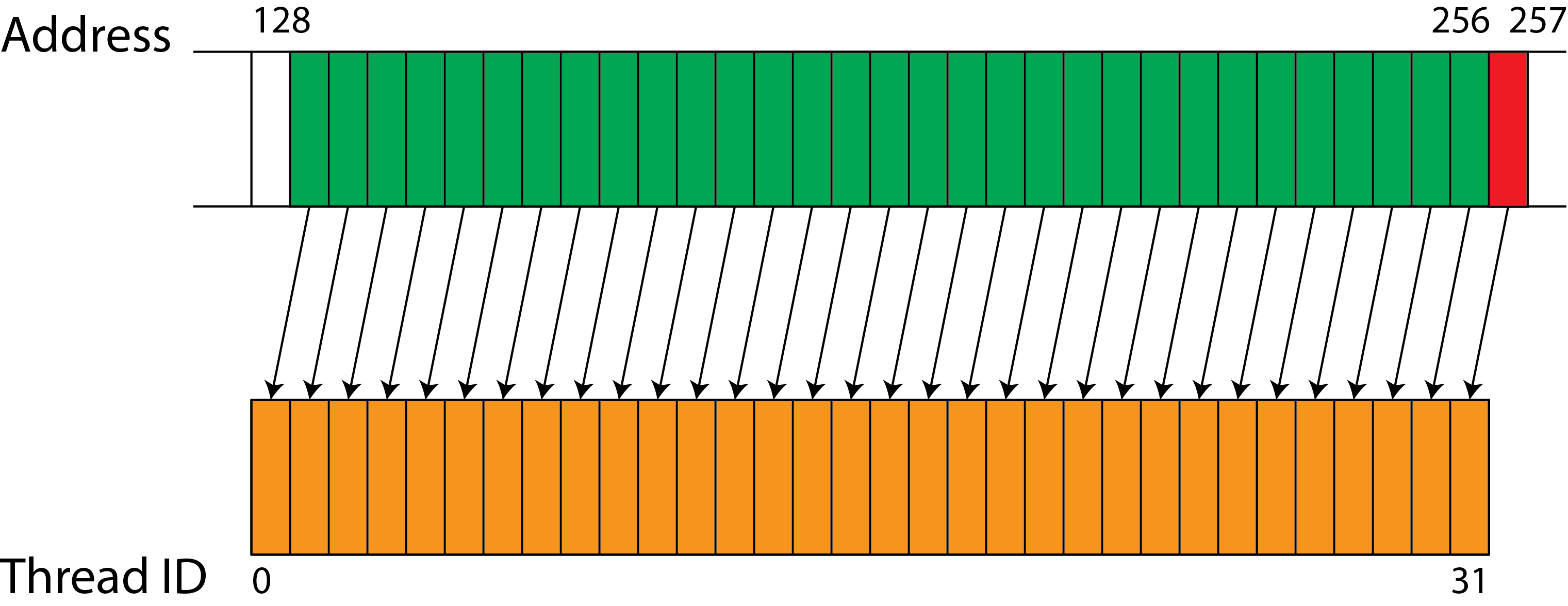

For CPU-based applications, stride-1 access to memory by each thread is very efficient. However, for effective utilization of memory bandwidth on GPUs, adjacent threads must access adjacent data elements in global memory. A typical usage pattern has three steps: the host allocates and initializes global memory before kernel launch; during kernel execution, CUDA threads read from global memory and write their results back to it; and after the kernel completes, the host retrieves the results.
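The usage pattern described above can be sketched as follows. This is a minimal illustration, not a reference implementation: the kernel names `scale_coalesced` and `scale_strided` and the host driver `run_scale_demo` are hypothetical, and the strided kernel assumes `n` is a multiple of `stride`. Both kernels do the same work; only the mapping from thread index to array element differs.

```cuda
#include <cuda_runtime.h>
#include <vector>

// Coalesced: thread i touches element i, so the 32 threads of a warp
// touch 32 adjacent floats.
__global__ void scale_coalesced(const float* in, float* out, int n, float a) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) out[i] = a * in[i];
}

// Uncoalesced: adjacent threads touch elements `stride` apart.
// Assumes n % stride == 0, so the mapping i -> j is a permutation of 0..n-1.
__global__ void scale_strided(const float* in, float* out, int n, int stride, float a) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        int rows = n / stride;
        int j = (i % rows) * stride + (i / rows);
        out[j] = a * in[j];
    }
}

// Hypothetical host driver: allocate and initialize, launch, copy back.
// Returns true if both kernels produced a * in[i] for every element.
bool run_scale_demo(int n, int stride) {
    const float a = 2.0f;
    const size_t bytes = static_cast<size_t>(n) * sizeof(float);
    std::vector<float> h_in(n), h_out(n);
    for (int i = 0; i < n; ++i) h_in[i] = static_cast<float>(i);

    float *d_in = nullptr, *d_out = nullptr;
    bool ok = cudaMalloc(&d_in, bytes) == cudaSuccess &&
              cudaMalloc(&d_out, bytes) == cudaSuccess &&
              cudaMemcpy(d_in, h_in.data(), bytes, cudaMemcpyHostToDevice) == cudaSuccess;

    const int threads = 256;
    const int blocks = (n + threads - 1) / threads;
    for (int pass = 0; ok && pass < 2; ++pass) {
        ok = cudaMemset(d_out, 0, bytes) == cudaSuccess;
        if (pass == 0) scale_coalesced<<<blocks, threads>>>(d_in, d_out, n, a);
        else           scale_strided<<<blocks, threads>>>(d_in, d_out, n, stride, a);
        // cudaMemcpy on the default stream waits for the kernel to finish.
        ok = ok && cudaMemcpy(h_out.data(), d_out, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
        for (int i = 0; ok && i < n; ++i) ok = (h_out[i] == a * h_in[i]);
    }
    cudaFree(d_in);
    cudaFree(d_out);
    return ok;
}
```

Calling `run_scale_demo(1 << 20, 32)` from `main` exercises both kernels on the same data. The results are identical; timing the two launches with a profiler is what would expose the difference in memory behavior, and the size of that difference depends on the hardware.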

By the end of this chapter, you'll know how to write CUDA kernels that make better use of the GPU's memory hierarchy and hardware-optimized data transfer engines. The topic is an advanced analysis of CUDA memory coalescing techniques and access-pattern optimization for maximizing GPU memory bandwidth and computational performance.

While the L1 and L2 caches are not programmable, the CUDA memory model exposes several types of programmable memory: registers, shared memory, local memory, constant memory, texture memory, and global memory. One subtlety of GPU programming lies in accessing GPU memory, where certain access patterns can lead to poor performance. Such access patterns are referred to as uncoalesced global memory accesses. This work presents a lightweight compile-time static analysis to identify such accesses in GPU programs.
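One common way to put programmable shared memory to work against uncoalesced global accesses is a tiled matrix transpose. The sketch below is illustrative only: `transpose_tiled`, `run_transpose_demo`, and the tile size of 32 are assumptions chosen for the example, not something prescribed by the text above. Each block stages a tile in shared memory so that both the global read and the global write proceed along rows.

```cuda
#include <cuda_runtime.h>
#include <vector>

constexpr int TILE = 32;  // assumed tile size: one warp per tile row

// in is height x width (row-major); out is width x height (row-major).
__global__ void transpose_tiled(const float* in, float* out, int width, int height) {
    // The +1 column of padding is a common trick to avoid shared-memory bank conflicts.
    __shared__ float tile[TILE][TILE + 1];

    int x = blockIdx.x * TILE + threadIdx.x;  // column in `in`
    int y = blockIdx.y * TILE + threadIdx.y;  // row in `in`
    if (x < width && y < height)
        tile[threadIdx.y][threadIdx.x] = in[y * width + x];   // row-wise read
    __syncthreads();

    x = blockIdx.y * TILE + threadIdx.x;      // column in `out`
    y = blockIdx.x * TILE + threadIdx.y;      // row in `out`
    if (x < height && y < width)
        out[y * height + x] = tile[threadIdx.x][threadIdx.y]; // row-wise write
}

// Hypothetical host driver: returns true if out[c][r] == in[r][c] everywhere.
bool run_transpose_demo(int width, int height) {
    const size_t count = static_cast<size_t>(width) * height;
    const size_t bytes = count * sizeof(float);
    std::vector<float> h_in(count), h_out(count, -1.0f);
    for (size_t i = 0; i < count; ++i) h_in[i] = static_cast<float>(i % 1000);

    float *d_in = nullptr, *d_out = nullptr;
    bool ok = cudaMalloc(&d_in, bytes) == cudaSuccess &&
              cudaMalloc(&d_out, bytes) == cudaSuccess &&
              cudaMemcpy(d_in, h_in.data(), bytes, cudaMemcpyHostToDevice) == cudaSuccess;
    if (ok) {
        dim3 block(TILE, TILE);
        dim3 grid((width + TILE - 1) / TILE, (height + TILE - 1) / TILE);
        transpose_tiled<<<grid, block>>>(d_in, d_out, width, height);
        ok = cudaMemcpy(h_out.data(), d_out, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
    }
    for (int r = 0; ok && r < height; ++r)
        for (int c = 0; ok && c < width; ++c)
            ok = (h_out[static_cast<size_t>(c) * height + r] ==
                  h_in[static_cast<size_t>(r) * width + c]);
    cudaFree(d_in);
    cudaFree(d_out);
    return ok;
}
```

A naive transpose would have to read or write global memory column-wise, so adjacent threads would touch addresses a full row apart. Here the column-wise step happens in shared memory instead, where strided access is far cheaper.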

Memory coalescing is essential for achieving optimal performance in CUDA applications. By carefully considering memory access patterns, data structure organization, and alignment requirements, developers can significantly improve the efficiency of their GPU programs. It recently acquired a plug-in system, implemented using framework-assisted dependency injection, a pattern more typically used in enterprise software than in research software. The document provides a sample profile of a memory-bound CUDA kernel that performs computations on an array of `double3` values in global memory, highlighting issues with uncoalesced global memory accesses. Learn how memory coalescing in CUDA improves performance and optimizes memory accesses in parallel programming. Dive into fully coalesced accesses and uncorrelated accesses to enhance execution speed.
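The `double3` case mentioned above is a data-layout issue: a `double3` occupies 24 bytes, so when thread `i` reads `p[i].x`, adjacent threads touch addresses 24 bytes apart rather than 8. The sketch below contrasts that array-of-structures layout with a structure-of-arrays layout. It is not the profiled kernel from the document referred to; the kernels `norm2_aos` and `norm2_soa`, the driver `run_double3_demo`, and the squared-norm computation are assumptions made for illustration.

```cuda
#include <cuda_runtime.h>
#include <vector>

// Array of structures: each component load is strided by sizeof(double3) = 24 bytes.
__global__ void norm2_aos(const double3* p, double* out, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        double3 v = p[i];
        out[i] = v.x * v.x + v.y * v.y + v.z * v.z;
    }
}

// Structure of arrays: each component load is stride-1 across the warp.
__global__ void norm2_soa(const double* x, const double* y, const double* z,
                          double* out, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) out[i] = x[i] * x[i] + y[i] * y[i] + z[i] * z[i];
}

static bool good(cudaError_t e) { return e == cudaSuccess; }

// Hypothetical host driver: returns true if both layouts give the expected result.
bool run_double3_demo(int n) {
    std::vector<double3> h_p(n);
    std::vector<double> h_x(n), h_y(n), h_z(n), h_aos(n), h_soa(n);
    for (int i = 0; i < n; ++i) {
        // Small integer values keep the arithmetic exact in double precision.
        h_x[i] = i % 7; h_y[i] = i % 11; h_z[i] = i % 13;
        h_p[i].x = h_x[i]; h_p[i].y = h_y[i]; h_p[i].z = h_z[i];
    }
    const size_t b1 = static_cast<size_t>(n) * sizeof(double);
    const size_t b3 = static_cast<size_t>(n) * sizeof(double3);
    double3* d_p = nullptr;
    double *d_x = nullptr, *d_y = nullptr, *d_z = nullptr, *d_out = nullptr;
    bool ok = good(cudaMalloc(&d_p, b3)) && good(cudaMalloc(&d_x, b1)) &&
              good(cudaMalloc(&d_y, b1)) && good(cudaMalloc(&d_z, b1)) &&
              good(cudaMalloc(&d_out, b1)) &&
              good(cudaMemcpy(d_p, h_p.data(), b3, cudaMemcpyHostToDevice)) &&
              good(cudaMemcpy(d_x, h_x.data(), b1, cudaMemcpyHostToDevice)) &&
              good(cudaMemcpy(d_y, h_y.data(), b1, cudaMemcpyHostToDevice)) &&
              good(cudaMemcpy(d_z, h_z.data(), b1, cudaMemcpyHostToDevice));
    if (ok) {
        const int threads = 256, blocks = (n + threads - 1) / threads;
        norm2_aos<<<blocks, threads>>>(d_p, d_out, n);
        ok = good(cudaMemcpy(h_aos.data(), d_out, b1, cudaMemcpyDeviceToHost));
        norm2_soa<<<blocks, threads>>>(d_x, d_y, d_z, d_out, n);
        ok = ok && good(cudaMemcpy(h_soa.data(), d_out, b1, cudaMemcpyDeviceToHost));
    }
    for (int i = 0; ok && i < n; ++i) {
        double expect = h_x[i] * h_x[i] + h_y[i] * h_y[i] + h_z[i] * h_z[i];
        ok = (h_aos[i] == expect) && (h_soa[i] == expect);
    }
    cudaFree(d_p); cudaFree(d_x); cudaFree(d_y); cudaFree(d_z); cudaFree(d_out);
    return ok;
}
```

Because this kernel uses all three components of every element, caches may absorb part of the cost of the strided layout. How much the structure-of-arrays version helps is therefore something to measure with a profiler on the target GPU rather than assume.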
