Parallel Programming Concept Dependency And Loop Parallelization Pptx

The document discusses parallel programming concepts, focusing on dependency types and loop parallelization. It categorizes dependencies into data and control types and explores their implications for loop iterations. It also explores the motivations, advantages, and challenges of parallel programming, including speedup, Amdahl's law, Gustafson's law, efficiency metrics, scalability, parallel program models, programming paradigms, and steps for parallelizing programs.
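The speedup laws and the efficiency metric named above can be made concrete with a small sketch. The function and parameter names here are illustrative assumptions and do not come from the slides. The formulas are the standard textbook forms: Amdahl's law for a fixed problem size, and Gustafson's law for a problem that grows with the processor count, where the parallel fraction is measured on the parallel run.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Speedup for a fixed problem size (Amdahl's law)."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


def gustafson_speedup(parallel_fraction: float, processors: int) -> float:
    """Scaled speedup when the workload grows with the processor count (Gustafson's law)."""
    serial_fraction = 1.0 - parallel_fraction
    return serial_fraction + parallel_fraction * processors


def efficiency(speedup: float, processors: int) -> float:
    """Fraction of the ideal (linear) speedup that is actually achieved."""
    return speedup / processors


if __name__ == "__main__":
    for p in (2, 10, 100):
        s = amdahl_speedup(0.9, p)
        print(p, round(s, 3), round(gustafson_speedup(0.9, p), 3), round(efficiency(s, p), 3))
```

With a 90% parallel fraction, the Amdahl speedup can never exceed 10 however many processors are added, whereas the Gustafson scaled speedup keeps growing because the parallel part of the workload grows too.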

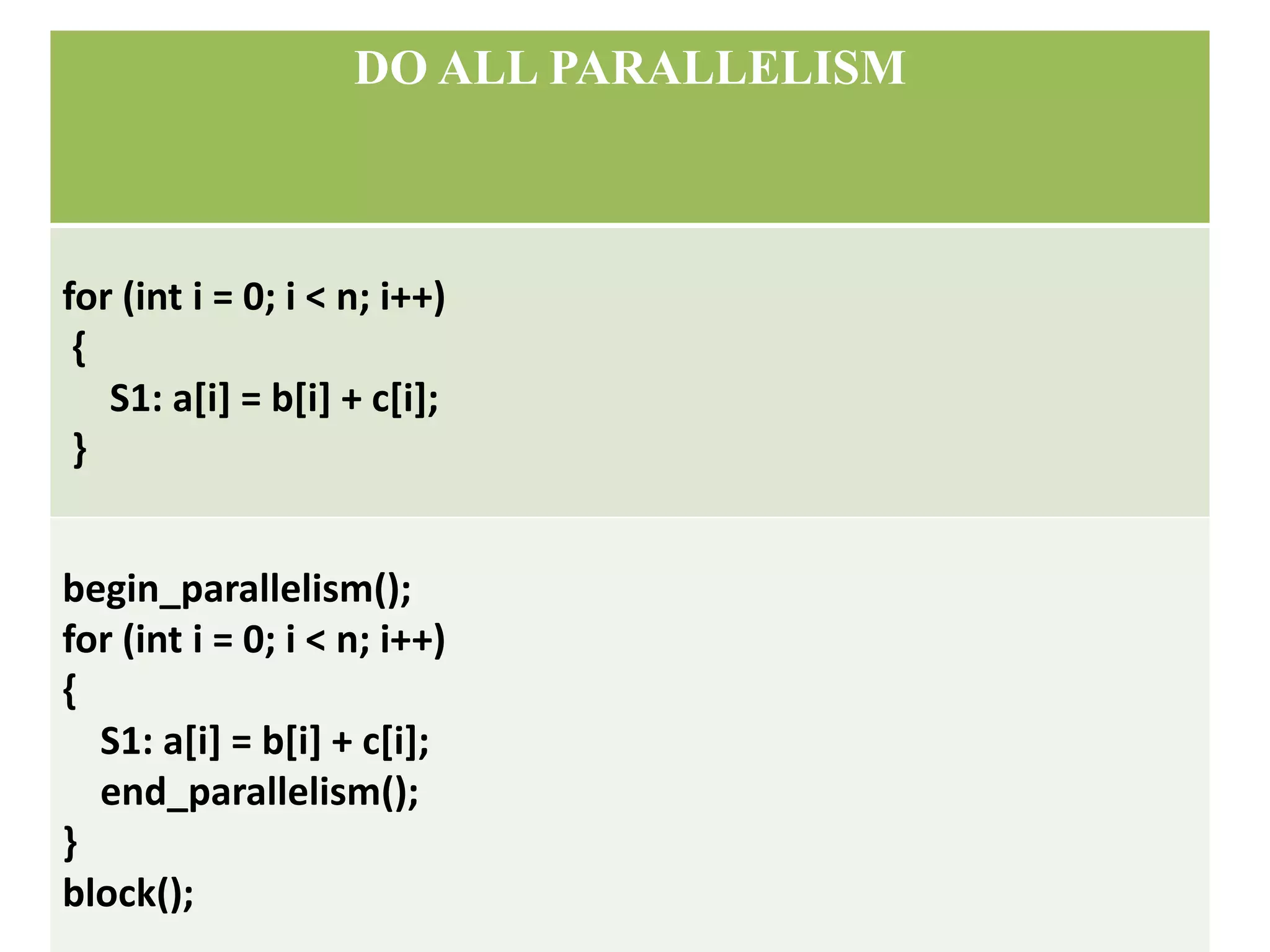

The document discusses parallel programming concepts such as synchronization and data parallelism. It gives examples of regularly parallelizable problems, such as matrix multiplication and SOR (successive over-relaxation), in which the data can be divided among processors. It presents an overview of parallel programming models, categorizing them into machine, architectural, computational, and programming models based on abstraction level. It also covers threads, synchronization, and barriers, and defines parallel programming as carrying out many calculations simultaneously. Finally, it discusses loop parallelization and pipelining, trends in parallel systems, and forms of parallelism, and describes loop transformations such as permutation, reversal, and skewing that can be used to parallelize loops.
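As a rough illustration of dividing data among processors for matrix multiplication, the sketch below splits the rows of the left matrix into contiguous blocks and hands each block to a worker. The helper names (`split_rows`, `multiply_rows`, `parallel_matmul`) are hypothetical, and the thread pool is only one possible way to express the decomposition. In CPython, threads running pure Python code do not give a real speedup, so this shows the partitioning pattern rather than a performance gain.

```python
from concurrent.futures import ThreadPoolExecutor


def multiply_rows(a_rows, b):
    """Compute the rows of A @ B that correspond to the block a_rows."""
    inner, cols = len(b), len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for row in a_rows
    ]


def split_rows(a, workers):
    """Divide the rows of a into at most `workers` contiguous blocks."""
    block = max(1, -(-len(a) // workers))  # ceiling division, at least 1
    return [a[i:i + block] for i in range(0, len(a), block)]


def parallel_matmul(a, b, workers=2):
    """Multiply a by b, computing each row block in a separate task."""
    blocks = split_rows(a, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each task reads b and writes only its own output rows,
        # so no synchronization between tasks is needed.
        partial = list(pool.map(multiply_rows, blocks, [b] * len(blocks)))
    return [row for block in partial for row in block]


if __name__ == "__main__":
    print(parallel_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

The decomposition works because every output row depends only on one row of the left matrix and on the shared, read-only right matrix.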

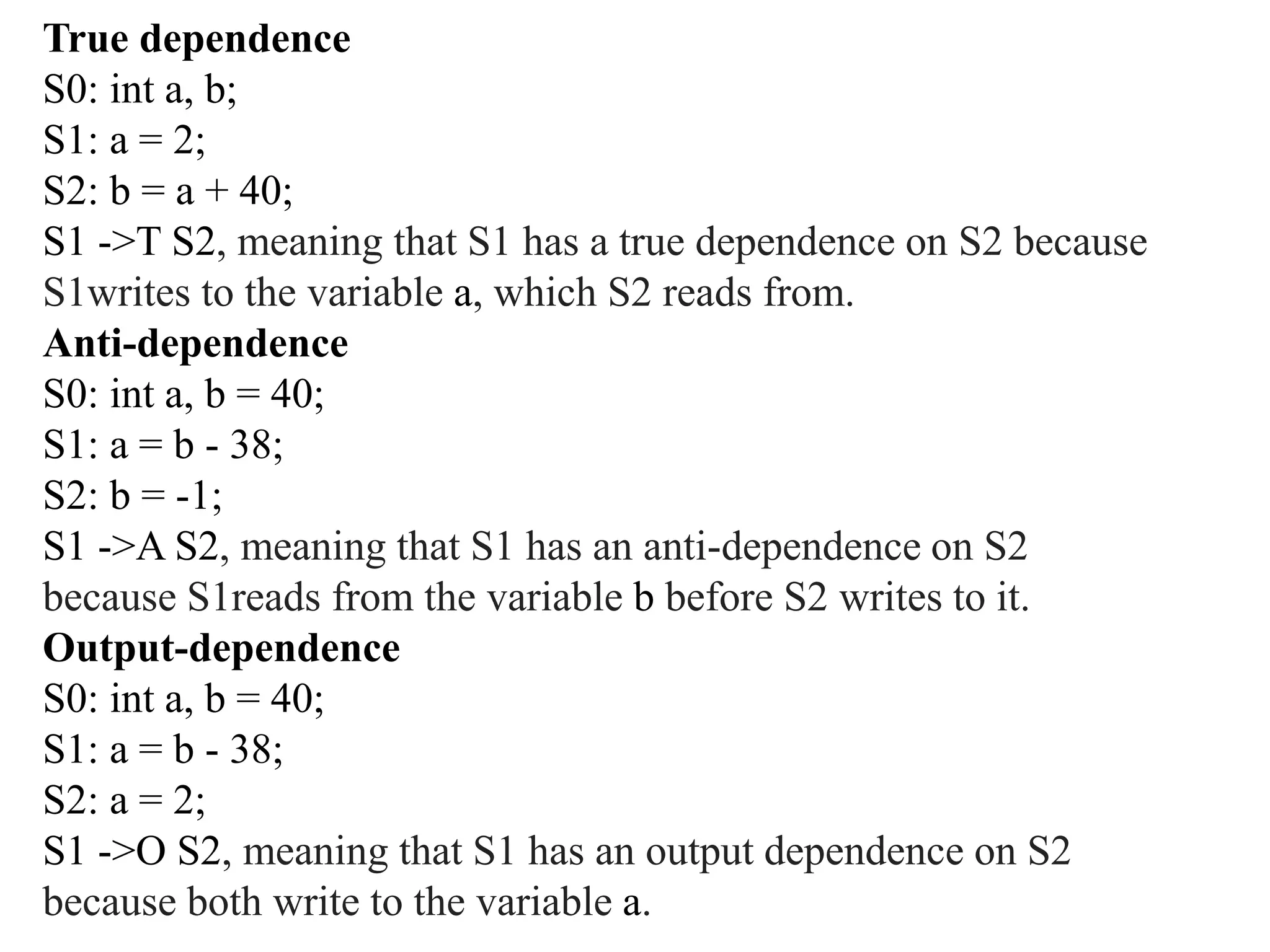

The document also discusses parallel programming models, how to design parallel programs, and examples of parallel algorithms. In OpenMP parlance, the collection of threads executing the parallel block (the original thread and the new threads) is called a team; the original thread is called the master, and the additional threads are called workers. Parallel computing is the simultaneous use of multiple compute resources to solve a computational problem. A loop-carried dependence is a dependence that is present only if the statements are part of the execution of a loop; otherwise, we call it a loop-independent dependence.
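The difference between the two kinds of dependence can be seen by running a loop's iterations in a different order. The following is a minimal sketch with made-up function names. The first loop has a loop-carried dependence, because iteration `i` reads the value written by iteration `i - 1`. The second has only a loop-independent dependence, between two statements of the same iteration.

```python
def prefix_sum(values, order):
    """a[i] = a[i-1] + values[i]: iteration i reads what iteration i-1 wrote."""
    a = list(values)
    for i in order:
        if i > 0:
            a[i] = a[i - 1] + values[i]
    return a


def double_and_shift(values, order):
    """The second statement depends on the first, but only within one iteration."""
    c = [0] * len(values)
    for i in order:
        doubled = 2 * values[i]  # statement S1
        c[i] = doubled + 1       # statement S2 uses S1's result from the same iteration
    return c


data = [1, 2, 3, 4]
forward = range(len(data))
backward = range(len(data) - 1, -1, -1)

print(prefix_sum(data, forward), prefix_sum(data, backward))
print(double_and_shift(data, forward), double_and_shift(data, backward))
```

Reversing the iteration order changes the result of `prefix_sum` but not of `double_and_shift`, which is why only the second loop can have its iterations distributed across threads without further transformation.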
