Opus 4.6, Codex 5.3, and the Post-Benchmark Era

Codex 5.3 vs. Opus 4.6: Performance, Cost, and Workflow Comparison

Last Thursday, February 5th, both OpenAI and Anthropic unveiled the next iterations of their models designed as coding assistants: GPT-5.3 Codex and Claude Opus 4.6, respectively.

I tested both Claude Opus 4.6 and GPT-5.3 Codex at enterprise scale. Here's my honest comparison of benchmarks vs. reality.


GPT-5.3 Codex vs. Opus 4.6: The Great Convergence

Both Anthropic and OpenAI shipped their flagship coding models on the same day. We compare Claude Opus 4.6 and GPT-5.3 Codex across benchmarks, pricing, real-world coding, agentic capabilities, and developer workflows to help you choose.

GPT-5.3 Codex vs. Claude Opus 4.6: an in-depth comparison of the leading AI coding assistants. See how they perform on complex migrations, 3D game creation, and UI design, plus explore new features like steerability and agent teams.

An in-depth comparison of Claude Opus 4.6 and GPT-5.3 Codex across benchmarks, pricing, context windows, agentic capabilities, and real-world performance. Discover which frontier AI model best fits your needs.

Anthropic’s Claude Opus 4.6 and OpenAI’s Codex 5.3 are now live. Both show strong benchmarks, but which one truly stands out? I’ll put them to the test and compare their performance on the same task. Let’s see which one comes out on top.

Codex 5.3 vs. Opus 4.6: One-Shot Examples and Comparison by Agent

The “better” model depends on what you need: huge context, safety-first code review, and long-running agents (Opus 4.6), or marginally stronger raw coding benchmark performance, speed, and immediate Codex integrations (GPT-5.3 Codex).

A workflow-first comparison of OpenAI Codex 5.3 and Anthropic Claude Opus 4.6. Compare API pricing, context windows, and "thinking controls" to optimize your 2026 developer toolkit.

A head-to-head comparison of Claude Opus 4.6 and GPT-5.3 Codex covering benchmarks, coding, pricing, safety, and which model fits your workflow.

As AI coding agents transition from benchmarks to real-world evaluation, developers must now test multiple models empirically rather than relying on published scores.
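Testing models empirically can start very small. The sketch below is one possible shape for such a check, not a method described by any of the articles above: it sends the same task to several models and reports the fraction each one passes. Every name in it (`Task`, `evaluate`, `defines_working_add`, and the two stub models) is hypothetical, and the stubs stand in for real API clients so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Assumption: a "model" is any callable that maps a prompt to generated source code.
Model = Callable[[str], str]


@dataclass
class Task:
    name: str
    prompt: str
    check: Callable[[str], bool]  # True if the generated code is acceptable


def defines_working_add(source: str) -> bool:
    """Execute generated code in a scratch namespace and test its `add` function."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # only appropriate for trusted or sandboxed output
        return namespace["add"](2, 3) == 5
    except Exception:
        return False


def evaluate(models: Dict[str, Model], tasks: List[Task]) -> Dict[str, float]:
    """Return, for each model label, the fraction of tasks it passes."""
    scores: Dict[str, float] = {}
    for label, model in models.items():
        passed = sum(1 for task in tasks if task.check(model(task.prompt)))
        scores[label] = passed / len(tasks)
    return scores


# Hypothetical stand-ins for real model clients.
def stub_model_a(prompt: str) -> str:
    return "def add(a, b):\n    return a + b\n"


def stub_model_b(prompt: str) -> str:
    return "def add(a, b):\n    return a - b\n"  # deliberately wrong


tasks = [Task("add", "Write a Python function add(a, b).", defines_working_add)]
results = evaluate({"model-a": stub_model_a, "model-b": stub_model_b}, tasks)
print(results)
```

In practice the tasks would come from your own codebase (a failing test to fix, a migration to perform), and the check would run your existing test suite in a sandbox rather than calling `exec` directly.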


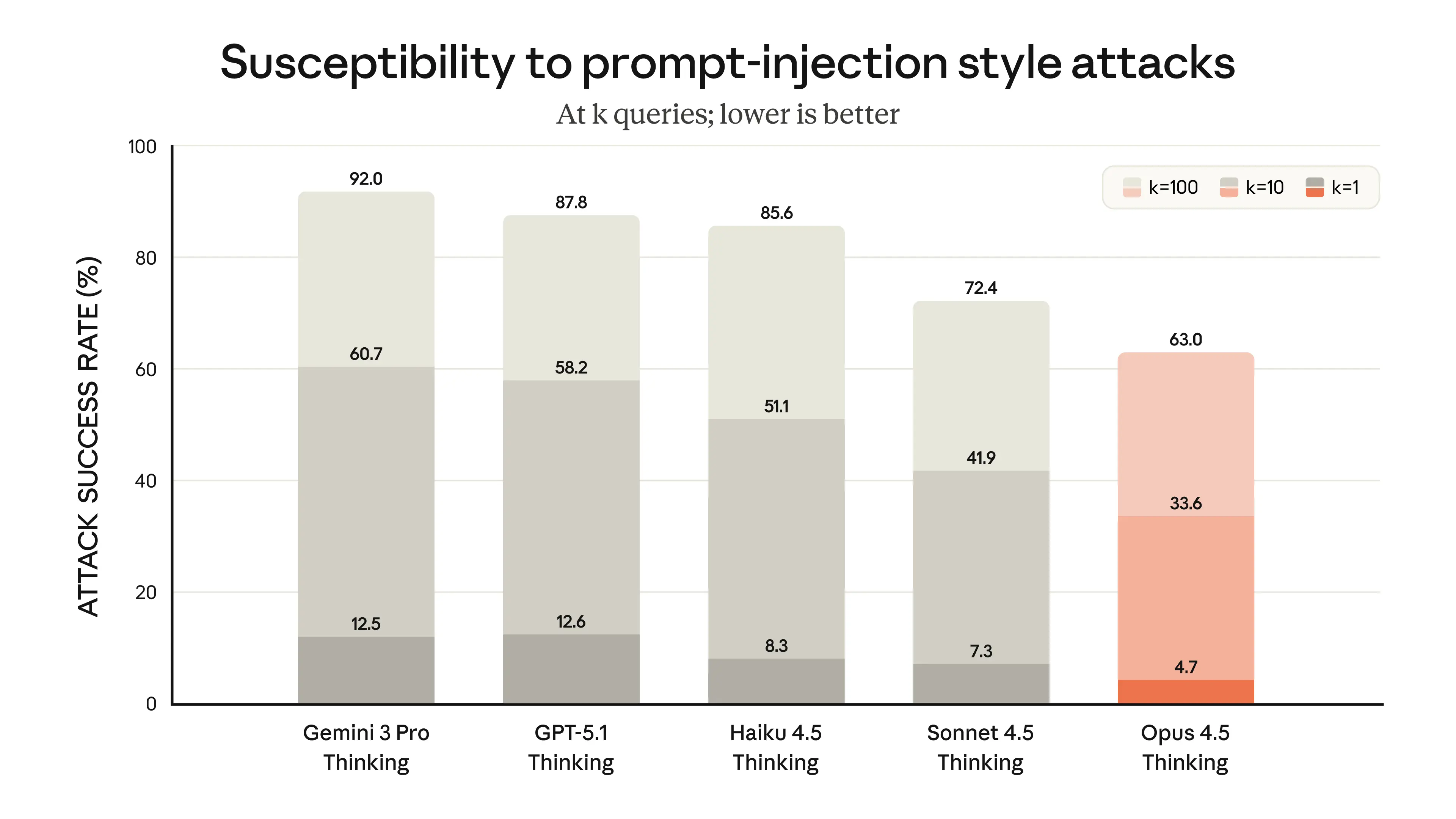

