Optimizing Tiny LLMs for Edge Device Deployment

Privacy-first regulations that demand on-device processing of personal data are one of the forces moving language models out of the cloud and onto edge hardware. This article takes a close look at the technical, practical, and strategic aspects of optimizing small language models for edge deployment. It also serves as a guide to small LLMs for edge devices in 2026, covering how lightweight models perform on resource-constrained hardware and which model architectures are the most efficient and capable.

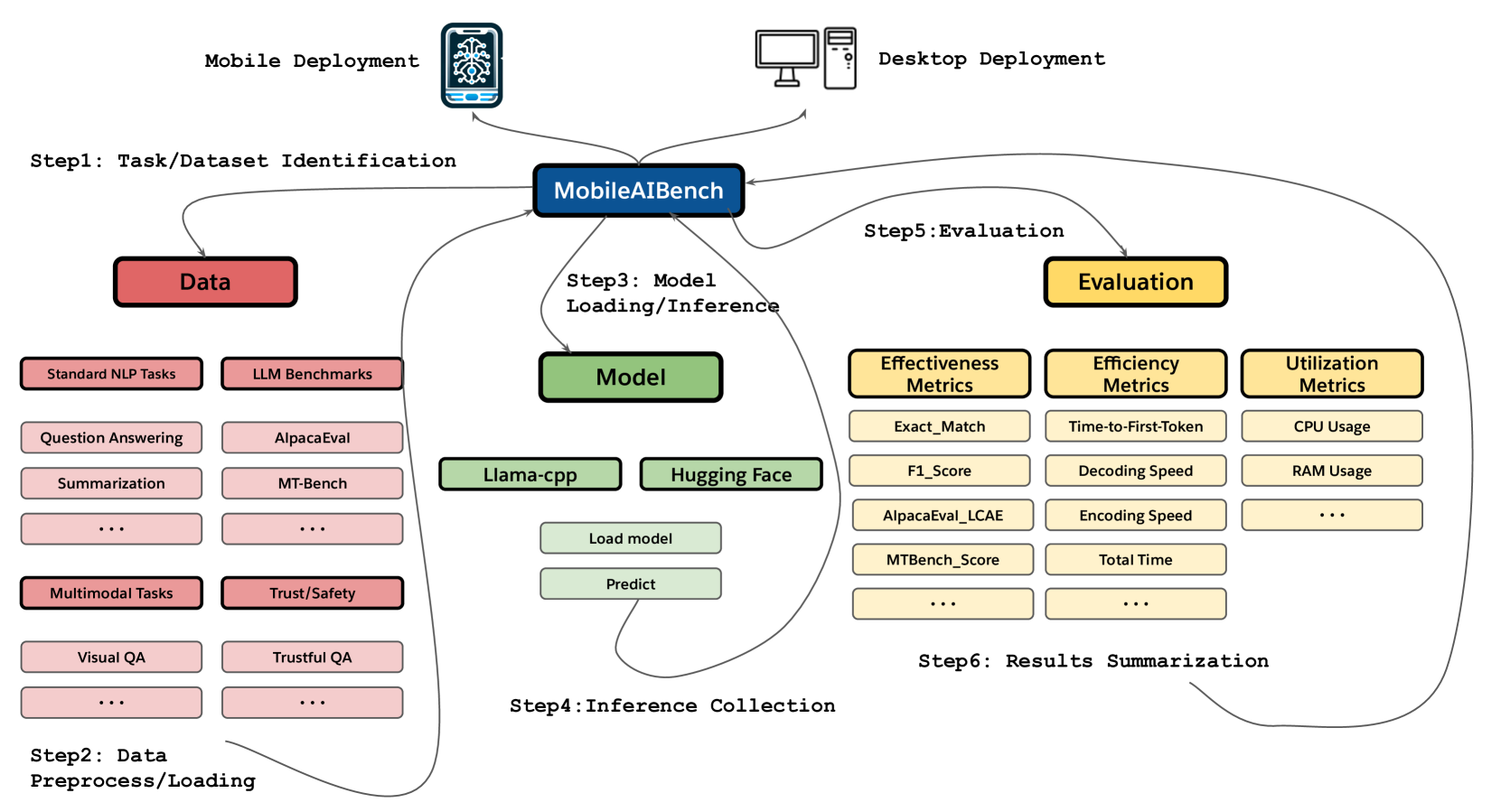

Quantization, pruning, and knowledge distillation are the main strategies for optimizing large language models (LLMs) on edge devices. They are needed because edge devices (phones, embedded systems, IoT sensors) are severely constrained, and real-world edge AI deployments must also deal with latency and data privacy. As of 2025, these techniques make LLMs practical at the edge.

Cloud-based LLMs are unsustainable for IoT and edge applications because of their high latency, bandwidth requirements, and energy consumption. One line of work optimizes LLM deployment in edge computing environments by combining four existing classes of optimization techniques (model compression, quantization, distributed inference, and federated learning) in a unified framework. TinyEdgeLLM addresses the same problem by enabling efficient on-device inference through model compression techniques.
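To make the idea of quantization concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is an illustration under stated assumptions, not the method of any framework named above: the function names `quantize_int8` and `dequantize` and the sample weights are hypothetical, and production toolchains typically quantize per channel or per group and calibrate on real data.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale (hypothetical helper)."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]


# Illustrative weights only; a real layer holds many thousands of values.
weights = [0.5, -1.27, 0.0, 0.3]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is stored in one byte instead of four (float32) or two (float16), at the cost of a rounding error of at most half the scale per weight.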

As large language models (LLMs) continue to advance, deploying them in edge computing environments presents new opportunities and challenges. Unlike traditional cloud-based LLM deployments, edge computing enables on-device processing, which reduces latency and improves privacy. With model compression and optimization, LLMs can run on edge devices without the cloud and reach real-time performance. The goal is to balance model performance against energy use. Evaluations of smaller models on resource-constrained edge platforms show that they deliver significantly faster inference and token generation rates.
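One reason smaller and compressed models suit constrained hardware is simple arithmetic: the memory needed for the weights is the parameter count times the bits per weight. The sketch below works that out for a hypothetical 1-billion-parameter model. The function name and the model size are assumptions for illustration, and the estimate ignores activations, the key-value cache, and quantization metadata such as scales.

```python
def weight_footprint_bytes(num_params, bits_per_weight):
    """Approximate bytes needed to store the weights alone (hypothetical helper)."""
    return num_params * bits_per_weight / 8


# Hypothetical model size, chosen only to illustrate the arithmetic.
NUM_PARAMS = 1_000_000_000

for bits in (16, 8, 4):
    gib = weight_footprint_bytes(NUM_PARAMS, bits) / 2**30
    print(f"{bits}-bit weights: {gib:.2f} GiB")
```

Halving the bits per weight halves the weight storage, which is often the difference between a model that fits in a device's memory and one that does not.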

