Optimizing RAG Systems: Effective Evaluation Framework

A proper RAG evaluation framework ensures that the system is continually refined and optimized. By measuring key metrics and identifying weaknesses, organizations can make data-driven adjustments that lead to continuous improvement over time. In this blog post, we'll equip you with a series of best practices to identify issues within your RAG system and fix them with a transparent, automated evaluation framework.

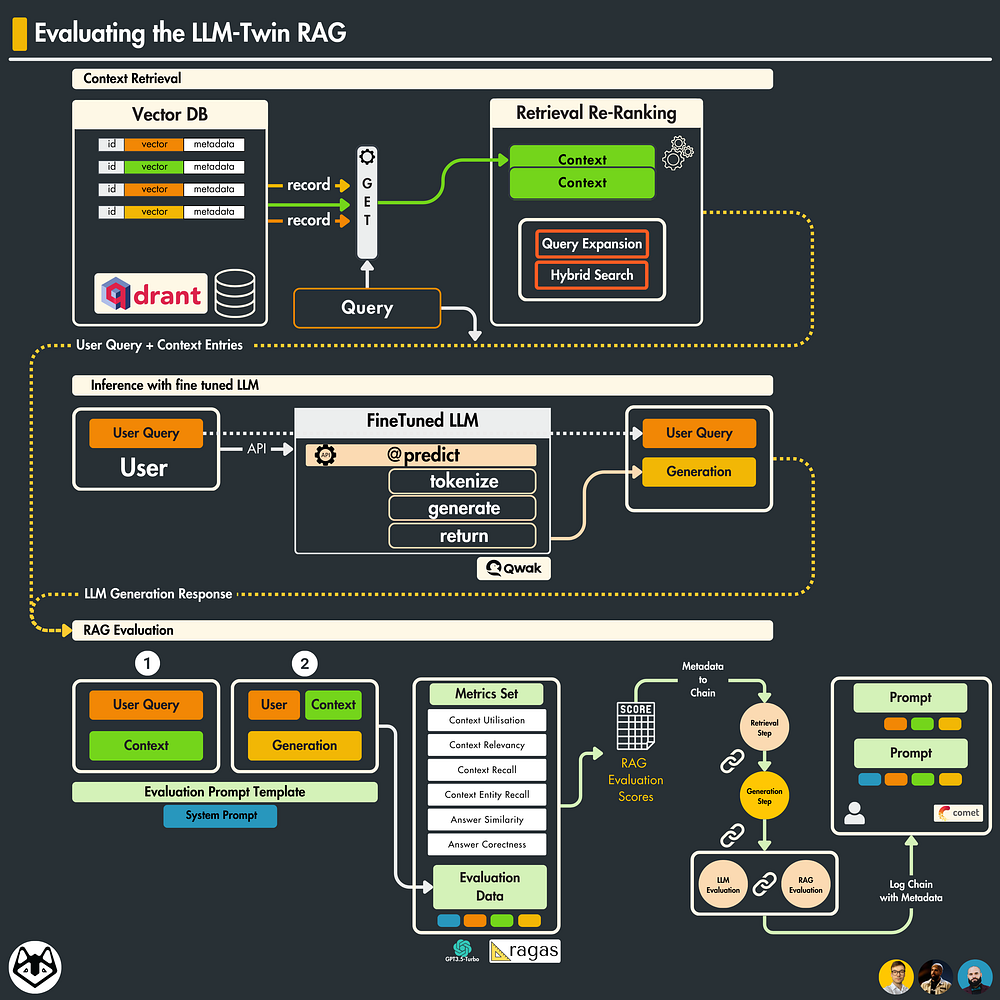

RAG evaluation requires a multifaceted approach that goes beyond traditional NLP metrics. Success depends on measuring both retrieval effectiveness and generation quality while maintaining system performance and user satisfaction.

This guide breaks down how to evaluate and test RAG systems. You'll learn how to evaluate retrieval and generation quality, build test sets with synthetic data, run experiments, and monitor in production. It also covers frameworks that automate evaluation at scale and production practices that catch failures before users do. These recommendations offer a structured approach for optimizing RAG, enabling teams to systematically enhance system performance. Implementing RAG is just the first step; evaluating its effectiveness presents a separate challenge.
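To make "retrieval effectiveness" concrete, here is a minimal sketch of three common rank-based retrieval metrics: precision@k, recall@k, and reciprocal rank. The function names, the document IDs, and the assumption that you have a hand-labeled set of relevant IDs per query are all illustrative and not taken from any particular framework.

```python
from typing import Sequence, Set


def precision_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k slots that hold a relevant document."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / k


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top k."""
    if k <= 0:
        raise ValueError("k must be positive")
    if not relevant:
        return 0.0  # nothing to recall; a convention chosen for this sketch
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


def reciprocal_rank(retrieved: Sequence[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none is retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


# Hypothetical query result: ranked IDs from the retriever plus labeled ground truth.
retrieved_ids = ["d3", "d1", "d7", "d2"]
relevant_ids = {"d1", "d2"}

print(precision_at_k(retrieved_ids, relevant_ids, k=3))
print(recall_at_k(retrieved_ids, relevant_ids, k=3))
print(reciprocal_rank(retrieved_ids, relevant_ids))
```

Averaging these scores over a test set of queries gives a retrieval baseline that can be tracked separately from generation quality.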

How To Evaluate Your RAG Using The Ragas Framework

A thorough RAG assessment means understanding and applying advanced evaluation frameworks like Ragas and ARES, and developing custom metrics. Several frameworks simplify RAG evaluation by providing pre-built metrics, evaluation infrastructure, and integration with popular development tools.

But here’s the catch: figuring out whether an AI system is actually *good* at RAG can be tricky. That’s where these open-source LLM evaluators come in! They’re like special tools designed to test how well…
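As an illustration of what a custom metric might look like, the sketch below computes a crude lexical "support" score: the share of answer sentences whose words mostly appear in the retrieved context. This is an assumption-laden stand-in and not how Ragas or ARES compute their metrics; those frameworks typically rely on an LLM or a trained judge. The function names and the 0.6 threshold are hypothetical.

```python
import re
from typing import List, Set

_TOKEN = re.compile(r"[a-z0-9]+")


def _tokens(text: str) -> Set[str]:
    """Lowercased alphanumeric tokens of a text, as a set."""
    return set(_TOKEN.findall(text.lower()))


def split_sentences(text: str) -> List[str]:
    """Naive sentence splitter on ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def lexical_support(answer: str, contexts: List[str], threshold: float = 0.6) -> float:
    """Fraction of answer sentences whose token overlap with the
    retrieved contexts is at least `threshold` (a hypothetical cutoff)."""
    context_tokens: Set[str] = set()
    for chunk in contexts:
        context_tokens |= _tokens(chunk)

    sentences = split_sentences(answer)
    if not sentences:
        return 0.0

    supported = 0
    for sentence in sentences:
        sentence_tokens = _tokens(sentence)
        if not sentence_tokens:
            continue  # punctuation-only fragments count as unsupported
        overlap = len(sentence_tokens & context_tokens) / len(sentence_tokens)
        if overlap >= threshold:
            supported += 1
    return supported / len(sentences)


# Hypothetical example: the second sentence is not backed by the context.
contexts = ["Paris is the capital of France."]
answer = "Paris is the capital of France. It has nine million dragons."
print(lexical_support(answer, contexts))
```

A word-overlap score like this is cheap and deterministic, which makes it useful as a smoke test, but it cannot detect paraphrased hallucinations or reward correct paraphrases, so it should complement rather than replace a judge-based metric.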

Advanced RAG Evaluation: Monitoring Production Systems

This paper proposes a thorough, step-by-step framework for evaluating and monitoring RAG question-answering systems. It addresses challenges faced by practitioners, such as the abundance of available metrics and the need to decide among various trade-offs.
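One simple way to monitor a RAG system in production is to track a rolling average of a per-request quality score and flag when it drops below a threshold. The sketch below assumes each request already yields a score between 0 and 1 from some evaluator; the class name, window size, and threshold are hypothetical choices for illustration.

```python
from collections import deque


class RollingMetricMonitor:
    """Tracks the mean of the last `window` scores and flags degradation."""

    def __init__(self, window: int, threshold: float) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self.threshold = threshold
        self._scores = deque(maxlen=window)  # oldest scores drop off automatically

    def mean(self) -> float:
        """Mean of the scores currently in the window (0.0 if empty)."""
        if not self._scores:
            return 0.0
        return sum(self._scores) / len(self._scores)

    def record(self, score: float) -> bool:
        """Add a score; return True if the window is full and its mean
        has fallen below the threshold."""
        self._scores.append(score)
        window_full = len(self._scores) == self._scores.maxlen
        return window_full and self.mean() < self.threshold


# Hypothetical stream of per-request quality scores.
monitor = RollingMetricMonitor(window=3, threshold=0.7)
for score in [0.9, 0.8, 0.6, 0.5]:
    if monitor.record(score):
        print(f"alert: rolling mean {monitor.mean():.2f} is below threshold")
```

Waiting until the window is full before alerting avoids false alarms from the first few requests; a larger window smooths noise at the cost of slower detection.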
