Ollama Freephile Wiki

Ollama is a tool that lets users run large language models (LLMs) directly on their own computers, making powerful AI technology accessible without relying on cloud services. One project built on it is described as Karpathy's LLM wiki, 100% local with Ollama: drop in Markdown notes, the AI extracts concepts, and your Obsidian wiki auto-links and grows. Zero cloud. Zero sharing. Your notes stay yours. kytmanov obsidi.

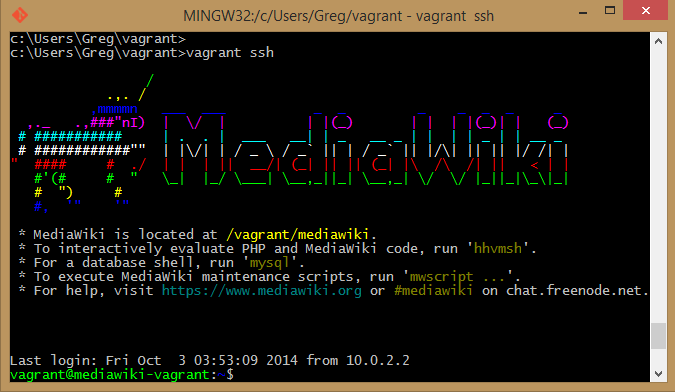

Ollama bills itself as the easiest way to automate your work using open models while keeping your data safe. It is an open-source framework that simplifies deploying and managing large language models (LLMs) locally, on personal computers and servers, and aims to give users a seamless experience across operating systems. It is also presented as the easiest way to get up and running with models such as gpt-oss, Gemma 3, DeepSeek-R1, Qwen3 and more.

Ollama is a local LLM runtime designed to run large language models on consumer hardware with minimal setup. It serves as both a model manager and an inference server.

Although you can download or install it from the repo on GitHub (github.com/ollama/ollama), you can also run it as a Docker image, ollama/ollama[1]. However, I ran into multiple issues with the Docker route and decided to go the straight install route instead. The install script begins:

    #!/bin/sh
    # This script installs Ollama on Linux.
    # It detects the current operating system architecture and installs the appropriate version of Ollama.

    set -eu

Ollama is designed for developers, researchers, and organizations who need offline AI usage, rapid experimentation, and data sovereignty. The platform supports macOS and Linux natively, with experimental support for Windows.
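Because Ollama acts as an inference server as well as a model manager, a running install can be queried over HTTP. The following is a minimal sketch in Python rather than an official client. It assumes the server is listening on its default address, `http://localhost:11434`, and uses the `/api/generate` and `/api/tags` endpoints of Ollama's REST API. The helper names (`build_generate_request`, `generate`, `list_models`) and the model tag `gemma3` are illustrative choices, not something taken from this page.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST request for the /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # With "stream": False the server replies with a single JSON object
    # whose "response" field holds the full completion.
    return body["response"]


def list_models(timeout: float = 10.0) -> list[str]:
    """Return the names of the models already downloaded to this machine."""
    with urllib.request.urlopen(f"{OLLAMA_URL}/api/tags", timeout=timeout) as response:
        body = json.load(response)
    return [model["name"] for model in body["models"]]
```

Calling `generate("gemma3", "Why is the sky blue?")` only works once the server is up and the model has been pulled; `list_models()` is a quick way to check which models are available locally. Keeping the request builder separate from the network call makes the payload easy to inspect without a running server.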
