Nvidia Eagle 2.5 Vision Language Model 8B Parameters Rival GPT-4o In

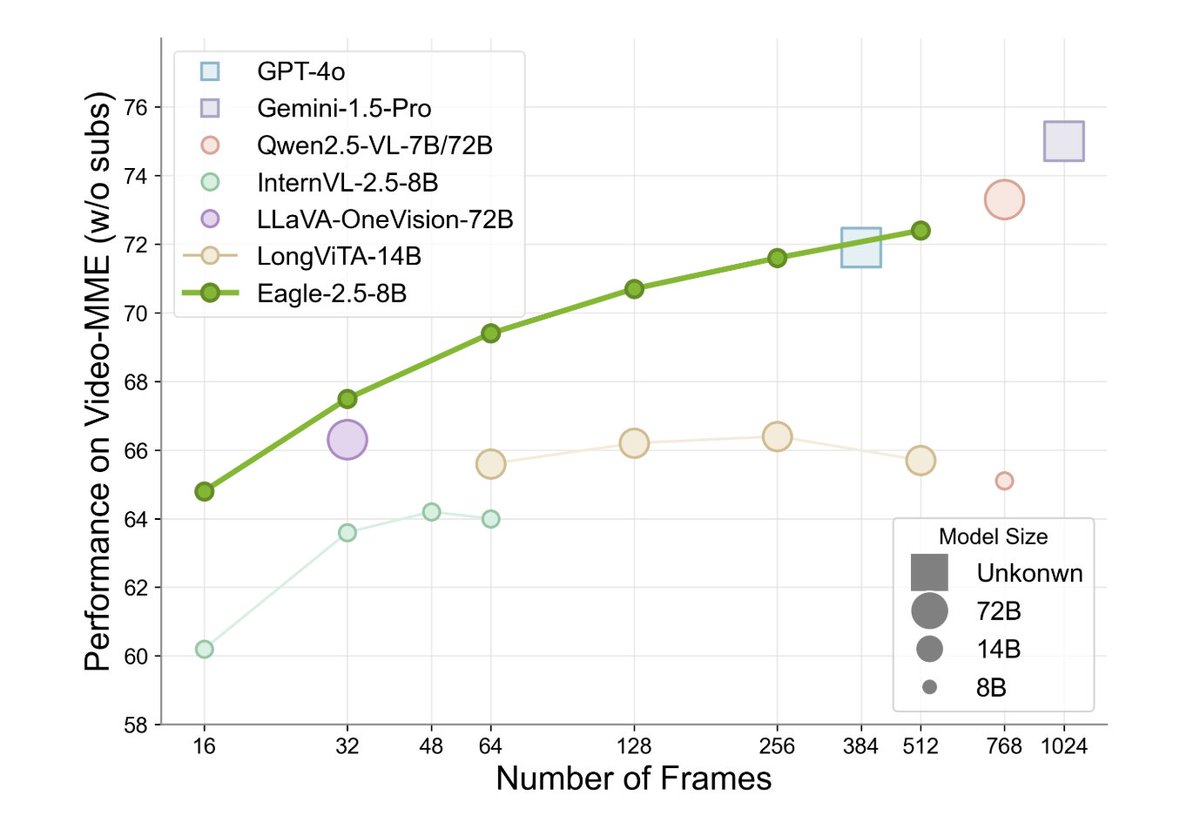

Nvidia Unveils Eagle 2.5 Vision Language Model With 8B Parameters

Notably, Nvidia's best model, Eagle 2.5-8B, achieves 72.4% on Video-MME with 512 input frames, matching the results of top-tier commercial models such as GPT-4o and large-scale open-source models like Qwen2.5-VL-72B and InternVL2.5-78B, despite having significantly fewer parameters.

Nvidia has unveiled Eagle 2.5, a compact 8B-parameter vision-language model (VLM) that achieves state-of-the-art performance on long-context video tasks. With just 8 billion parameters, it performs comparably to much larger models such as GPT-4o and Qwen2.5-VL-72B on long-video understanding, a result attributed to its training and data strategies. While most existing VLMs focus on short-context tasks, Eagle 2.5 addresses the challenges of long-video comprehension and high-resolution image understanding, providing a generalist framework for both.

According to its authors, the model achieves superior context coverage and shows consistent performance scaling as the number of input frames increases, attaining competitive results against larger models like GPT-4o and Qwen2.5-VL-72B while maintaining a significantly smaller parameter footprint.

Eagle 2.5 presents a technically grounded approach to long-context vision-language modeling. Its emphasis on preserving contextual integrity, gradual training adaptation, and dataset diversity enables it to achieve strong performance while maintaining architectural generality.
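The 512-frame setting implies a fixed frame budget drawn from videos that may contain far more frames. The source does not describe how Eagle 2.5 selects its input frames, so the following is only an illustrative sketch that assumes plain uniform sampling; the function name `sample_frame_indices` is hypothetical and not part of any Eagle release.

```python
def sample_frame_indices(total_frames: int, num_samples: int) -> list[int]:
    """Pick up to num_samples frame indices spread evenly across a video.

    Assumption: uniform sampling, chosen for illustration only.
    """
    if total_frames <= 0 or num_samples <= 0:
        return []
    # A short video fits entirely within the budget: keep every frame.
    if num_samples >= total_frames:
        return list(range(total_frames))
    # Split the video into num_samples equal-width segments and take
    # the frame at the midpoint of each segment.
    step = total_frames / num_samples
    return [int(step * i + step / 2) for i in range(num_samples)]


# Example: a one-hour video at 30 fps has 108,000 frames.
indices = sample_frame_indices(108_000, 512)
print(len(indices), indices[0], indices[-1])
```

Under this assumption, raising the frame budget shrinks the gap between sampled frames, which is one simple way to picture what "scaling with increasing frame counts" means for coverage of a long video.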

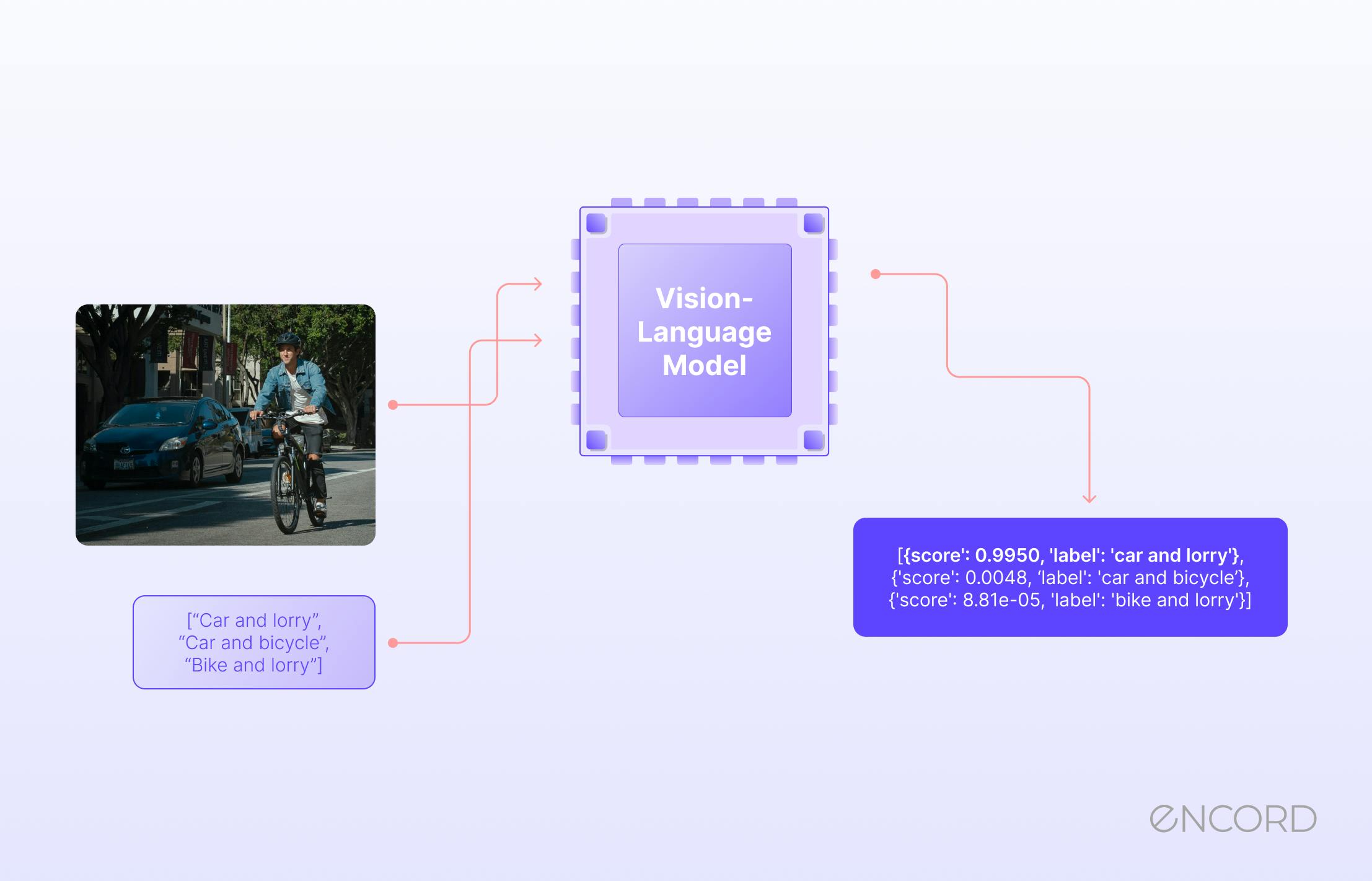

Long Context Multimodal Understanding No Longer Requires Massive Models
