NoiseQA: User-Centered QA Evaluation

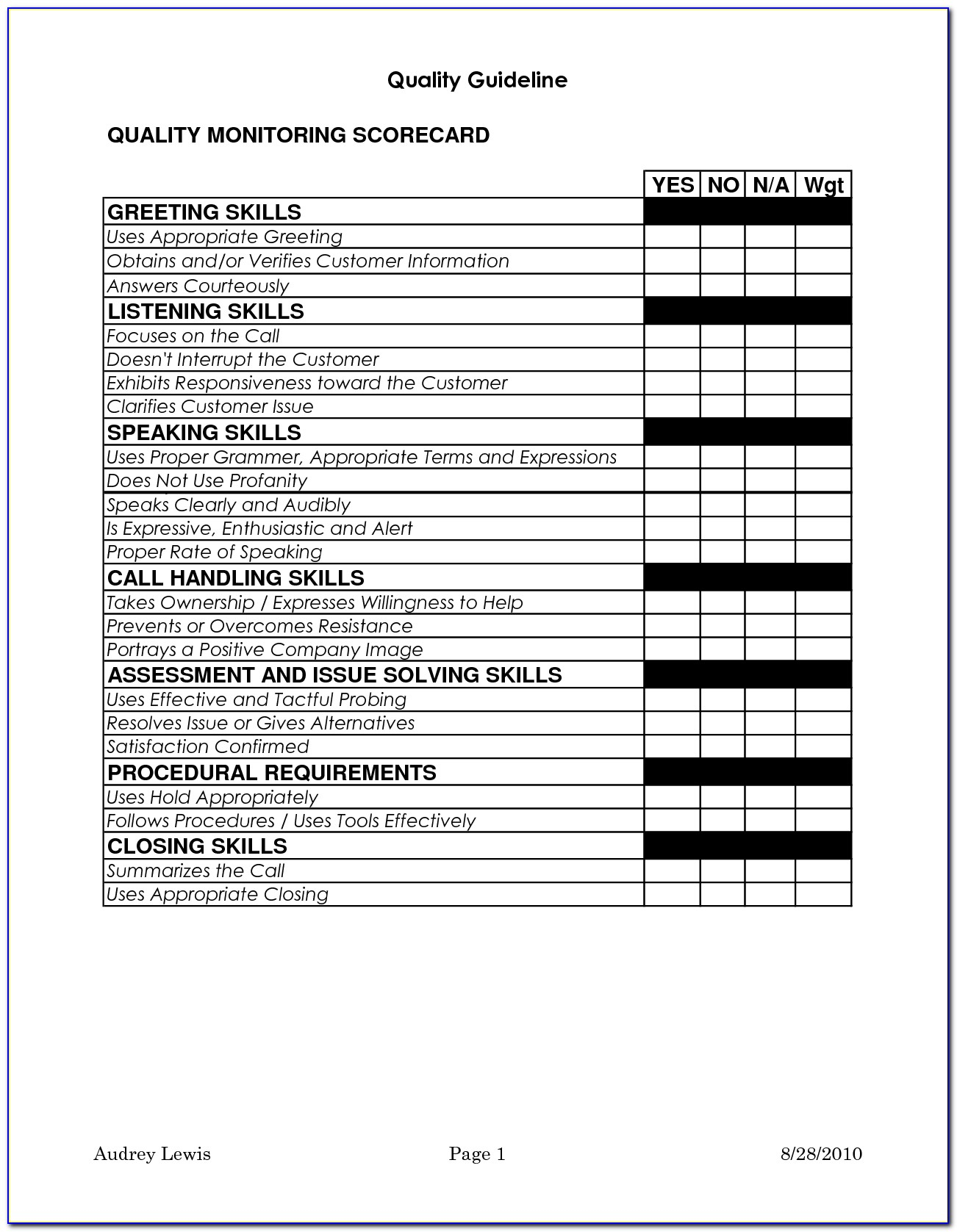

In this work, we advocate that practitioners construct a range of evaluations that reflect real-world usage scenarios and the potential users of their systems. We present a detailed description of interface noise and the associated ‘challenges of the channel’ for QA systems. For more information on how each dataset was created, please refer to our paper, NoiseQA: Challenge Set Evaluation for User-Centric Question Answering. All files are in JSON format and follow the original SQuAD dataset file format.
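Because the files follow the SQuAD layout, they can be read with nothing more than a JSON parser. The sketch below is a minimal example assuming the SQuAD v1.1 nesting (`data` → `paragraphs` → `qas` → `answers`). The names `QAExample`, `iter_squad_examples`, and `load_squad_file` are hypothetical helpers for illustration, not part of the NoiseQA release.

```python
import json
from typing import Iterator, List, NamedTuple


class QAExample(NamedTuple):
    """One question paired with its context passage and gold answer strings."""
    qid: str
    question: str
    context: str
    answers: List[str]


def iter_squad_examples(dataset: dict) -> Iterator[QAExample]:
    """Walk the nested SQuAD layout: data -> paragraphs -> qas -> answers.

    Assumes the SQuAD v1.1 field names; this is a hypothetical helper.
    """
    for article in dataset["data"]:
        for paragraph in article["paragraphs"]:
            context = paragraph["context"]
            for qa in paragraph["qas"]:
                yield QAExample(
                    qid=qa["id"],
                    question=qa["question"],
                    context=context,
                    # Keep only the answer text; answer_start is ignored here.
                    answers=[a["text"] for a in qa.get("answers", [])],
                )


def load_squad_file(path: str) -> List[QAExample]:
    """Read a SQuAD-format JSON file from disk and flatten it into a list."""
    with open(path, encoding="utf-8") as f:
        return list(iter_squad_examples(json.load(f)))
```

For example, `load_squad_file("path/to/noiseqa_file.json")` (a placeholder path) returns one `QAExample` per question, which can then be passed to whichever QA model is being evaluated. Flattening the nesting up front keeps the evaluation loop independent of the file format.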

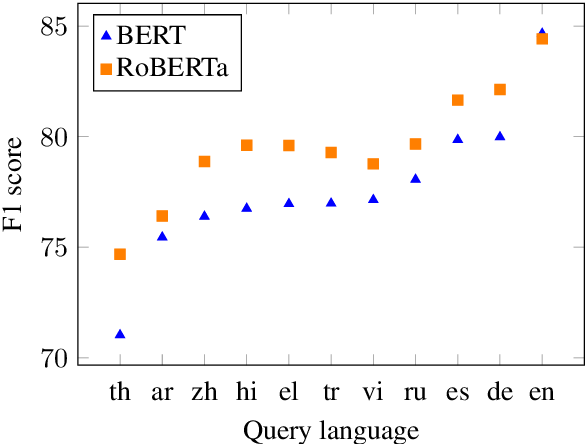

NoiseQA: Challenge Set Evaluation for User-Centric Question Answering

Abstract: When question answering (QA) systems are deployed in the real world, users query them through a variety of interfaces, such as speaking to voice assistants, typing questions into a search engine, or even translating questions into languages supported by the QA system.

We hope to encourage the development of future user-centered or participatory design approaches to building QA datasets and evaluations, where practitioners work with potential users to understand user requirements and the contexts in which systems are used in practice.

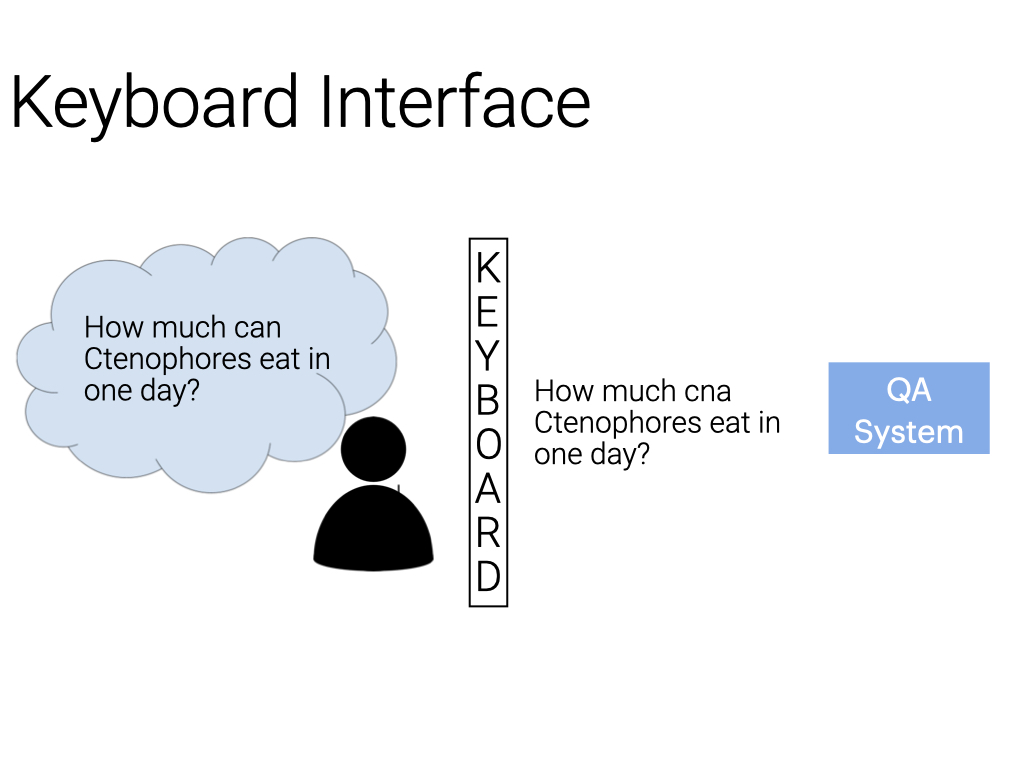

NoiseQA was introduced in the paper "NoiseQA: Challenge Set Evaluation for User-Centric Question Answering" by Abhilasha Ravichander and five other authors. The NoiseQA dataset introduces three different types of noise to each question:

- machine translation noise, to simulate errors that occur when users ask questions in a language other than the language(s) the QA model was trained on;
- keyboard noise, to simulate the effect of users making spelling errors while typing;
- automatic speech recognition (ASR) noise, to simulate errors introduced when users speak their questions to a voice interface.
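To make the idea of keyboard noise concrete, here is a hypothetical sketch that replaces letters with horizontally adjacent QWERTY keys at a fixed rate. It is an illustrative assumption only, not the procedure the NoiseQA authors used to create their keyboard-noise questions; `NEIGHBORS`, `add_keyboard_noise`, and its `rate` and `seed` parameters are invented for this example.

```python
import random
from typing import Dict, Optional

# Hypothetical adjacency model: lowercase QWERTY rows, left/right neighbors only.
_ROWS = ["qwertyuiop", "asdfghjkl", "zxcvbnm"]


def _build_neighbors() -> Dict[str, str]:
    """Map each letter to the string of keys directly left and right of it."""
    neighbors: Dict[str, str] = {}
    for row in _ROWS:
        for i, ch in enumerate(row):
            left = row[i - 1] if i > 0 else ""
            right = row[i + 1] if i + 1 < len(row) else ""
            neighbors[ch] = left + right
    return neighbors


NEIGHBORS = _build_neighbors()


def add_keyboard_noise(text: str, rate: float = 0.1, seed: Optional[int] = None) -> str:
    """Substitute each letter, with probability `rate`, by an adjacent key.

    Non-letters (spaces, punctuation, digits) are left unchanged, and the
    original letter case is preserved. Substitution only: no insertions,
    deletions, or transpositions are simulated.
    """
    rng = random.Random(seed)  # private RNG so a fixed seed is reproducible
    out = []
    for ch in text:
        lower = ch.lower()
        if lower in NEIGHBORS and rng.random() < rate:
            sub = rng.choice(NEIGHBORS[lower])
            out.append(sub.upper() if ch.isupper() else sub)
        else:
            out.append(ch)
    return "".join(out)
```

For example, `add_keyboard_noise("Where is Paris?", rate=0.1, seed=0)` produces a reproducible perturbed question that can be compared against the clean one. Real typing errors also include insertions, deletions, and transpositions, which this substitution-only sketch does not model.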
