No-Code Dataflow Apache Beam Pipeline Builder on Google Cloud

Running The Apache Beam Samples On Google Cloud Dataflow Apache Hop

You can use the Apache Beam SDK to build pipelines for Dataflow. This document lists some resources for getting started with Apache Beam programming. The first step is to install the Apache Beam SDK.

Learn how to build powerful data pipelines in Google Cloud Platform without writing a single line of code!

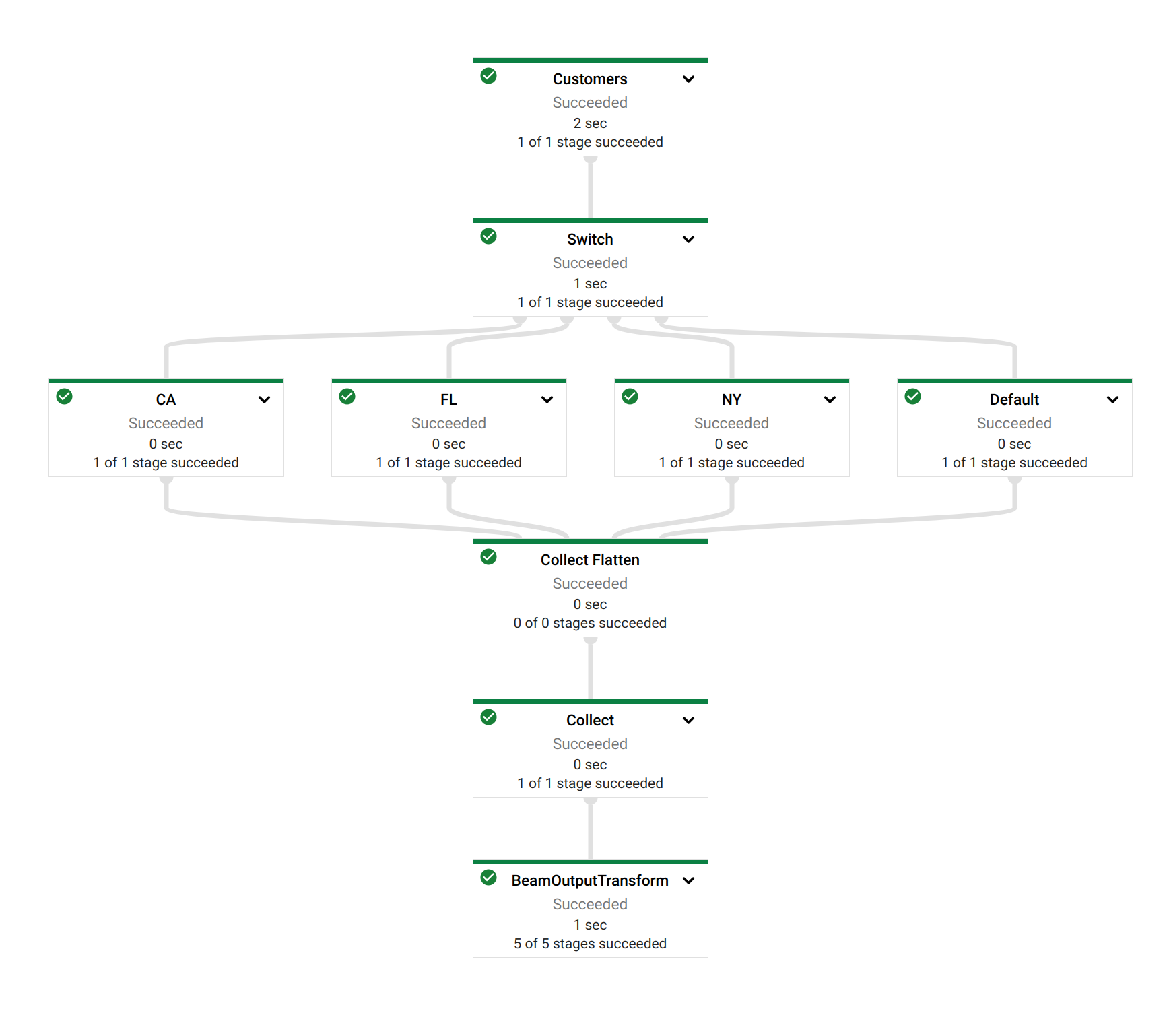

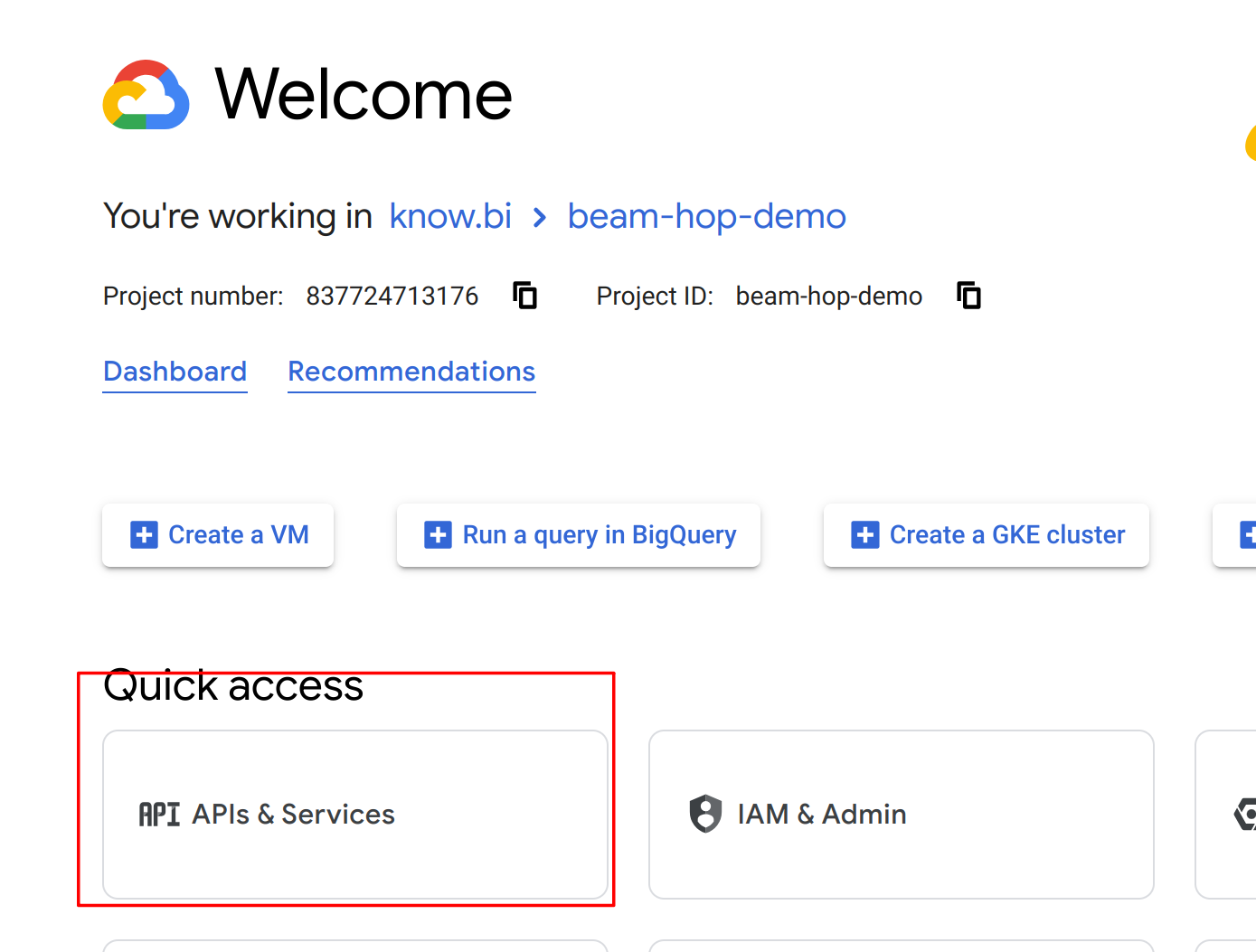

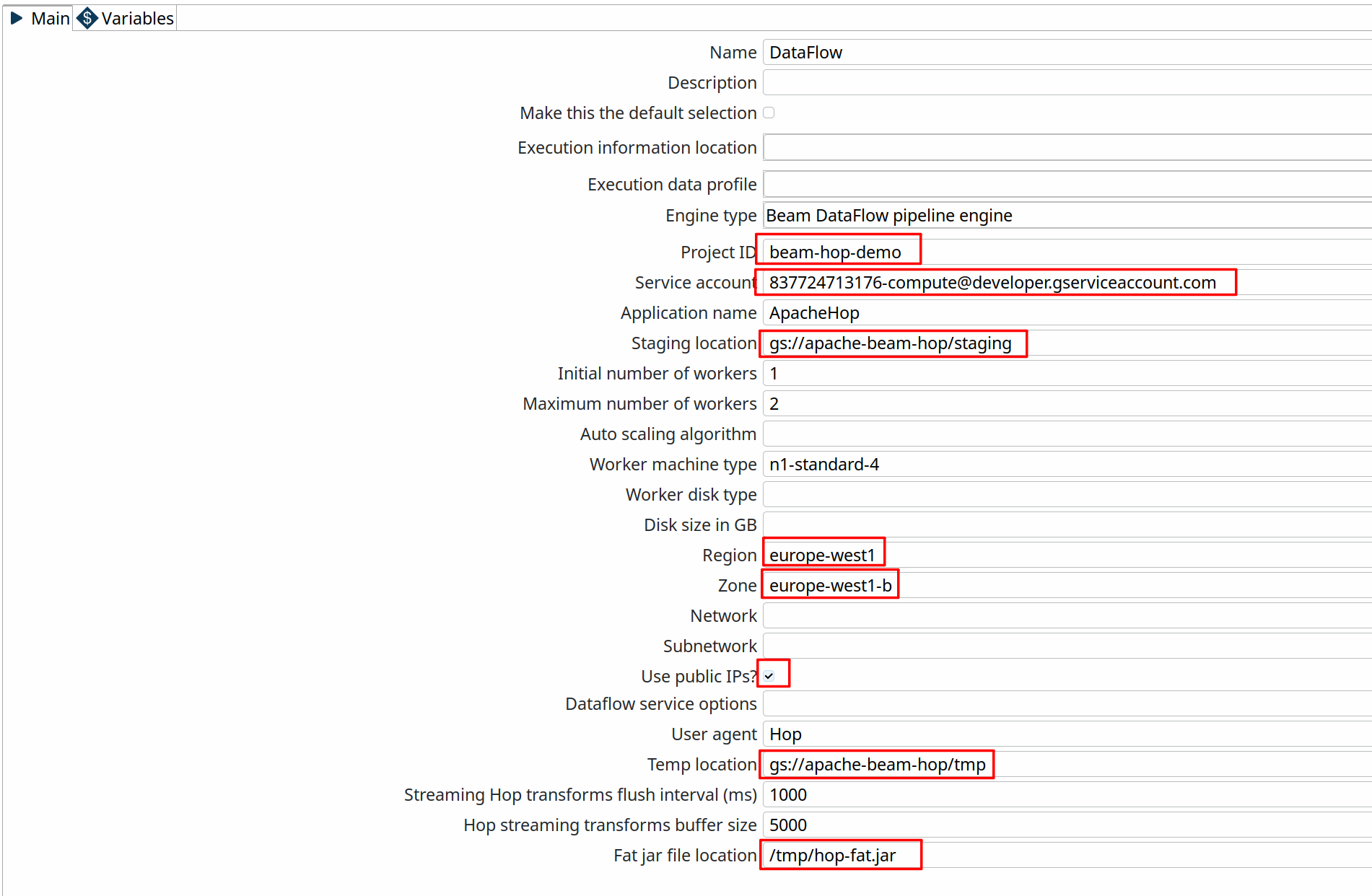

Learn how to build and run your first Apache Beam data processing pipeline on Google Cloud Dataflow with step-by-step examples. When you run your pipeline with the Cloud Dataflow service, the runner uploads your executable code and dependencies to a Google Cloud Storage bucket and creates a Cloud Dataflow job, which executes your pipeline on managed resources in Google Cloud Platform.

Now that we have set up our project and storage bucket, let's dive into writing and configuring our Apache Beam pipeline to run on Google Cloud Dataflow.

In this second installment of the Dataflow course series, we dive deeper into developing pipelines using the Beam SDK. We start with a review of Apache Beam concepts. Next, we discuss processing streaming data using windows, watermarks, and triggers.
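The setup described above (a project, a storage bucket, and the Dataflow runner) is usually expressed as pipeline options. The following is a minimal sketch in Python, not a definitive implementation: the helper names `dataflow_flags` and `run`, the `staging` and `temp` folder names, and the placeholder project, region, and bucket values are all assumptions made for illustration. The flags themselves (`--runner`, `--project`, `--region`, `--job_name`, `--staging_location`, `--temp_location`) are standard Beam options for Dataflow.

```python
from typing import List


def dataflow_flags(project: str, region: str, bucket: str, job_name: str) -> List[str]:
    """Build command-line style flags for a Beam pipeline targeting Dataflow.

    Hypothetical helper: the folder names under the bucket are an assumption.
    """
    return [
        "--runner=DataflowRunner",
        f"--project={project}",
        f"--region={region}",
        f"--job_name={job_name}",
        # The runner stages code and dependencies under these Cloud Storage paths.
        f"--staging_location=gs://{bucket}/staging",
        f"--temp_location=gs://{bucket}/temp",
    ]


def run(flags: List[str]) -> None:
    """Run a tiny word-count pipeline with the given flags."""
    # Imported lazily so dataflow_flags() can be used without Beam installed.
    import apache_beam as beam
    from apache_beam.options.pipeline_options import PipelineOptions

    options = PipelineOptions(flags)
    with beam.Pipeline(options=options) as pipeline:
        (
            pipeline
            | "Create" >> beam.Create(["beam", "dataflow", "beam"])
            | "PairWithOne" >> beam.Map(lambda word: (word, 1))
            | "CountPerWord" >> beam.CombinePerKey(sum)
            | "Print" >> beam.Map(print)
        )


if __name__ == "__main__":
    # Placeholder values; replace them with your own project, region, and bucket.
    flags = dataflow_flags("my-project", "us-central1", "my-bucket", "wordcount-sketch")
    print(flags)
    # run(flags)  # submits a Dataflow job; requires credentials and billing
```

To try the same pipeline locally before submitting a job, calling `run(["--runner=DirectRunner"])` should execute it on the local machine, assuming the Apache Beam SDK is installed.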

Discover how to automate data pipelines with Apache Beam on Google Cloud Dataflow to enhance reliability, scalability, and efficiency in your data workflows.

Master Google Dataflow with hands-on projects, from Apache Beam basics to advanced streaming and batch data pipelines. Are you looking to master Google Dataflow and Apache Beam to build scalable, production-ready data pipelines on Google Cloud Platform (GCP)?

Dataflow is a powerful, serverless, and scalable tool for real-time and batch data processing. Built on Apache Beam, it provides a unified way to build and execute ETL pipelines on Google Cloud.

If you are a Java developer who wants to build an ETL pipeline using Apache Beam and deploy it to the Dataflow service on Google Cloud Platform, follow us at @crosscutdata to build and deploy your first ETL pipeline with a lean codebase.
