NLP Classifier Model Metrics

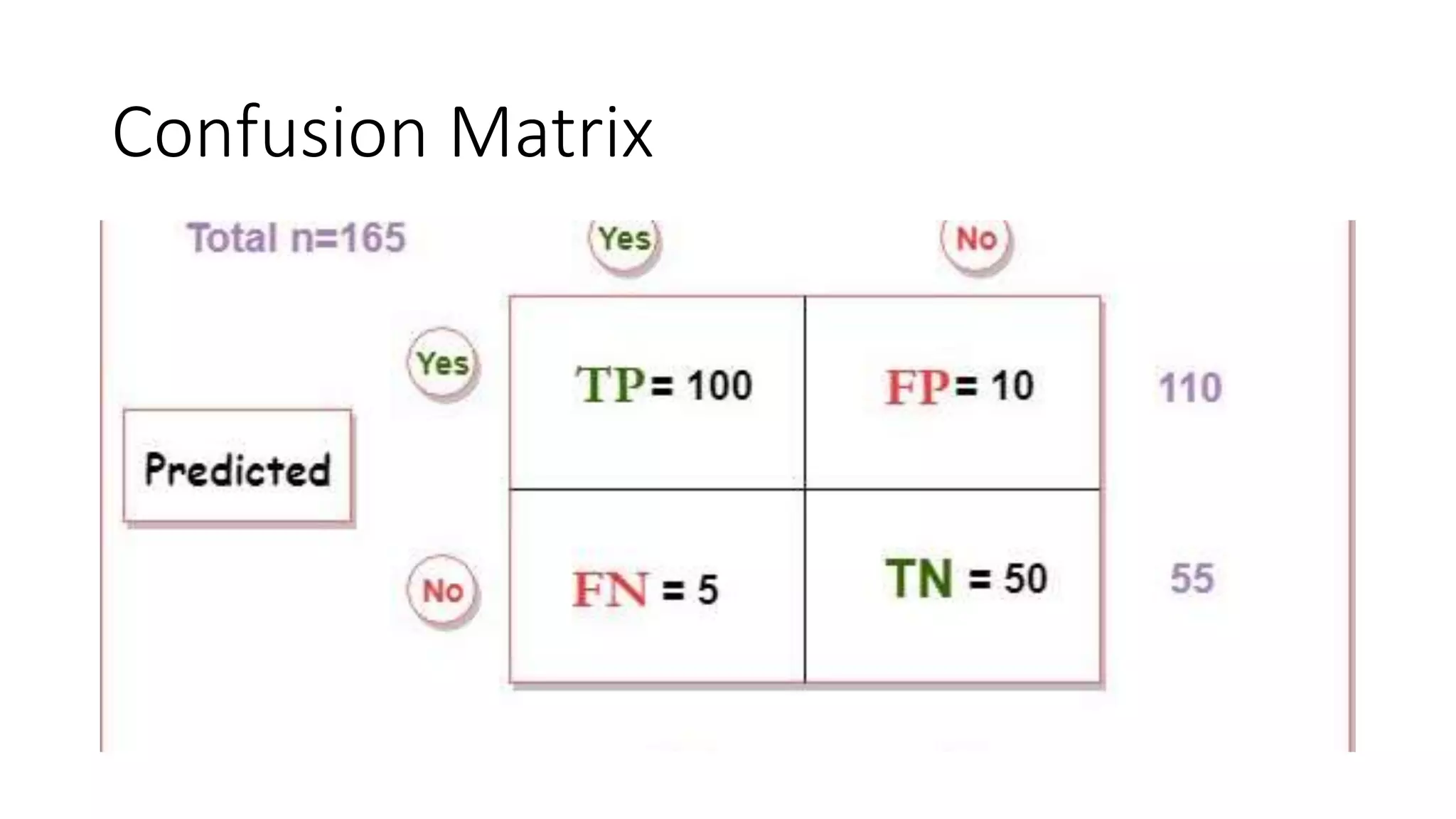

Discover how models for language tasks such as text classification, text generation, or machine translation can be evaluated. This article offers an in-depth exploration of essential classification metrics such as precision, recall, and F1 score, along with introductions to further metrics and benchmarks. Some of the most widely used metrics for measuring classifier performance in NLP tasks are accuracy, the F1 measure, and the area under the receiver operating characteristic curve (AUC-ROC).
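To make these definitions concrete, here is a minimal sketch in plain Python (no scikit-learn or Keras, so it runs anywhere) that computes precision, recall, and F1 from binary labels, and AUC-ROC from predicted scores. It assumes labels are encoded as 1 (positive) and 0 (negative); the function names and the toy data are hypothetical and chosen only for illustration. AUC-ROC is computed through its ranking interpretation: the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one.

```python
from typing import Sequence


def precision_recall_f1(
    y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1
) -> tuple[float, float, float]:
    """Binary precision, recall and F1 score for the label given as `positive`."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)  # true positives
    fp = sum(1 for t, p in pairs if t != positive and p == positive)  # false positives
    fn = sum(1 for t, p in pairs if t == positive and p != positive)  # false negatives
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def roc_auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC-ROC as P(random positive outscores random negative); ties count as 0.5."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC-ROC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: 1 = positive sentiment, 0 = negative sentiment.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
scores = [0.9, 0.2, 0.4, 0.8, 0.6, 0.1, 0.7, 0.3]  # model's probability of class 1
y_pred = [1 if s >= 0.5 else 0 for s in scores]    # hard labels at a 0.5 threshold

print(precision_recall_f1(y_true, y_pred))  # (0.75, 0.75, 0.75)
print(roc_auc(y_true, scores))              # 0.9375
```

Note that precision, recall, and F1 depend on the chosen threshold, while AUC-ROC summarizes ranking quality across all thresholds. The pairwise loop is quadratic in the number of examples, which is fine for illustration; library implementations such as scikit-learn's `roc_auc_score` work from sorted scores instead.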

Different NLP tasks require different evaluation metrics. BLEU is well suited to machine translation, ROUGE to summarization, and for classification the balance between precision and recall is key.

To evaluate the performance of classification models, we use the following metrics:

1. Accuracy is a fundamental metric for evaluating a classification model. It is the proportion of correct predictions out of all predictions the model makes.

Determining whether the model used for a specific task is successful depends on two key factors. In this article, I focus only on the first: selecting the correct evaluation metric. Which metric to use depends on the type of NLP task. Performance metrics provide a quantitative lens for evaluating and improving the NLP models that power business applications such as customer sentiment analysis, chatbots, and automated content generation.
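The following sketch computes accuracy as the share of correct predictions, again in plain Python with hypothetical function names and toy data (labels 1 and 0). The data are deliberately imbalanced: a degenerate model that always predicts the majority class scores high accuracy while never finding a single positive example, which illustrates why the precision-recall balance matters for classification.

```python
from typing import Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Proportion of predictions that exactly match the true label."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("accuracy is undefined for an empty dataset")
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


def recall(y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1) -> float:
    """Share of actual positives that the model labels as positive."""
    preds_for_positives = [p for t, p in zip(y_true, y_pred) if t == positive]
    if not preds_for_positives:
        return 0.0
    hits = sum(1 for p in preds_for_positives if p == positive)
    return hits / len(preds_for_positives)


# Hypothetical imbalanced data: 5 positives, 95 negatives.
y_true = [1] * 5 + [0] * 95
# A degenerate "model" that always predicts the majority class (0).
y_pred = [0] * 100

print(accuracy(y_true, y_pred))  # 0.95
print(recall(y_true, y_pred))    # 0.0
```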

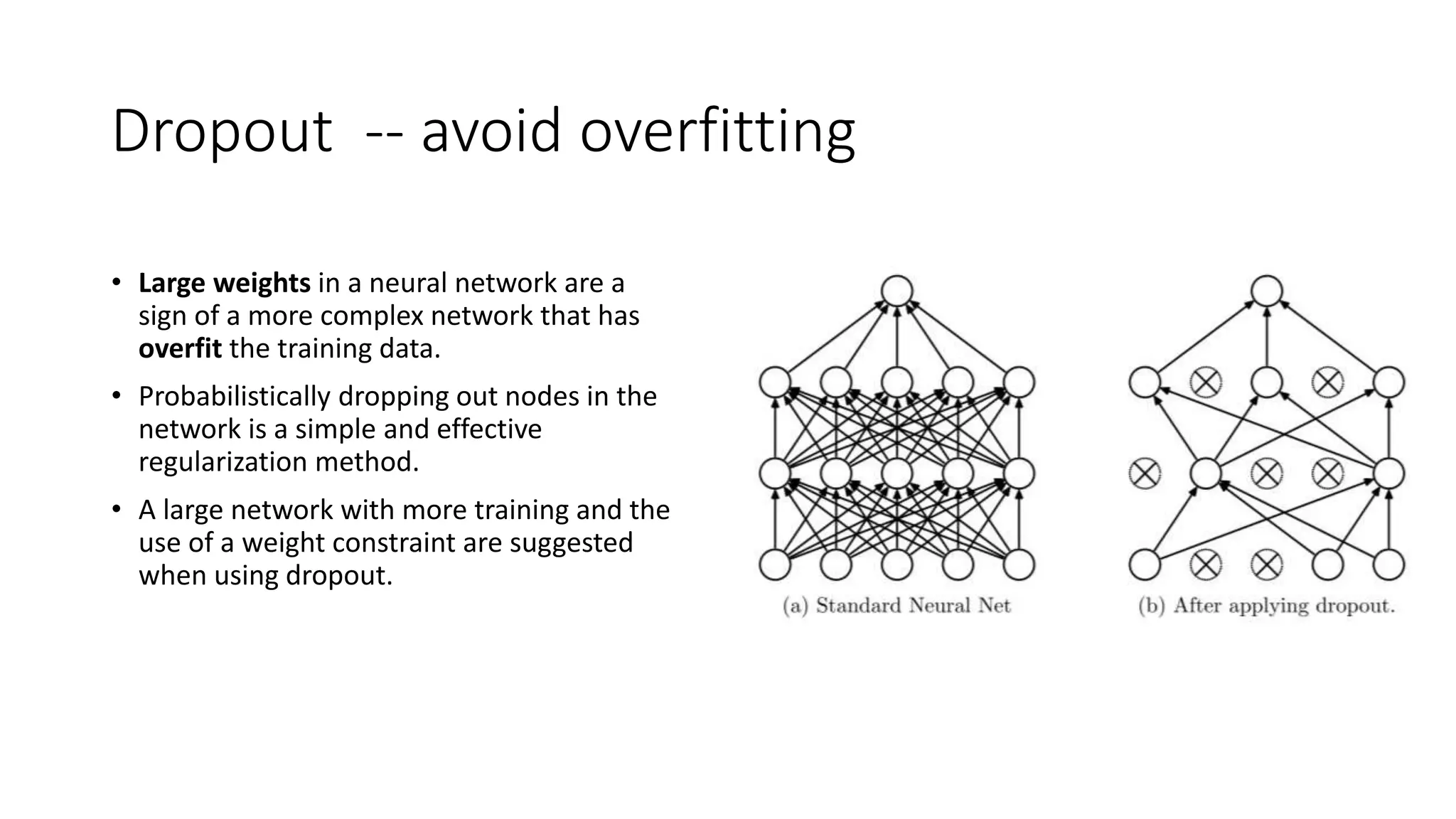

Along with these concepts, I will also show code snippets in Keras for building the classifier, and I will conclude with some of the metrics commonly used to measure the classifier's performance.

Beyond classification, this article explores key metrics for assessing natural language processing models, helping data scientists measure performance, accuracy, and reliability across NLP tasks. It discusses the strengths and weaknesses of each metric and offers guidance on choosing the right metrics for computational linguistics projects. In particular, I will introduce four popular evaluation metrics for NLP models: ROUGE, BLEU, METEOR, and BERTScore. Evaluating LLMs is critical to understanding their capabilities and limitations across different tasks.
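METEOR and BERTScore depend on external resources (stemmers and synonym lists for METEOR, a pretrained transformer model for BERTScore), so they are left out here. The sketch below is a simplified illustration of the other two in plain Python: ROUGE-1 as unigram-overlap precision, recall, and F1, and sentence-level BLEU against a single reference, using clipped ("modified") n-gram precisions and a brevity penalty. It assumes lowercase whitespace tokenization and applies no smoothing; the function names and example sentences are hypothetical. For reported results, an established implementation such as sacreBLEU or the `rouge-score` package is the safer choice, since those handle details like standardized tokenization that this sketch ignores.

```python
import math
from collections import Counter


def ngrams(tokens: list[str], n: int) -> Counter:
    """Count the n-grams (as tuples) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_1(candidate: str, reference: str) -> dict[str, float]:
    """ROUGE-1: unigram-overlap precision, recall and F1 (lowercased whitespace tokens)."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    # Counter & Counter keeps the minimum count of each shared unigram.
    overlap = sum((Counter(cand) & Counter(ref)).values())
    p = overlap / len(cand) if cand else 0.0
    r = overlap / len(ref) if ref else 0.0
    f1 = 2 * p * r / (p + r) if p + r else 0.0
    return {"precision": p, "recall": r, "f1": f1}


def bleu(candidate: str, reference: str, max_n: int = 4) -> float:
    """Sentence-level BLEU against one reference, uniform n-gram weights, no smoothing."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    if not cand:
        return 0.0
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand_counts = ngrams(cand, n)
        total = sum(cand_counts.values())
        # Modified precision: clip each candidate n-gram count at its reference count.
        clipped = sum((cand_counts & ngrams(ref, n)).values())
        if clipped == 0:
            return 0.0  # one zero precision makes the unsmoothed geometric mean zero
        log_precision_sum += math.log(clipped / total)
    # Brevity penalty: 1 if the candidate is longer than the reference, else exp(1 - r/c).
    bp = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    return bp * math.exp(log_precision_sum / max_n)


reference = "the cat sat on the mat"
candidate = "the cat is on the mat"

print(round(rouge_1(candidate, reference)["f1"], 3))  # 0.833
print(bleu(candidate, reference))                      # 0.0 (no matching 4-gram)
print(round(bleu(candidate, reference, max_n=2), 3))   # 0.707
```

Without smoothing, a single n-gram order with no matches drives BLEU to zero, which is why the 4-gram score for this short pair is 0.0 while the bigram variant is not.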
