Neural Networks 8.1: Sparse Coding Definition

Supervised Deep Sparse Coding Networks (DeepAI)

Sparse coding, in simple terms, is a machine learning approach in which a dictionary of basis functions is learned and then used to represent each input as a linear combination of a small number of those basis functions.
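The definition above can be illustrated with a minimal NumPy sketch. It assumes a fixed, randomly generated dictionary `D` (in practice the dictionary is learned) and uses ISTA, one common solver for the L1-penalised reconstruction objective; the solver choice, the function names and the values of `lam` and `n_iter` are illustrative assumptions, not something the source prescribes.

```python
import numpy as np

def soft_threshold(v, t):
    """Shrink every entry of v towards zero by t (proximal map of the L1 norm)."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def objective(x, D, a, lam):
    """0.5 * ||x - D a||^2 + lam * ||a||_1"""
    return 0.5 * np.sum((x - D @ a) ** 2) + lam * np.sum(np.abs(a))

def ista_sparse_code(x, D, lam=0.05, n_iter=2000):
    """Approximate the sparse code of x under dictionary D with ISTA."""
    step_inv = np.linalg.norm(D, 2) ** 2   # Lipschitz constant of the gradient
    a = np.zeros(D.shape[1])
    for _ in range(n_iter):
        grad = D.T @ (D @ a - x)           # gradient of the reconstruction term
        a = soft_threshold(a - grad / step_inv, lam / step_inv)
    return a

# Hypothetical toy data: 50 unit-norm atoms in 20 dimensions (assumption).
rng = np.random.default_rng(0)
D = rng.standard_normal((20, 50))
D /= np.linalg.norm(D, axis=0)

# Build an input from only three atoms, then try to recover a sparse code.
a_true = np.zeros(50)
a_true[[3, 17, 41]] = [1.5, -2.0, 1.0]
x = D @ a_true

a_hat = ista_sparse_code(x, D)
print("non-zero coefficients:", np.count_nonzero(a_hat))
print("reconstruction error:", np.linalg.norm(x - D @ a_hat))
```

The columns of `D` play the role of the basis functions, and `a_hat` holds the coefficients of the linear combination. A larger `lam` pushes more coefficients to exactly zero at the cost of a larger reconstruction error.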

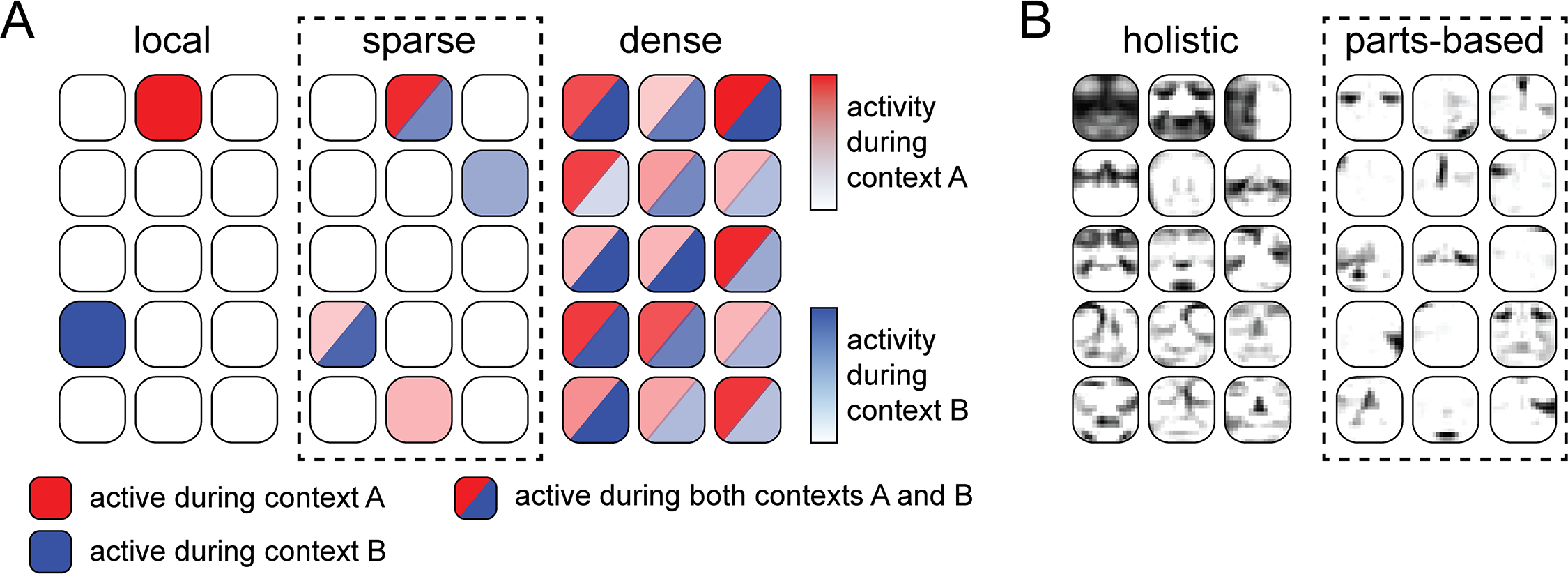

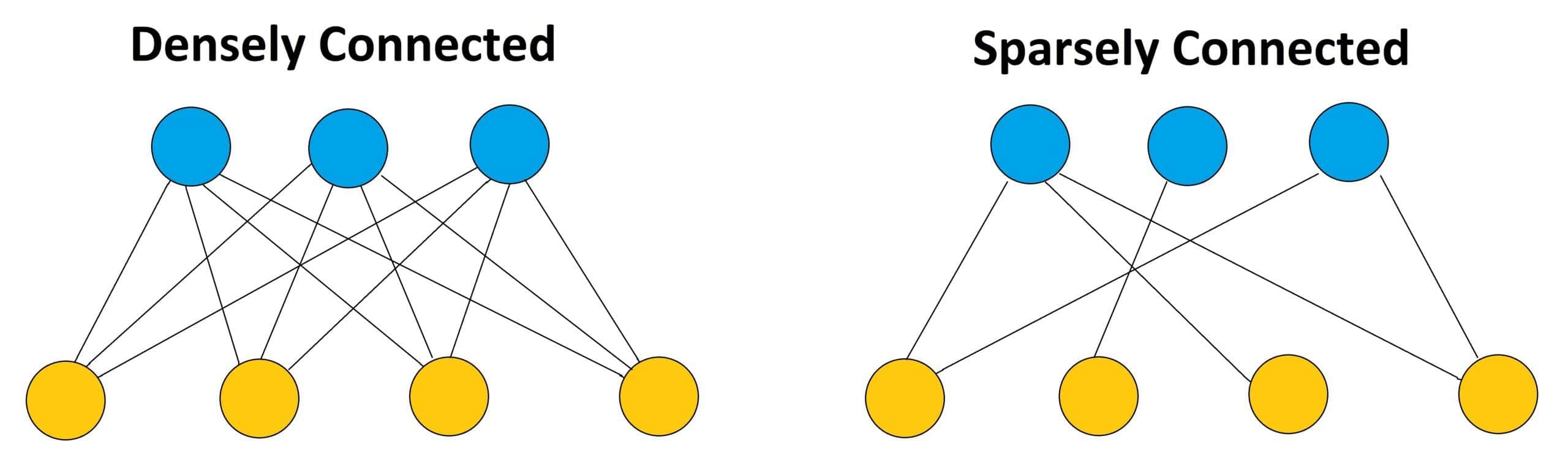

Neural Correlates Of Sparse Coding And Dimensionality Reduction

Unsupervised learning can be used to:

‣ automatically extract meaningful features for your data
‣ leverage the availability of unlabeled data
‣ add a data-dependent regularizer to training

We will see 3 neural networks for unsupervised learning:

‣ restricted Boltzmann machines
‣ autoencoders
‣ sparse coding model

In ordinary dense neural networks, each neuron is connected to every neuron in the next layer. In sparse models, each neuron connects to only a few neurons in the next layer.

"Sparse coding" is a computational hypothesis about visual (and auditory) cortex: sensory neurons efficiently represent "sparse" features and feature combinations.

Here, we define sparsity as having few non-zero components or having few components not close to zero. The requirement that the coefficients $a_i$ be sparse means that, given an input vector, we would like as few of the coefficients as possible to be far from zero.
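The two readings of sparsity given above (few non-zero components, or few components not close to zero) can be made concrete with a small sketch. The helper name `count_active` and the tolerance value are hypothetical choices made for illustration.

```python
import numpy as np

def count_active(a, tol=0.0):
    """Count the coefficients whose magnitude exceeds tol.

    tol = 0 gives the strict reading (non-zero components);
    tol > 0 gives the relaxed reading (components not close to zero).
    """
    return int(np.sum(np.abs(a) > tol))

# Hypothetical coefficient vector with one tiny but non-zero entry.
a = np.array([0.0, 1.2, 0.0, -0.003, 0.0, 0.7])

strict = count_active(a)             # counts 1.2, -0.003 and 0.7
relaxed = count_active(a, tol=1e-2)  # ignores -0.003
print(strict, relaxed)
```

Under the strict reading the vector has three active coefficients; under the relaxed reading with a tolerance of 0.01 it has two.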

The Concepts Of Dense And Sparse In The Context Of Neural Networks

Sparse neural networks are neural architectures in which a substantial portion of the connections or neurons is eliminated, leaving only the most relevant components.

In this paper, we consider the area of sparse coding. The sparse coding problem can be viewed as a linear regression problem with the additional assumption that the majority of the basis representation coefficients should be zero.

In linear networks, an analog coding scheme of the task emerges; in nonlinear networks, strong spontaneous symmetry breaking leads to either redundant or sparse coding schemes.

The problem of similarity-based sparse coding has already been treated systematically by Stellmann (1992), who investigated methods that can generate roughly similarity-preserving sparse binary code vectors from a given similarity matrix.
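One simple way to obtain a sparse network from a dense one is magnitude pruning: keep only the largest-magnitude weights of a layer and zero the rest. The source does not say how connections are eliminated, so the sketch below is an assumption; the function name `magnitude_prune`, the layer shape and the 25% keep fraction are all hypothetical.

```python
import numpy as np

def magnitude_prune(W, keep_fraction):
    """Zero all but the largest-magnitude entries of a weight matrix.

    Returns the pruned weights and the boolean mask of kept connections.
    """
    k = max(1, int(round(keep_fraction * W.size)))   # number of weights to keep
    threshold = np.sort(np.abs(W), axis=None)[-k]    # k-th largest magnitude
    mask = np.abs(W) >= threshold
    return W * mask, mask

# Hypothetical dense layer: 8 inputs fully connected to 16 outputs.
rng = np.random.default_rng(0)
W = rng.standard_normal((8, 16))

W_sparse, mask = magnitude_prune(W, keep_fraction=0.25)
print("connections kept:", int(mask.sum()), "of", W.size)
```

Here the mask marks the surviving connections, so each input neuron ends up connected to only a subset of the output neurons, in contrast to the dense layer it started from.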

