Neural Network Infers 3D Human-Object Interactions

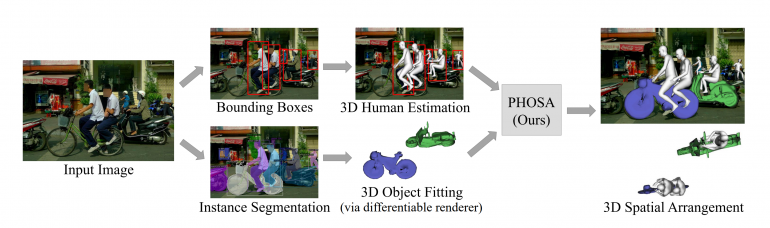

Researchers from Carnegie Mellon University, Facebook AI Research, Argo AI, and the University of California have developed a method that extracts 3D humans and objects, along with their spatial relationships, from a single in-the-wild input image.

Building on MMHOI, we present MMHOI-Net, an end-to-end transformer-based neural network for jointly estimating human-object 3D geometries, their interactions, and associated actions.

3D Convolutional Neural Networks for Human Action Recognition

Learning 3D human-object interactions (HOI) from 2D images is one of the important approaches for understanding human-object interactions in 3D space and is crucial for the. We propose a novel framework for detecting 3D human-object interactions (HOI) in construction sites and a toolkit for generating construction-related human-object interaction graphs. We present DreamHOI, a novel method for zero-shot synthesis of human-object interactions (HOIs), enabling a 3D human model to realistically interact with any given object based on a textual description. This paper addresses the task of detecting and recognizing human-object interactions (HOI) in images and videos. We introduce the Graph Parsing Neural Network (GPNN), a framework that incorporates structural knowledge while being differentiable end-to-end.

Learning Human-Object Interactions by Graph Parsing Neural Networks

Humans initially learn about objects through the sense of touch, in a process called "haptic exploration." In this paper, we present a neural network model of this learning process. Human action recognition and motion forecasting are becoming increasingly successful, in particular by utilizing graphs. We aim to transfer this success into t. This paper takes the initiative and showcases the potential of generating human-object interactions without direct training on text-interaction pair data. Our key insight in achieving this is that interaction semantics and dynamics can be decoupled. We explore human-object interaction when only the cues of the human pose interacting with an unobserved object are available ('input'). We propose a neural model that, for the first time, can infer the location of the object ('result') from such input.

