Music Generation Design Project Github

One of the projects covered here describes its purpose as supporting reproducible research and helping junior researchers and engineers get started in audio, music, and speech generation research and development.

ACE-Step is introduced as a novel open-source foundation model for music generation that, according to its authors, overcomes key limitations of existing approaches and achieves state-of-the-art performance through a holistic architectural design.

Github Tranphuminhbkhn Musicgeneration

The MusicGen decoder is a pure language model architecture, trained from scratch on the task of music generation. The novelty of the MusicGen model lies in how the audio codes are predicted.

In this blog post, we will explore the fundamental concepts of music generation using PyTorch on GitHub and discuss usage methods, common practices, and best practices to help you get started with your own music generation projects.

This paper presents XMusic, a generalized symbolic music generation framework that supports flexible prompts (i.e., images, videos, texts, tags, and humming) to generate emotionally controllable, high-quality symbolic music.

AI-powered VST3 for real-time music generation: generate tempo-synced loops, trigger via MIDI, sculpt the unexpected. 8-track sampler meets infinite sound engine.
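The MusicGen snippet above says the novelty is in how the audio codes are predicted but does not spell it out. As commonly described for MusicGen, the audio codec produces several codebooks per time step, and each codebook is shifted by a small delay so that one decoder step can predict all of them in parallel. The following is a minimal sketch of that delay-style interleaving in plain Python. It is an illustration under those assumptions, not the model's actual implementation; the function names and the `PAD` value are hypothetical.

```python
PAD = -1  # hypothetical placeholder id for positions with no code yet


def apply_delay_pattern(codes, pad=PAD):
    """Shift codebook k to the right by k steps.

    codes: list of K lists, each holding T integer codes.
    Returns K lists of length T + K - 1, padded where no code exists.
    """
    num_codebooks = len(codes)
    num_steps = len(codes[0])
    delayed = []
    for k, row in enumerate(codes):
        left = [pad] * k                        # delay of k steps
        right = [pad] * (num_codebooks - 1 - k)  # fill to a common length
        delayed.append(left + list(row) + right)
    return delayed


def undo_delay_pattern(delayed):
    """Invert apply_delay_pattern and recover the aligned K x T codes."""
    num_codebooks = len(delayed)
    num_steps = len(delayed[0]) - num_codebooks + 1
    return [row[k:k + num_steps] for k, row in enumerate(delayed)]


if __name__ == "__main__":
    codes = [[1, 2, 3], [4, 5, 6]]  # 2 codebooks, 3 time steps
    delayed = apply_delay_pattern(codes)
    print(delayed)                   # [[1, 2, 3, -1], [-1, 4, 5, 6]]
    print(undo_delay_pattern(delayed) == codes)  # True
```

Reading the delayed grid column by column gives the tokens a decoder would emit at each step; undoing the shift restores the aligned codes that would be passed to the audio codec for decoding.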

Github Fagami1423 Music Generation

In this example we built a prototype of a real-time musical performance device based on the mingus stemgen model. The application allows looping of 4 channels of audio, with the ability to apply reverb, delay, and a DJ-style lowpass/highpass filter to each channel.

Music generation using a long short-term memory (LSTM) neural network: the gennhausser project uses the TensorFlow and music21 libraries to create a synthetic dataset, train an LSTM model, and generate music sequences.

A curated compilation of AI-driven generative music resources and projects. Explore the blend of machine learning algorithms and musical creativity.

The ACE-Step abstract notes that current methods face inherent trade-offs between generation speed, musical coherence, and controllability.
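The LSTM pipeline mentioned above (build a dataset, train a model, generate sequences) usually starts by turning a note sequence into fixed-length training windows. The sketch below shows only that data-preparation step in plain Python, assuming notes are given as pitch-name strings such as those a score parser might produce. It is not taken from the gennhausser project; the function name, window size, and example notes are hypothetical.

```python
def make_training_windows(notes, window=4):
    """Slide a fixed-length window over a note sequence.

    Each input is `window` consecutive note ids; the target is the id of
    the note that follows. Returns (inputs, targets, note_to_id).
    """
    vocab = sorted(set(notes))
    note_to_id = {name: idx for idx, name in enumerate(vocab)}
    ids = [note_to_id[name] for name in notes]

    inputs, targets = [], []
    for start in range(len(ids) - window):
        inputs.append(ids[start:start + window])
        targets.append(ids[start + window])
    return inputs, targets, note_to_id


if __name__ == "__main__":
    melody = ["C4", "E4", "G4", "C5", "G4", "E4"]  # made-up example melody
    x, y, mapping = make_training_windows(melody, window=4)
    print(mapping)  # {'C4': 0, 'C5': 1, 'E4': 2, 'G4': 3}
    print(x)        # [[0, 2, 3, 1], [2, 3, 1, 3]]
    print(y)        # [3, 2]
```

The resulting integer windows are the kind of input a sequence model would be trained on to predict the next note; generation then repeats the prediction step and appends each predicted note to the window.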

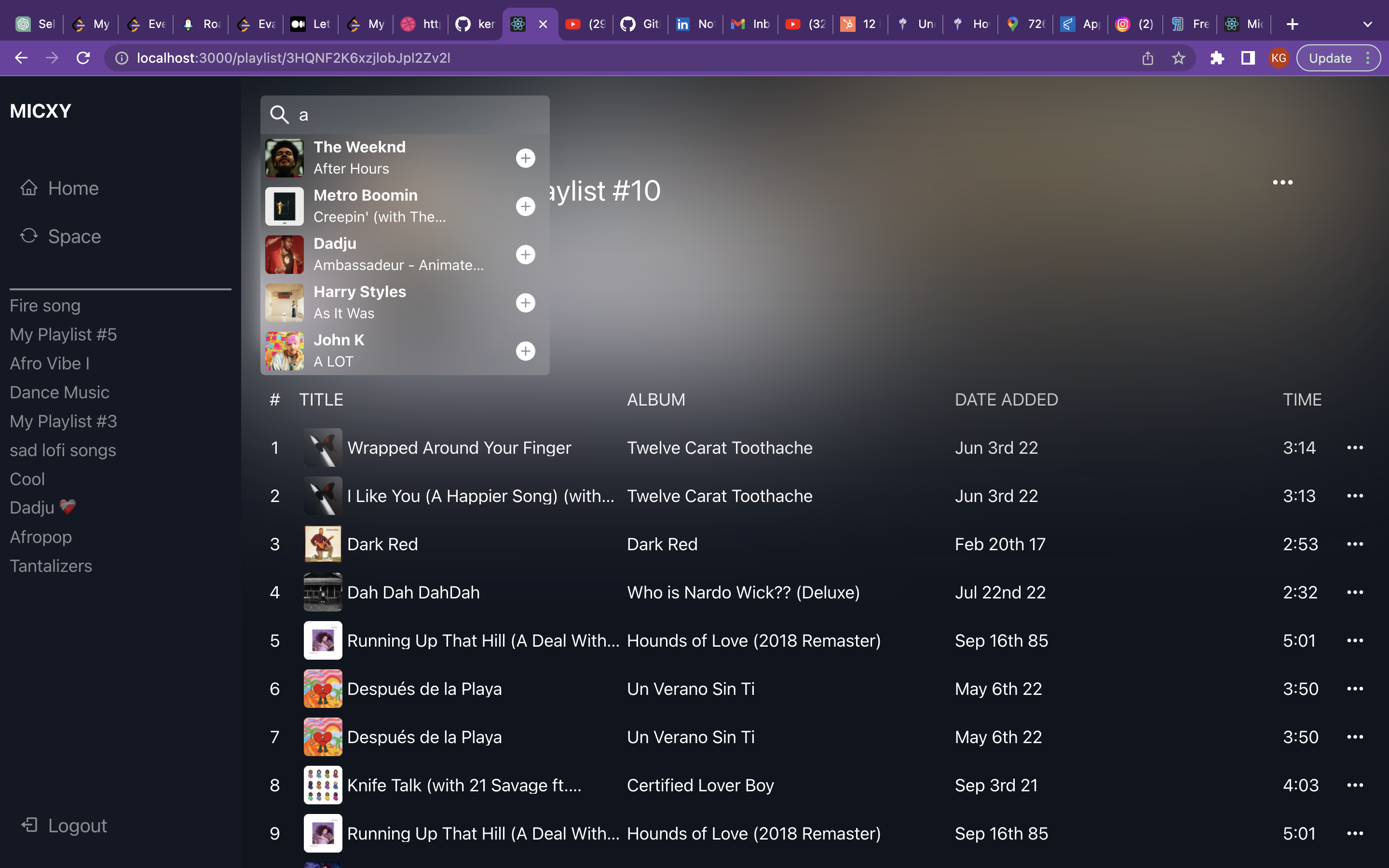
