Multimodal Features Representation Based Convolutional Neural Network

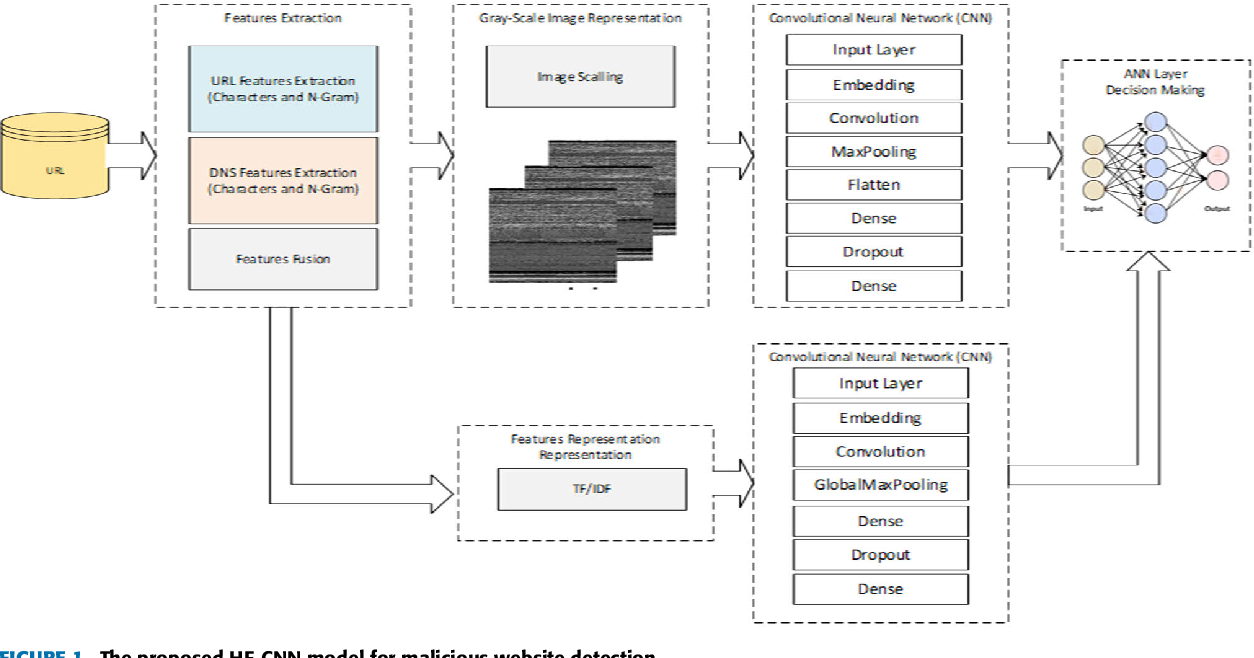

This study proposes a multimodal representation approach that fuses textual and image-based features to enhance the performance of malicious website detection. Two convolutional neural network (CNN) models were constructed to extract the hidden features from both the textual and the image-represented features. The output layers of both models were combined and used as input to an artificial neural network classifier for decision making.
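The two-branch design described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the study's implementation: the kernel counts, input sizes, random untrained weights, and the single sigmoid unit standing in for the artificial neural network classifier are all hypothetical choices made for readability.

```python
import math
import random

def conv1d_relu(seq, kernel):
    """Valid 1-D convolution (cross-correlation) followed by ReLU."""
    k = len(kernel)
    return [max(0.0, sum(seq[i + j] * kernel[j] for j in range(k)))
            for i in range(len(seq) - k + 1)]

def conv2d_relu(img, kernel):
    """Valid 2-D convolution (cross-correlation) followed by ReLU."""
    kh, kw = len(kernel), len(kernel[0])
    return [[max(0.0, sum(img[r + a][c + b] * kernel[a][b]
                          for a in range(kh) for b in range(kw)))
             for c in range(len(img[0]) - kw + 1)]
            for r in range(len(img) - kh + 1)]

def text_branch(seq, kernels):
    """Text CNN stand-in: one global-max-pooled feature per 1-D kernel."""
    return [max(conv1d_relu(seq, k)) for k in kernels]

def image_branch(img, kernels):
    """Image CNN stand-in: one global-max-pooled feature per 2-D kernel."""
    return [max(max(row) for row in conv2d_relu(img, k)) for k in kernels]

def fuse_and_classify(text_feats, image_feats, weights, bias):
    """Concatenate both branch outputs and score them with one sigmoid unit.

    The single unit is a placeholder for the ANN classifier (assumption).
    """
    fused = text_feats + image_feats  # list concatenation = feature fusion
    z = sum(w * x for w, x in zip(weights, fused)) + bias
    return fused, 1.0 / (1.0 + math.exp(-z))

# Hypothetical, untrained parameters: 4 text kernels, 2 image kernels.
rng = random.Random(0)
text_kernels = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(4)]
image_kernels = [[[rng.uniform(-1, 1) for _ in range(3)] for _ in range(3)]
                 for _ in range(2)]
weights = [rng.uniform(-1, 1) for _ in range(6)]

# Hypothetical inputs: an encoded text sequence and an 8x8 image representation.
text_input = [rng.random() for _ in range(20)]
image_input = [[rng.random() for _ in range(8)] for _ in range(8)]

fused, score = fuse_and_classify(text_branch(text_input, text_kernels),
                                 image_branch(image_input, image_kernels),
                                 weights, bias=0.0)
print(len(fused), round(score, 3))
```

The point of the sketch is the data flow: each branch reduces its own modality to a fixed-length feature vector, and only the concatenated vector reaches the classifier. In a trained system the kernels and classifier weights would be learned from labeled websites rather than drawn at random.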

The study also shows the effectiveness of the proposed model when compared to other models. By incorporating multiple feature sets and leveraging deep learning techniques, the system provides more accurate and reliable detection than traditional approaches. The model's ability to process various website characteristics simultaneously, combined with its adaptive learning capabilities, makes it particularly effective.

Related work on multimodal fusion takes other routes. One proposal is that multimodal training can be realized in a single network with modality-specific batch normalization layers (BNs), enabling implicit fusion via joint feature representation training. Another is a fused-modality-enhanced graph convolutional network for multimodal recommendation (FM-GCN). CNNs are capable of simultaneously capturing both local and global features. The results indicate that the proposed model outperforms the other related models.
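The single-network idea mentioned above, with modality-specific BNs, can be illustrated with a small sketch. It assumes that "BNs" refers to batch normalization layers and that all other weights are shared between modalities; the layer sizes, weight values, and names such as `bn_params` and `forward` are hypothetical and chosen only for illustration.

```python
import math

def batch_norm(batch, gamma, beta, eps=1e-5):
    """Normalize each feature column over the batch, then scale and shift."""
    n, dims = len(batch), len(batch[0])
    out = [[0.0] * dims for _ in range(n)]
    for d in range(dims):
        col = [row[d] for row in batch]
        mean = sum(col) / n
        var = sum((v - mean) ** 2 for v in col) / n
        for i in range(n):
            out[i][d] = gamma[d] * (col[i] - mean) / math.sqrt(var + eps) + beta[d]
    return out

def shared_linear(batch, W):
    """Linear layer whose weights W are shared by every modality."""
    return [[sum(w * x for w, x in zip(w_row, row)) for w_row in W]
            for row in batch]

# Shared weights: 3 input features -> 2 output features (hypothetical values).
W = [[0.5, -0.2, 0.1],
     [0.3, 0.8, -0.5]]

# Each modality keeps its own normalization parameters (hypothetical values).
bn_params = {
    "text": {"gamma": [1.0, 1.0], "beta": [0.0, 0.0]},
    "image": {"gamma": [0.5, 2.0], "beta": [0.1, -0.1]},
}

def forward(batch, modality):
    """Shared layer followed by the batch norm belonging to `modality`."""
    p = bn_params[modality]
    return batch_norm(shared_linear(batch, W), p["gamma"], p["beta"])

text_batch = [[1.0, 2.0, 3.0], [2.0, 0.0, 1.0], [0.0, 1.0, 4.0]]
image_batch = [[10.0, 20.0, 5.0], [30.0, 10.0, 0.0], [20.0, 40.0, 15.0]]

text_out = forward(text_batch, "text")
image_out = forward(image_batch, "image")
```

Both batches pass through the same weight matrix `W`, but each is normalized with its own statistics and its own scale and shift, so inputs on very different scales end up in comparable ranges before any later shared layers.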
