Multimodal AI Models and Modalities Deepgram

Models like Mistral, ImageBind, and LLaVA are making significant contributions to multimodal AI research, and this glossary explores their applications and performance benchmarks.

Unified multimodal modeling is an approach that designs machine learning architectures to jointly understand, generate, and reason over diverse data types. It employs techniques like discrete tokenization, fusion transformers, and unified latent spaces to achieve robust cross-modal alignment and effective modality fusion.
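To make the idea of a unified latent space concrete, here is a minimal sketch in plain Python. It assumes each modality already has its own feature vector and uses small, hand-picked projection matrices (`TEXT_TO_SHARED`, `IMAGE_TO_SHARED`) to map both into one shared space. All names and numbers are hypothetical; a real model would learn these projections from paired data.

```python
import math

def project(matrix, vector):
    """Apply a linear projection (given as a list of rows) to a feature vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def l2_normalize(vector):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector] if norm > 0 else list(vector)

def cosine_similarity(a, b):
    """Cosine similarity between two vectors of equal length."""
    a, b = l2_normalize(a), l2_normalize(b)
    return sum(x * y for x, y in zip(a, b))

# Hypothetical projection weights (assumption: hand-picked for illustration).
TEXT_TO_SHARED = [[1.0, 0.0, 0.0],
                  [0.0, 1.0, 0.0]]        # 3-d text features -> 2-d shared space
IMAGE_TO_SHARED = [[0.5, 0.5, 0.0, 0.0],
                   [0.0, 0.0, 0.5, 0.5]]  # 4-d image features -> 2-d shared space

def embed_text(features):
    return l2_normalize(project(TEXT_TO_SHARED, features))

def embed_image(features):
    return l2_normalize(project(IMAGE_TO_SHARED, features))

# Toy inputs: one caption and two candidate images.
text_vec = embed_text([0.9, 0.1, 0.3])
image_a = embed_image([1.0, 0.8, 0.1, 0.1])
image_b = embed_image([0.0, 0.2, 1.0, 0.8])

sim_a = cosine_similarity(text_vec, image_a)
sim_b = cosine_similarity(text_vec, image_b)
best_match = "image_a" if sim_a > sim_b else "image_b"
```

Once both modalities live in the same space, cross-modal alignment reduces to comparing vectors, which is why the caption can be matched against images directly.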

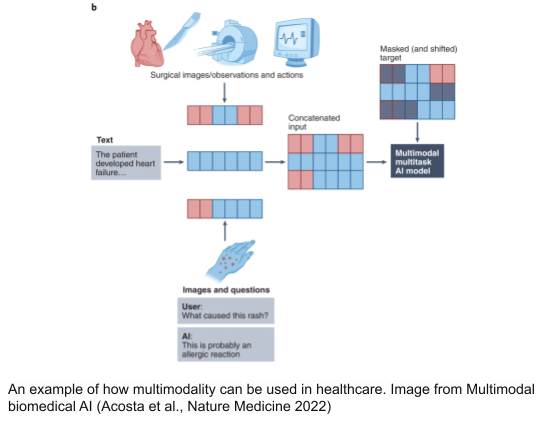

What does multimodal actually mean? The word “multimodal” simply refers to multiple modes, or types, of input and output. In the context of AI, a modality is a format of information: text, images, audio, video, code, documents, and so on. Traditional AI models were unimodal: a language model read and wrote text, and an image recognition model only looked at pictures. A speech recognition...

This guide covers the three most practically useful modalities beyond text: vision (analyzing images), text-to-speech (generating spoken audio), and speech-to-text (transcription).

How to build MCP tools for multimodal AI agents: a developer guide to building Model Context Protocol (MCP) servers that give AI agents perception over video, images, audio, and documents. It covers the MCP architecture, tool design patterns, and how to expose multimodal search and retrieval as agent-callable tools.

Multimodal deep learning is a machine learning subfield that aims to train AI models to process and find relationships between different types of data (modalities), typically images, video, audio, and text.
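The MCP idea mentioned above, exposing multimodal search as an agent-callable tool, can be sketched roughly as follows. This is a simplified illustration in plain Python, not the official MCP SDK and not a compliant server: it only mimics the JSON-RPC `tools/list` and `tools/call` request shapes and skips transport and initialization. The `register_tool` helper, the `search_media` tool, and the tiny in-memory corpus are all hypothetical.

```python
import json

# Hypothetical registry: tool name -> description, input schema, and handler.
TOOLS = {}

def register_tool(name, description, input_schema):
    """Decorator that records a function as a callable tool."""
    def decorator(func):
        TOOLS[name] = {
            "description": description,
            "inputSchema": input_schema,
            "handler": func,
        }
        return func
    return decorator

@register_tool(
    name="search_media",
    description="Search indexed video, image, audio, and document items by caption text.",
    input_schema={
        "type": "object",
        "properties": {
            "query": {"type": "string"},
            "modality": {"type": "string"},
        },
        "required": ["query"],
    },
)
def search_media(query, modality=None):
    # Placeholder corpus (assumption); a real tool would query a retrieval index.
    corpus = [
        {"id": "vid-1", "modality": "video", "caption": "product demo walkthrough"},
        {"id": "aud-1", "modality": "audio", "caption": "customer support call"},
        {"id": "img-1", "modality": "image", "caption": "product demo screenshot"},
    ]
    return [
        item for item in corpus
        if query.lower() in item["caption"]
        and (modality is None or item["modality"] == modality)
    ]

def handle_request(request):
    """Dispatch a JSON-RPC 2.0 style request to the tool registry."""
    request_id = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        result = {"tools": [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        params = request.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return {"jsonrpc": "2.0", "id": request_id,
                    "error": {"code": -32602, "message": "Unknown tool"}}
        output = tool["handler"](**params.get("arguments", {}))
        # Tool output is returned as a text content item holding JSON.
        result = {"content": [{"type": "text", "text": json.dumps(output)}]}
    else:
        return {"jsonrpc": "2.0", "id": request_id,
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": request_id, "result": result}
```

An agent would first send `tools/list` to discover `search_media` and its schema, then send `tools/call` with `{"name": "search_media", "arguments": {"query": "product demo", "modality": "image"}}` to retrieve matching items. Keeping the schema next to the handler is one design choice that helps the description the agent sees stay in sync with what the tool accepts.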

Gemini Embedding 2 is described by Google as its first fully multimodal embedding model, mapping text, images, video, audio, and documents into a single embedding space.

Vision language models (VLMs) have achieved remarkable progress in multimodal understanding, yet their positional encoding mechanisms remain suboptimal. Existing approaches uniformly assign positional indices to all tokens, overlooking variations in information density within and across modalities, which leads to inefficient attention allocation where redundant visual regions dominate while...

This review offers a comprehensive overview of the field, looking at the basics of modality integration, fusion methods (early, late, and hybrid), and some of the main architectural...

Traditional models operate on a single modality. Multimodal systems, however, learn joint embeddings across different data types: text, images, audio, and video.
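The difference between early and late fusion, two of the fusion methods named above, can be illustrated with a small sketch. It assumes toy text and audio feature vectors and simple logistic scorers with hand-picked weights; every name and number here is hypothetical. Hybrid fusion, which combines both strategies, is not shown.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def linear_score(weights, bias, features):
    """Weighted sum of features plus a bias term."""
    return sum(w * x for w, x in zip(weights, features)) + bias

def early_fusion(text_feats, audio_feats, weights, bias):
    """Early fusion: concatenate raw features, then apply one joint model."""
    fused = list(text_feats) + list(audio_feats)
    return sigmoid(linear_score(weights, bias, fused))

def late_fusion(text_feats, audio_feats, text_model, audio_model, text_weight=0.5):
    """Late fusion: score each modality separately, then blend the predictions."""
    p_text = sigmoid(linear_score(*text_model, text_feats))
    p_audio = sigmoid(linear_score(*audio_model, audio_feats))
    return text_weight * p_text + (1.0 - text_weight) * p_audio

# Toy inputs (assumption: 2-d features per modality).
text_feats = [1.0, 0.0]
audio_feats = [0.0, 1.0]

# One joint model over the 4-d concatenated vector.
p_early = early_fusion(text_feats, audio_feats, weights=[1.0, 1.0, 1.0, 1.0], bias=0.0)

# Two independent per-modality models, each given as (weights, bias).
p_late = late_fusion(
    text_feats, audio_feats,
    text_model=([2.0, 0.0], 0.0),
    audio_model=([0.0, -2.0], 0.0),
)
```

Early fusion lets a single model learn interactions between modalities but requires all inputs at once. Late fusion keeps the per-modality models independent, which makes it easier to swap one out or to handle a missing modality.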
