Multi Class Multi Label Classification Loss Function At Lilly Mackey Blog

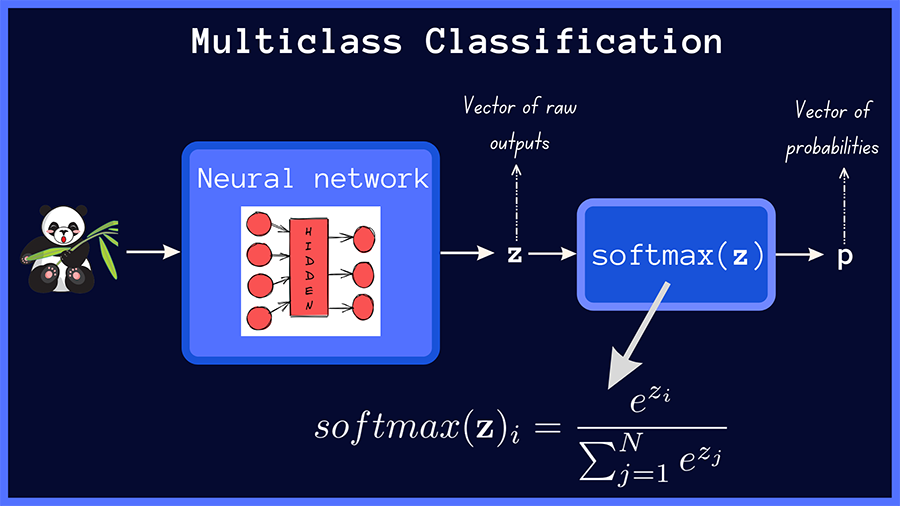

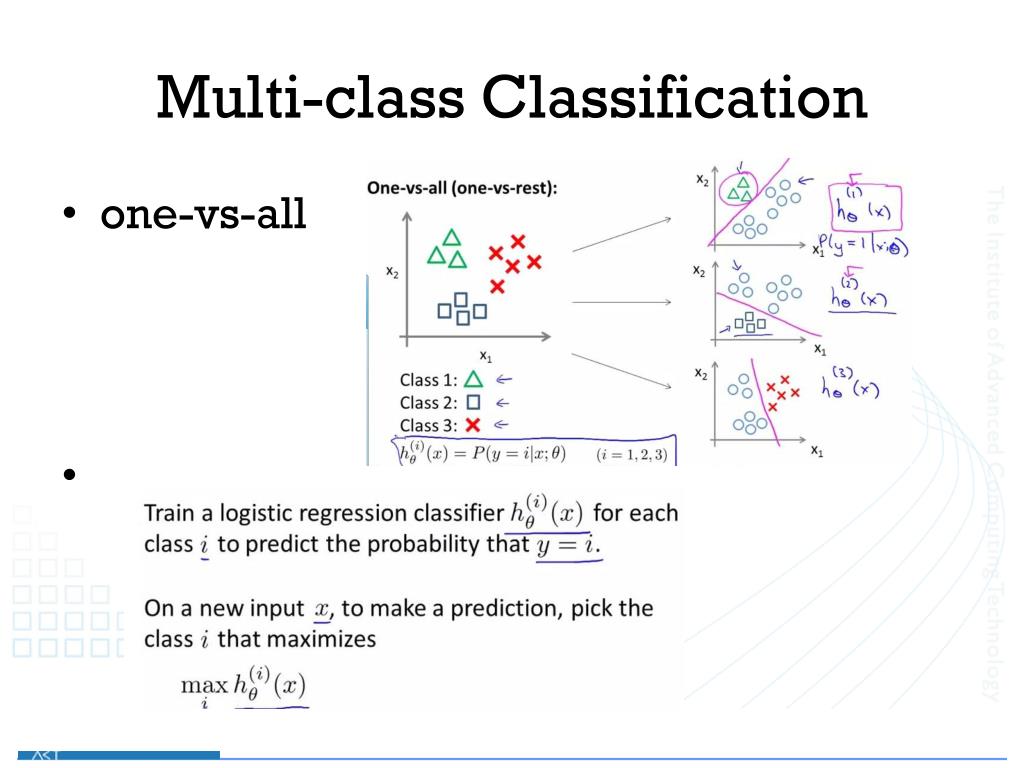

Whether a problem is multi-label or single-label determines which activation function to use in the final layer and which loss function to pair with it. For single-label problems, the standard choice is softmax with categorical cross entropy; for multi-label problems, switch to sigmoid activations with binary cross entropy. Are you tired of scratching your head over which loss function to use for multi-class classification? Well, fret no more! In this blog post, we will unravel the mystery behind loss functions and equip you with the knowledge to make informed decisions.
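To make the softmax-versus-sigmoid distinction concrete, here is a minimal sketch in plain Python. The function names and the example logits are made up for illustration; in PyTorch the corresponding built-ins would be `torch.nn.CrossEntropyLoss` and `torch.nn.BCEWithLogitsLoss`, both of which take raw logits.

```python
import math

def softmax(logits):
    """Turn logits into probabilities that sum to 1 (single-label case)."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sigmoid(z):
    """Independent per-class probability (multi-label case)."""
    return 1.0 / (1.0 + math.exp(-z))

def categorical_cross_entropy(logits, target_index):
    """Negative log-probability of the one correct class."""
    return -math.log(softmax(logits)[target_index])

def binary_cross_entropy(logits, targets):
    """Mean of per-class binary cross entropies; targets is a list of 0/1."""
    eps = 1e-12  # guards against log(0)
    losses = []
    for z, y in zip(logits, targets):
        p = sigmoid(z)
        losses.append(-(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)))
    return sum(losses) / len(losses)

# Hypothetical logits for a 3-class problem.
logits = [2.0, -1.0, 0.5]

# Single-label: exactly one class (index 0) is correct.
single_label_loss = categorical_cross_entropy(logits, target_index=0)

# Multi-label: classes 0 and 2 are both present.
multi_label_loss = binary_cross_entropy(logits, targets=[1, 0, 1])

print(f"categorical cross entropy: {single_label_loss:.4f}")
print(f"binary cross entropy:      {multi_label_loss:.4f}")
```

Softmax makes the classes compete for a fixed probability mass, while the sigmoids score each class independently, which is why the second form suits samples with several labels at once.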

Focal loss, originally introduced for object detection by Lin et al., has proven effective at addressing class imbalance. In this blog, we will explore the fundamental concepts of focal loss for multi-label classification in PyTorch, its usage, and common and best practices.

Choosing the right loss function for multi-label classification is crucial for model performance. BCE, focal loss, Hamming loss, Jaccard loss, and ZLPR each have unique strengths and ...

I'm training a neural network to classify a set of objects into n classes. Each object can belong to multiple classes at the same time (multi-class, multi-label).

Loss functions for multi-label classification:

- Focal loss: addresses class imbalance by focusing more on hard-to-classify examples.
- Class-balanced focal loss: a variation of focal loss that adjusts for class imbalance by weighting the loss based on the frequency of each class.
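As a rough illustration of how focal loss focuses on hard examples, the sketch below implements the sigmoid form of focal loss for a single multi-label sample in plain Python. The function name is hypothetical, and the defaults `gamma=2.0` and `alpha=0.25` are the values commonly quoted from Lin et al.; treat them as assumptions to tune for your own data.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def multilabel_focal_loss(logits, targets, gamma=2.0, alpha=0.25):
    """Mean sigmoid focal loss over the classes of one sample.

    gamma shrinks the loss of well-classified (easy) classes;
    alpha weights positive labels against negative ones.
    """
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for z, y in zip(logits, targets):
        p = sigmoid(z)
        p_t = p if y == 1 else 1.0 - p              # probability of the true label
        alpha_t = alpha if y == 1 else 1.0 - alpha  # class-side weight
        total += -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t + eps)
    return total / len(logits)

# One positive label predicted confidently (easy) versus wrongly (hard).
easy = multilabel_focal_loss([4.0], [1])
hard = multilabel_focal_loss([-4.0], [1])
print(f"easy example loss: {easy:.6f}")
print(f"hard example loss: {hard:.6f}")
```

With `gamma=0` the modulating factor disappears and the expression reduces to alpha-weighted binary cross entropy, which is a handy sanity check when experimenting.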

Here in this code we will train a neural network on the MNIST dataset using categorical cross entropy loss for multi-class classification. It allows predicting any test image and displays the probability of each class along with the predicted label. So, let's walk through it. We often deal with multi-class classification, where each sample belongs to only one class.

In this blog, we will train a multi-label classification model on an open source dataset collected by our team to prove that everyone can develop a better solution. Before starting the project, please make sure that you have installed the following packages:

In supervised learning, one doesn't need to backpropagate to labels. They are considered fixed ground truth, and only the weights need to be adjusted to match them.
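The point about labels being fixed can be shown with a tiny hand-written gradient step. This is only a sketch under simple assumptions: a single linear layer with sigmoid outputs, binary cross entropy, and made-up inputs. The labels `y` are read when computing the gradient but are never updated; only the weights change.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def bce_loss(weights, x, y):
    """Mean binary cross entropy for a linear layer followed by sigmoids."""
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for w_row, y_k in zip(weights, y):
        p = sigmoid(sum(w * x_i for w, x_i in zip(w_row, x)))
        total += -(y_k * math.log(p + eps) + (1 - y_k) * math.log(1 - p + eps))
    return total / len(y)

def gradient_step(weights, x, y, lr=0.5):
    """Return updated weights; the labels y are left untouched."""
    n = len(y)
    new_weights = []
    for w_row, y_k in zip(weights, y):
        p = sigmoid(sum(w * x_i for w, x_i in zip(w_row, x)))
        # For sigmoid + BCE, d(loss)/d(w_i) = (p - y_k) * x_i / n
        new_weights.append([w - lr * (p - y_k) * x_i / n for w, x_i in zip(w_row, x)])
    return new_weights

x = [1.0, -2.0]                      # hypothetical input features
y = [1, 0, 1]                        # fixed multi-label ground truth
weights = [[0.0, 0.0] for _ in y]    # one weight row per class

before = bce_loss(weights, x, y)
weights = gradient_step(weights, x, y)
after = bce_loss(weights, x, y)
print(f"loss before: {before:.4f}, loss after: {after:.4f}")
```

Frameworks such as PyTorch compute these gradients automatically, but the idea is the same: gradients flow to the parameters, not to the targets.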

