MPI HPC Wiki

The first MPI standard document was released in 1994. MPI has become the de facto standard for programming HPC cluster systems and is often the only option available. Many implementations exist, both open source and proprietary. MPI 3.1 was released in 2015, and MPI 4.0 followed in 2021. The original MPI effort involved about 80 people from 40 organizations, mainly in the United States and Europe. Most of the major vendors of concurrent computers took part, collaborating with researchers from universities, government laboratories, and industry.

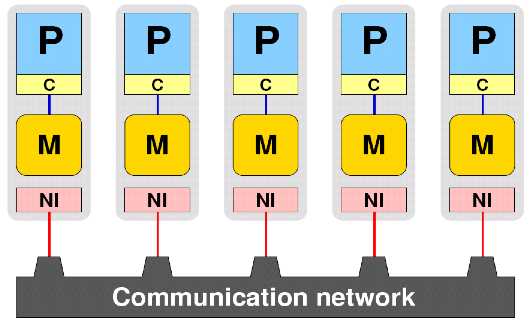

Message Passing Interface (MPI) is a popular standardized API for parallel processing, both within a node and across many nodes. When using MPI, each task in a Slurm job runs the program in its own separate process, and the processes communicate with each other through MPI (generally using an MPI library).

MPI is a specification for the developers and users of message-passing libraries. It addresses the message-passing parallel programming model: data is moved from the address space of one process to that of another process through cooperative operations on each process.

Several MPI implementations are available on the Atos HPCF: Mellanox HPC-X (Open MPI based), Intel MPI, and Open MPI (provided by Atos). They are not compatible with one another, so you should use only one of them to build your entire software stack. An MPI program can run on one or more nodes, although if your program is only ever intended to run on a single node you should consider OpenMP instead.

The MPI implementation is an important part of an HPC cluster: the vast majority of software that needs to communicate across nodes uses MPI. There are many possible options when configuring MPI for a particular set of hardware (the output of `./configure --help` for Open MPI is about 700 lines), so a very particular setup is needed for best performance. For optimal interconnect performance, use an RDMA interconnect such as InfiniBand. Compile and link with `mpicc` (C) or `mpic++` (C++), which add the Open MPI compiler options and libraries.

What to expect:

- Create a very simple MPI program
- Manage the compiling with a basic generic makefile
- Submit to Slurm
- Query status
- Review output
