Mosaic Memory

We show that LLMs memorize by assembling information from similar sequences, rather than only from sequences repeated exactly in the training data, a phenomenon we call mosaic memory.

We propose Mosaic Memory (MosaicMem), a hybrid spatial memory that lifts patches into 3D for reliable localization and targeted retrieval, while exploiting the model's native conditioning to preserve prompt-following generation.

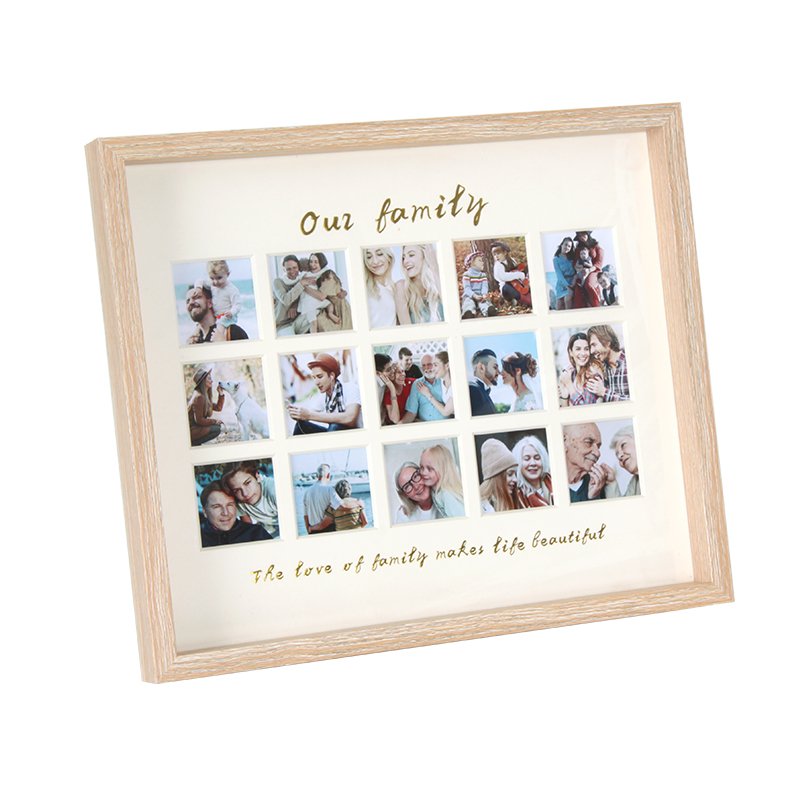

Memory mosaics are networks of associative memories working in concert to achieve a prediction task of interest. Like transformers, memory mosaics possess compositional and in-context learning capabilities.

In the LLM setting, we posit that LLMs exhibit a behavior we call mosaic memory, which we define as the model's ability to memorize an arbitrary sequence of tokens through exposure to its fuzzy duplicates: fragments of text similar to the original sequence with some tokens missing, replaced, or shuffled.
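The fuzzy duplicates described above (tokens missing, replaced, or shuffled) can be illustrated with a small sketch. This is not the procedure from the mosaic memory work; the function names `make_fuzzy_duplicate` and `jaccard_overlap`, the `<alt>` filler token, and whitespace tokenization are all assumptions made for illustration.

```python
import random


def make_fuzzy_duplicate(tokens, n_drop=0, n_replace=0, shuffle=False,
                         filler="<alt>", seed=0):
    """Return a perturbed copy of `tokens` (hypothetical helper).

    n_replace positions are overwritten with `filler`, then n_drop
    positions are removed, then the result is optionally shuffled.
    """
    rng = random.Random(seed)
    out = list(tokens)

    # Replaced tokens: overwrite randomly chosen positions.
    for i in rng.sample(range(len(out)), min(n_replace, len(out))):
        out[i] = filler

    # Missing tokens: remove randomly chosen positions.
    dropped = set(rng.sample(range(len(out)), min(n_drop, len(out))))
    out = [tok for i, tok in enumerate(out) if i not in dropped]

    # Shuffled tokens: permute what is left.
    if shuffle:
        rng.shuffle(out)
    return out


def jaccard_overlap(a, b):
    """Set-level token overlap between two sequences, in [0, 1]."""
    set_a, set_b = set(a), set(b)
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


if __name__ == "__main__":
    original = "the quick brown fox jumps over the lazy dog".split()
    variants = [
        {"n_drop": 2},
        {"n_replace": 2},
        {"shuffle": True},
    ]
    for kwargs in variants:
        dup = make_fuzzy_duplicate(original, **kwargs)
        print(kwargs, dup, round(jaccard_overlap(original, dup), 2))
```

Each variant stays close to the original at the token level while no longer being an exact copy, which is the kind of near-match that exact-duplicate filtering would miss.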

Memorization in LLMs is widely assumed to occur only as a result of sequences being repeated in the training data. Instead, we show that LLMs memorize by assembling information from similar sequences, a phenomenon we call mosaic memory. Large language models memorize far more than previously thought, by stitching together pieces of text like a mosaic, with real-world implications for privacy, confidentiality, model utility, and evaluation.

This tutorial demonstrates how to use Mosaic, a post-processing memory snapshot analysis tool for PyTorch. Mosaic helps analyze GPU memory usage in distributed deep learning, providing detailed insights into memory allocations, peak usage, and memory imbalances across parallel workers.

The document introduces memory mosaics, a novel architecture for associative memories that achieves prediction tasks with transparency and compositional capabilities, similar to transformers but with clearer internal mechanisms.
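To make the idea of an associative memory concrete, here is a minimal sketch of one memory unit that stores key/value pairs and retrieves a similarity-weighted average of the stored values. The softmax weighting over dot products, the `beta` sharpness parameter, and the averaging in `combine` are assumptions chosen for illustration; the source does not specify how memory mosaics implement or combine their memories.

```python
import math


class AssociativeMemory:
    """Toy key/value memory with softmax-weighted retrieval (illustrative)."""

    def __init__(self, beta=1.0):
        self.beta = beta      # assumed sharpness of the similarity weighting
        self.keys = []
        self.values = []

    def store(self, key, value):
        self.keys.append(list(key))
        self.values.append(list(value))

    def retrieve(self, query):
        if not self.keys:
            raise ValueError("memory is empty")
        # Similarity score: scaled dot product between query and each key.
        scores = [self.beta * sum(q * k for q, k in zip(query, key))
                  for key in self.keys]
        # Numerically stable softmax weights.
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        dim = len(self.values[0])
        # Weighted average of stored values.
        return [sum(w * v[d] for w, v in zip(weights, self.values)) / total
                for d in range(dim)]


def combine(memories, query):
    """Average the retrievals of several memories (hypothetical combination rule)."""
    outputs = [m.retrieve(query) for m in memories]
    dim = len(outputs[0])
    return [sum(o[d] for o in outputs) / len(outputs) for d in range(dim)]


if __name__ == "__main__":
    mem = AssociativeMemory(beta=5.0)
    mem.store([1.0, 0.0], [1.0])
    mem.store([0.0, 1.0], [2.0])
    print(mem.retrieve([1.0, 0.0]))   # close to the value stored under the first key
    print(combine([mem, mem], [0.0, 1.0]))
```

Because each retrieval is an explicit weighted average over stored pairs, the weights can be inspected directly, which is one way to read the claim about clearer internal mechanisms.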

