Modelscan PyPI

Modelscan is an open source project from Protect AI that scans models to determine whether they contain unsafe code. It is the first model scanning tool to support multiple model formats.

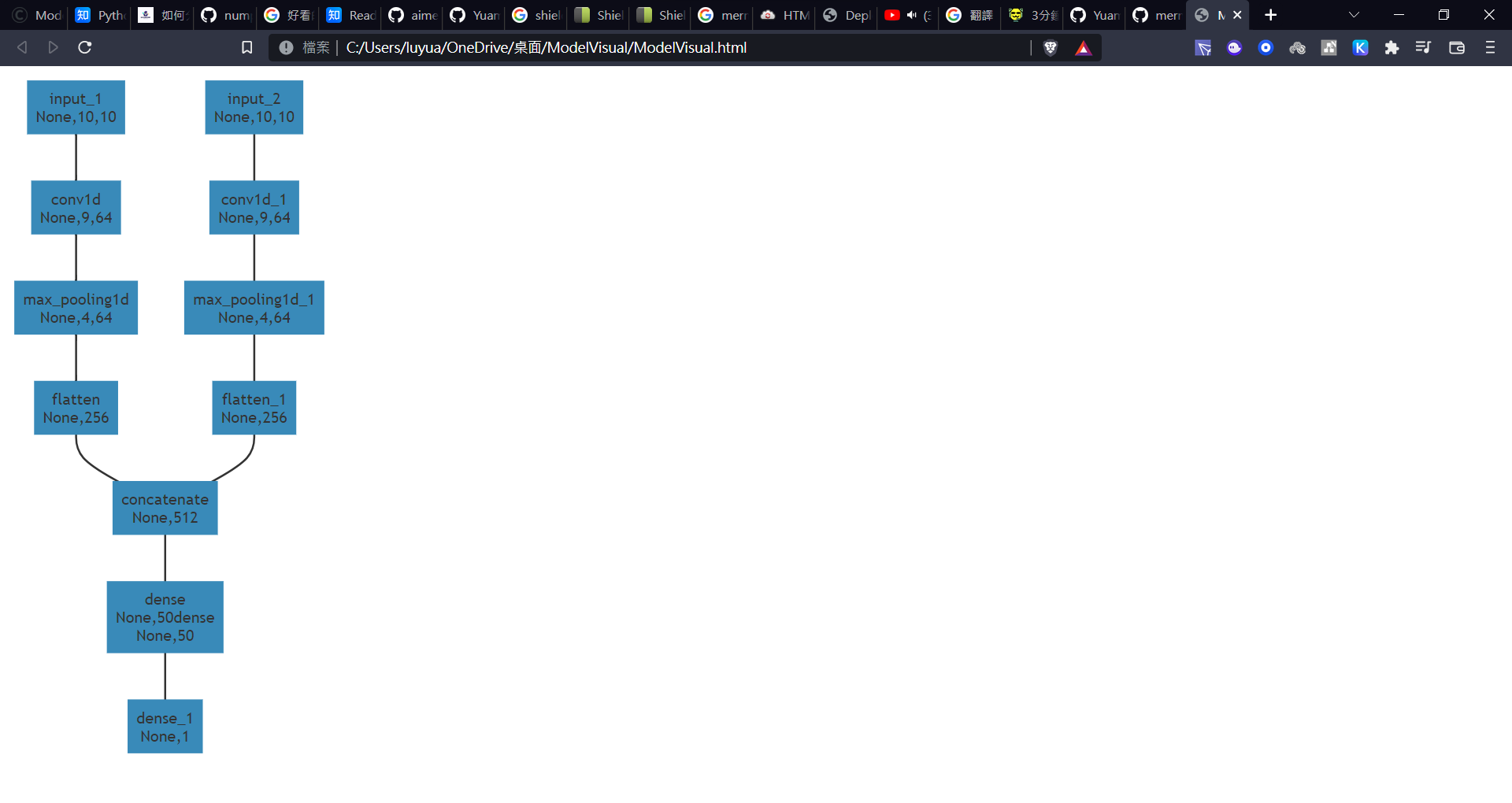

Modelscan can save results in JSON or other reporting formats, and can scan models from a variety of ML libraries, including PyTorch, TensorFlow, Keras, and classic ML libraries (sklearn, XGBoost, etc.). The supported formats include H5, pickle, and SavedModel. The modelscan package detects unsafe operations in model files across these serialization formats, and it can be used both as a command line tool and as a Python API. Version 0.8.7 is available as a package on PyPI.
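As a rough illustration of the command line usage, the sketch below calls the CLI from Python. It assumes the package was installed with `pip install modelscan` and that the `-p` option takes the path of the model file, as the project's README describes; confirm the options with `modelscan --help` for the installed version. The helper names `build_scan_command` and `run_scan` are hypothetical and not part of modelscan.

```python
import shutil
import subprocess
from typing import List, Optional


def build_scan_command(model_path: str) -> List[str]:
    # Assumption: "-p" is the path option of the modelscan CLI.
    # Verify with `modelscan --help` before relying on it.
    return ["modelscan", "-p", model_path]


def run_scan(model_path: str) -> Optional[subprocess.CompletedProcess]:
    # Return None when the CLI is not installed instead of raising.
    if shutil.which("modelscan") is None:
        return None
    # check=False: the exit code is left for the caller to interpret.
    return subprocess.run(
        build_scan_command(model_path),
        capture_output=True,
        text=True,
        check=False,
    )
```

Calling `run_scan("model.pkl")` returns the completed process, whose `stdout` holds the scan report, or `None` if the `modelscan` executable is not on the `PATH`.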

Modelscan provides protection against model serialization attacks. At present, it supports any pickle derived format and many others; the project documents its coverage by ML library, API, and serialization format. Development takes place in the protectai/modelscan repository on GitHub.
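To show why pickle derived formats need scanning, here is a minimal sketch that inspects a pickle byte stream for imports of risky modules without ever loading it. It uses only the standard library `pickletools` module and is not modelscan's implementation; the module list, the `Payload` class, and the `find_suspicious_imports` helper are illustrative assumptions.

```python
import os
import pickle
import pickletools

# Illustrative, incomplete list of modules that can run arbitrary code.
SUSPICIOUS_MODULES = {"os", "posix", "nt", "subprocess", "builtins", "sys"}


class Payload:
    # Unpickling this object would call os.system; we never unpickle it.
    def __reduce__(self):
        return (os.system, ("echo unsafe",))


def find_suspicious_imports(data: bytes):
    """Return (module, name) pairs that the pickle stream would import."""
    findings = []
    strings = []  # string arguments seen so far, in order
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name == "GLOBAL":
            # Older protocols: arg is "module name" in one string.
            module, name = arg.split(" ", 1)
        elif opcode.name == "STACK_GLOBAL" and len(strings) >= 2:
            # Protocol 4+: module and name are the two latest strings.
            # Simplification: strings fetched from the memo are ignored.
            module, name = strings[-2], strings[-1]
        else:
            if isinstance(arg, str):
                strings.append(arg)
            continue
        if module.split(".")[0] in SUSPICIOUS_MODULES:
            findings.append((module, name))
    return findings


malicious = pickle.dumps(Payload())  # serializing does not run the payload
benign = pickle.dumps({"weights": [0.1, 0.2, 0.3]})
```

Here `find_suspicious_imports(malicious)` reports the import of `system` from the platform's `os` implementation module, while `find_suspicious_imports(benign)` returns an empty list. A real scanner has to cover many more opcodes, modules, and container formats than this sketch does.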
