Model Selection In Multiple Regression

The task of identifying the best subset of predictors to include in a multiple regression model, among all possible subsets of predictors, is referred to as variable selection. Two common strategies for adding or removing variables in a multiple regression model are backward elimination and forward selection. These techniques are often referred to as stepwise model selection strategies, because they add or delete one variable at a time as they “step” through the candidate predictors.
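Forward selection as described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: it assumes adjusted R² as the criterion for accepting a variable (the text does not fix one), and the helper names `rss_of_fit`, `adjusted_r2`, and `forward_selection`, as well as the toy data, are hypothetical.

```python
def rss_of_fit(X, y):
    """Residual sum of squares of a least-squares fit of y on X plus an intercept."""
    rows = [[1.0] + list(r) for r in X]
    p = len(rows[0])
    # Augmented normal equations [X'X | X'y].
    M = [[sum(r[i] * r[j] for r in rows) for j in range(p)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))] for i in range(p)]
    # Gaussian elimination with partial pivoting, then back substitution.
    for c in range(p):
        piv = max(range(c, p), key=lambda k: abs(M[k][c]))
        M[c], M[piv] = M[piv], M[c]
        for k in range(c + 1, p):
            f = M[k][c] / M[c][c]
            M[k] = [a - f * b for a, b in zip(M[k], M[c])]
    beta = [0.0] * p
    for i in reversed(range(p)):
        beta[i] = (M[i][p] - sum(M[i][k] * beta[k] for k in range(i + 1, p))) / M[i][i]
    return sum((yi - sum(b * v for b, v in zip(beta, r))) ** 2 for r, yi in zip(rows, y))


def adjusted_r2(columns, y, chosen):
    """Adjusted R^2 of the model that uses the predictors named in `chosen`."""
    n = len(y)
    X = [[columns[name][i] for name in chosen] for i in range(n)]
    mean_y = sum(y) / n
    tss = sum((v - mean_y) ** 2 for v in y)
    return 1 - (rss_of_fit(X, y) / (n - len(chosen) - 1)) / (tss / (n - 1))


def forward_selection(columns, y):
    """Start from the intercept-only model; add one predictor per step while the score improves."""
    chosen, best = [], adjusted_r2(columns, y, [])
    while len(chosen) < len(columns):
        scores = {c: adjusted_r2(columns, y, chosen + [c]) for c in columns if c not in chosen}
        top = max(scores, key=scores.get)
        if scores[top] <= best:  # no candidate improves the criterion: stop
            break
        chosen.append(top)
        best = scores[top]
    return chosen, best


# Toy data (made up for illustration): y depends on x1 only.
columns = {"x1": [1, 2, 3, 4, 5, 6, 7, 8], "x2": [3, 1, 4, 1, 5, 9, 2, 6]}
noise = [0.1, -0.1, 0.05, -0.05, 0.1, -0.1, 0.05, -0.05]
y = [2 * a + 1 + e for a, e in zip(columns["x1"], noise)]
print(forward_selection(columns, y))
```

The solver uses the normal equations for brevity; with many or nearly collinear predictors, a statistics package with a numerically stabler least-squares routine would be the safer choice.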

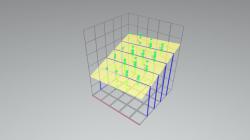

Additionally, this work explores the application of a physics-based model from the manufacturing literature to serve as the basis for a transformation, providing real-world contextualization of important topics in regression modeling (e.g., transformations, variable selection, interpretability). We will study several automated methods for model selection. Given a specific criterion for selecting a model, Stata gives the best predictors. Before applying any of the methods, you should plot y against each predictor x1, x2, ..., xk to see whether transformations are needed. The purpose of variable selection in regression is to identify the best subset of predictors among many variables to include in a model. I used several of the model selection procedures to select predictors. The model selection criteria point to a more careful analysis of the model with ts and cod as predictors.
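The text does not say which selection criteria were used. As an assumption, three widely used ones for a linear model with k predictors and n observations are written out here, where RSS is the residual sum of squares and TSS the total sum of squares. AIC and BIC are given for Gaussian errors and only up to an additive constant.

```latex
\begin{align*}
R^2_{\text{adj}} &= 1 - \frac{\mathrm{RSS}/(n-k-1)}{\mathrm{TSS}/(n-1)} \\
\mathrm{AIC} &= n \ln\!\left(\frac{\mathrm{RSS}}{n}\right) + 2(k+1) \\
\mathrm{BIC} &= n \ln\!\left(\frac{\mathrm{RSS}}{n}\right) + (k+1)\ln n
\end{align*}
```

A larger adjusted R² or a smaller AIC or BIC indicates the preferred model. BIC penalizes each extra predictor more heavily than AIC whenever ln n > 2, that is, for n of 8 or more.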

The process for choosing a model involves several procedures (variable selection, verifying assumptions, variable transformation, etc.), but the order of the procedures is not always the same, and the analyst should be alert for unexpected structure in the data. Backward selection: starting from the full model, eliminate variables one at a time, choosing the one with the largest p-value at each step. Mixed selection: starting from some model, include variables one at a time, minimizing the RSS at each step, and remove any variable whose p-value rises above a chosen threshold. A model selection procedure (more on the method later) will remove one of the variables and consequently overestimate the effect size (the estimate is too high, and the true causal value falls outside the 95% CI). It illustrates the use of indicator variables, as well as variable selection. It shows an example of a regression prediction, illustrating the point that it can be destructive to make predictions using all available independent variables.
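The backward selection rule above can be sketched as follows. Within a single fit every coefficient shares the same residual degrees of freedom, so the variable with the largest two-sided p-value is the one with the smallest |t|; the sketch therefore ranks by |t| and avoids needing a t-distribution routine. The stopping threshold `t_min=2.0` (roughly p < 0.05 for moderate sample sizes), the helper names, and the toy data are all assumptions made for illustration.

```python
def invert(A):
    """Invert a small square matrix by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    M = [[float(v) for v in row] + [float(i == j) for j in range(n)]
         for i, row in enumerate(A)]
    for c in range(n):
        piv = max(range(c, n), key=lambda k: abs(M[k][c]))
        M[c], M[piv] = M[piv], M[c]
        d = M[c][c]
        M[c] = [v / d for v in M[c]]
        for k in range(n):
            if k != c:
                f = M[k][c]
                M[k] = [a - f * b for a, b in zip(M[k], M[c])]
    return [row[n:] for row in M]


def t_statistics(columns, y, names):
    """t statistic of each named predictor in a least-squares fit with an intercept."""
    n = len(y)
    rows = [[1.0] + [columns[k][i] for k in names] for i in range(n)]
    p = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    inv = invert(xtx)
    beta = [sum(inv[i][j] * xty[j] for j in range(p)) for i in range(p)]
    rss = sum((yi - sum(b * v for b, v in zip(beta, r))) ** 2 for r, yi in zip(rows, y))
    sigma2 = rss / (n - p)  # residual variance estimate
    # Standard error of coefficient i is sqrt(sigma2 * inv[i][i]); index 0 is the intercept.
    return {k: beta[i + 1] / (sigma2 * inv[i + 1][i + 1]) ** 0.5
            for i, k in enumerate(names)}


def backward_elimination(columns, y, t_min=2.0):
    """Start from the full model; drop the weakest predictor until all have |t| >= t_min."""
    names = list(columns)
    while names:
        t = t_statistics(columns, y, names)
        weakest = min(names, key=lambda k: abs(t[k]))  # smallest |t| = largest p-value
        if abs(t[weakest]) >= t_min:
            break
        names.remove(weakest)
    return names


# Toy data (made up for illustration): y depends on x1 only.
columns = {"x1": [1, 2, 3, 4, 5, 6, 7, 8], "x2": [3, 1, 4, 1, 5, 9, 2, 6]}
noise = [0.1, -0.1, 0.05, -0.05, 0.1, -0.1, 0.05, -0.05]
y = [2 * a + 1 + e for a, e in zip(columns["x1"], noise)]
print(backward_elimination(columns, y))
```

A mixed procedure would combine this removal step with an inclusion step after each change to the model.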
