ML Experiment Tracking

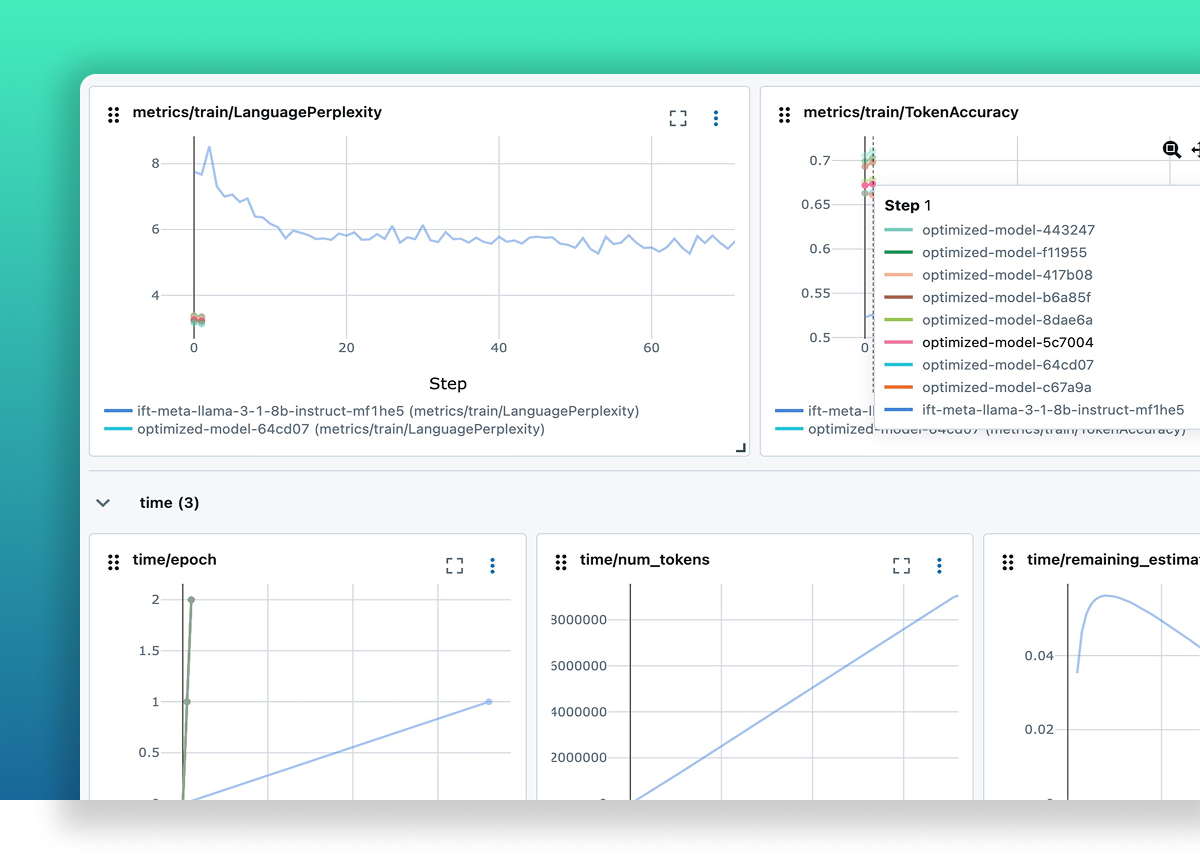

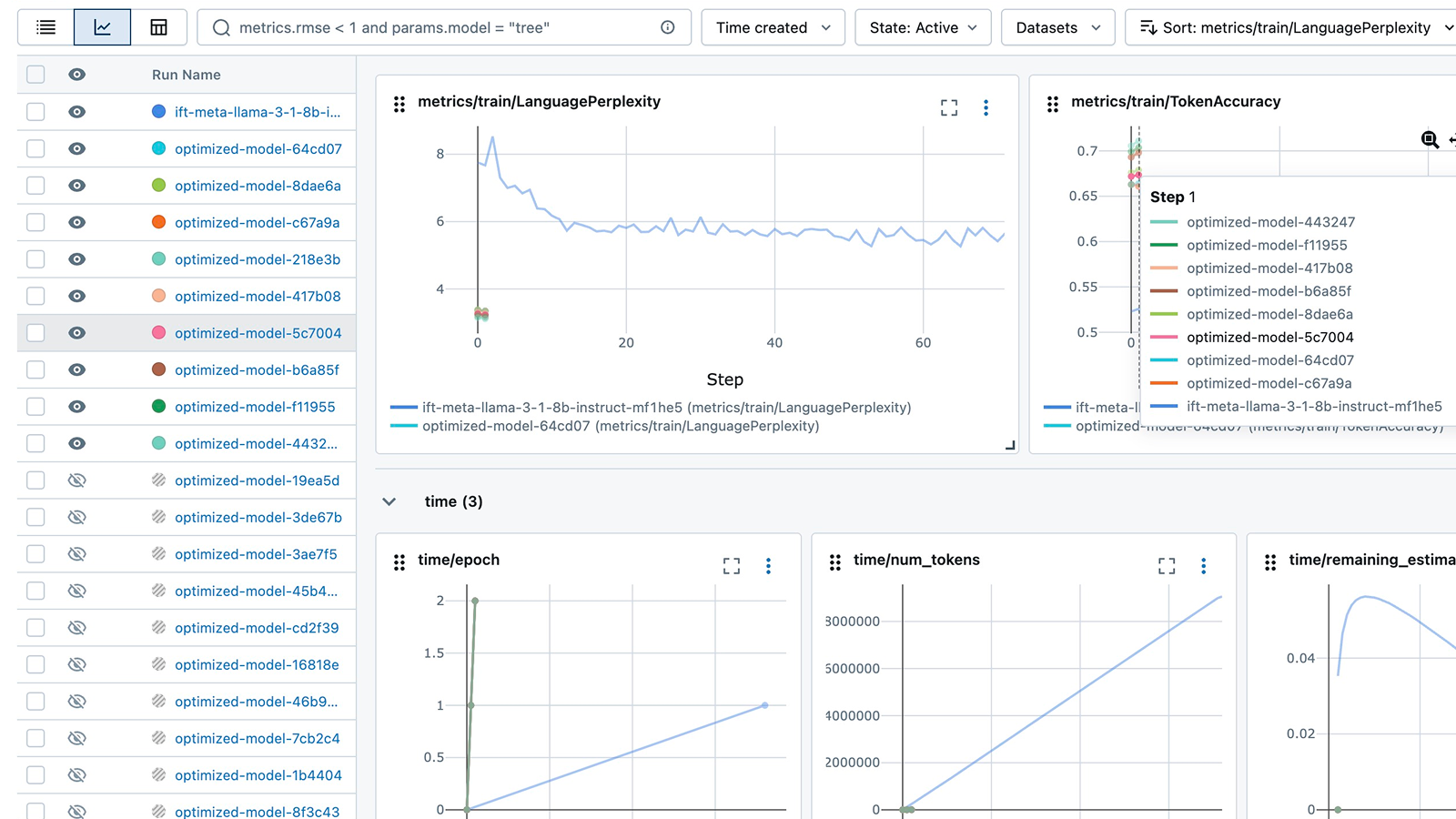

In this post, we look at the top 7 ML experiment tracking tools that are user-friendly, come with a lightweight API, and have an interactive dashboard for viewing and managing experiments. With these tools you can log parameters, metrics, and artifacts for ML experiments, compare runs, visualize results, and reproduce models.
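To make the log-and-compare workflow concrete, here is a minimal sketch using only the Python standard library. It is not the API of any tool covered in this post: `RunLogger`, `load_runs`, and `best_run` are hypothetical names, the on-disk layout (one directory per run with a `run.json` file) is an assumption, and the loss values are placeholders rather than real training results.

```python
import json
import tempfile
import time
import uuid
from pathlib import Path


class RunLogger:
    """Hypothetical minimal tracker: one directory per run, summarized in run.json."""

    def __init__(self, root, experiment):
        self.run_id = uuid.uuid4().hex[:8]
        self.dir = Path(root) / experiment / self.run_id
        (self.dir / "artifacts").mkdir(parents=True)
        self.params = {}
        self.metrics = {}  # metric name -> list of [step, value] pairs

    def log_param(self, key, value):
        self.params[key] = value

    def log_metric(self, key, value, step):
        self.metrics.setdefault(key, []).append([step, value])

    def log_artifact(self, name, text):
        # Store any output file (notes, plots, model weights) next to the run.
        path = self.dir / "artifacts" / name
        path.write_text(text)
        return path

    def finish(self):
        record = {
            "run_id": self.run_id,
            "finished_at": time.time(),
            "params": self.params,
            "metrics": self.metrics,
        }
        path = self.dir / "run.json"
        path.write_text(json.dumps(record, indent=2))
        return path


def load_runs(root, experiment):
    """Read back every finished run of an experiment."""
    run_files = sorted((Path(root) / experiment).glob("*/run.json"))
    return [json.loads(p.read_text()) for p in run_files]


def best_run(runs, metric):
    """Compare runs by the last logged value of a metric (lower is better)."""
    return min(runs, key=lambda r: r["metrics"][metric][-1][1])


# Usage: two runs that differ only in learning rate.
root = tempfile.mkdtemp()
for lr in (0.1, 0.01):
    run = RunLogger(root, "demo")
    run.log_param("learning_rate", lr)
    for step in range(3):
        # Placeholder "loss" so the example runs without a real model.
        run.log_metric("loss", 1.0 / (1 + step) + lr, step)
    run.log_artifact("notes.txt", f"trained with lr={lr}")
    run.finish()

winner = best_run(load_runs(root, "demo"), "loss")
print(winner["run_id"], winner["params"])
```

Dedicated tracking tools add what this sketch leaves out, such as a dashboard, remote storage, and concurrent access, but the underlying record is much the same: parameters in, metrics and artifacts out, all keyed by a run identifier.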

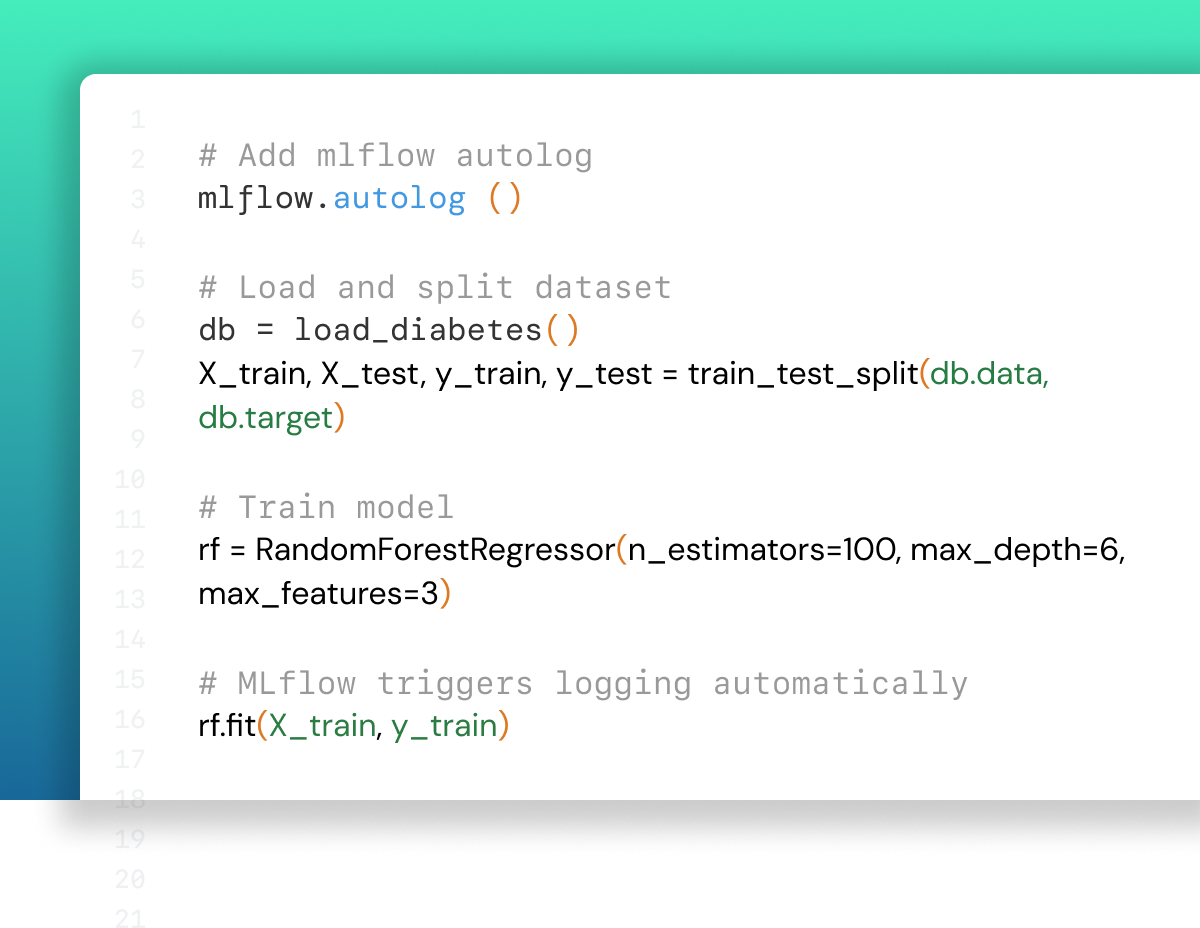

A curated list of open source tools and commercial products for ML experiment tracking and management includes Aim, an easy-to-use and performant open source experiment tracker, and Comet, which manages and optimizes the entire ML lifecycle, from experiment tracking to model production monitoring. Such tools offer automatic experiment tracking, along with features to version datasets, debug and reproduce models, visualize performance, and collaborate with teammates.

ML experiment tracking is the process of recording, organizing, and analyzing the results of ML experiments. It helps data scientists keep track of their experiments, reproduce their results, and collaborate with others effectively. A complete MLflow reference guide covers essential commands, examples, and best practices for ML experiment tracking and model management.

In this article, I provide a detailed explanation of ML experiment tracking: logging and tracking everything important about your ML experiments so you don't lose your mind trying to remember what you did. From definition to implementation to tools, this guide offers a complete rundown on experiment tracking in machine learning. Experiment tracking, or experiment logging, is a key aspect of MLOps.

So far, we've been training and evaluating our different baselines but haven't really been tracking these experiments. We'll fix this by defining a proper process for experiment tracking, which we'll use for all future experiments (including hyperparameter optimization). Experiment tracking is the practice of systematically recording every detail of a training run (hyperparameters, metrics, code version, data version, and the resulting model artifact) so you can reproduce, compare, and audit your work.
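The five items a run should record can be sketched as a single record type. This is an illustrative sketch under stated assumptions, not a standard schema: `RunRecord`, `fingerprint`, and `same_setup` are hypothetical names, the code version is passed in as a plain string (in practice it might be a commit hash), data and model versions are approximated by content hashes, and the accuracy numbers are made-up placeholders.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def fingerprint(payload: bytes) -> str:
    """Short content hash used here as a stand-in for a data or model version."""
    return hashlib.sha256(payload).hexdigest()[:12]


@dataclass(frozen=True)
class RunRecord:
    hyperparameters: dict
    metrics: dict
    code_version: str     # assumption: e.g. a commit hash supplied by the caller
    data_version: str     # hash of the training data
    artifact_digest: str  # hash of the resulting model file

    def config_key(self) -> str:
        """Hash of everything that should determine the result, not the result itself."""
        blob = json.dumps(
            {
                "hp": self.hyperparameters,
                "code": self.code_version,
                "data": self.data_version,
            },
            sort_keys=True,  # make the key independent of dict ordering
        )
        return fingerprint(blob.encode())


def same_setup(a: RunRecord, b: RunRecord) -> bool:
    """True when two runs used identical hyperparameters, code, and data."""
    return a.config_key() == b.config_key()


# Usage with stand-in bytes for the dataset and model file.
train_csv = b"x,y\n1,2\n3,4\n"
model_bytes = b"weights-v1"

first = RunRecord({"lr": 0.01, "epochs": 5}, {"accuracy": 0.91},
                  "abc1234", fingerprint(train_csv), fingerprint(model_bytes))
rerun = RunRecord({"epochs": 5, "lr": 0.01}, {"accuracy": 0.91},
                  "abc1234", fingerprint(train_csv), fingerprint(model_bytes))
changed = RunRecord({"lr": 0.01, "epochs": 5}, {"accuracy": 0.88},
                    "abc1234", fingerprint(train_csv + b"5,6\n"), fingerprint(b"weights-v2"))

print(same_setup(first, rerun))    # same hyperparameters, code, and data
print(same_setup(first, changed))  # the data changed, so the setups differ
print(json.dumps(asdict(first), indent=2))
```

Separating the configuration key from the metrics supports auditing: if two runs share a key but report different metrics, the difference comes from something that was not recorded, such as a random seed or a library version.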
