Measuring GPU Compute Performance on Imagination GPUs

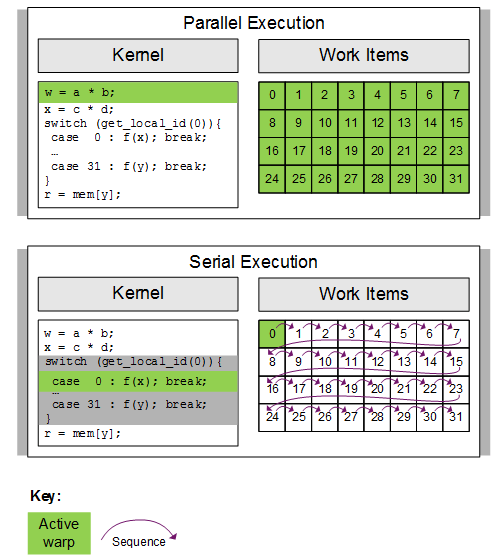

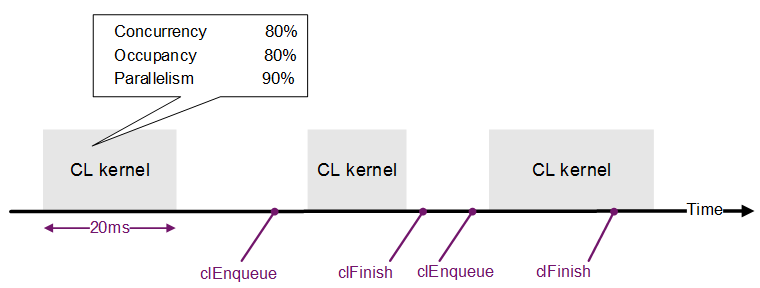

A guide to help developers measure the GPU compute performance of their OpenCL kernels on the PowerVR Rogue architecture. We propose a methodology to profile the resource interference of GPU kernels across the shared resources they contend for. We also discuss how to build GPU schedulers that provide strict performance guarantees while colocating applications to minimize cost.
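
A minimal sketch of the interference-profiling idea follows. It assumes each kernel's runtime has already been measured twice: once in isolation and once while colocated with another application. One way to obtain these timings is from OpenCL event profiling (`CL_PROFILING_COMMAND_START` and `CL_PROFILING_COMMAND_END`, which report device time in nanoseconds on a queue created with `CL_QUEUE_PROFILING_ENABLE`). The class and function names, the timestamps, and the 1.2× slowdown bound are all hypothetical. They show one possible admission rule, not the scheduler described above.

```python
from dataclasses import dataclass


@dataclass
class KernelTiming:
    """Device timestamps in nanoseconds, e.g. as read from an OpenCL profiling event."""
    start_ns: int
    end_ns: int

    @property
    def elapsed_ns(self) -> int:
        return self.end_ns - self.start_ns


def slowdown(isolated: KernelTiming, colocated: KernelTiming) -> float:
    """Colocated runtime divided by isolated runtime; 1.0 means no measurable interference."""
    return colocated.elapsed_ns / isolated.elapsed_ns


def can_colocate(isolated: KernelTiming, colocated: KernelTiming,
                 max_slowdown: float = 1.2) -> bool:
    """Hypothetical admission rule: colocate only if the slowdown stays within the bound."""
    return slowdown(isolated, colocated) <= max_slowdown


if __name__ == "__main__":
    alone = KernelTiming(start_ns=1_000_000, end_ns=6_000_000)   # 5.0 ms in isolation
    shared = KernelTiming(start_ns=2_000_000, end_ns=7_500_000)  # 5.5 ms next to another app
    print(f"slowdown: {slowdown(alone, shared):.2f}x")  # slowdown: 1.10x
    print("colocate:", can_colocate(alone, shared))     # colocate: True
```

In practice, each timing would be averaged over many launches, because a single kernel run is noisy.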

GPU compute performance estimation provides the mathematical foundation behind AI hardware benchmarks. When evaluating GPUs for AI workloads, the performance numbers can seem like magic, and the performance of vector code running on a GPU is more difficult to quantify. This technical article is published by an Embedded Vision Alliance member company.

Top 5 GPU performance metrics for deep learning success in AI data centers: use cloud GPUs for AI workloads and optimize them for deep learning efficiency. Whether you're gaming, developing VR applications, or running AI models in the cloud, these performance indicators will guide you in choosing the right GPU for your needs.
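
The arithmetic behind headline compute figures is simple to sketch. Peak FP32 throughput is commonly estimated as ALU count × clock frequency × FLOPs per ALU per cycle, where a fused multiply-add counts as two FLOPs. Real kernels rarely reach that peak. The sketch below therefore also applies the roofline model, a standard technique that this article does not itself name, to bound attainable throughput by memory bandwidth × arithmetic intensity. All device figures are hypothetical placeholders, not specifications of any PowerVR GPU.

```python
def peak_gflops(alus: int, clock_ghz: float, flops_per_alu_per_cycle: int = 2) -> float:
    """Theoretical peak in GFLOP/s; the default of 2 assumes one FMA per ALU per cycle."""
    return alus * clock_ghz * flops_per_alu_per_cycle


def attainable_gflops(peak: float, bandwidth_gbs: float, flops_per_byte: float) -> float:
    """Roofline bound: a kernel is limited by compute or by memory traffic, whichever is lower."""
    return min(peak, bandwidth_gbs * flops_per_byte)


if __name__ == "__main__":
    # Hypothetical device: 128 FP32 ALUs at 0.8 GHz with 25.6 GB/s of memory bandwidth.
    peak = peak_gflops(alus=128, clock_ghz=0.8)
    print(f"peak: {peak:.1f} GFLOP/s")  # peak: 204.8 GFLOP/s
    # FP32 vector add (c = a + b): 1 FLOP per 12 bytes moved (two loads, one store).
    print(f"vector add: {attainable_gflops(peak, 25.6, 1 / 12):.2f} GFLOP/s")  # 2.13
    # A kernel doing 16 FLOPs per byte is compute-bound and capped at the peak.
    print(f"high-intensity kernel: {attainable_gflops(peak, 25.6, 16.0):.1f} GFLOP/s")  # 204.8
```

The vector-add line illustrates why vector code is hard to judge by peak numbers alone. It is memory-bound, so its ceiling is set by bandwidth rather than by ALU count.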

Monitoring the right GPU performance metrics can go a long way in helping you train and deploy deep learning applications. Of the top five metrics worth monitoring, the first is GPU utilization, one of the primary metrics to observe during a deep learning training session.

Providing predictable performance guarantees requires a deep understanding of how applications contend for shared GPU resources such as block schedulers, compute units, L1/L2 caches, and …

When you're using GPUs for model inference, you want the most performance per dollar possible. Understanding utilization is key here: high GPU utilization means fewer GPUs are needed to serve high-traffic workloads. Optimise compute tasks on Imagination GPUs with our top tips for high performance and efficiency, leveraging the latest developer documentation and tools.
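
The sketch below makes the utilization argument concrete. It estimates utilization as the fraction of sampled intervals in which the GPU was busy, then sizes an inference deployment from that figure. The sampling approach, the function names, and the request rates are hypothetical. The underlying point is general: capacity planning divides required throughput by what each GPU actually sustains, so low utilization translates directly into extra GPUs.

```python
import math
from typing import Sequence


def utilization(busy_samples: Sequence[bool]) -> float:
    """Fraction of sampling intervals in which the GPU was executing work."""
    if not busy_samples:
        raise ValueError("need at least one sample")
    return sum(busy_samples) / len(busy_samples)


def gpus_needed(target_rps: float, peak_rps_per_gpu: float, util: float) -> int:
    """GPUs required when each one sustains only `util` of its peak request rate."""
    if not 0 < util <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return math.ceil(target_rps / (peak_rps_per_gpu * util))


if __name__ == "__main__":
    samples = [True, True, False, True, False, True, True, True, False, True]
    u = utilization(samples)
    print(f"utilization: {u:.0%}")  # utilization: 70%
    # Hypothetical workload: 5,000 requests/s, 1,000 requests/s per GPU at full load.
    print("at 70% utilization:", gpus_needed(5000, 1000, u))     # 8
    print("at 95% utilization:", gpus_needed(5000, 1000, 0.95))  # 6
```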

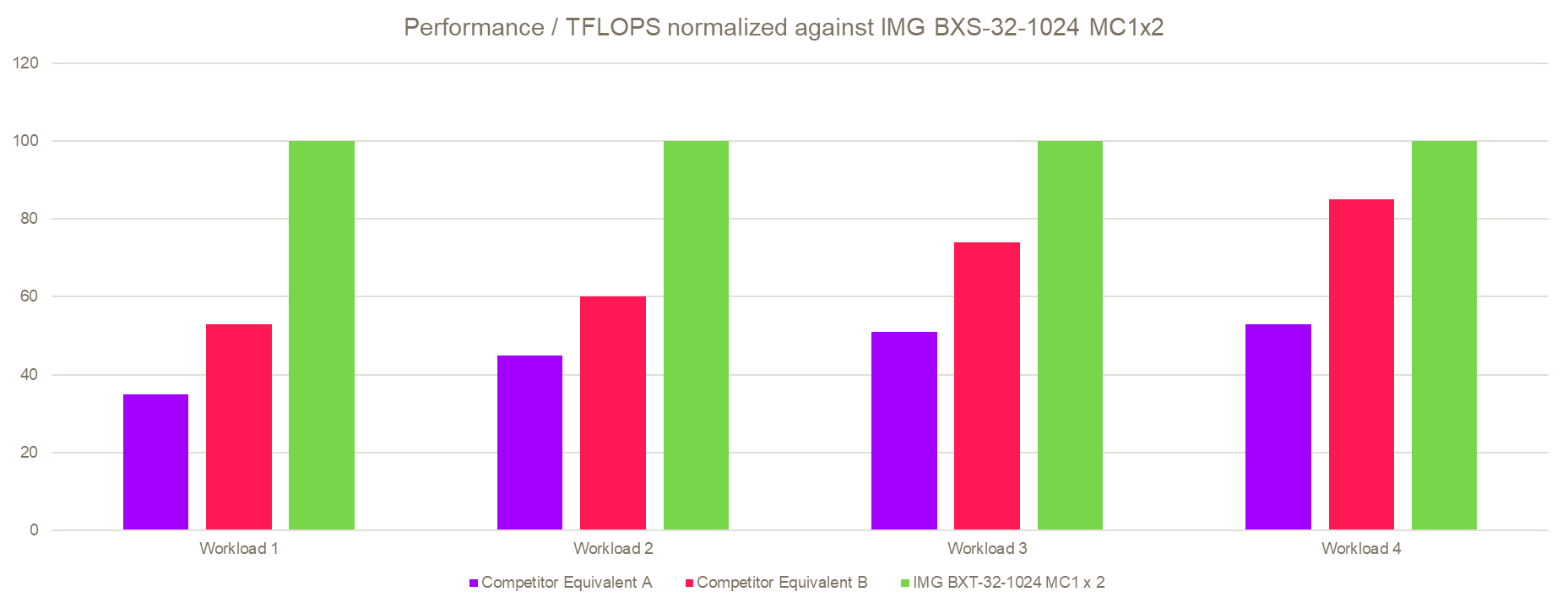

Why GPU Performance Efficiency Beats Peak Performance
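
The same kind of arithmetic can test the efficiency-versus-peak claim. Compute efficiency is achieved throughput divided by theoretical peak, and for inference cost the figure that matters is delivered throughput per unit of spend. The two devices, their efficiencies, and their prices below are invented for illustration. They show how a GPU with a lower peak but higher efficiency can come out ahead.

```python
from dataclasses import dataclass


@dataclass
class GpuOption:
    name: str
    peak_tflops: float  # theoretical peak
    efficiency: float   # achieved / peak on the target workload, in (0, 1]
    hourly_cost: float  # price per hour, any currency

    @property
    def achieved_tflops(self) -> float:
        return self.peak_tflops * self.efficiency

    @property
    def tflops_per_cost(self) -> float:
        return self.achieved_tflops / self.hourly_cost


if __name__ == "__main__":
    options = [
        GpuOption("gpu_a (hypothetical)", peak_tflops=100.0, efficiency=0.30, hourly_cost=3.0),
        GpuOption("gpu_b (hypothetical)", peak_tflops=60.0, efficiency=0.70, hourly_cost=2.0),
    ]
    for g in options:
        print(f"{g.name}: {g.achieved_tflops:.1f} TFLOP/s achieved, "
              f"{g.tflops_per_cost:.1f} TFLOP/s per unit cost")
    best = max(options, key=lambda g: g.tflops_per_cost)
    print("best value:", best.name)  # best value: gpu_b (hypothetical)
```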
