Mastering Model Evaluation

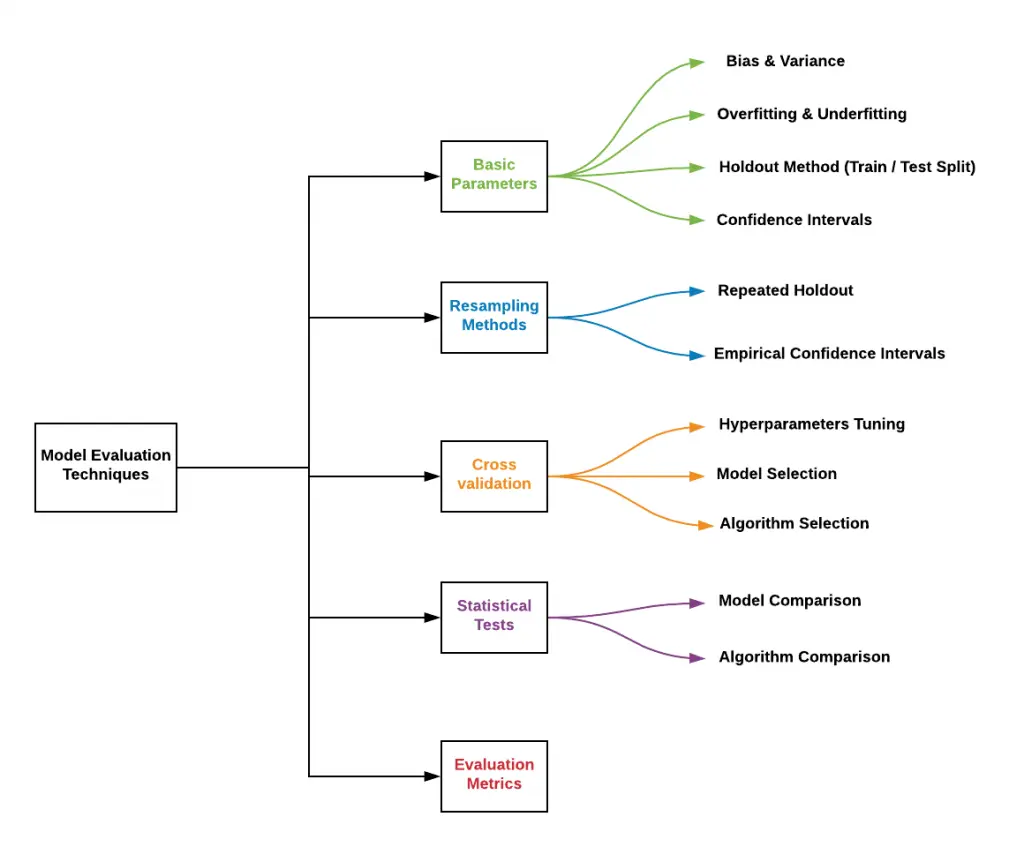

Learn the essential techniques and metrics for evaluating model performance in data analysis, so that decisions rest on measured results rather than guesswork. Mastering model evaluation is essential for building effective machine learning models, and it is a skill that separates good models from great ones. Cross-validation techniques, such as k-fold, and a thorough understanding of performance metrics are the cornerstones of this process.
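The k-fold procedure mentioned above can be sketched in plain Python. This is a minimal illustration, not a reference implementation: the names `k_fold_indices`, `cross_validate`, `fit_majority`, and `accuracy_score` are hypothetical helpers invented for this example, and the majority-class "model" is only a stand-in for a real learner.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, test_idx) pairs; each sample is tested exactly once."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Spread the remainder so that fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, test_idx
        start += size


def cross_validate(fit, score, X, y, k=5, seed=0):
    """Fit on k-1 folds, score on the held-out fold, and collect the k scores."""
    scores = []
    for train_idx, test_idx in k_fold_indices(len(X), k, seed):
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        scores.append(score(model, [X[i] for i in test_idx], [y[i] for i in test_idx]))
    return scores


def fit_majority(X_train, y_train):
    """Stand-in learner (assumption): always predict the most common training label."""
    return max(set(y_train), key=y_train.count)


def accuracy_score(model, X_test, y_test):
    """Fraction of held-out labels that match the constant prediction."""
    return sum(1 for label in y_test if label == model) / len(y_test)


X = [[i] for i in range(20)]
y = [0] * 14 + [1] * 6
fold_scores = cross_validate(fit_majority, accuracy_score, X, y, k=5)
print("per-fold accuracy:", fold_scores)
print("mean accuracy:", sum(fold_scores) / len(fold_scores))
```

Reporting the per-fold scores alongside the mean gives a rough sense of how much the estimate varies with the choice of held-out data.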

Understanding the distinction between loss functions and evaluation metrics is crucial for effective model development: a loss function is what the training procedure optimizes, while an evaluation metric is how the finished model is judged. Evaluating machine learning models effectively also depends on cross-validation and model selection techniques.

Evaluation metrics matter because training accuracy alone is not enough. Metrics computed on data the model has not seen indicate how well it is likely to perform in the real world. Metrics also differ from one task to another, so the right choice depends on the problem at hand.

"Meaningful Predictive Modeling" is a course aimed at anyone serious about predictive analytics. It is intended to turn a model builder into a discerning model evaluator who can make informed choices and deliver impactful data products.
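To make the loss-versus-metric distinction concrete, here is a small sketch that compares binary log loss (chosen here as an example of a training loss, an assumption not taken from the text) with accuracy (an evaluation metric). The two sets of predicted probabilities are made-up illustrative values, and the function names are hypothetical.

```python
import math


def log_loss(y_true, y_prob, eps=1e-15):
    """Mean binary cross-entropy; lower is better and it rewards calibrated confidence."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


def accuracy(y_true, y_prob, threshold=0.5):
    """Fraction of correct labels after thresholding the probabilities."""
    preds = [1 if p >= threshold else 0 for p in y_prob]
    return sum(int(p == y) for p, y in zip(preds, y_true)) / len(y_true)


y_true = [1, 0, 1, 0]
confident = [0.9, 0.1, 0.8, 0.2]    # illustrative probabilities, far from 0.5
hesitant = [0.6, 0.4, 0.55, 0.45]   # same decisions, but barely past the threshold

for name, probs in [("confident", confident), ("hesitant", hesitant)]:
    print(name, "accuracy:", accuracy(y_true, probs), "log loss:", round(log_loss(y_true, probs), 4))
```

Both sets of predictions classify every example correctly, so accuracy cannot tell them apart, while log loss is lower for the more confident set. That gap is one reason a model is usually trained on a smooth loss and then reported with task-level metrics.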

By applying evaluation methods such as cross-validation, holdout testing, and error metrics, we can identify whether a model has truly learned patterns, detect its weaknesses, and decide whether it is ready for real-world deployment. Once you have mastered these concepts, you will be equipped to build, evaluate, and improve models that drive effective solutions. Keep exploring, and remember that no single metric tells the whole story.

Abstract: This article presents a comprehensive framework for mastering model selection in artificial intelligence and machine learning applications across diverse domains.

This lesson is about sharpening our understanding of model evaluation in machine learning. We discussed the importance of evaluating the performance of predictive models after optimization, using metrics such as accuracy, precision, recall, and the F1 score.
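The four metrics named above can be computed directly from true and predicted labels. The sketch below uses hypothetical helper names (`confusion_counts`, `classification_metrics`) and a made-up imbalanced dataset to show why no single metric tells the whole story.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary problem."""
    tp = fp = fn = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive and truth == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1; undefined ratios are reported as 0.0."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred, positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Illustrative imbalanced data: 95 negatives, 5 positives,
# and a degenerate model that always predicts the negative class.
y_true = [0] * 95 + [1] * 5
y_pred = [0] * 100
print(classification_metrics(y_true, y_pred))
```

On this data the always-negative model reaches 95% accuracy while its recall and F1 are both zero, because it never finds a single positive case. Reading accuracy together with precision, recall, and F1 exposes the failure that accuracy alone hides.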


