MARS: Efficient Multi-Token Generation for LLMs

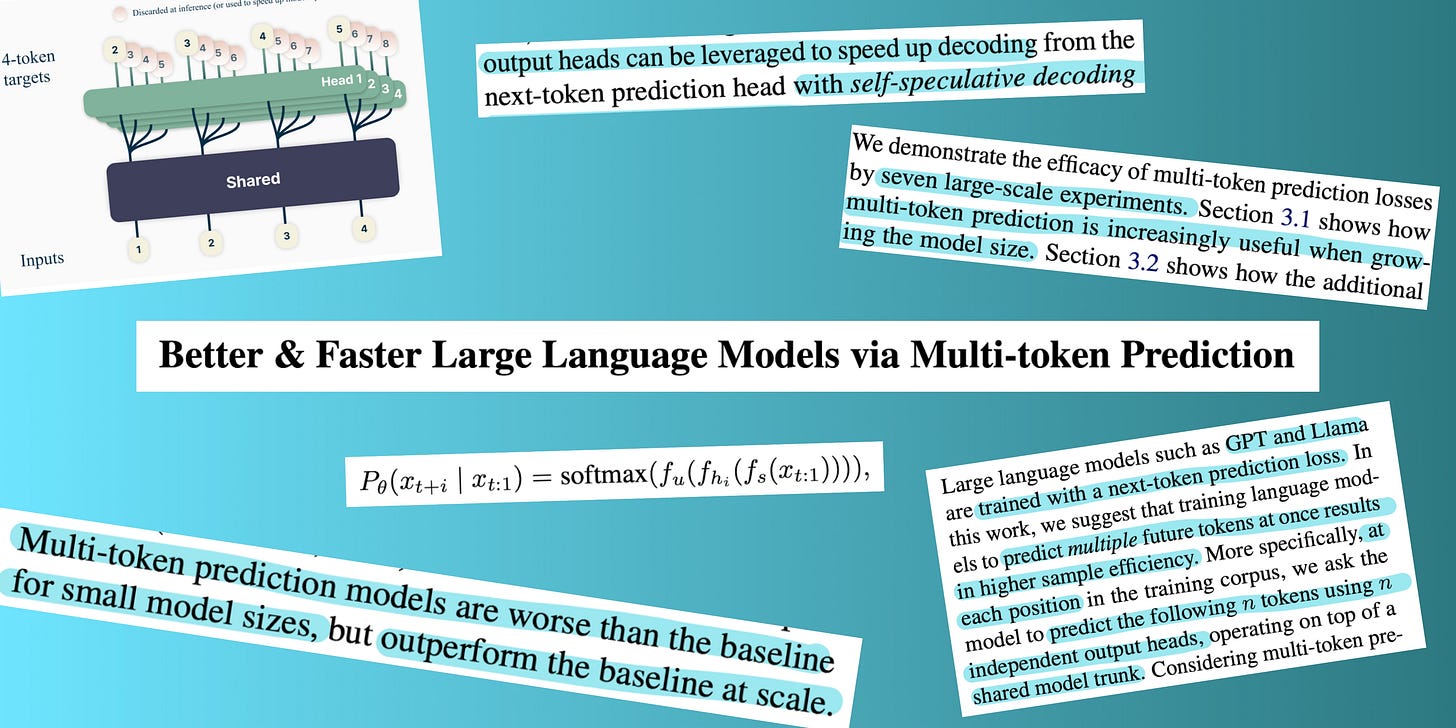

Multi-Token Prediction in LLMs by Celine

MARS is a lightweight fine-tuning method that gives instruction-tuned autoregressive (AR) models the ability to generate multiple tokens per forward pass, with no architectural changes, no additional parameters, and a single checkpoint. It uses a dual-stream training strategy that combines a clean stream with masked block prediction, balancing the conventional AR loss against the new objective while maintaining generation order.
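The dual-stream idea can be illustrated at the data level. The sketch below assumes one simple construction that is not taken from the paper: the clean stream is ordinary next-token training, and the masked stream appends k - 1 copies of a hypothetical mask token after a prefix, so the last real token and the masks jointly predict the next k tokens while keeping the usual left-to-right target shift. `MASK_ID`, `IGNORE`, the loss weighting, and all function names are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List

MASK_ID = 99_999  # hypothetical id reserved for the mask token
IGNORE = -100     # label meaning "no loss at this position" (a common convention)


@dataclass
class Stream:
    inputs: List[int]
    labels: List[int]


def clean_stream(tokens: List[int]) -> Stream:
    """Ordinary AR stream: position i is trained to predict tokens[i + 1]."""
    return Stream(inputs=tokens[:-1], labels=tokens[1:])


def masked_block_stream(tokens: List[int], prefix_len: int, k: int) -> Stream:
    """Assumed masked stream: predict tokens[prefix_len : prefix_len + k] in one pass.

    The input keeps the real prefix and appends k - 1 mask tokens. With the
    usual one-step shift, the last real token predicts the first block token
    and each mask predicts the token after it, so generation order is unchanged.
    """
    if prefix_len < 1 or k < 1 or prefix_len + k > len(tokens):
        raise ValueError("block must fit inside the sequence after a non-empty prefix")
    inputs = tokens[:prefix_len] + [MASK_ID] * (k - 1)
    # Prefix positions are already covered by the clean stream, so skip them here.
    labels = [IGNORE] * (prefix_len - 1) + tokens[prefix_len : prefix_len + k]
    return Stream(inputs=inputs, labels=labels)


def dual_stream_loss(ar_loss: float, masked_loss: float, weight: float = 1.0) -> float:
    """Combine the two objectives; this linear weighting is an assumption."""
    return ar_loss + weight * masked_loss


seq = [10, 11, 12, 13, 14, 15]
print(clean_stream(seq))
# Stream(inputs=[10, 11, 12, 13, 14], labels=[11, 12, 13, 14, 15])
print(masked_block_stream(seq, prefix_len=2, k=3))
# Stream(inputs=[10, 11, 99999, 99999], labels=[-100, 12, 13, 14])
```

With k = 1 the masked stream degenerates to a single next-token target, which is one way to see why such a scheme need not disturb ordinary AR behavior.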

Enhanced Efficiency: Meta's Multi-Token Prediction for LLMs (Fusion Chat)

MARS enables existing instruction-tuned AR models to generate multiple tokens per forward pass with zero architectural changes and a single checkpoint. The AR model remains fully functional: MARS adds multi-token prediction as an additional capability through masked fine-tuning.

In this AI Research Roundup episode, Alex discusses the paper "MARS: Enabling Autoregressive Models Multi-Token Generation". MARS (Mask AutoRegression) is a novel, lightweight fine-tuning framework that allows a standard instruction-tuned autoregressive language model to predict multiple tokens per forward pass without architectural changes, maintaining accuracy while improving throughput and supporting dynamic speed adjustment.
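The combination of "the AR model remains fully functional" and "dynamic speed adjustment" can be pictured as a decoding loop with a knob for how many tokens a pass may emit. The sketch assumes a hypothetical `step` function that returns k (token, probability) guesses per forward pass, plus a confidence threshold for accepting the extra guesses. The source does not say how MARS decides how many tokens to keep, so the threshold rule is only an illustration. With k = 1 the loop reduces to ordinary one-token-at-a-time decoding.

```python
from typing import Callable, List, Tuple

# Hypothetical model interface: given the tokens so far and a block size k,
# return k (token, probability) guesses for the next k positions.
Step = Callable[[List[int], int], List[Tuple[int, float]]]


def generate(step: Step, prompt: List[int], max_new: int, k: int,
             threshold: float) -> Tuple[List[int], int]:
    """Decode up to max_new tokens; return (new_tokens, forward_passes)."""
    out = list(prompt)
    passes = 0
    while len(out) - len(prompt) < max_new:
        guesses = step(out, k)
        passes += 1
        # The first guess is the ordinary next-token prediction: always keep it.
        accepted = [guesses[0][0]]
        # Keep further guesses only while they stay at or above the threshold.
        for token, prob in guesses[1:]:
            if prob < threshold:
                break
            accepted.append(token)
        remaining = max_new - (len(out) - len(prompt))
        out.extend(accepted[:remaining])
    return out[len(prompt):], passes


def toy_step(seq: List[int], k: int) -> List[Tuple[int, float]]:
    """Stand-in model (k <= 4) that counts upward, less confidently further ahead."""
    confidences = [0.99, 0.9, 0.6, 0.3]
    return [(seq[-1] + i + 1, confidences[i]) for i in range(k)]


print(generate(toy_step, [0], max_new=6, k=1, threshold=0.5))  # ([1, 2, 3, 4, 5, 6], 6)
print(generate(toy_step, [0], max_new=6, k=4, threshold=0.5))  # ([1, 2, 3, 4, 5, 6], 2)
```

Raising `threshold` or lowering `k` moves the loop toward plain AR decoding; lowering the threshold emits more tokens per pass. A rule like this does not guarantee output identical to one-token decoding, since each extra token is only as good as the model's multi-token guess, so in practice the setting would be tuned against accuracy.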

MARS: Enabling Autoregressive Models Multi-Token Generation (paper and code)

Autoregressive (AR) language models generate text one token at a time, even when consecutive tokens are highly predictable given earlier context. We introduce MARS (Mask AutoRegression), a lightweight fine-tuning method that teaches an instruction-tuned AR model to predict multiple tokens per forward pass. MARS adds …

MARS lets an instruction-tuned autoregressive model emit multiple tokens per forward pass while preserving the original calling interface. When generating one token at a time, MARS matches or beats baseline AR models across six benchmarks; with multi-token generation, it achieves 1.5–1.7× higher throughput while maintaining accuracy. The headline is not just model speed; it is a new cost structure for chat-driven agents.
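One way to read the 1.5–1.7× throughput figure is to split it into two factors: how many tokens each forward pass emits on average, and how much a multi-token pass costs relative to a plain one-token pass. The helper below is a back-of-the-envelope sketch under that assumed decomposition; the function name and inputs are hypothetical and nothing here comes from the MARS code.

```python
from typing import Sequence


def relative_throughput(accepted_per_pass: Sequence[int],
                        pass_cost_ratio: float = 1.0) -> float:
    """Estimate throughput relative to one-token-per-pass AR decoding.

    accepted_per_pass: tokens emitted by each forward pass (a hypothetical log).
    pass_cost_ratio: cost of one multi-token pass divided by the cost of one
        ordinary AR pass (an assumption; it would have to be measured).
    """
    if not accepted_per_pass:
        raise ValueError("need at least one forward pass")
    if pass_cost_ratio <= 0:
        raise ValueError("pass_cost_ratio must be positive")
    # Plain AR decoding emits exactly 1 token per pass at cost 1.0, so the
    # relative tokens-per-unit-cost is (mean tokens per pass) / (pass cost).
    mean_tokens = sum(accepted_per_pass) / len(accepted_per_pass)
    return mean_tokens / pass_cost_ratio


# 6 tokens over 4 passes, with passes costing the same as plain AR.
print(relative_throughput([1, 2, 2, 1]))        # 1.5
# Same acceptance, but each pass assumed to be 25% more expensive.
print(relative_throughput([1, 2, 2, 1], 1.25))  # 1.2
```

Under this simple model, a 1.5× gain at equal pass cost corresponds to about 1.5 tokens per pass; if multi-token passes are costlier, more tokens per pass are needed to reach the same figure.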