Markov Chains Explained Visually

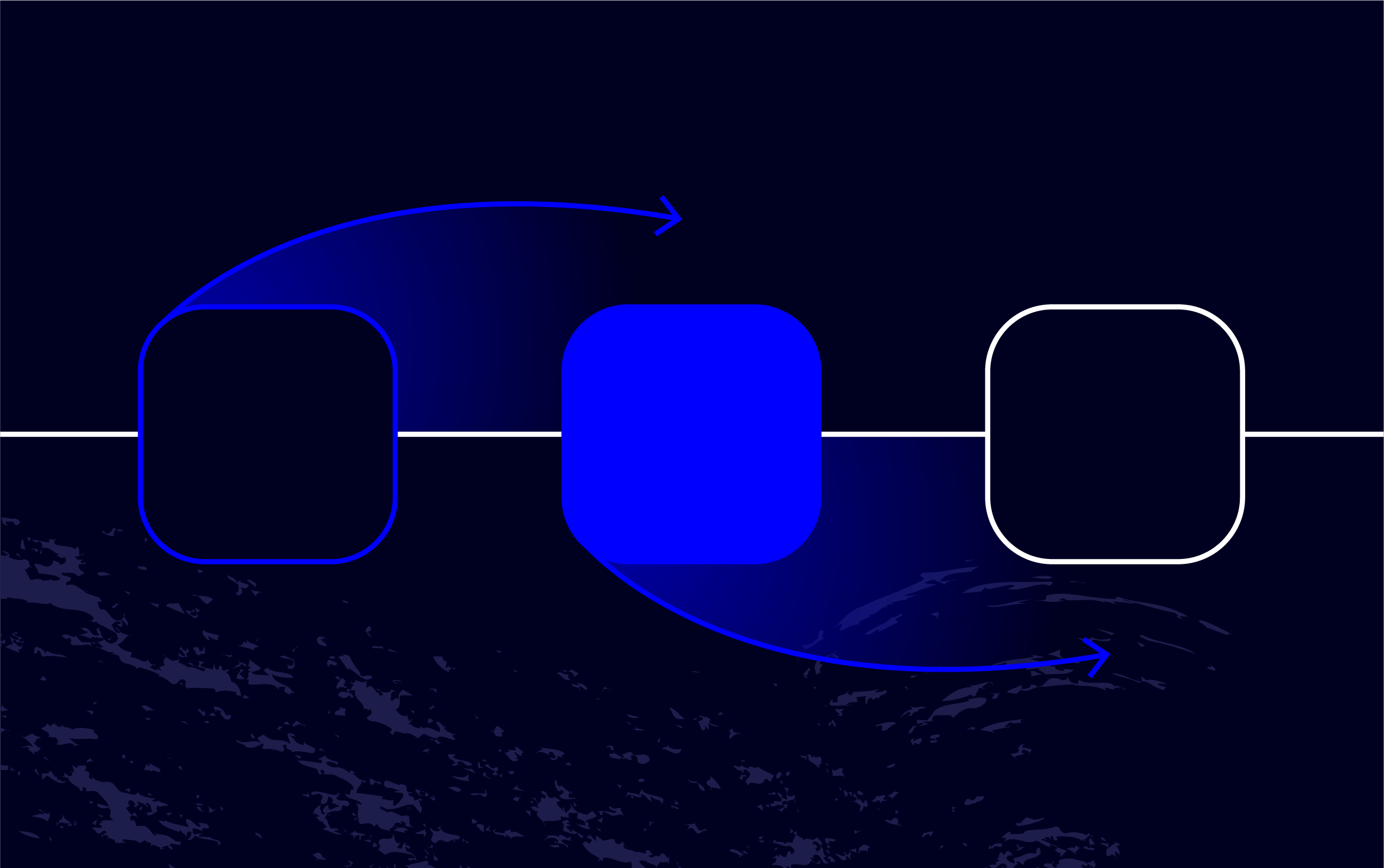

Markov chains, named after Andrey Markov, are mathematical systems that hop from one "state" (a situation or set of values) to another. An introduction to Markov chains covers their underlying principles: states, transitions, probabilities, and the memoryless property.
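The memoryless property named above can be stated formally. As a sketch, using notation that is not in the original ($X_n$ for the state at step $n$, and $i$, $j$, $i_0, \dots, i_{n-1}$ for particular states), it says that conditioning on the full history gives the same next-step probability as conditioning on the current state alone:

$$
P(X_{n+1} = j \mid X_n = i,\; X_{n-1} = i_{n-1},\; \dots,\; X_0 = i_0) = P(X_{n+1} = j \mid X_n = i)
$$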

The Markov property is the idea from which a basic Markov chain is constructed. This stochastic process appears in many areas of data science and machine learning, so it is important to have some familiarity with it. Even if you already know what Markov chains are or use them regularly, it is instructive to enter your own set of transition probabilities and then let a simulation run.

A countably infinite sequence, in which the chain moves state at discrete time steps, gives a discrete-time Markov chain (DTMC). A continuous-time process is called a continuous-time Markov chain (CTMC). Markov processes are named in honor of the Russian mathematician Andrey Markov.
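Running a simulation from a chosen set of transition probabilities can be sketched in a few lines of Python. This is a minimal illustration of a discrete-time chain, not the code behind any particular visualization; the two weather states, their probabilities, and the names `TRANSITIONS` and `simulate` are all assumptions made up for the example.

```python
import random

# Hypothetical two-state chain; states and probabilities are illustrative only.
# Each inner dict holds the probabilities of moving from the outer state,
# so every inner dict must sum to 1.
TRANSITIONS = {
    "sunny": {"sunny": 0.9, "rainy": 0.1},
    "rainy": {"sunny": 0.5, "rainy": 0.5},
}


def simulate(transitions, start, steps, seed=None):
    """Return the list of states visited, beginning with `start`."""
    rng = random.Random(seed)  # local generator so runs are reproducible
    state = start
    path = [state]
    for _ in range(steps):
        next_states = list(transitions[state])
        weights = [transitions[state][s] for s in next_states]
        # The next state depends only on the current state (memoryless).
        state = rng.choices(next_states, weights=weights, k=1)[0]
        path.append(state)
    return path


if __name__ == "__main__":
    print(simulate(TRANSITIONS, "sunny", 10, seed=0))
```

Replacing the entries of `TRANSITIONS` with your own probabilities and raising `steps` shows how the long-run share of time spent in each state settles down.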

A Markov chain is a way to describe a system that moves between different situations called "states", under the assumption that the probability of being in a particular state at the next step depends solely on the current state. Working with a chain in practice comes down to two skills: writing the transition matrix for the problem, and using the transition matrix and the initial state vector to find the state vector that gives the distribution after a specified number of transitions.
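Finding the state vector after a specified number of transitions amounts to repeatedly multiplying the current state vector by the transition matrix. The sketch below assumes the row-vector convention (entry `P[i][j]` is the probability of moving from state `i` to state `j`, so each row sums to 1); the matrix values and the function names `step` and `distribution_after` are hypothetical and chosen only for illustration.

```python
def step(dist, P):
    """Advance a row state vector by one transition: returns dist @ P."""
    n = len(P)
    return [sum(dist[i] * P[i][j] for i in range(n)) for j in range(n)]


def distribution_after(dist, P, steps):
    """Return the state vector after `steps` transitions from `dist`."""
    for _ in range(steps):
        dist = step(dist, P)
    return dist


# Illustrative transition matrix for two states (state 0 and state 1).
P = [
    [0.9, 0.1],  # from state 0: stay with 0.9, move to state 1 with 0.1
    [0.5, 0.5],  # from state 1: move to state 0 with 0.5, stay with 0.5
]

# Initial state vector: the system starts in state 0 with certainty.
initial = [1.0, 0.0]

if __name__ == "__main__":
    for n in (1, 2, 10):
        print(n, distribution_after(initial, P, n))
```

With these values, one transition gives a distribution of roughly (0.9, 0.1) and two transitions give roughly (0.86, 0.14). Some texts use column vectors with a transposed matrix instead, in which case the multiplication order is reversed.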

