Lujunru Lu Junru Github

Lujunru has 53 repositories available. Follow their code on GitHub. Junru Lu is studying: LeetCode: 200 1047 (2019.07.10); Kick Start: nowcoder, ks; NLP: github zibuyu research tao blob master 00 nlp.md; PyTorch: Zhihu, Udacity.

Eliminating Biased Length Reliance of Direct Preference Optimization

MemoChat: Tuning LLMs to Use Memos for Consistent Long-Range Open-Domain Conversation (lujunru memochat).

Résumé: lujunru.github.io pdf en resume.pdf. Experience: Tencent · Education: University of Warwick · Location: United Kingdom · 87 connections on LinkedIn.

Publication venues: Proceedings of the 2024 Conference on Empirical Methods in Natural Language …; Proceedings of the 28th International Conference on Computational …; Proceedings of the 31st International Conference on ….

This post collects LeetCode problems related to stacks. Sum of Subarray Minimums problem: given an array of integers A, find the sum of min(B), where B ranges over every substring o…

This article summarizes the dynamic programming (DP) problems on LeetCode and, at the same time, the DP material from the algorithms course. Longest Common Subsequence problem: given two strings, determine the maximum length of a common subsequence; the subsequence here does not have to be contiguous. LeetCode has a similar problem: given two…
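The two problems mentioned above can be sketched as follows. This is a minimal illustration, not the blog author's solution: the function names `sum_subarray_mins` and `lcs_length` are hypothetical, "substring" is assumed to mean a contiguous subarray, and the first function returns the exact sum without any modulus.

```python
from typing import List


def sum_subarray_mins(a: List[int]) -> int:
    """Sum of min(b) over every contiguous subarray b of a (monotonic stack)."""
    total = 0
    stack: List[int] = []  # indices whose values are strictly increasing
    # One extra iteration with a sentinel smaller than everything flushes the stack.
    for i in range(len(a) + 1):
        cur = a[i] if i < len(a) else float("-inf")
        while stack and a[stack[-1]] >= cur:
            j = stack.pop()
            left = stack[-1] if stack else -1
            # a[j] is the minimum of every subarray that starts in (left, j]
            # and ends in [j, i), so it contributes (j - left) * (i - j) times.
            total += a[j] * (j - left) * (i - j)
        stack.append(i)
    return total


def lcs_length(s: str, t: str) -> int:
    """Length of the longest common (not necessarily contiguous) subsequence."""
    # dp[i][j] = LCS length of the prefixes s[:i] and t[:j]
    dp = [[0] * (len(t) + 1) for _ in range(len(s) + 1)]
    for i, cs in enumerate(s, 1):
        for j, ct in enumerate(t, 1):
            if cs == ct:
                dp[i][j] = dp[i - 1][j - 1] + 1
            else:
                dp[i][j] = max(dp[i - 1][j], dp[i][j - 1])
    return dp[-1][-1]


if __name__ == "__main__":
    print(sum_subarray_mins([3, 1, 2, 4]))
    print(lcs_length("abcde", "ace"))
```

The stack version runs in linear time because each index is pushed and popped once; the DP table for the common subsequence takes time and space proportional to the product of the two string lengths.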

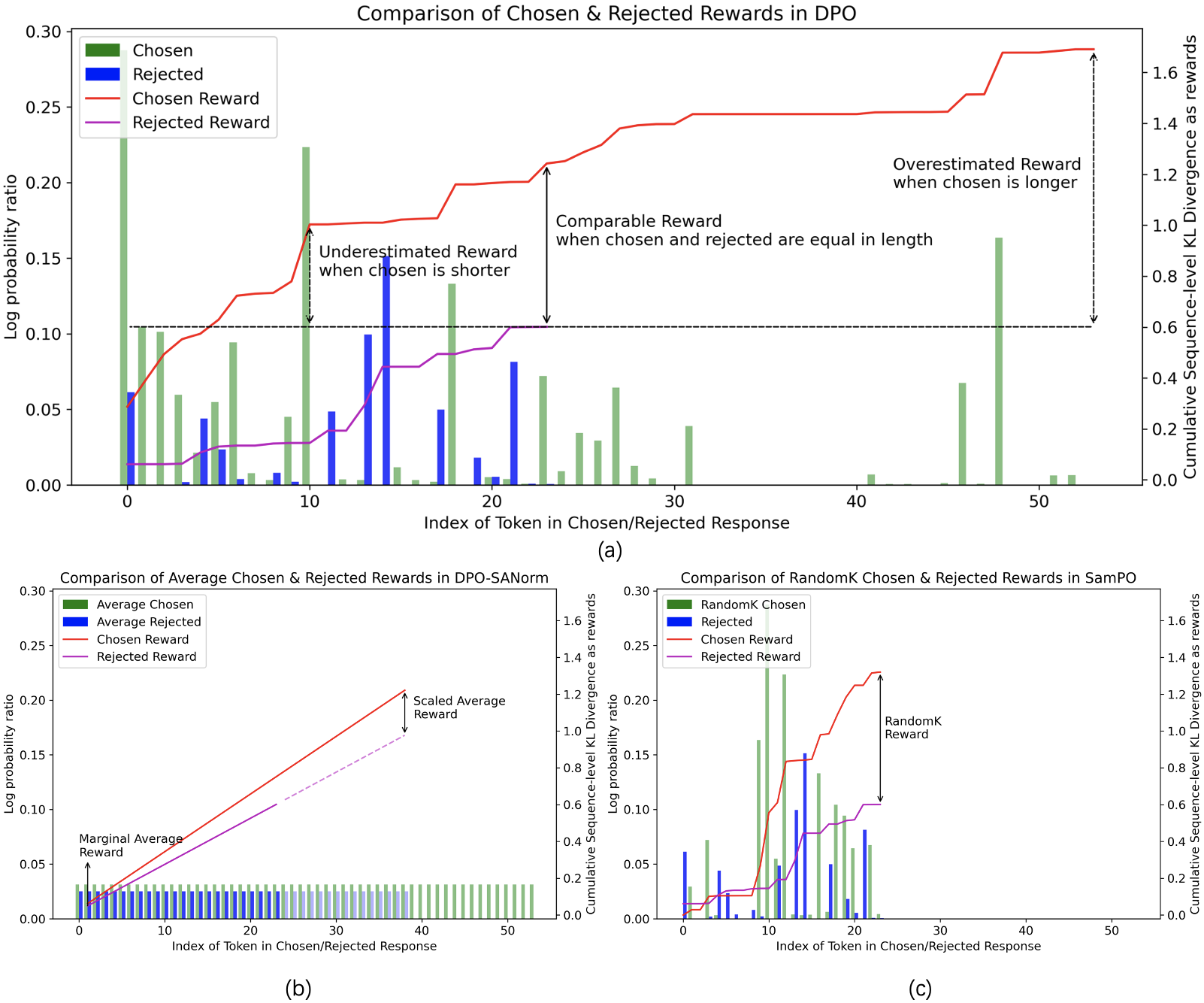

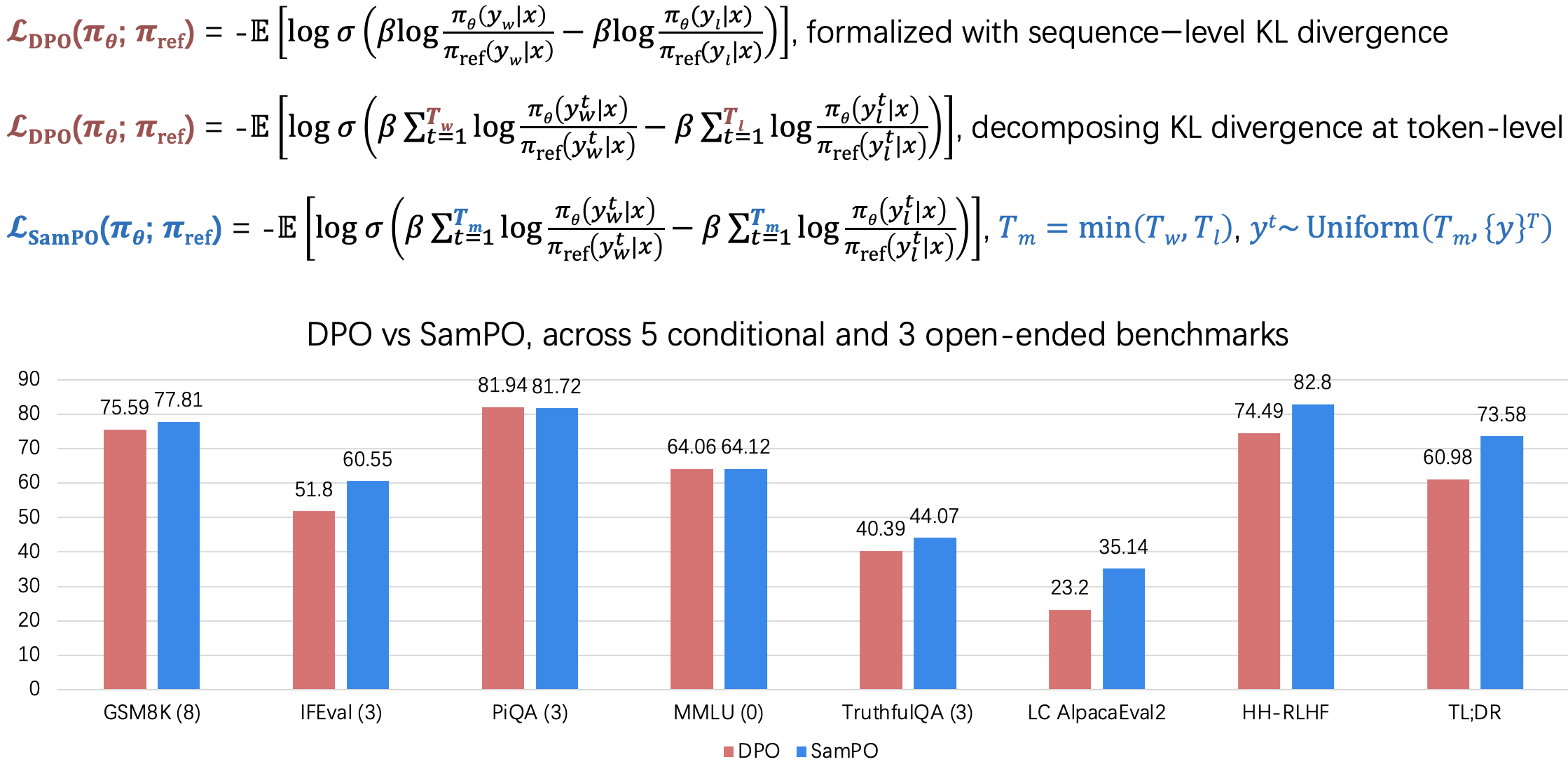

MemoChat: Our codes, data and models are available here: github lujunru memochat. We propose MemoChat, a pipeline for refining instructions that enables large language models (LLMs) to effectively employ self-composed memos for maintaining consistent long-range open-domain conversations.

FIPO: By leveraging insights from this dataset, along with Tulu2 models and diverse fine-tuning strategies, we validate the efficacy of the FIPO framework across five public benchmarks and six testing models. Our dataset and codes are available at: github lujunru fipo project.

RoleMRC: A Fine-Grained Composite Benchmark for Role-Playing and Instruction-Following. Role-playing is important for large language models (LLMs) to follow diverse instructions while maintaining role identity and the role's pre-defined ability limits.

Direct Preference Optimization (DPO) has emerged as a prominent algorithm for the direct and robust alignment of large language models (LLMs) with human preferences, offering a more straightforward alternative to the complex Reinforcement Learning from Human Feedback (RLHF).
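To make the DPO description concrete, here is a minimal sketch of the standard DPO loss for a single preference pair. It is an assumption-laden illustration: the function name `dpo_loss`, its parameter names, and the default `beta` are hypothetical, the inputs are assumed to be sequence-level log-probabilities already computed by a policy and a frozen reference model, and it does not implement any length-debiased variant.

```python
import math


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,  # hypothetical default; controls deviation from the reference
) -> float:
    """Standard DPO loss for one (chosen, rejected) response pair."""
    # Implicit rewards: how much more likely the policy makes each response
    # than the reference model does, in log space.
    chosen_reward = policy_chosen_logp - ref_chosen_logp
    rejected_reward = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_reward - rejected_reward)
    # loss = -log(sigmoid(margin)) = log(1 + exp(-margin)), computed stably
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


if __name__ == "__main__":
    # The policy prefers the chosen response more than the reference does,
    # so the loss falls below log(2), the value at zero margin.
    print(dpo_loss(-1.0, -3.0, -2.0, -2.0, beta=1.0))
```

Because the loss depends only on log-probability ratios against a fixed reference, no separate reward model or reinforcement learning loop is needed, which is the sense in which DPO is more straightforward than RLHF.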

Mingyu Lu Github

Yujungliu

Lu Lingyun Github
