Local Tool Calling With llama.cpp

Local Tool Calling With llama.cpp (r/PostAI)

Multiple (parallel) tool calling is supported on some models but is disabled by default. Enable it by passing `"parallel_tool_calls": true` in the completion endpoint payload.

This week features significant technical updates for local AI, including critical fixes for Gemma 4's tool calling in llama.cpp, a deep dive into a major cuBLAS performance bug affecting RTX GPUs, and a new local-first UI integrating Whisper and Ollama for multimodal tasks.
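As a minimal sketch of the payload described above, the following builds a chat completion request that opts in to parallel tool calls. The server URL, the `/v1/chat/completions` path, and the `get_weather` tool are assumptions made for illustration; adjust them to your own setup and model.

```python
import json
import urllib.request

# Assumption: a llama.cpp server is listening here with an OpenAI-compatible
# chat completions route; adjust host, port, and path to your setup.
SERVER_URL = "http://localhost:8080/v1/chat/completions"


def build_payload(user_message: str) -> dict:
    """Build a chat completion payload that opts in to parallel tool calls."""
    return {
        "messages": [{"role": "user", "content": user_message}],
        "tools": [
            {
                "type": "function",
                "function": {
                    # get_weather is a hypothetical tool used for illustration.
                    "name": "get_weather",
                    "description": "Return the current weather for a city.",
                    "parameters": {
                        "type": "object",
                        "properties": {"city": {"type": "string"}},
                        "required": ["city"],
                    },
                },
            }
        ],
        # Disabled by default; only some models support it.
        "parallel_tool_calls": True,
    }


def send(payload: dict, url: str = SERVER_URL) -> dict:
    """POST the payload as JSON and return the decoded response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Only prints the payload; call send(payload) once a server is running.
    payload = build_payload("What is the weather in Paris and in Tokyo?")
    print(json.dumps(payload, indent=2))
```

Note that Python's `True` serializes to JSON `true`, which matches the flag as written above. Whether the model actually returns several calls in one response depends on the model and its chat template.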

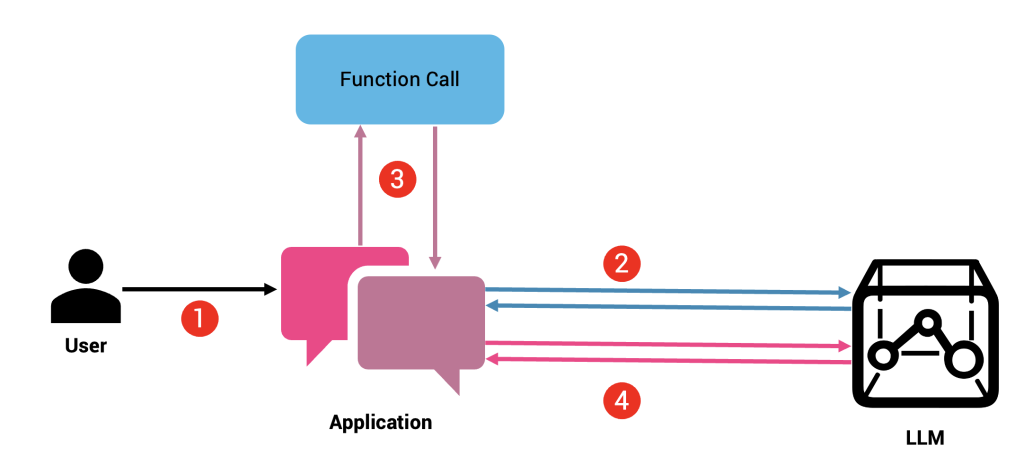

Basic Llama 3.2 3B Tool Calling With LangChain And Ollama, By Netanel

In this article, we concentrate on how to develop and incorporate custom function calls in a locally installed LLM using llama.cpp.

OpenAI functions and tools: LocalAI supports running the OpenAI functions and tools API across multiple backends. The OpenAI request shape is the same regardless of which backend runs your model; LocalAI is responsible for extracting structured tool calls from the model's output before returning the response. To learn more about OpenAI functions, see also the OpenAI API blog post.

Learn how to build and optimize a local AI workstation using llama.cpp, Windows 11, an RTX 5060, and Qwen 3.5 for architecture, coding, and technical writing workflows.

This article will show you how to set up and run your own self-hosted Gemma 4 with llama.cpp: no cloud, no subscriptions, no rate limits.
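Once a backend has extracted structured tool calls in the OpenAI response shape mentioned above, the client still has to run them. The sketch below shows one way to do that, assuming the usual `choices[0].message.tool_calls` layout with JSON-encoded arguments. The `get_weather` tool, the `TOOLS` registry, and the sample response are hypothetical and hand-written for illustration, not real server output.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical local tool; a real one would query a weather service."""
    return f"Sunny in {city}"


# Registry mapping tool names to local Python callables.
TOOLS = {"get_weather": get_weather}


def run_tool_calls(response: dict) -> list:
    """Execute each tool call in an OpenAI-shaped response.

    Returns "tool" role messages ready to append to the conversation.
    """
    message = response["choices"][0]["message"]
    results = []
    for call in message.get("tool_calls") or []:
        name = call["function"]["name"]
        # Arguments arrive as a JSON-encoded string in the OpenAI format.
        arguments = json.loads(call["function"]["arguments"])
        if name not in TOOLS:
            content = f"Unknown tool: {name}"
        else:
            content = TOOLS[name](**arguments)
        results.append(
            {"role": "tool", "tool_call_id": call["id"], "content": content}
        )
    return results


# Hand-written sample response for illustration, not real server output.
sample_response = {
    "choices": [
        {
            "message": {
                "role": "assistant",
                "content": None,
                "tool_calls": [
                    {
                        "id": "call_0",
                        "type": "function",
                        "function": {
                            "name": "get_weather",
                            "arguments": "{\"city\": \"Paris\"}",
                        },
                    },
                    {
                        "id": "call_1",
                        "type": "function",
                        "function": {
                            "name": "get_weather",
                            "arguments": "{\"city\": \"Tokyo\"}",
                        },
                    },
                ],
            }
        }
    ]
}

if __name__ == "__main__":
    for tool_message in run_tool_calls(sample_response):
        print(tool_message)
```

In a full loop, the returned messages would be appended after the assistant message and sent back to the model so it can produce a final answer. Validating the arguments before calling a tool is a sensible addition, since local models can emit malformed or unexpected JSON.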

Enhancing Applications With Function Calling Using The Llama API By

To get started and use all the features shown below, we recommend using a model that has been fine-tuned for tool calling. We will use Hermes-2-Pro-Llama-3-8B-GGUF from NousResearch.

Run LLMs locally with llama.cpp. Learn hardware choices, installation, quantization, tuning, and performance optimization.

Qwen3 4B tool calling with llama-cpp-python: a specialized 4B-parameter model fine-tuned for function calling and tool usage, optimized for local deployment with llama-cpp-python.

A comprehensive guide to running open-source language models with llama.cpp on a local CPU, with instructions on setting up the server, installing the necessary modules, and building Streamlit applications to interact with the models.

How To Run Local AI On Android With llama.cpp And Termux

I Made llama.cpp Easier To Use (r/LocalLLaMA)

How To Get The llama.cpp Server UI (r/LocalLLaMA)
