LLM Guardrails

Guardrails Need To Be Integrated With AI Alignment Platforms (Gradient)

The Guardrails framework helps you generate structured data from LLMs. Its Guardrails Hub is a collection of pre-built measures of specific types of risk, called validators. Multiple validators can be combined into input and output guards that intercept the inputs and outputs of an LLM.

More generally, guardrails help you build safe, compliant AI applications by validating and filtering content at key points in your agent's execution. They can detect sensitive information, enforce content policies, validate outputs, and prevent unsafe behaviors before they cause problems.
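
To make the validator-and-guard idea concrete, here is a minimal sketch in plain Python. It does not use the Guardrails library; `ValidationResult`, `Guard`, `guarded_call`, the e-mail regex, and `fake_llm` are hypothetical names chosen for illustration. Each validator inspects a piece of text and reports pass or fail, and a guard passes only if all of its validators pass.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ValidationResult:
    passed: bool
    reason: Optional[str] = None


# Hypothetical: a validator is any function that checks text and returns a ValidationResult.
Validator = Callable[[str], ValidationResult]

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def no_email_addresses(text: str) -> ValidationResult:
    # Detects one kind of sensitive information: e-mail addresses.
    if EMAIL_RE.search(text):
        return ValidationResult(False, "contains an email address")
    return ValidationResult(True)


def max_length(limit: int) -> Validator:
    # Factory: returns a validator that rejects text longer than `limit` characters.
    def check(text: str) -> ValidationResult:
        if len(text) > limit:
            return ValidationResult(False, f"longer than {limit} characters")
        return ValidationResult(True)
    return check


def banned_words(words: List[str]) -> Validator:
    # Factory: returns a validator that rejects text mentioning any banned term.
    lowered = [w.lower() for w in words]

    def check(text: str) -> ValidationResult:
        hits = [w for w in lowered if w in text.lower()]
        if hits:
            return ValidationResult(False, "mentions banned term(s): " + ", ".join(hits))
        return ValidationResult(True)
    return check


class Guard:
    """Combines validators; text passes only if every validator passes."""

    def __init__(self, validators: List[Validator]):
        self.validators = validators

    def validate(self, text: str) -> List[str]:
        # Returns the reasons for all failed validators (an empty list means pass).
        results = [v(text) for v in self.validators]
        return [r.reason or "failed" for r in results if not r.passed]


def guarded_call(prompt: str, llm: Callable[[str], str],
                 input_guard: Guard, output_guard: Guard) -> str:
    # The input guard intercepts the prompt before it reaches the model.
    failures = input_guard.validate(prompt)
    if failures:
        return "Request blocked: " + "; ".join(failures)
    # The output guard intercepts the response before it reaches the user.
    response = llm(prompt)
    failures = output_guard.validate(response)
    if failures:
        return "Response withheld: " + "; ".join(failures)
    return response


def fake_llm(prompt: str) -> str:
    # Hypothetical stand-in for a real model call.
    return "Contact support at help@example.com for details."


input_guard = Guard([max_length(500), banned_words(["password"])])
output_guard = Guard([no_email_addresses])

print(guarded_call("How do I reset my account?", fake_llm, input_guard, output_guard))
# Response withheld: contains an email address
print(guarded_call("What is my password?", fake_llm, input_guard, output_guard))
# Request blocked: mentions banned term(s): password
```

Returning the failure reasons, rather than a bare boolean, makes it easier to log why a request was blocked; a production setup might instead re-ask the model or repair the output.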

GitHub (rish1508): LLM Guardrails, Guardrails For LLM Workflows

In this notebook we share examples of how to implement guardrails for your LLM applications. A guardrail is a generic term for detective controls that aim to steer your application.

Just like the steel barriers lining our roads, LLM guardrails are the essential safety systems that keep these powerful AI models on track, preventing them from veering into misinformation. Learn about the 20 essential LLM guardrails that ensure the safe, ethical, and responsible use of AI language models, and explore their types, challenges, and best practices for building safe, reliable, and aligned AI systems in 2025.
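
One way a detective guardrail can steer an application without adding much latency is to run a cheap check concurrently with the main model call and discard the main answer if the check fails. The sketch below assumes a toy keyword check stands in for the guardrail model and `asyncio.sleep` stands in for network latency; `topical_guardrail`, `main_llm_call`, `answer`, and the cats-and-dogs policy are hypothetical.

```python
import asyncio

# Hypothetical policy: only questions about these pets are in scope.
ALLOWED_TOPICS = ("cats", "dogs")


async def topical_guardrail(user_request: str) -> bool:
    # Stand-in for a fast classifier or small-model call; True means "allowed".
    await asyncio.sleep(0.01)
    return any(topic in user_request.lower() for topic in ALLOWED_TOPICS)


async def main_llm_call(user_request: str) -> str:
    # Stand-in for the slower, more expensive model call.
    await asyncio.sleep(0.05)
    return f"Here is an answer about: {user_request}"


async def answer(user_request: str) -> str:
    # Start both tasks at once so the guardrail adds little latency.
    guard_task = asyncio.create_task(topical_guardrail(user_request))
    llm_task = asyncio.create_task(main_llm_call(user_request))

    allowed = await guard_task
    if not allowed:
        # The guardrail tripped: stop the main call and return a fallback message.
        llm_task.cancel()
        try:
            await llm_task
        except asyncio.CancelledError:
            pass
        return "Sorry, I can only help with questions about cats and dogs."
    return await llm_task


if __name__ == "__main__":
    print(asyncio.run(answer("How often should I feed my cats?")))
    print(asyncio.run(answer("Pick me some stocks to buy.")))
```

Running the two calls in parallel trades some wasted compute (the main call starts even when it will be discarded) for lower latency on allowed requests.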

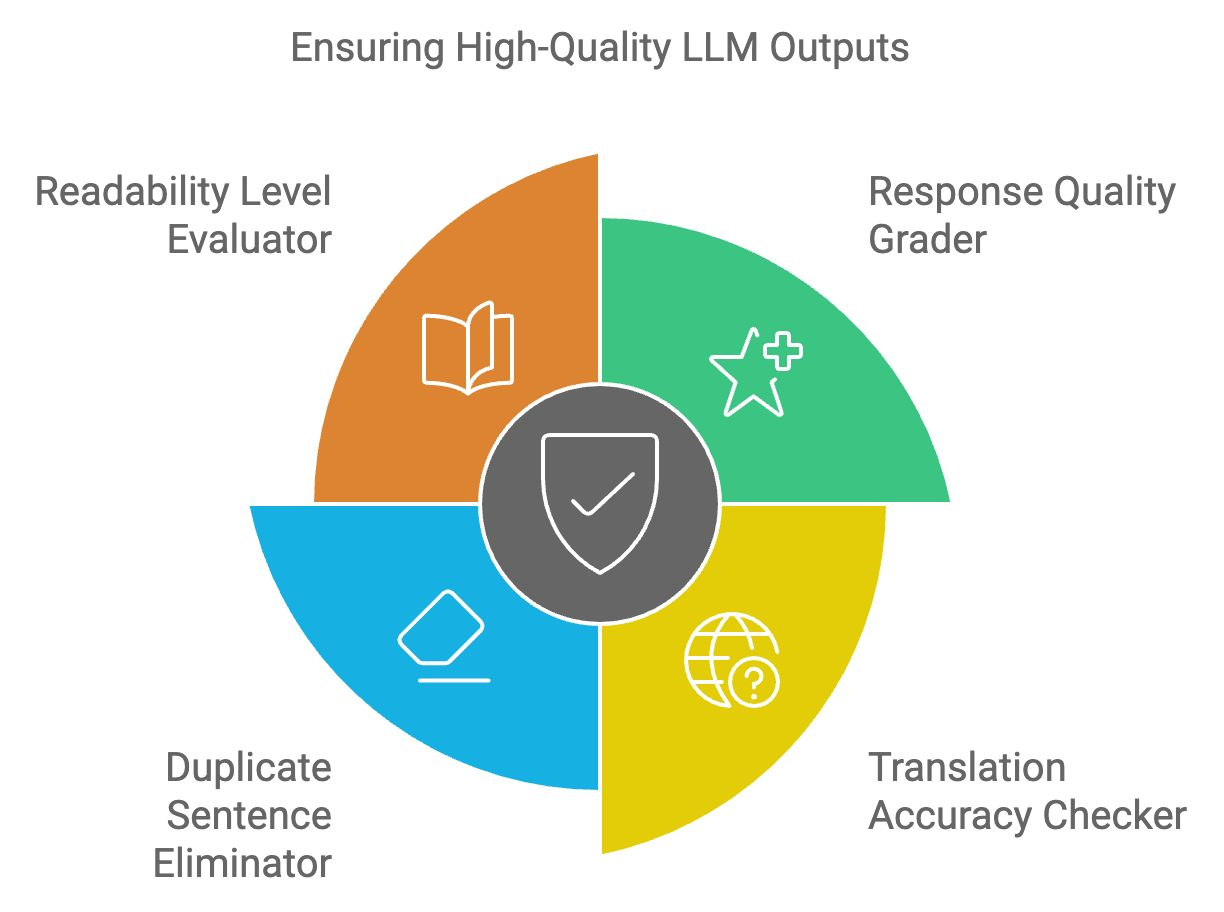

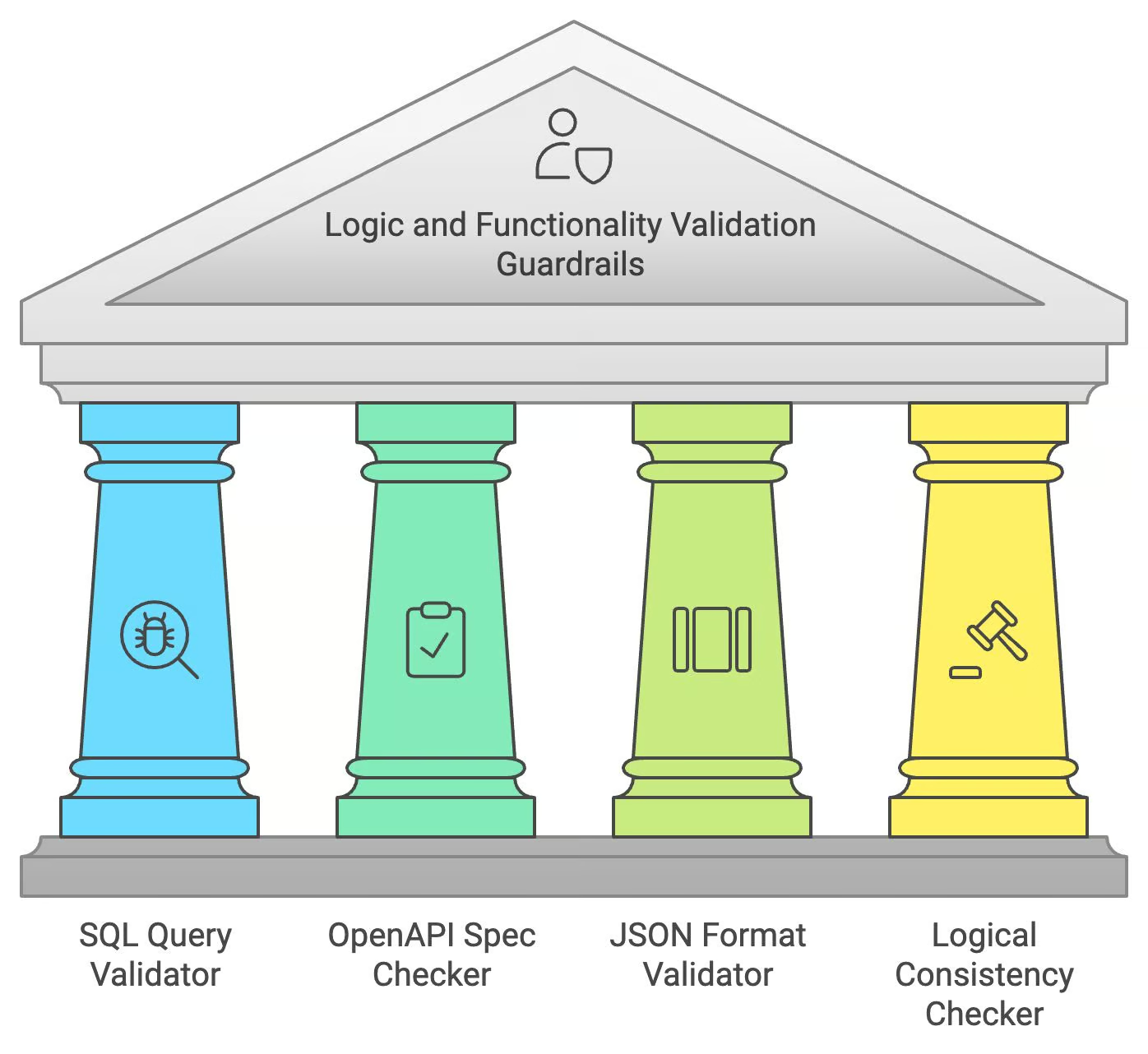

Mastering LLM Guardrails: Complete 2025 Guide (Generative AI)

What are LLM guardrails? LLM guardrails are technical controls that restrict how AI-powered applications behave in production. Rather than modifying the model itself, guardrails wrap the model with policies that govern what it can see, what it can say, and what it can do, on every request. Put another way, they are predefined rules, filters, or mechanisms that constrain how a language model behaves during inference: screening inputs, modifying outputs, or enforcing ethical boundaries.

What are LLM guardrails platforms? LLM guardrails platforms are software frameworks designed to enforce rules, policies, and constraints on AI-generated outputs. They serve as an enforcement layer between user prompts and model outputs, creating a safety net that monitors, validates, and corrects responses before they are delivered.

TL;DR: LLM guardrails platforms protect your AI applications from harmful outputs, data leaks, and misuse. They filter prompts and responses, enforce policies, and monitor behavior in real time.
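
The idea of wrapping an unmodified model with policies for what it can see, say, and do on every request can be sketched as a thin wrapper class. This is an illustrative assumption, not any platform's API: `Policy`, `GuardedModel`, and `fake_model` are hypothetical, and the SSN regex and blocked phrase are placeholder rules. Note that the output step corrects the response by redacting it rather than rejecting it.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet


# Hypothetical policy object; field names are illustrative, not from any library.
@dataclass
class Policy:
    blocked_input_phrases: tuple = ("ignore previous instructions",)
    allowed_tools: FrozenSet[str] = frozenset({"search_docs"})
    redact_patterns: Dict[str, str] = field(default_factory=lambda: {
        r"\b\d{3}-\d{2}-\d{4}\b": "[REDACTED SSN]",
    })


class GuardedModel:
    """Wraps a model callable with a policy; the model itself is never modified."""

    def __init__(self, model: Callable[[str], str], policy: Policy):
        self.model = model
        self.policy = policy

    def _screen_input(self, prompt: str) -> None:
        # What the model can see: reject prompts containing a blocked phrase.
        lowered = prompt.lower()
        for phrase in self.policy.blocked_input_phrases:
            if phrase in lowered:
                raise ValueError(f"blocked input: {phrase!r}")

    def _correct_output(self, text: str) -> str:
        # What the model can say: redact matches instead of rejecting the response.
        for pattern, replacement in self.policy.redact_patterns.items():
            text = re.sub(pattern, replacement, text)
        return text

    def authorize_tool(self, tool_name: str) -> bool:
        # What the model can do: only allowlisted tools may run.
        return tool_name in self.policy.allowed_tools

    def __call__(self, prompt: str) -> str:
        # Both checks run on every request.
        self._screen_input(prompt)
        return self._correct_output(self.model(prompt))


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a real model call.
    return "The record on file lists SSN 123-45-6789."


guarded = GuardedModel(fake_model, Policy())
print(guarded("Summarize the customer record."))
# The record on file lists SSN [REDACTED SSN].
print(guarded.authorize_tool("send_email"))
# False
```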


Top 20 LLM Guardrails With Examples (DataCamp)

