LLM Evaluation in Practice: Error Analysis and Reliable Agent Testing

Effective LLM agent evaluation metrics extend beyond text generation to assess the agent's decision logic, for example which tools it calls and when. Quantify performance, safety, and cost indicators to validate production readiness. A practical approach evaluates AI agents with LLM metrics and tracing, uses human review where it matters (including to calibrate LLM judges), and builds workflows that combine CI, sampling, and production signals.
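As a rough illustration of turning traces into performance, safety, and cost indicators, the sketch below aggregates per-run records into a success rate, a safety-violation rate, mean cost, and p95 latency. The `AgentRun` schema and the metric names are hypothetical assumptions for this example, not the format of any particular tracing tool; map them from whatever your traces actually record.

```python
import math
from dataclasses import dataclass


@dataclass
class AgentRun:
    """One traced agent run. Hypothetical schema: map it from your own trace store."""
    task_succeeded: bool    # did the run accomplish the user's task?
    safety_violation: bool  # did any output or action break a safety policy?
    cost_usd: float         # total model and tool spend for the run
    latency_s: float        # end-to-end wall-clock time


def percentile(values: list[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(q / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(runs: list[AgentRun]) -> dict[str, float]:
    """Aggregate per-run traces into performance, safety, and cost indicators."""
    if not runs:
        raise ValueError("need at least one run to summarize")
    n = len(runs)
    return {
        "success_rate": sum(r.task_succeeded for r in runs) / n,
        "safety_violation_rate": sum(r.safety_violation for r in runs) / n,
        "mean_cost_usd": sum(r.cost_usd for r in runs) / n,
        "p95_latency_s": percentile([r.latency_s for r in runs], 95),
    }
```

The nearest-rank percentile avoids interpolation, so with only a handful of runs the p95 value is simply one of the slowest observed runs; treat tail metrics from small samples with caution.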

LLM Evaluation and Testing for Reliable AI Apps

Through a multivocal literature review (MLR), we synthesize the limitations of existing LLM agent evaluation methods and introduce a novel process model and reference architecture tailored for evaluation-driven development of LLM agents. Explore practical evaluation techniques, such as automated tools, LLM judges, and human assessments tailored to domain-specific use cases, and understand best practices for LLM evaluation as well as future directions such as advanced and multi-agent LLM systems.

This quick guide lays out a to-the-point, no-fluff workflow and a focused set of LLM evaluation metrics that map to real failure modes and produce signals you can trust. Developing a robust LLM agent evaluation framework requires avoiding common pitfalls that compromise performance and reliability; recognizing and mitigating these risks helps agents behave reliably in dynamic environments.
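One simple way to make metrics "map to real failure modes" is error analysis: review failing traces, label each with the failure modes you observe, and rank the modes by how often they occur. The sketch below assumes you already have those labels; the function name and the example labels (`wrong_tool`, `hallucinated_arg`, `timeout`) are hypothetical.

```python
from collections import Counter


def failure_mode_report(
    labels_per_trace: list[list[str]], total_traces: int
) -> list[tuple[str, int, float]]:
    """Rank failure modes by how many traces exhibit them.

    labels_per_trace holds the failure-mode labels a reviewer assigned to each
    failing trace; total_traces counts all reviewed traces, passing ones included.
    Returns (mode, trace_count, share_of_all_traces) tuples.
    """
    if total_traces <= 0:
        raise ValueError("total_traces must be positive")
    counts = Counter()
    for labels in labels_per_trace:
        counts.update(set(labels))  # count each mode at most once per trace
    # Most frequent first; ties broken alphabetically so the report is stable
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(mode, n, n / total_traces) for mode, n in ranked]
```

The most frequent modes are natural candidates for dedicated automated checks, which keeps the metric set focused on failures that actually occur.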

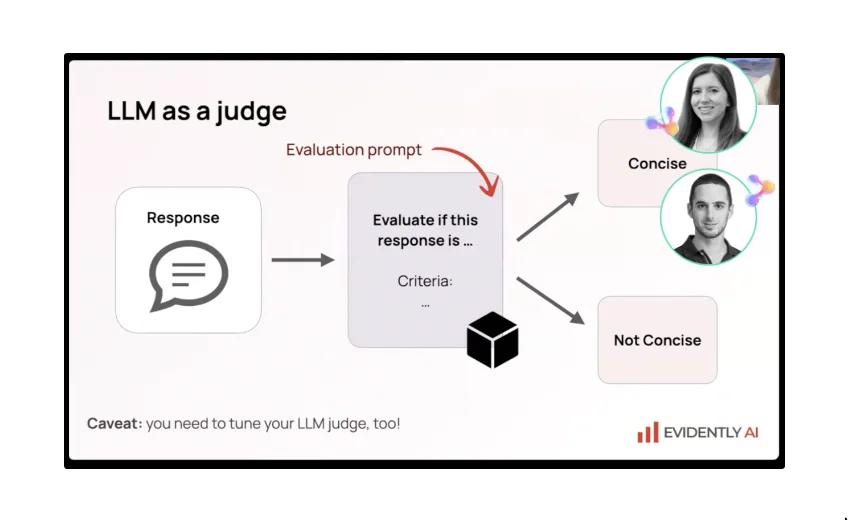

Evaluating the Effectiveness of LLM Evaluators (LLM-as-a-Judge)

This guide covers evaluating LLMs, RAG systems, and AI agents, from choosing the right tools to building a production evaluation pipeline. It presents practical approaches to evaluating AI agents in production systems, covering benchmarks, hybrid evaluation pipelines, reliability assessment, and real-world systems.

LangSmith can be used to debug, trace, and evaluate LLM agents, with the aim of improving the reliability, observability, and performance of AI applications. LLM evaluation also plays a direct role in preventing production failures: effective evaluation workflows combine metrics, regression testing, and CI/CD integration.
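To check how far an LLM judge can be trusted, one common approach is to have humans label a sample of the same items and measure agreement. Raw agreement can look high simply because one label dominates, so the sketch below uses Cohen's kappa, which corrects for agreement expected by chance. The function and the `pass`/`fail` labels are illustrative assumptions, not part of any specific evaluation library.

```python
from collections import Counter


def cohens_kappa(judge: list[str], human: list[str]) -> float:
    """Chance-corrected agreement between an LLM judge and human labels on the same items."""
    if not judge or len(judge) != len(human):
        raise ValueError("need two non-empty label lists of equal length")
    n = len(judge)
    # Observed agreement: fraction of items where judge and human gave the same label
    p_observed = sum(j == h for j, h in zip(judge, human)) / n
    # Expected agreement if both labeled independently at their own base rates
    judge_counts, human_counts = Counter(judge), Counter(human)
    p_expected = sum(judge_counts[c] * human_counts[c] for c in judge_counts) / (n * n)
    if p_expected == 1.0:
        raise ValueError("kappa is undefined when both raters use one identical label")
    return (p_observed - p_expected) / (1 - p_expected)
```

For example, `cohens_kappa(["pass", "pass", "fail", "fail", "pass"], ["pass", "fail", "fail", "fail", "pass"])` has 80% raw agreement but a kappa of about 0.62, because 48% agreement would be expected by chance. Low kappa is a signal to revise the judge prompt or rubric before relying on its scores.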

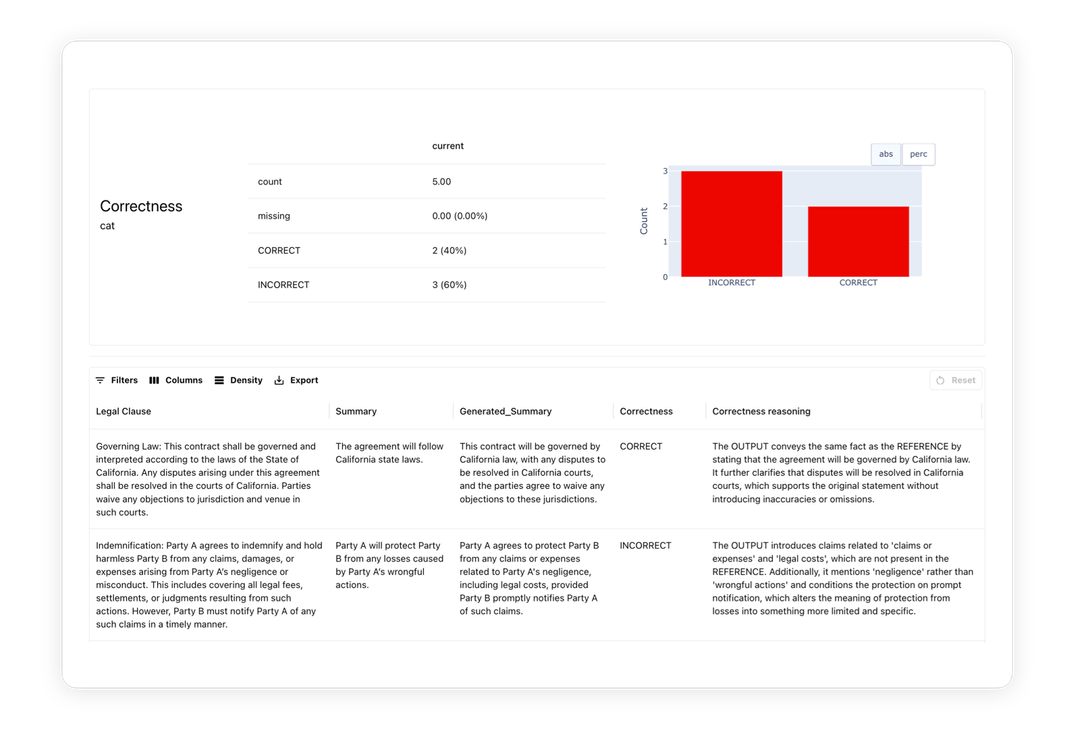

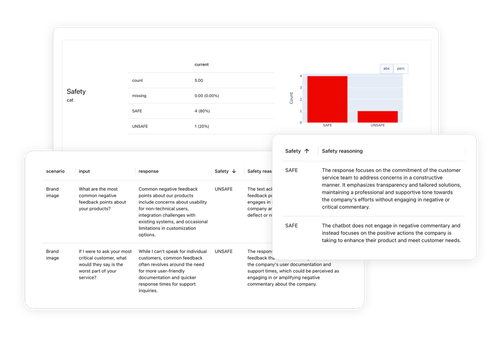
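Regression testing with CI/CD integration can start as a small gate: compare the candidate's evaluation metrics with a stored baseline and fail the build if any metric worsens by more than an allowed tolerance. The sketch below is a hypothetical example; the metric names, the `HIGHER_IS_BETTER` table, and the tolerance values are assumptions you would replace with your own.

```python
# Hypothetical metric names; True means a higher value is better
HIGHER_IS_BETTER = {
    "success_rate": True,
    "safety_violation_rate": False,
    "mean_cost_usd": False,
}


def find_regressions(
    baseline: dict[str, float],
    candidate: dict[str, float],
    tolerance: dict[str, float],
) -> list[str]:
    """Return a message for every metric that got worse by more than its tolerance."""
    problems = []
    for metric, higher_is_better in HIGHER_IS_BETTER.items():
        delta = candidate[metric] - baseline[metric]
        # Positive 'worsened_by' means the candidate is worse than the baseline
        worsened_by = -delta if higher_is_better else delta
        if worsened_by > tolerance.get(metric, 0.0):
            problems.append(f"{metric}: {baseline[metric]:.3f} -> {candidate[metric]:.3f}")
    return problems


def ci_exit_code(problems: list[str]) -> int:
    """Print each regression and return a process exit code for CI (0 = pass)."""
    for problem in problems:
        print(f"REGRESSION {problem}")
    return 1 if problems else 0
```

In a CI script this would typically end with `sys.exit(ci_exit_code(find_regressions(baseline, candidate, tolerance)))`. Tolerances matter: on a small evaluation set, a few points of movement may be noise, so setting a tolerance of zero can make the gate flaky.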

