Error Analysis to Evaluate LLM Applications with Langfuse Open Source

From the Langfuse blog: a practical guide to identifying, categorizing, and analyzing failure modes in LLM applications using Langfuse. This error analysis produces a quantified, application-specific understanding of your primary issues. These insights provide a clear roadmap for targeted improvements, whether in your prompts, RAG pipeline, or model selection.

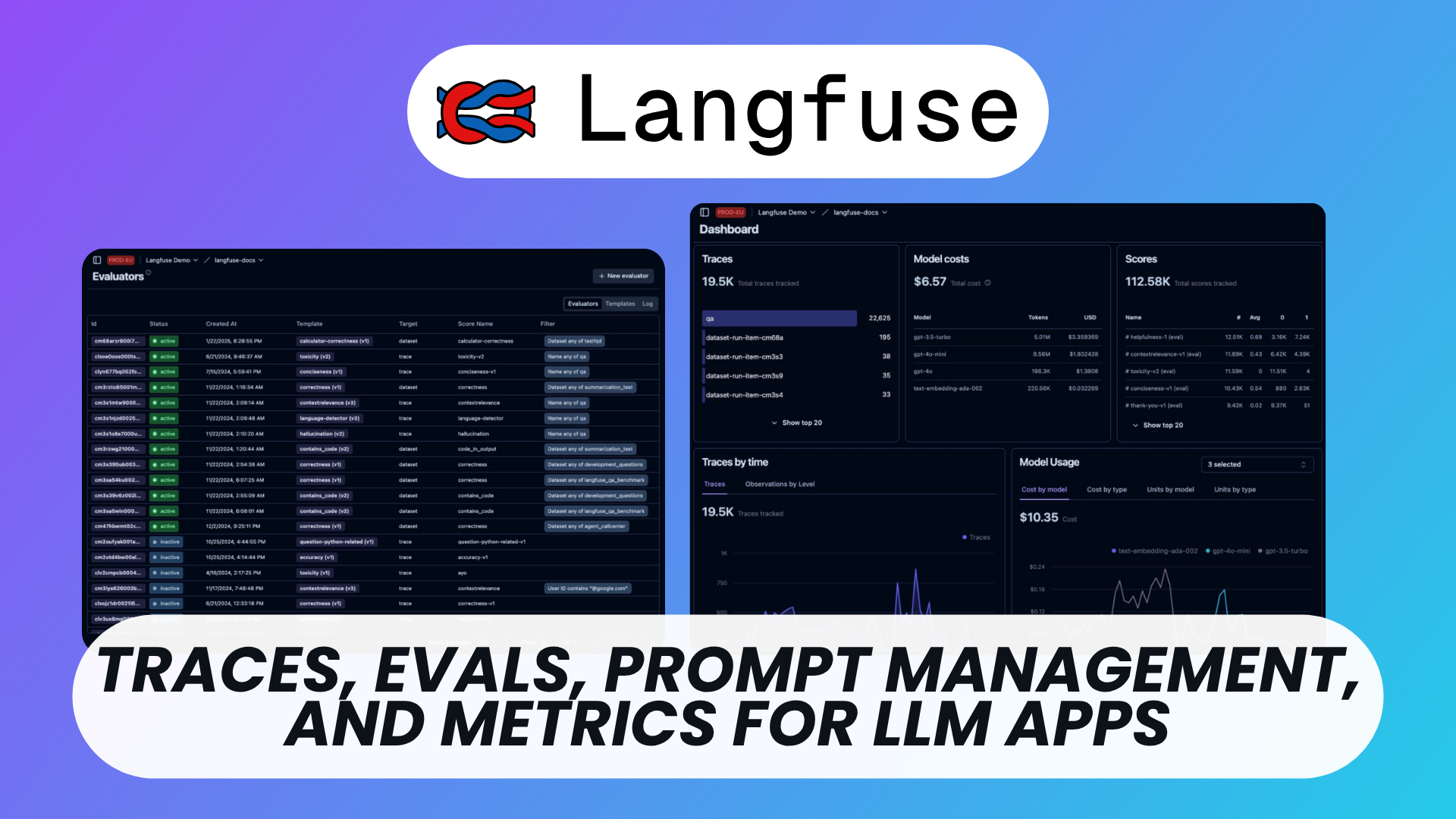

Learn the fundamentals of LLM monitoring and observability, from tracing to evaluation and setting up a dashboard using Langfuse. Let's start with an example: you have built a complex LLM application that responds to user queries about a specific domain. Discover how Langfuse brings visibility, organization, and quality control to LLM apps, and follow this tutorial to build a complete document Q&A project.

Langfuse provides open source application tracing and observability for LLM apps: capture traces, monitor latency, track costs, and debug issues across OpenAI, LangChain, LlamaIndex, and more. With Langfuse you can capture all your LLM evaluations in one place.

Error analysis provides this crucial context. This guide describes a four-step process to identify, categorize, and quantify your application's unique failure modes.
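To make the "quantify" part concrete, here is a minimal sketch in plain Python. It assumes you have already reviewed a batch of traces and attached failure-mode labels to each one. The `AnnotatedTrace` type, the `quantify_failure_modes` helper, and the label names are hypothetical illustrations, not part of the Langfuse SDK.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AnnotatedTrace:
    """A reviewed trace plus the failure-mode labels a reviewer assigned (hypothetical type)."""
    trace_id: str
    failure_modes: list[str] = field(default_factory=list)


def quantify_failure_modes(traces):
    """Return (label, count, share_of_all_traces) tuples, most frequent first."""
    total = len(traces)
    if total == 0:
        return []
    counts = Counter()
    for trace in traces:
        # Count each label at most once per trace, even if it was tagged twice.
        counts.update(set(trace.failure_modes))
    # Sort by count descending, then by label so ties are deterministic.
    ordered = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(label, n, n / total) for label, n in ordered]


# Example annotations; the labels are made up for illustration.
traces = [
    AnnotatedTrace("t1", ["retrieval_miss"]),
    AnnotatedTrace("t2", ["hallucination", "retrieval_miss"]),
    AnnotatedTrace("t3", []),
    AnnotatedTrace("t4", ["formatting"]),
]

report = quantify_failure_modes(traces)
for label, count, share in report:
    print(f"{label}: {count} traces ({share:.0%})")
```

Sorting by frequency puts the most common failure mode at the top, which is usually the one to address first.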

Langfuse is an open source LLM engineering platform (GitHub) that helps teams collaboratively debug, analyze, and iterate on their LLM applications. It offers traces, evals, prompt management, and metrics to debug and improve your LLM application, and all platform features are natively integrated to accelerate the development workflow.

Evaluations are key to the LLM application development workflow, and Langfuse adapts to your needs. It supports LLM-as-a-judge, user feedback collection, manual labeling, and custom evaluation pipelines via APIs/SDKs. Datasets enable test sets and benchmarks for evaluating your LLM application. Taken together, this is a practical guide to systematically evaluating LLM applications through observability, error analysis, testing, synthetic datasets, and experiments.
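The idea of running a test set through an application and scoring each output can be sketched without any SDK. The example below is a plain-Python illustration under stated assumptions: `run_experiment`, `contains_expected`, and `toy_app` are hypothetical names, the dataset is invented, and none of this reflects the Langfuse API. In a real setup the application call would hit your LLM pipeline and the results would be recorded in your evaluation tooling.

```python
def run_experiment(app, dataset, evaluator):
    """Run every test case through `app` and score the output with `evaluator`.

    Returns the per-case results and the overall pass rate.
    """
    results = []
    for case in dataset:
        output = app(case["input"])
        results.append(
            {
                "input": case["input"],
                "output": output,
                "passed": evaluator(output, case["expected"]),
            }
        )
    pass_rate = sum(r["passed"] for r in results) / len(results) if results else 0.0
    return results, pass_rate


def contains_expected(output, expected):
    """A deliberately simple custom evaluator: case-insensitive substring match."""
    return expected.lower() in output.lower()


def toy_app(question):
    """Stand-in for an LLM application (hypothetical; returns canned answers)."""
    answers = {
        "What is Langfuse?": "Langfuse is an open source LLM engineering platform.",
    }
    return answers.get(question, "I don't know.")


# A tiny invented test set: each case pairs an input with an expected phrase.
dataset = [
    {"input": "What is Langfuse?", "expected": "open source"},
    {"input": "Who maintains it?", "expected": "Langfuse team"},
]

results, pass_rate = run_experiment(toy_app, dataset, contains_expected)
print(f"pass rate: {pass_rate:.0%}")
```

Because the evaluator is a function argument, a stricter check or an LLM-as-a-judge call can be swapped in without changing the loop.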
