LLM-Driven Cold Start Optimization in Serverless Computing | Uplatz

Distributed Computing Strategies to Accelerate LLM Adoption

Cold starts are one of the biggest performance challenges in serverless architectures, causing latency spikes and inconsistent user experiences. But with th...

Given a serverless function f, its workload w, and environment h, determine the optimal configuration parameters θ* that minimize cold start latency l, subject to cost and resource constraints, using LLM-guided optimization.
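One way to write the problem statement above as a constrained optimization is sketched below. The set of admissible configurations Θ, the cost and resource functions C and R, and the budgets C_max and R_max are assumptions introduced for illustration; the source names only f, w, h, θ*, and l.

$$
\theta^{*} = \arg\min_{\theta \in \Theta} \; l(f, w, h; \theta)
\quad \text{subject to} \quad C(\theta) \le C_{\max}, \quad R(\theta) \le R_{\max}
$$

Under this reading, LLM-guided optimization would amount to using the model to propose candidate values of θ that are then checked against the constraints; the source does not spell out the procedure.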

Cold Start Optimization: A Guide for Developers

To address this problem, we present HydraServe, a serverless LLM serving system designed to minimize cold start latency in public clouds. HydraServe proactively distributes models across servers so they can be fetched quickly, and overlaps cold start stages within workers to reduce startup latency.

Unfortunately, the existing solutions for alleviating the cold start delay are not resource efficient, because they follow a fixed policy over time. This article therefore proposes a novel two-layer adaptive approach to tackle the issue.

The cold start problem in serverless computing leads to increased latency when functions are invoked after being idle. This paper proposes a predictive pre-warming strategy that leverages machine learning and historical data analysis to mitigate the cold start problem.

As for serverless computing challenges, they discussed start-up latency and described the existing solutions to the cold start challenge, such as the use of cache technology, snapshots, sandboxes, etc.
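The predictive pre-warming idea mentioned above can be illustrated with a minimal sketch. It replaces the machine learning model with a plain moving average of recent inter-arrival gaps, which is an assumption made to keep the example short. The function names and the `startup_seconds` and `keep_alive_seconds` parameters are hypothetical and do not come from the cited paper or from any cloud provider's API.

```python
from statistics import mean
from typing import List, Optional


def predict_next_arrival(timestamps: List[float], window: int = 5) -> Optional[float]:
    """Predict the next invocation time as the last arrival plus the mean
    of the most recent inter-arrival gaps (a stand-in for a learned model)."""
    if len(timestamps) < 2:
        return None  # not enough history to estimate a gap
    recent = timestamps[-(window + 1):]
    gaps = [later - earlier for earlier, later in zip(recent, recent[1:])]
    return timestamps[-1] + mean(gaps)


def should_prewarm(
    now: float,
    timestamps: List[float],
    startup_seconds: float,
    keep_alive_seconds: float,
) -> bool:
    """Return True when the container has presumably gone cold and the
    predicted next call is no further away than the startup time."""
    predicted = predict_next_arrival(timestamps)
    if predicted is None:
        return False
    # Assumed platform behavior: a container is reclaimed after being idle
    # for keep_alive_seconds since the last invocation.
    container_is_cold = now >= timestamps[-1] + keep_alive_seconds
    # An overdue prediction (predicted < now) also triggers pre-warming.
    return container_is_cold and (predicted - now) <= startup_seconds
```

With invocations at 0, 60, 120, and 180 seconds, the sketch predicts the next call at 240 seconds, so a scheduler polling `should_prewarm` with a 3-second startup time would begin warming a container from about 237 seconds onward. A real system would swap the moving average for a trained predictor and account for prediction error.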

In this section, we discuss some of the most significant strategies for optimizing cold start latency in serverless computing. We present the algorithms, formulas, and technical details related to different optimization techniques derived from the literature.

In this paper, an optimized concurrent provisioning methodology for the AWS Lambda environment has been proposed, along with a cold start prediction technique based on deep learning.

Serverless computing is envisioned as the de facto standard for next-generation cloud computing. However, the cold start dilemma has impeded its adoption by delay-sensitive and burst...

This project introduces a solution to reduce cold start latency in serverless applications using an LRU warm container approach. It helps to speed up the startup time of serverless functions (such as AWS Lambda, Google Cloud Functions, etc.) by keeping containers "warm" and ready for use.
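The LRU warm container approach described above can be sketched as a small in-memory pool. This is an illustrative model, not the project's actual code: the `WarmContainerPool` class, its `start_container` hook, and the hit and miss counters are hypothetical names, and a real platform would manage container processes rather than Python objects.

```python
from collections import OrderedDict
from typing import Callable, List


class WarmContainerPool:
    """Keeps at most `capacity` containers warm and evicts the least
    recently used one when a new container has to be started."""

    def __init__(self, capacity: int, start_container: Callable[[str], object]):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.start_container = start_container  # hypothetical cold-start hook
        self._warm: "OrderedDict[str, object]" = OrderedDict()
        self.cold_starts = 0
        self.warm_hits = 0

    def acquire(self, function_name: str) -> object:
        """Return a container for the function, reusing a warm one if present."""
        if function_name in self._warm:
            # Warm hit: mark this container as most recently used.
            self._warm.move_to_end(function_name)
            self.warm_hits += 1
            return self._warm[function_name]
        # Cold start: pay the startup cost, then keep the container warm.
        self.cold_starts += 1
        container = self.start_container(function_name)
        self._warm[function_name] = container
        if len(self._warm) > self.capacity:
            # Evict the least recently used container (front of the dict).
            self._warm.popitem(last=False)
        return container

    def warm_functions(self) -> List[str]:
        """Function names ordered from least to most recently used."""
        return list(self._warm)
```

For example, a pool of capacity 2 serving the call sequence a, b, a, c, b incurs four cold starts and one warm hit, and ends with c and b warm. The trade-off is that capacity bounds the memory spent on idle containers, while recency decides which functions keep their low-latency path.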

A Survey on the Cold Start Latency Approaches in Serverless Computing

Reinforcement Learning (RL) Augmented Cold Start Frequency Reduction in ...

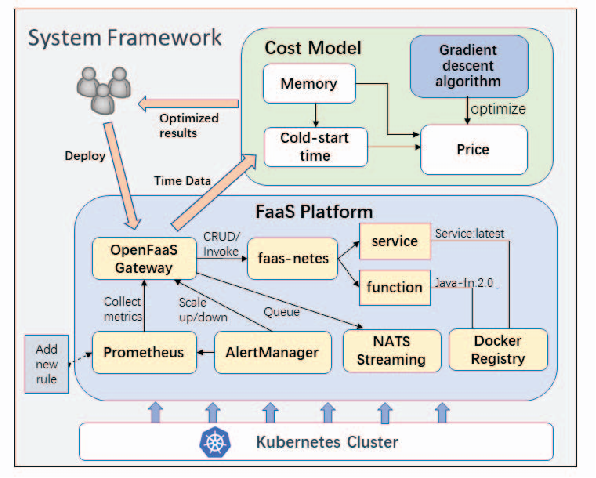

Figure 1, from "Cost-Sensitive Cold Start Latency Optimization Mechanism"
