Lecture GPU 17 (PDF): Graphics Processing Unit, CPU Cache

The redesigned Kepler host-to-GPU workflow introduces a new Grid Management Unit, which manages the actively dispatching grids, can pause dispatch, and holds pending and suspended grids.

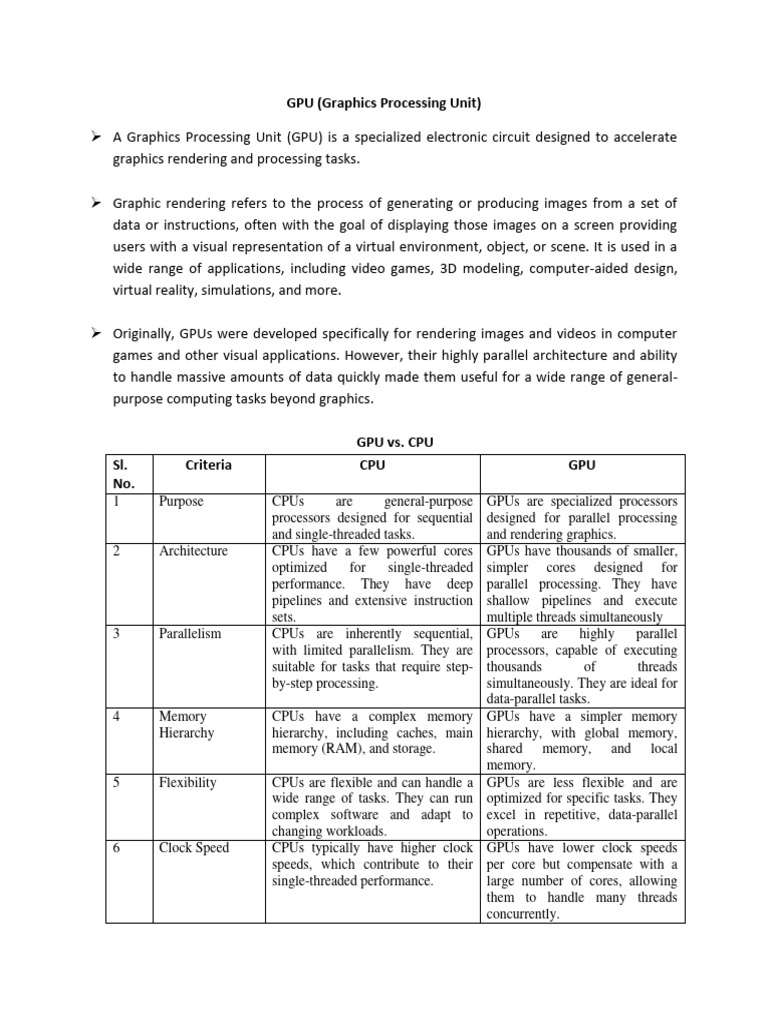

The document discusses the distinctive architecture of GPUs, highlighting how they excel at data-parallel execution through features such as stream processors, a hierarchical cache system, and hardware scheduling. This book discusses graphics processing units, which are specialized units found in most modern computer architectures. Although operations on graphics data can be performed in software on regular arithmetic logic units (ALUs), the hardware approach is much faster. The processors connect to four 64-bit-wide DRAM partitions via an interconnection network. Each streaming multiprocessor (SM) has eight streaming processor (SP) cores, two special function units (SFUs), instruction and constant caches, a multithreaded instruction unit, and a shared memory.
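
The data-parallel execution described above can be illustrated with a minimal CUDA sketch in which every thread applies the same operation to one independent element. The names `saxpy_kernel` and `saxpy_on_gpu` are hypothetical and do not come from the lecture. The block size of 256 is an arbitrary assumption, the two input vectors are assumed to have the same length, and error checking is omitted for brevity.

```cuda
#include <cuda_runtime.h>
#include <vector>

// Each thread computes one output element: the same instruction stream
// applied independently to many data items (data parallelism).
__global__ void saxpy_kernel(int n, float a, const float* x, float* y) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;  // global element index
    if (i < n) {                                    // guard the last partial block
        y[i] = a * x[i] + y[i];
    }
}

// Hypothetical host wrapper (illustration only): copies the inputs to the
// device, launches the kernel, and returns a * x + y.
// Assumes x.size() == y.size(); CUDA error checking is omitted.
std::vector<float> saxpy_on_gpu(float a, const std::vector<float>& x,
                                std::vector<float> y) {
    int n = static_cast<int>(x.size());
    if (n == 0) return y;
    size_t bytes = static_cast<size_t>(n) * sizeof(float);

    float* d_x = nullptr;
    float* d_y = nullptr;
    cudaMalloc(&d_x, bytes);
    cudaMalloc(&d_y, bytes);
    cudaMemcpy(d_x, x.data(), bytes, cudaMemcpyHostToDevice);
    cudaMemcpy(d_y, y.data(), bytes, cudaMemcpyHostToDevice);

    int threads = 256;                              // assumed block size
    int blocks = (n + threads - 1) / threads;       // round up to cover n
    saxpy_kernel<<<blocks, threads>>>(n, a, d_x, d_y);

    // This blocking copy also waits for the kernel to finish.
    cudaMemcpy(y.data(), d_y, bytes, cudaMemcpyDeviceToHost);
    cudaFree(d_x);
    cudaFree(d_y);
    return y;
}
```

Calling `saxpy_on_gpu(2.0f, x, y)` from a host `main` returns a vector holding `2 * x[i] + y[i]` for each element. The hardware scheduler decides how the thread blocks are distributed across the SMs.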

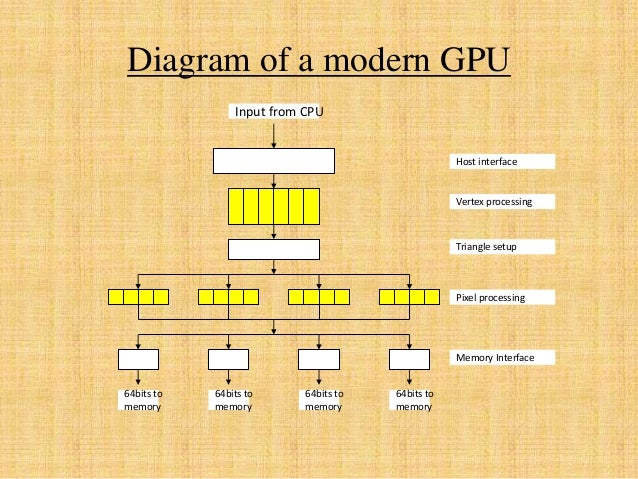

The warp shuffle instruction allows any thread in a warp to read the registers of any other thread in the same warp in a single step, instead of going through shared memory with separate load and store instructions. High-level languages (CUDA) are hard to use. The document discusses GPU architectures from a CPU perspective. It begins with an introduction to data parallelism and how graphics workloads that involve identical, independent computations on multiple data inputs can be executed in parallel on GPUs. Although all data are cached now, the GPU may still optimize texture-like memory reads (e.g., with prefetches); therefore, it may still be beneficial to use texture memory.
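
The register exchange between threads of a warp described above can be sketched as a warp-level sum. This is a minimal illustration, not the lecture's own code. It assumes CUDA 9 or later (for `__shfl_down_sync`), a device of compute capability 3.0 or higher, and a warp size of 32. The names `warp_sum_kernel` and `warp_sum_on_gpu` are hypothetical, and error checking is omitted.

```cuda
#include <cuda_runtime.h>
#include <algorithm>
#include <vector>

// Sums the 32 values held by a single warp using register-to-register
// shuffles; no shared memory and no separate load/store instructions.
__global__ void warp_sum_kernel(const float* in, float* out) {
    float v = in[threadIdx.x];  // one value per lane, kept in a register

    // At each step a lane reads the register of the lane `offset` positions
    // above it and accumulates. After 5 steps lane 0 holds the total.
    for (int offset = 16; offset > 0; offset >>= 1) {
        v += __shfl_down_sync(0xffffffffu, v, offset);
    }

    if (threadIdx.x == 0) {
        *out = v;
    }
}

// Hypothetical host wrapper (illustration only): sums up to 32 floats on
// the GPU. Shorter inputs are padded with zeros; extra elements are ignored.
float warp_sum_on_gpu(const std::vector<float>& values) {
    const int kWarpSize = 32;   // assumed warp size
    std::vector<float> padded(kWarpSize, 0.0f);
    size_t count = std::min(values.size(), padded.size());
    std::copy(values.begin(), values.begin() + count, padded.begin());

    float* d_in = nullptr;
    float* d_out = nullptr;
    cudaMalloc(&d_in, kWarpSize * sizeof(float));
    cudaMalloc(&d_out, sizeof(float));
    cudaMemcpy(d_in, padded.data(), kWarpSize * sizeof(float),
               cudaMemcpyHostToDevice);

    warp_sum_kernel<<<1, kWarpSize>>>(d_in, d_out);  // exactly one warp

    float result = 0.0f;
    // This blocking copy also waits for the kernel to finish.
    cudaMemcpy(&result, d_out, sizeof(float), cudaMemcpyDeviceToHost);
    cudaFree(d_in);
    cudaFree(d_out);
    return result;
}
```

A shared-memory version of the same reduction would need a store, a synchronization, and a load at every step; the shuffle replaces each such round trip with one instruction.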

