LCIP: Loss-Controlled Inverse Projection of High-Dimensional Data (Supplementary Video)

Supplementary video for the research paper LCIP: Loss-Controlled Inverse Projection of High-Dimensional Data [arXiv:2602.11141]. In this work, we enable inverse projections to represent surface-like structures whose location in data space is not fixed but controllable by the user.

This GUI is designed for our proposed controllable inverse projection method. You can control the inverse projection locally by dragging the sliders and see the changes in real time. LCIP introduces a loss-controlled inverse projection framework that separates the information preserved by a projection from the information lost during projection, enabling a user-driven sweep of the high-dimensional data space. It is a controllable inverse projection system for high-dimensional image data: LCIP adds a user-controlled latent direction so that exploration is not limited to a single fixed reconstruction surface. The core problem LCIP addresses is this: when you project high-dimensional image data (all the pixels and colors) down to lower dimensions, you lose information. Traditional inverse projection, the process of going backward, has no way to recover what was lost, so you end up with poor reconstructions.
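The split between preserved and lost information can be illustrated with a deliberately simple linear toy. This is only a sketch under stated assumptions, not the LCIP model: PCA stands in for the projection, the "lost" information is the component along one discarded principal direction, and the names `fit_pca_split`, `project`, and `controlled_inverse` are hypothetical helpers introduced for illustration. Setting the control value `z` to zero gives the usual fixed reconstruction surface (here a plane); changing `z` moves the reconstruction off that surface without changing where the point lands in 2D.

```python
import numpy as np

def fit_pca_split(X, n_kept=2, n_lost=1):
    """Split principal directions into those kept by the projection and those lost."""
    mean = X.mean(axis=0)
    # Rows of Vt are orthonormal principal directions, sorted by variance.
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    kept = Vt[:n_kept]                    # directions the 2D projection preserves
    lost = Vt[n_kept:n_kept + n_lost]     # directions the projection discards
    return mean, kept, lost

def project(X, mean, kept):
    """Linear projection to 2D (toy stand-in for a general projection)."""
    return (X - mean) @ kept.T

def controlled_inverse(Y, z, mean, kept, lost):
    """Inverse projection with a user-set offset z along the lost direction(s)."""
    Y = np.atleast_2d(Y)
    z = np.broadcast_to(np.atleast_1d(z), (Y.shape[0], lost.shape[0]))
    return mean + Y @ kept + z @ lost

# Synthetic 5D data with decreasing variance per axis (assumption for the demo).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5)) * np.array([5.0, 3.0, 2.0, 0.5, 0.1])

mean, kept, lost = fit_pca_split(X)
Y = project(X, mean, kept)

X_fixed = controlled_inverse(Y, 0.0, mean, kept, lost)  # fixed surface (z = 0)
X_swept = controlled_inverse(Y, 1.5, mean, kept, lost)  # user moves the "slider"
```

Both `X_fixed` and `X_swept` project back to the same 2D points, which is the property that makes the extra control a sweep through information the projection cannot see.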

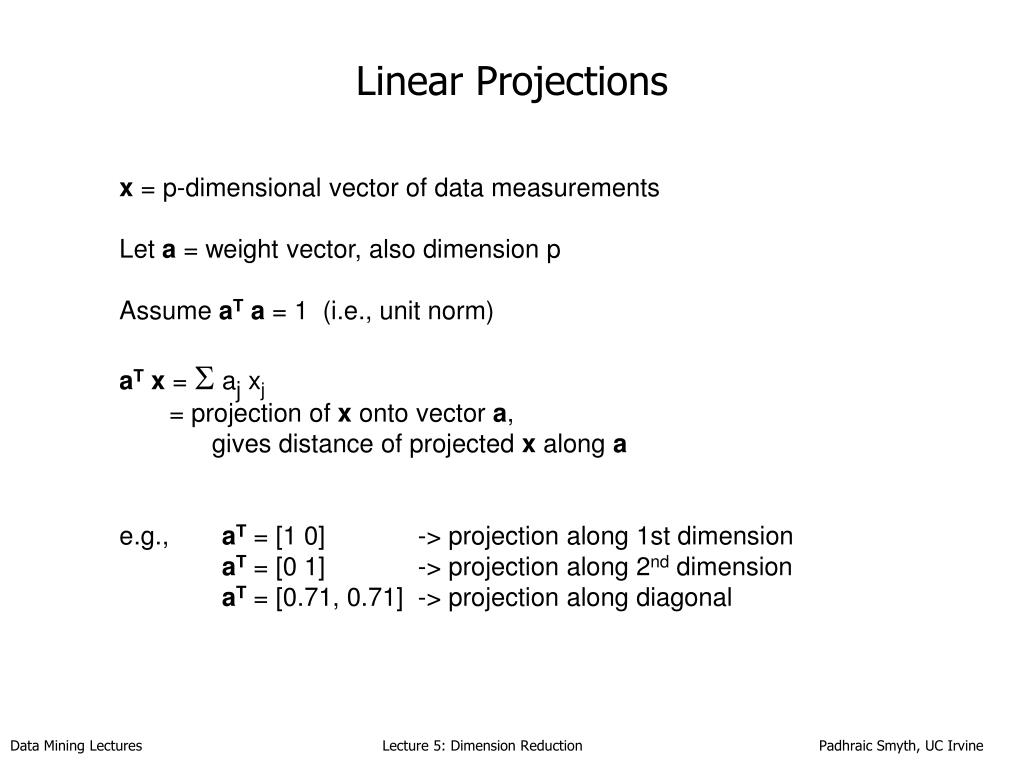

Projection (or dimensionality reduction) methods $P$ aim to map high-dimensional data to, typically, 2D scatterplots for visual exploration. Inverse projection methods $P^{-1}$ aim to map this 2D s… Based on a given projection, we train autoencoders (AEs) to learn a mapping into 2D space and an inverse mapping into the original space. We perform a quantitative and qualitative comparison on four datasets of varying dimensionality and pattern complexity using t-SNE.

We should stop trying to salvage lossy projections and just admit that once the dimensionality is gone, it's time to kill the process and start a new embedding. Our method works generically for any $P$ technique and dataset, is controlled by two intuitive user-set parameters, and is simple to implement. We demonstrate it by an extensive application involving image manipulation for style transfer.
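The idea of learning an inverse mapping from a given projection can be sketched in a few lines. The example below is a minimal stand-in, not the autoencoder setup described above: it assumes a random linear map as the "given" projection and fits an affine inverse by ordinary least squares. The helper names `fit_affine_inverse` and `apply_inverse` are hypothetical, and the data is synthetic.

```python
import numpy as np

def fit_affine_inverse(Y, X):
    """Fit X ~ [Y, 1] @ coef by least squares (linear stand-in for a learned decoder)."""
    design = np.hstack([Y, np.ones((Y.shape[0], 1))])
    coef, *_ = np.linalg.lstsq(design, X, rcond=None)
    return coef

def apply_inverse(Y, coef):
    """Map 2D points back to data space with the fitted affine inverse."""
    design = np.hstack([Y, np.ones((Y.shape[0], 1))])
    return design @ coef

rng = np.random.default_rng(0)

# Synthetic 10D data lying near a 2D plane, plus small noise (assumption).
latent = rng.normal(size=(300, 2))
X = latent @ rng.normal(size=(2, 10)) + 0.05 * rng.normal(size=(300, 10))

# The "given" projection: any method producing 2D coordinates would do here.
Y = X @ rng.normal(size=(10, 2))

coef = fit_affine_inverse(Y, X)
X_hat = apply_inverse(Y, coef)

# Compare against the trivial inverse that always returns the data mean.
mse = np.mean((X - X_hat) ** 2)
baseline = np.mean((X - X.mean(axis=0)) ** 2)
```

For a nonlinear projection such as t-SNE, the affine model would be replaced by a nonlinear regressor, but the training signal is the same: pairs of 2D positions and the original data points they came from.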


