Lasso Regression Explained

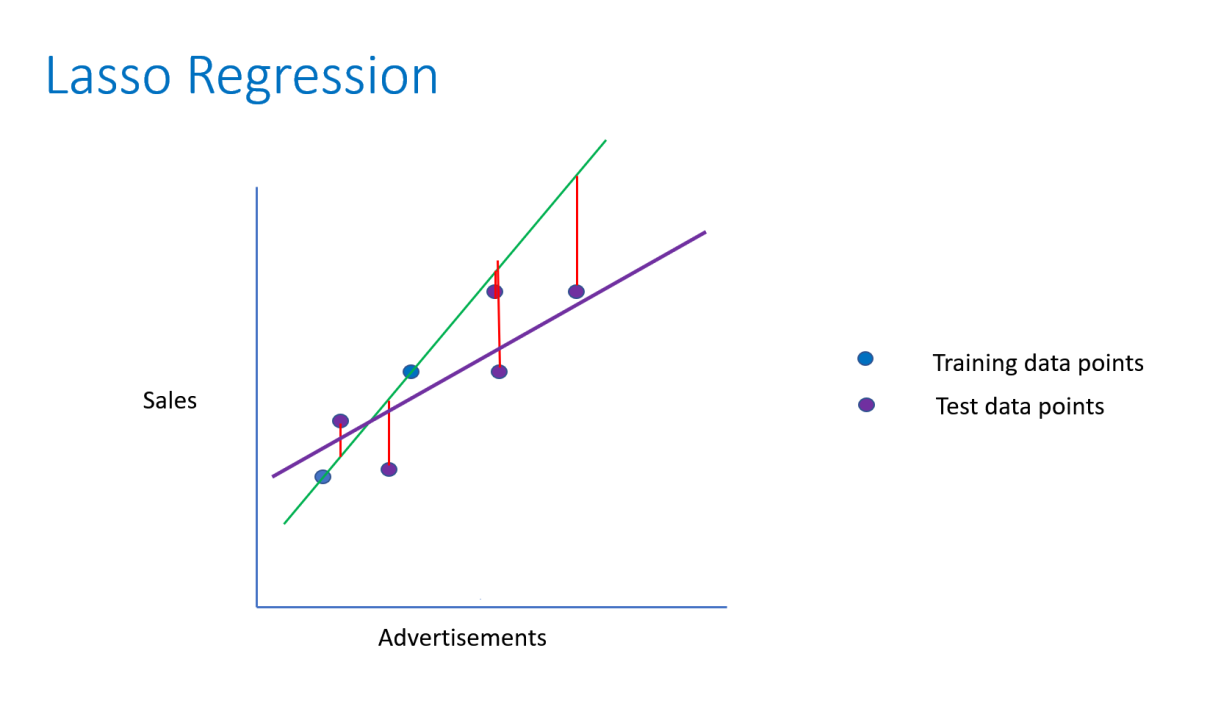

Introduction To Lasso Regression

Lasso regression (least absolute shrinkage and selection operator) is a linear regression technique with L1 regularization: it adds a penalty on the absolute values of the coefficients to the least-squares loss, which improves model generalization. Lasso regression is a versatile tool for building predictive models. Because the penalty can shrink some coefficients exactly to zero, it performs feature selection while helping to prevent overfitting, which makes it a valuable addition to any data scientist’s toolkit.
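For reference, the penalized objective is commonly written as follows. The notation is an assumption of this sketch rather than something defined in the text: $n$ observations, $p$ predictors, response $y_i$, predictor values $x_{ij}$, intercept $\beta_0$, and a tuning parameter $\lambda \ge 0$.

$$
\hat{\beta}^{\text{lasso}} = \arg\min_{\beta_0,\,\beta} \left\{ \frac{1}{2n} \sum_{i=1}^{n} \Big( y_i - \beta_0 - \sum_{j=1}^{p} x_{ij}\beta_j \Big)^2 + \lambda \sum_{j=1}^{p} |\beta_j| \right\}
$$

Setting $\lambda = 0$ recovers ordinary least squares, while larger values of $\lambda$ push more coefficients to exactly zero. The scaling of the loss term ($1/(2n)$ here) varies between references and software, so a given value of $\lambda$ is not directly comparable across them.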

Lasso Regression Clearly Explained

This tutorial provides an introduction to lasso regression, including an explanation and examples. Lasso, which stands for least absolute shrinkage and selection operator and is also known as L1 regularization, is a popular technique in statistical modeling and machine learning for estimating the relationships between variables and making predictions. It performs both feature selection and regularization in order to enhance the prediction accuracy and interpretability of the statistical model. In this article, you will learn about lasso regression, the differences between lasso and ridge, and how you can start using lasso regression in your own machine learning projects.
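As a starting point, the contrast between lasso and ridge can be sketched with scikit-learn on synthetic data. The data, the `alpha` values, and the variable names below are illustrative assumptions, not recommendations; in practice the penalty strength is usually chosen by cross-validation (for example with `LassoCV`).

```python
import numpy as np
from sklearn.linear_model import Lasso, Ridge

# Synthetic data (an assumption for illustration): 10 predictors on a
# common scale, of which only the first three influence the response.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 10))
true_coef = np.array([3.0, -2.0, 1.5] + [0.0] * 7)
y = X @ true_coef + rng.normal(scale=0.5, size=200)

# alpha sets the penalty strength in both models. The two estimators
# scale their loss terms differently, so the values are not comparable.
lasso = Lasso(alpha=0.2).fit(X, y)
ridge = Ridge(alpha=1.0).fit(X, y)

# Count coefficients that were set exactly to zero by each model.
n_zero_lasso = int(np.sum(lasso.coef_ == 0.0))
n_zero_ridge = int(np.sum(ridge.coef_ == 0.0))

print("lasso coefficients:", np.round(lasso.coef_, 2))
print("ridge coefficients:", np.round(ridge.coef_, 2))
print("exact zeros (lasso, ridge):", n_zero_lasso, n_zero_ridge)
```

Lasso typically zeroes out the irrelevant predictors here, whereas ridge only shrinks them toward zero. With real data, predictors are normally standardized first so that the penalty treats them on a common scale.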

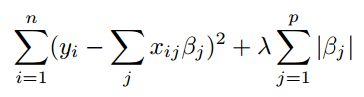

Lasso Regression Simple Definition Statistics How To

Lasso was originally formulated for linear regression models. This simple case reveals a substantial amount about the estimator, including its relationship to ridge regression and best subset selection and the connection between lasso coefficient estimates and so-called soft thresholding. Understanding lasso regression requires a deep dive into its mathematical formulation and implications: the formulation of the L1 penalty, the derivation of its objective function, and its geometric interpretation.

Lasso regression can be illustrated on a data frame called “Hitters”, with 20 variables and 322 observations of major league players.
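The link between lasso, ridge regression, best subset selection, and soft thresholding is easiest to see in the special case of an orthonormal design, where each estimator is a simple function of the ordinary least squares coefficient. The sketch below assumes that special case. The function names, the example coefficients, and the threshold value are hypothetical, and the exact relation between the threshold and the penalty parameter depends on how the objective is scaled.

```python
def soft_threshold(b, t):
    """Lasso: move b toward zero by t; return zero when |b| <= t."""
    if b > t:
        return b - t
    if b < -t:
        return b + t
    return 0.0


def hard_threshold(b, t):
    """Best subset selection: keep b unchanged when |b| > t, else drop it."""
    return b if abs(b) > t else 0.0


def ridge_shrink(b, lam):
    """Ridge: scale b by a constant factor; a nonzero b stays nonzero."""
    return b / (1.0 + lam)


ols = [3.0, -0.4, 1.2, 0.1]  # hypothetical OLS coefficients
t = 0.5                      # hypothetical threshold / penalty value

lasso_est = [soft_threshold(b, t) for b in ols]
subset_est = [hard_threshold(b, t) for b in ols]
ridge_est = [ridge_shrink(b, t) for b in ols]

for name, est in [("lasso", lasso_est), ("best subset", subset_est), ("ridge", ridge_est)]:
    print(name, [round(v, 3) for v in est])
```

Small coefficients are set to zero by both lasso and best subset selection, but lasso also shrinks the surviving ones, while ridge rescales every coefficient without removing any.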

