L76 Implement LRU Cache Linked List

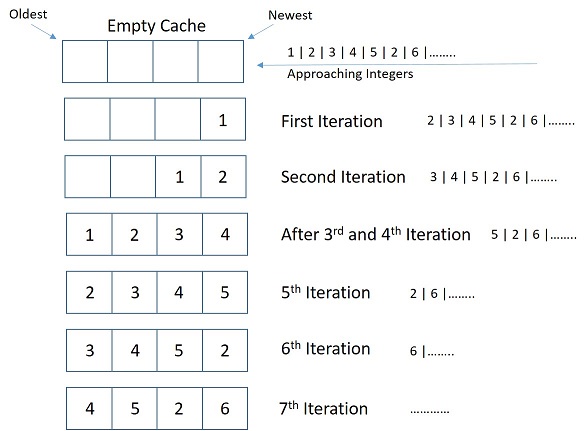

GitHub Zlaazlaa LRU Cache Implement An Implement Of LRU Cache Based

The basic idea behind implementing an LRU (least recently used) cache is to pair a hash map with a doubly linked list. The hash map gives constant-time lookup by key, and the doubly linked list keeps entries ordered by how recently they were used, so that moving an entry on access and removing the oldest entry are also constant-time operations.

LRU Cache In Python Using Doubly Linked List

The LRU cache is a valuable data structure for implementing efficient caching mechanisms. It allows fast insertion, retrieval, and updating of key-value pairs while also limiting the cache size.

Intuition: to implement an LRU cache efficiently, we can combine a hash map with a doubly linked list. The doubly linked list holds the entries and maintains their order based on usage. The hash map holds each key together with the address of its node in the doubly linked list, so any entry can be found, moved, or removed without traversing the list.

Educative's "Implement LRU Cache" tutorial guides you through designing a least recently used (LRU) cache using a hash map and a doubly linked list, enabling get and put in O(1) time. It covers eviction logic, edge cases, and language-specific solutions.
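The hash map plus doubly linked list design described above can be sketched as follows. This is a minimal illustration, not a reference implementation: the names `Node`, `LRUCache`, `_unlink`, and `_push_front` are hypothetical, the two sentinel nodes are one common way to avoid empty-list special cases, and returning -1 on a miss is an assumption borrowed from the usual interview formulation of the problem.

```python
class Node:
    """One cache entry; also used for the two sentinel nodes."""

    def __init__(self, key=None, value=None):
        self.key = key
        self.value = value
        self.prev = None
        self.next = None


class LRUCache:
    """Hypothetical sketch: dict for lookup, doubly linked list for recency."""

    def __init__(self, capacity):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self.nodes = {}            # key -> Node (the "address" of the entry)
        self.head = Node()         # sentinel; head.next is the most recent entry
        self.tail = Node()         # sentinel; tail.prev is the least recent entry
        self.head.next = self.tail
        self.tail.prev = self.head

    def _unlink(self, node):
        # Detach a node from the list in O(1) using its neighbours.
        node.prev.next = node.next
        node.next.prev = node.prev

    def _push_front(self, node):
        # Insert a node right after the head sentinel (most recently used).
        node.prev = self.head
        node.next = self.head.next
        self.head.next.prev = node
        self.head.next = node

    def get(self, key):
        node = self.nodes.get(key)
        if node is None:
            return -1              # assumed miss value
        self._unlink(node)
        self._push_front(node)     # a read counts as a use
        return node.value

    def put(self, key, value):
        node = self.nodes.get(key)
        if node is not None:
            # Existing key: update the value and mark it most recent.
            node.value = value
            self._unlink(node)
            self._push_front(node)
            return
        if len(self.nodes) >= self.capacity:
            # Cache is full: evict the least recently used entry.
            lru = self.tail.prev
            self._unlink(lru)
            del self.nodes[lru.key]
        node = Node(key, value)
        self.nodes[key] = node
        self._push_front(node)
```

Each node stores its own key so that eviction can delete the matching hash map entry without a search. Both `get` and `put` touch only a fixed number of pointers and one dictionary entry, which is where the O(1) bound comes from.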

Implement Least Recently Used LRU Cache

The least recently used (LRU) cache is a popular caching strategy that discards the least recently used items first to make room for new elements when the cache is full.

In Java, LinkedHashMap internally uses a doubly linked list to keep track of element order. It can be set to insertion order (the default) or access order (by passing true for the accessOrder constructor argument). With access order, whenever you get or put a key, that entry is moved to the end (most recent).

While deque stands for double-ended queue, it essentially functions as a doubly linked list with efficient operations on both ends.

Design a data structure that follows the constraints of a least recently used (LRU) cache. Implement the LRUCache class: LRUCache(int capacity) initializes the LRU cache with a positive size capacity. int get(int key) returns the value of the key if the key exists, otherwise returns -1.
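Python has no LinkedHashMap, but `collections.OrderedDict` offers a comparable access-order pattern through `move_to_end` and `popitem(last=False)`. The sketch below swaps that in as an analogue; it is not the Java API described above, and the class name `OrderedDictLRUCache` is hypothetical. It assumes the interface from the problem statement, with `get` returning -1 on a miss.

```python
from collections import OrderedDict


class OrderedDictLRUCache:
    """Hypothetical sketch of an LRU cache on top of OrderedDict."""

    def __init__(self, capacity):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self.entries = OrderedDict()   # oldest entry first, newest last

    def get(self, key):
        if key not in self.entries:
            return -1
        # Mark the key as most recently used by moving it to the end.
        self.entries.move_to_end(key)
        return self.entries[key]

    def put(self, key, value):
        if key in self.entries:
            # Updating an existing key also counts as a use.
            self.entries.move_to_end(key)
        self.entries[key] = value
        if len(self.entries) > self.capacity:
            # Drop the entry at the front, which is the least recently used.
            self.entries.popitem(last=False)
```

As with access-ordered LinkedHashMap, the most recent entry sits at the end of the ordering and eviction removes from the front. A plain `deque` is less convenient here because removing an arbitrary element from the middle takes linear time.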

