L1 Vs L2 Regularization

Why Do L1 And L2 Regularizations Reduce Weights Differently By Anna

In this article, we will focus on two regularization techniques, L1 and L2, explain their differences, and show how to apply them in Python. What is regularization, and why is it important? In simple terms, regularizing a model means changing its learning behavior during the training phase. L1 and L2 regularization are two techniques that help prevent overfitting, and they go about it in different ways.

Regularization L1 Vs L2 And When To Use Them Data Interview Question

What is the difference between L1 and L2 regularization? The main difference lies in the penalty term added to the loss function during training: L1 adds a multiple of the sum of the absolute values of the weights, while L2 adds a multiple of the sum of their squares. A detailed explanation of the two covers their theoretical insights, geometric interpretations, and practical implications for machine learning models. It also helps to understand why regularization matters in machine learning and how to apply each method for better model performance.
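The penalty terms added to the loss function can be sketched in a few lines of plain Python. This is a minimal illustration, not code taken from any of the articles listed here: the function names `l1_penalty`, `l2_penalty`, and `regularized_loss`, the strength parameter `lam`, and the sample weights are all assumptions chosen for the example.

```python
def l1_penalty(weights, lam):
    """L1 (lasso) penalty: lam times the sum of absolute weights."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """L2 (ridge) penalty: lam times the sum of squared weights."""
    return lam * sum(w * w for w in weights)


def regularized_loss(data_loss, weights, lam, kind="l2"):
    """Add the chosen penalty to an already-computed data loss."""
    if kind == "l1":
        return data_loss + l1_penalty(weights, lam)
    if kind == "l2":
        return data_loss + l2_penalty(weights, lam)
    raise ValueError(f"unknown penalty kind: {kind!r}")


# Illustrative weights only (hypothetical, not fitted to any data).
weights = [0.5, -2.0, 0.0, 3.0]
print("L1 penalty:", l1_penalty(weights, lam=0.1))
print("L2 penalty:", l2_penalty(weights, lam=0.1))
```

Conventions vary between libraries (some scale the L2 term by one half, for instance), so the exact constants here should be read as one possible choice.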

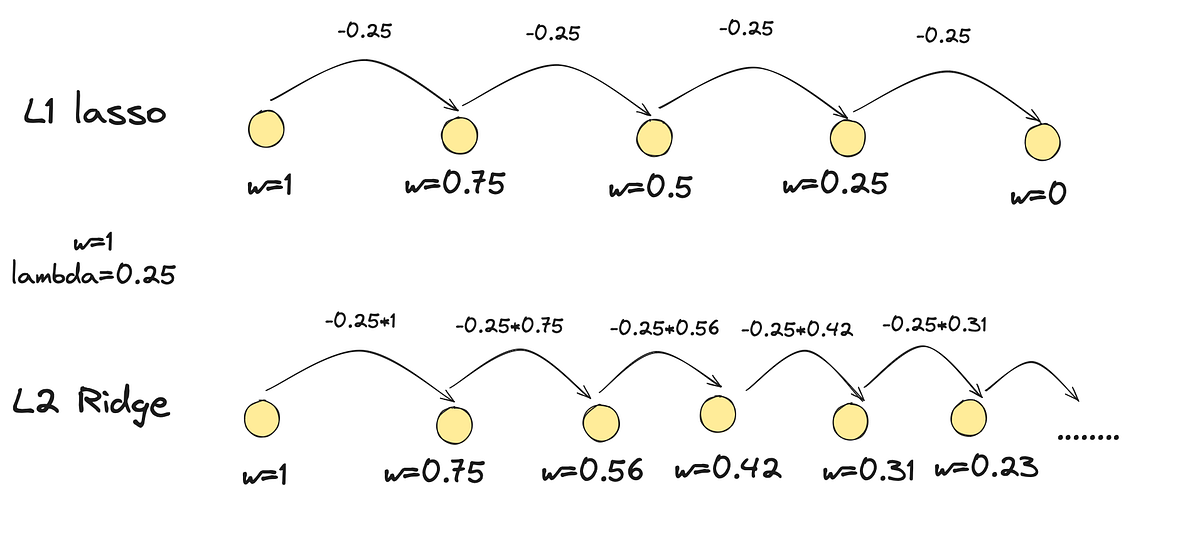

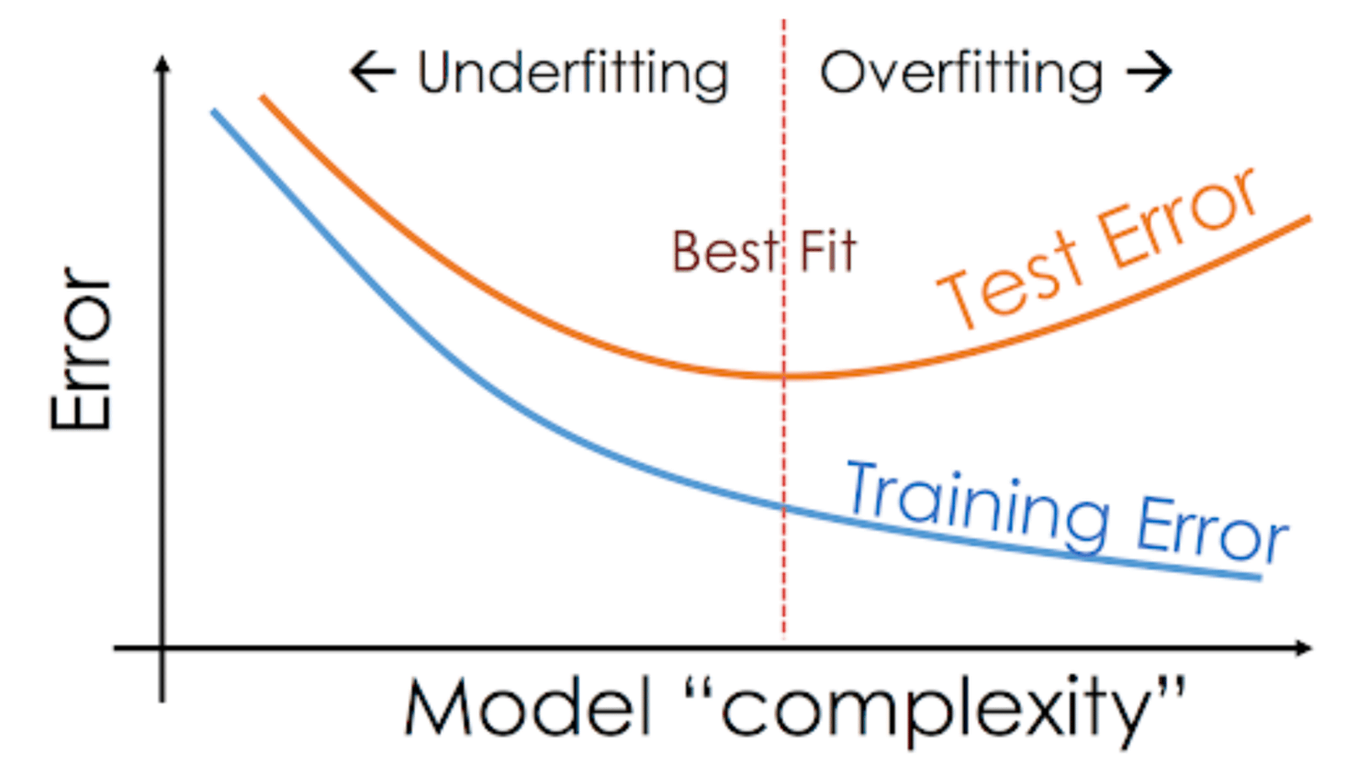

L1 Vs L2 Regularization In Machine Learning Differences Advantages

Regularization is a technique used in machine learning to prevent overfitting, which otherwise causes models to perform poorly on unseen data. By adding a penalty for complexity, regularization encourages simpler and more generalizable models. L1 (lasso) and L2 (ridge) regularization both prevent overfitting and improve generalization, and L1 additionally enables feature selection.

When you learn about machine learning, you're bound to come across L1 and L2 regularization. I think many great blogs explain these concepts intuitively with visualizations; however, only a few explain them in detail from analytic and probabilistic views. It turns out the two have different but equally useful properties. From a practical standpoint, L1 tends to shrink some coefficients exactly to zero, whereas L2 tends to shrink all coefficients toward zero more evenly without eliminating them. L1 is therefore useful for feature selection, as we can drop any variables whose coefficients go to zero.
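One way to see why L1 produces exact zeros while L2 does not is the one-dimensional case. Assume a single weight `w` whose unpenalized optimum is `a`, so the data loss is `0.5 * (w - a)**2`. Under that assumption, adding `lam * abs(w)` gives the soft-thresholding solution, while adding `0.5 * lam * w**2` gives `a / (1 + lam)`. The sketch below uses hypothetical names (`l1_shrink`, `l2_shrink`) and made-up values; it illustrates the idea and is not the method of any article listed here.

```python
def l1_shrink(a, lam):
    """Minimizer of 0.5*(w - a)**2 + lam*abs(w): soft-thresholding."""
    if abs(a) <= lam:
        return 0.0  # small coefficients are set exactly to zero
    return a - lam if a > 0 else a + lam


def l2_shrink(a, lam):
    """Minimizer of 0.5*(w - a)**2 + 0.5*lam*w**2: proportional shrinkage."""
    return a / (1.0 + lam)


# Illustrative unpenalized coefficients (hypothetical values).
unpenalized = [3.0, -1.2, 0.4, -0.1]
lam = 0.5
for a in unpenalized:
    print(f"a={a:+.2f}  L1 -> {l1_shrink(a, lam):+.3f}  L2 -> {l2_shrink(a, lam):+.3f}")
```

In this simplified setting, L1 subtracts a fixed amount from every coefficient and zeroes out any whose magnitude is at most `lam`, while L2 scales every coefficient by the same factor and leaves nonzero values nonzero.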

Github Shuaibiqbal L1 Vs L2 Regularization In Machine Learning With

Quickly Master L1 Vs L2 Regularization Ml Interview Q A

L1 Vs L2 Regularization The Intuitive Difference By Dhaval Taunk
