L1 And L2 Regularization

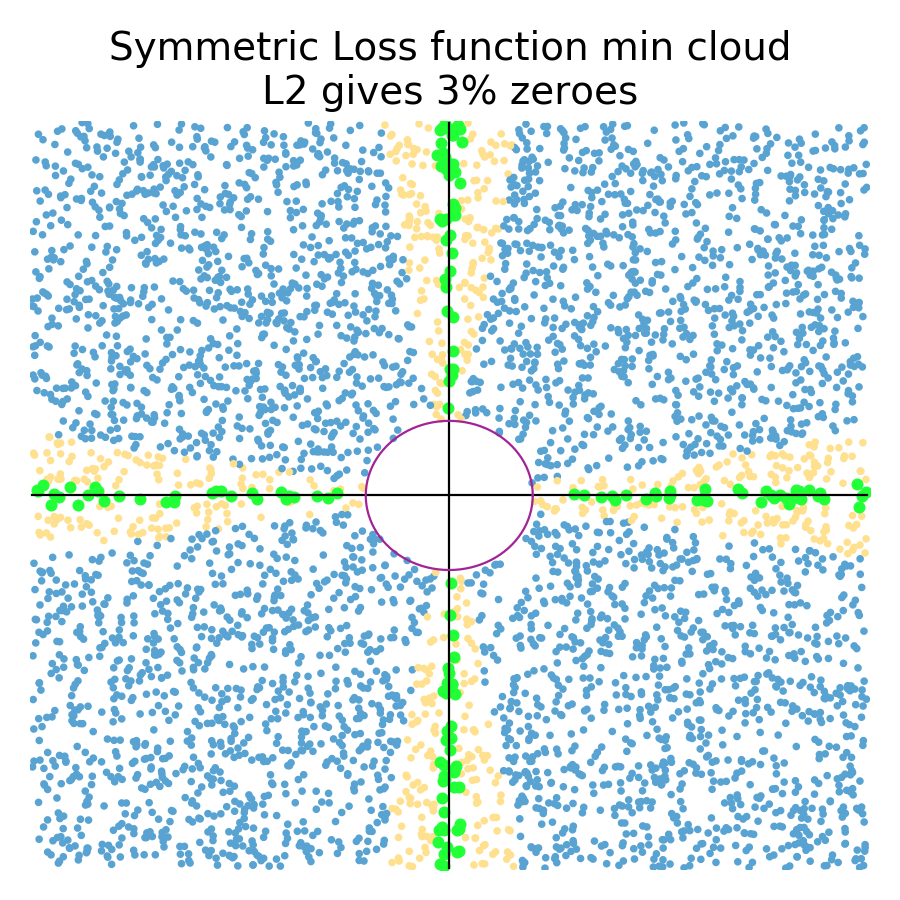

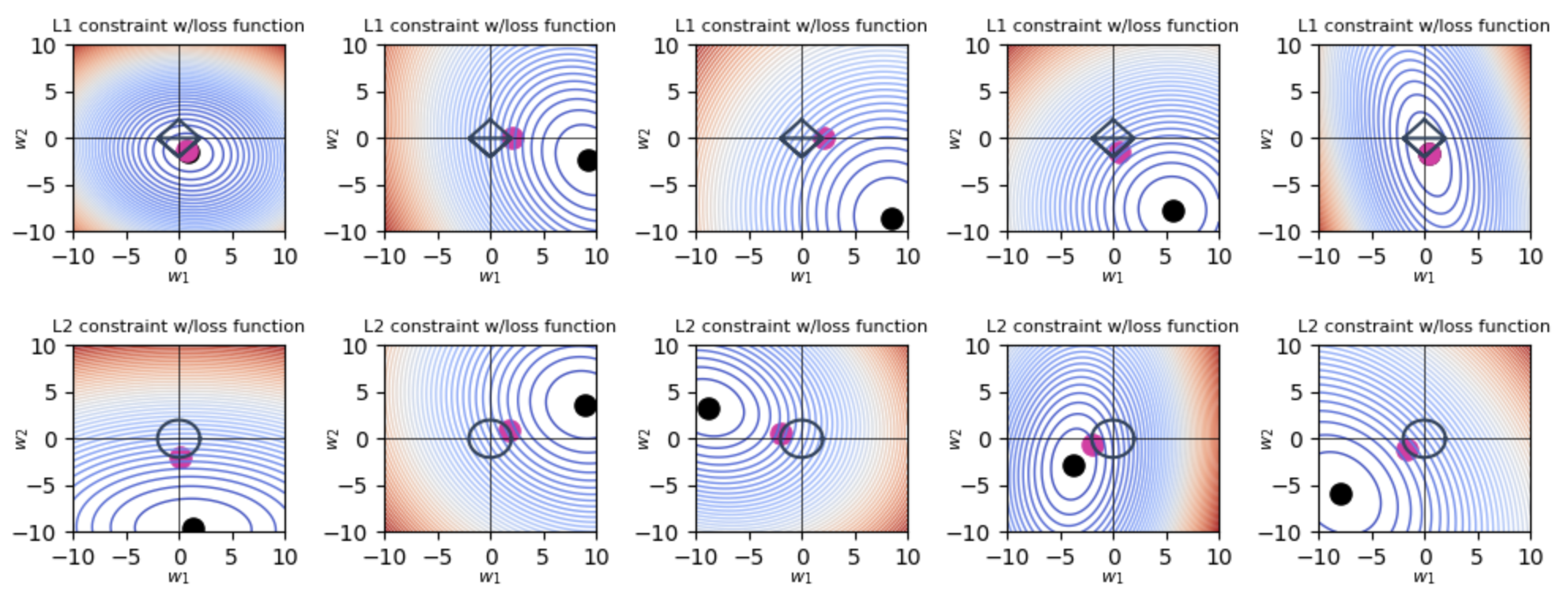

Elastic net regression combines L1 and L2 regularization: its penalty adds both the sum of the absolute values of the weights (the L1 norm) and the sum of their squares (the squared L2 norm) to the loss. L1 and L2 regularization both prevent overfitting and improve generalization in machine learning and statistical modelling, but they differ in their advantages, disadvantages, and applications.
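As a sketch of what "adding both penalties" means, the elastic net objective can be written as follows. The symbols are assumptions introduced here for illustration, not notation from the original text: $L_{\text{data}}$ is the unregularized loss, $w_j$ are the model weights, and $\lambda_1, \lambda_2 \ge 0$ are the penalty strengths.

$$
J(w) = L_{\text{data}}(w) + \lambda_1 \sum_{j} |w_j| + \lambda_2 \sum_{j} w_j^2
$$

Setting $\lambda_2 = 0$ leaves only the L1 penalty, and setting $\lambda_1 = 0$ leaves only the L2 penalty. Some libraries parameterize the two strengths differently, for example as one overall strength and a mixing ratio.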

Regularization techniques such as L1 and L2 help prevent overfitting, for example in linear regression. L1 regularization shrinks the coefficients and can drive some of them exactly to zero, which amounts to feature selection, while L2 regularization reduces the complexity of the model by shrinking all coefficients toward zero without eliminating them. Three regularization techniques are commonly used to control the complexity of machine learning models. A linear regression model that uses L2 regularization is called ridge regression; one that uses L1 regularization is called lasso regression. Beyond these penalties, dropout and early stopping are also used in practice to prevent overfitting.
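The difference between the two kinds of shrinkage can be illustrated with a minimal one-coefficient sketch. It assumes a simplified setting that is not from the original text: each coefficient `b` is an unregularized estimate, and the penalized problem is solved separately per coefficient. The function names and the value of `lam` are hypothetical.

```python
def l1_shrink(b, lam):
    """Minimizer of 0.5*(w - b)**2 + lam*abs(w), known as soft-thresholding."""
    magnitude = abs(b) - lam
    if magnitude <= 0.0:
        return 0.0  # small coefficients are set exactly to zero
    return magnitude if b > 0 else -magnitude


def l2_shrink(b, lam):
    """Minimizer of 0.5*(w - b)**2 + 0.5*lam*w**2, a proportional shrinkage."""
    return b / (1.0 + lam)


# Hypothetical unregularized coefficients and penalty strength.
unregularized = [3.0, -0.4, 0.2, -2.0]
lam = 0.5

l1_weights = [l1_shrink(b, lam) for b in unregularized]
l2_weights = [l2_shrink(b, lam) for b in unregularized]

print("L1:", l1_weights)
print("L2:", l2_weights)
```

In this sketch the two small coefficients become exactly zero under the L1 penalty, while the L2 penalty scales every coefficient down but leaves all of them nonzero.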

L1 (lasso) and L2 (ridge) regularization are two of the most common regularization techniques, in deep learning as well as in classical models. Both prevent overfitting and enhance model generalization, and L1 additionally enables feature selection, so the choice between them has implications for model development and training. PyTorch simplifies the implementation of L1 and L2 regularization through its flexible neural network framework and built-in optimization routines, making it easier to build and train regularized models.
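One common way to apply both penalties in PyTorch is sketched below. It assumes a toy linear model and synthetic data; the penalty strengths, learning rate, and step count are hypothetical values chosen only for illustration. L2 is applied through the optimizer's `weight_decay` argument, and L1 is added to the loss by hand.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Synthetic regression data (hypothetical): only the first feature matters.
X = torch.randn(64, 10)
y = 2.0 * X[:, :1] + 0.1 * torch.randn(64, 1)

model = nn.Linear(10, 1)
loss_fn = nn.MSELoss()

l1_strength = 1e-3  # hypothetical value
l2_strength = 1e-2  # hypothetical value

# weight_decay adds l2_strength * p to the gradient of every parameter p
# passed to the optimizer (here that includes the bias).
optimizer = torch.optim.SGD(
    model.parameters(), lr=0.05, weight_decay=l2_strength
)

losses = []
for step in range(200):
    optimizer.zero_grad()
    data_loss = loss_fn(model(X), y)
    # L1 penalty on the weight matrix only, added to the loss manually.
    l1_penalty = model.weight.abs().sum()
    loss = data_loss + l1_strength * l1_penalty
    loss.backward()
    optimizer.step()
    losses.append(loss.item())

print(f"first loss: {losses[0]:.4f}, last loss: {losses[-1]:.4f}")
```

Gradient descent on an L1 term usually makes weights small rather than exactly zero, so this sketch shows how the penalties enter training, not a sparse solver.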
