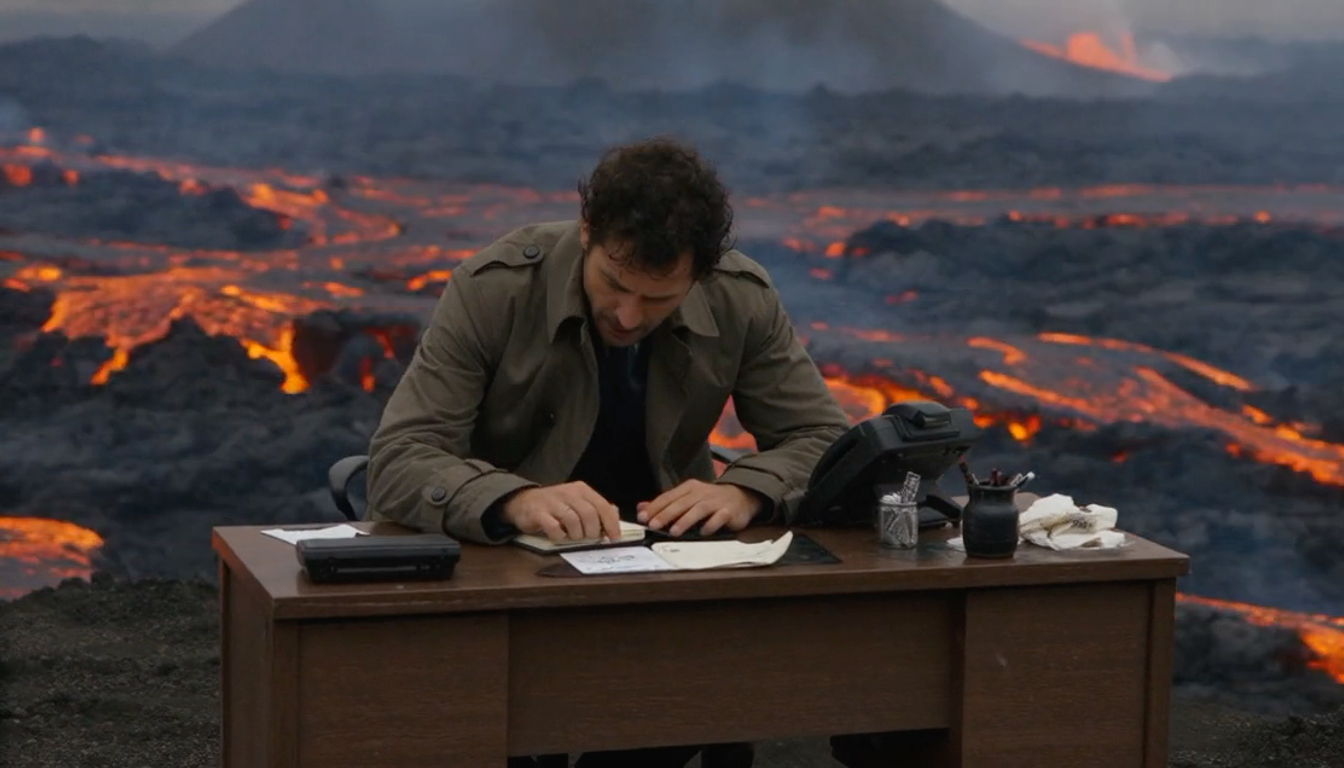

Jinx With CogVideoX Image To Video Testing

CogVideoX Open Laboratory

I ran some tests with #CogVideoX's new image-to-video model in #ComfyUI. If you are familiar with ComfyUI, you can download the nodes from here: gi. With this release, the CogVideoX series now supports three tasks: text-to-video generation, video continuation, and image-to-video generation. Welcome to try it online at experience.

CogVideoX 5B High Quality Local Video Generator (Stable Diffusion Art)

The zip file contains the JSON file, the starter image, and the PNG file from creation, which also embeds the workflow. The workflow includes a CogVideoX motion LoRA for the camera movement. After some testing, CogVideoX appears considerably more capable than other diffusion-based video generation models. It can now also run on lower-end GPUs by using a quantized model. It uses diffusion-based motion prediction to generate realistic camera movement, perspective shifts, and subtle dynamic effects, turning a single image into a short, coherent video.
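To give a rough sense of why a quantized model helps on lower-end GPUs, the sketch below estimates the storage needed for the transformer weights alone at different precisions. It assumes that "5B" means roughly 5 billion parameters, and the function name and precision table are illustrative. It ignores activations, the VAE, and the text encoder, so real memory use will be higher.

```python
# Weight-only memory estimate. This is back-of-the-envelope arithmetic,
# not a measurement: activations, the VAE, and the text encoder are ignored.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # Assumption: "5B" is taken to mean about 5e9 parameters.
    for precision in BYTES_PER_PARAM:
        print(f"{precision}: {weight_memory_gb(5e9, precision):.1f} GB")
```

Under these assumptions, moving from 16-bit to 8-bit weights halves the weight footprint, which is the main reason quantized checkpoints fit on smaller cards.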

In this tutorial, I will show you how to set up and run the state-of-the-art video model CogVideoX 5B. You can control the video by specifying an image and a prompt. An "expert" transformer with adaptive LayerNorm improves alignment between text and video, and 3D full attention helps accurately capture motion and time in generated videos. You can find all the original CogVideoX checkpoints under the CogVideoX collection. At this point, let's analyze how the model processes image-to-video generation logically. The CogVideoX author suggested using a long text description as the prompt.
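For readers who prefer a script to a ComfyUI workflow, here is a minimal sketch of driving image-to-video generation from an image and a prompt with the Hugging Face `diffusers` library. It is not the workflow from the article. The checkpoint id `THUDM/CogVideoX-5b-I2V`, the default settings (49 frames, 50 steps, guidance scale 6.0, 8 fps), and the helper names `build_call_kwargs` and `generate` are assumptions chosen for illustration.

```python
from typing import Any, Dict


def build_call_kwargs(prompt: str, num_frames: int = 49, steps: int = 50,
                      guidance_scale: float = 6.0) -> Dict[str, Any]:
    """Collect generation settings; the defaults are assumptions, not article values."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    return {
        "prompt": prompt.strip(),
        "num_frames": num_frames,
        "num_inference_steps": steps,
        "guidance_scale": guidance_scale,
    }


def generate(image_path: str, prompt: str, out_path: str = "output.mp4") -> str:
    """Generate a short video from one starter image and a text prompt."""
    # Heavy imports stay local so build_call_kwargs works without a GPU stack.
    import torch
    from diffusers import CogVideoXImageToVideoPipeline
    from diffusers.utils import export_to_video, load_image

    pipe = CogVideoXImageToVideoPipeline.from_pretrained(
        "THUDM/CogVideoX-5b-I2V",  # assumed checkpoint id
        torch_dtype=torch.bfloat16,
    )
    pipe.enable_model_cpu_offload()  # trades speed for lower VRAM use
    pipe.vae.enable_tiling()         # decodes the video in tiles to save memory

    result = pipe(image=load_image(image_path), **build_call_kwargs(prompt))
    export_to_video(result.frames[0], out_path, fps=8)
    return out_path
```

A call might look like `generate("start.png", "A long, detailed description of the scene and its motion")`, where both the file name and the prompt are placeholders. Following the author's suggestion, a longer descriptive prompt is likely to work better than a short one.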

Testing CogVideoX Image To Video 720x480 Videos (Civitai)

