Ithy: Understanding LLM Quantization

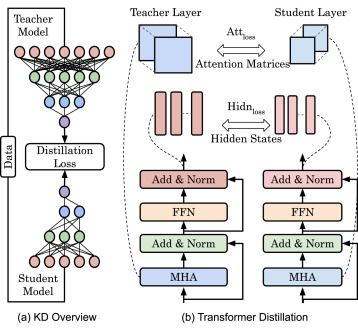

What is LLM quantization? LLM quantization refers to a set of model compression techniques that reduce the size and computational demands of large language models (LLMs). This paper aims to provide a comprehensive review of quantization techniques in the context of LLMs. We begin by detailing the underlying mechanisms of quantization, then compare various approaches, with a specific focus on how they apply to LLMs.
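To make the size reduction concrete, the sketch below estimates the memory needed just to store a model's weights at different bit widths. It is a back-of-the-envelope calculation under stated assumptions: the 7-billion-parameter count is a hypothetical example, and the estimate ignores activations, the KV cache, and the per-group scale metadata that real quantized formats add.

```python
def weight_memory_bytes(num_params: int, bits_per_weight: int) -> int:
    """Bytes needed to store num_params weights at the given bit width.

    Assumes weights are densely packed (e.g. two 4-bit values per byte)
    and ignores scales, zero-points, activations and the KV cache.
    """
    return num_params * bits_per_weight // 8


# Hypothetical 7-billion-parameter model, used only as an example size.
NUM_PARAMS = 7_000_000_000

for bits in (32, 16, 8, 4):
    gb = weight_memory_bytes(NUM_PARAMS, bits) / 1e9
    print(f"{bits:>2}-bit weights: {gb:.1f} GB")
```

In this example, going from 32-bit floats (28 GB) to 4-bit integers (3.5 GB) shrinks weight storage by a factor of eight, which is what makes deployment on smaller devices plausible.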

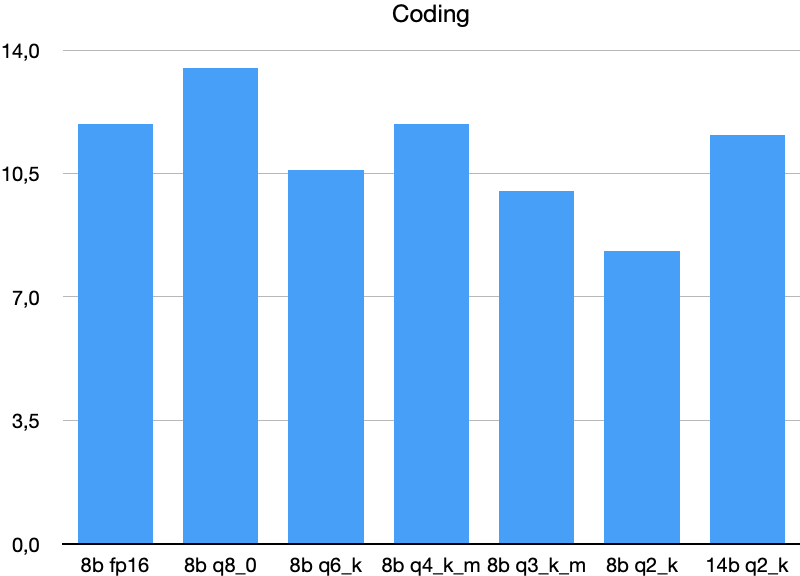

Exploiting LLM Quantization

What is LLM quantization? LLM quantization is a compression technique that reduces the numerical precision of model weights and activations from high-precision formats (such as 32-bit floats) to lower-precision representations (such as 8-bit or 4-bit integers). This guide walks you through the practical process of quantizing LLMs, from understanding the fundamentals to implementing various quantization techniques.

To understand quantization, we first need to understand compression and the role of floating-point numbers in general. "Compression" is the process of making these models smaller, and therefore faster, without significantly hurting their performance. Learn five key LLM quantization techniques that reduce model size and improve inference speed without significant accuracy loss, with technical details and code snippets for engineers.
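The precision reduction described above can be sketched with symmetric "absmax" quantization, one common post-training scheme named here as an illustration rather than as the method of any particular source. Each float is divided by a scale chosen so that the largest absolute value maps to 127, rounded to an 8-bit integer, and later multiplied back by the same scale. The function names and example weights are hypothetical.

```python
def quantize_absmax_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization of floats to signed 8-bit integers."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude to 127; guard against an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer and clamp to the range [-127, 127].
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats by multiplying back by the scale."""
    return [v * scale for v in q]


# Hypothetical example weights.
weights = [0.42, -1.27, 0.003, 0.88, -0.51]
q, scale = quantize_absmax_int8(weights)
restored = dequantize_int8(q, scale)
errors = [abs(w - r) for w, r in zip(weights, restored)]
print(q)
print(f"scale={scale:.5f}, max error={max(errors):.5f}")
```

In a real int8 tensor each stored value takes one byte instead of four, and the rounding error per weight is at most half the scale. Because one large outlier sets the scale for the whole tensor, small weights (like 0.003 here) can collapse to zero, which is one motivation for the finer-grained schemes used in quantized LLM formats.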

LLM Quantization: Making Models Faster and Smaller (Matterai Blog)

We systematically explore various methodologies designed to tackle the resource-intensive nature of LLMs, including post-training quantization (PTQ), quantization-aware fine-tuning (QAF), and quantization-aware training (QAT). This is a curated list of resources related to quantization techniques for large language models (LLMs). Quantization is a crucial step in deploying LLMs on resource-constrained devices, such as mobile phones or edge devices, because it reduces the model's size and computational requirements. This blog aims to give a quick introduction to the different quantization techniques you are likely to run into if you want to experiment with already-quantized LLMs. Learn how quantization can reduce the size of large language models for efficient AI deployment on everyday devices, and follow our step-by-step guide.
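Many already-quantized LLMs store weights in 4 bits with separate scaling parameters for each small block of weights. The sketch below illustrates that idea as a post-training step (no retraining), using asymmetric min-max quantization: each group keeps its own scale and minimum, and two 4-bit codes are packed into each byte. The group size of 4 and all function names are hypothetical choices for illustration; real formats typically use larger groups and their own rounding rules and memory layouts.

```python
def quantize_groups_4bit(weights: list[float], group_size: int = 4):
    """Quantize each group of weights to 4-bit codes in [0, 15].

    Returns the codes plus one (scale, minimum) pair per group.
    """
    codes: list[int] = []
    params: list[tuple[float, float]] = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        lo, hi = min(group), max(group)
        # 16 levels span [lo, hi]; a constant group gets scale 1.0 to avoid /0.
        scale = (hi - lo) / 15 if hi > lo else 1.0
        codes.extend(min(15, max(0, round((w - lo) / scale))) for w in group)
        params.append((scale, lo))
    return codes, params


def pack_nibbles(codes: list[int]) -> bytes:
    """Pack pairs of 4-bit codes into bytes (low nibble first)."""
    if len(codes) % 2:
        codes = codes + [0]  # pad to an even length without mutating input
    return bytes(codes[i] | (codes[i + 1] << 4) for i in range(0, len(codes), 2))


def unpack_nibbles(packed: bytes, count: int) -> list[int]:
    """Inverse of pack_nibbles, returning the first `count` codes."""
    codes: list[int] = []
    for byte in packed:
        codes.extend((byte & 0x0F, byte >> 4))
    return codes[:count]


def dequantize_groups_4bit(codes: list[int], params, group_size: int = 4):
    """Map each code back to an approximate float: code * scale + minimum."""
    restored = []
    for i, code in enumerate(codes):
        scale, lo = params[i // group_size]
        restored.append(code * scale + lo)
    return restored


# Hypothetical example: the second group contains two large values.
weights = [0.12, -0.40, 0.33, 0.05, 2.10, -1.90, 0.75, 0.00]
codes, params = quantize_groups_4bit(weights)
packed = pack_nibbles(codes)
restored = dequantize_groups_4bit(unpack_nibbles(packed, len(codes)), params)
print(len(packed), "bytes for", len(weights), "weights")
print([round(r, 3) for r in restored])
```

Because each group has its own scale, the large values 2.10 and -1.90 only coarsen the grid for their own four weights, not for the first group. Storing a scale and minimum per group adds overhead, so the effective storage cost ends up slightly above 4 bits per weight.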
