Introduction to World Models: V-JEPA 2

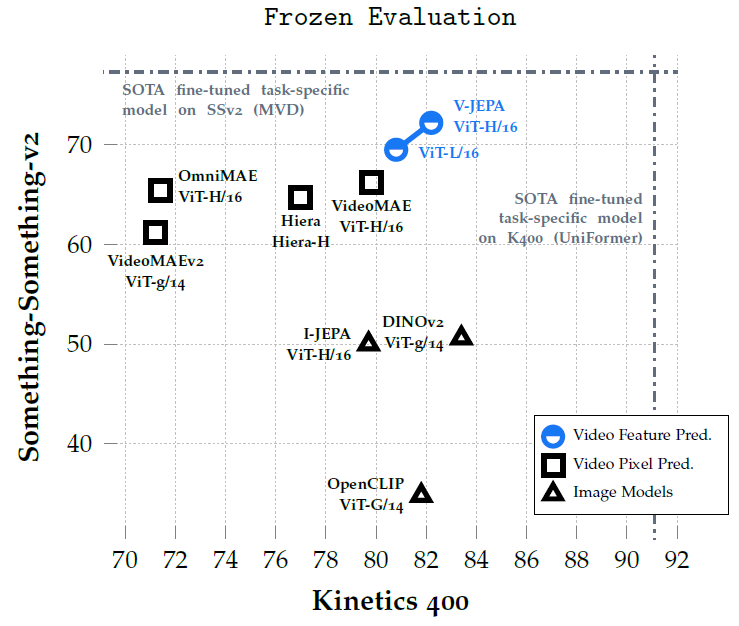

Meta's V-JEPA 2 Model: Open-Source Self-Supervised World Models

Meta's Video Joint Embedding Predictive Architecture 2 (V-JEPA 2) is a world model that achieves state-of-the-art performance on visual understanding and prediction in the physical world. The model can also be used for zero-shot robot planning to interact with unfamiliar objects in new environments. The V-JEPA 2 work is one of the clearest public demonstrations that JEPA-style video world models can power real robot control in unseen environments, not just benchmark scores.
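To make "joint embedding predictive architecture" concrete, here is a minimal sketch of a JEPA-style objective: the model predicts the features of hidden inputs from the features of visible ones, and the loss is measured in feature space rather than pixel space. Everything in it is a toy assumption for illustration. The one-parameter `encode`, the mean-based `predict`, the L1 loss, and the moving-average `ema_update` are hypothetical stand-ins, not V-JEPA 2's actual networks or training code.

```python
def encode(tokens, weight):
    """Hypothetical one-parameter 'encoder': scales each token value."""
    return [weight * t for t in tokens]


def predict(context_features, n_targets):
    """Hypothetical predictor: guesses each hidden feature as the context mean."""
    mean = sum(context_features) / len(context_features)
    return [mean] * n_targets


def jepa_loss(tokens, masked_idx, w_context, w_target):
    """Mean L1 distance between predicted and target features of hidden tokens."""
    masked = set(masked_idx)
    context = [t for i, t in enumerate(tokens) if i not in masked]
    targets = [tokens[i] for i in masked_idx]
    # Target features act as a fixed regression target (no gradient in practice).
    target_features = encode(targets, w_target)
    predicted = predict(encode(context, w_context), len(targets))
    return sum(abs(p - t) for p, t in zip(predicted, target_features)) / len(targets)


def ema_update(w_target, w_context, momentum=0.99):
    """Assumed update rule: the target encoder slowly tracks the context encoder."""
    return momentum * w_target + (1.0 - momentum) * w_context


# Hide tokens 1 and 3, then predict their features from tokens 0 and 2.
loss = jepa_loss([1.0, 2.0, 3.0, 4.0], masked_idx=[1, 3], w_context=1.0, w_target=1.0)
```

The point of the design is that the prediction target is an embedding, so the model is not penalized for failing to reproduce unpredictable pixel-level detail.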

A major challenge for modern AI is to learn to understand the world and to act largely by observation. The V-JEPA 2 paper explores a self-supervised approach that combines internet-scale video data with a small amount of interaction data (robot trajectories) to develop models capable of understanding, predicting, and planning in the physical world. Training proceeds in two stages. The first pre-trains an action-free joint embedding predictive architecture, V-JEPA 2, on video. The second post-trains V-JEPA 2-AC, a latent action-conditioned world model, from V-JEPA 2 using the robot trajectory data. V-JEPA 2-AC solves robot manipulation tasks without environment-specific data collection or task-specific training or calibration.
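One way to picture planning with an action-conditioned world model is as a search over action sequences in latent space: roll each candidate sequence through the predictor and prefer the one whose predicted final embedding lands closest to a goal embedding. The sketch below uses the cross-entropy method as the search procedure, which is an assumption made here for illustration. `toy_predictor`, the 2-D embeddings, and all hyperparameters are likewise hypothetical stand-ins, not the released model or its API.

```python
import random


def toy_predictor(z, action):
    """Hypothetical stand-in for a learned action-conditioned predictor."""
    return [zi + ai for zi, ai in zip(z, action)]


def l1(a, b):
    """L1 distance between two embeddings."""
    return sum(abs(x - y) for x, y in zip(a, b))


def rollout_cost(z0, actions, z_goal, predictor):
    """Roll the predictor forward and score the final embedding against the goal."""
    z = z0
    for a in actions:
        z = predictor(z, a)
    return l1(z, z_goal)


def plan_cem(z0, z_goal, predictor, horizon=3, dim=2,
             samples=200, elites=20, iters=5, seed=0):
    """Cross-entropy method: sample sequences, keep the best, refit, repeat."""
    rng = random.Random(seed)
    mean = [[0.0] * dim for _ in range(horizon)]
    std = [[1.0] * dim for _ in range(horizon)]
    for _ in range(iters):
        candidates = [
            [[rng.gauss(mean[t][d], std[t][d]) for d in range(dim)]
             for t in range(horizon)]
            for _ in range(samples)
        ]
        candidates.sort(key=lambda acts: rollout_cost(z0, acts, z_goal, predictor))
        best = candidates[:elites]
        # Refit the sampling distribution to the elite action sequences.
        for t in range(horizon):
            for d in range(dim):
                vals = [acts[t][d] for acts in best]
                m = sum(vals) / elites
                var = sum((v - m) ** 2 for v in vals) / elites
                mean[t][d] = m
                std[t][d] = max(var ** 0.5, 1e-3)
    return mean  # planned action sequence (one action per step)


z_start, z_goal = [0.0, 0.0], [1.5, -0.9]
plan = plan_cem(z_start, z_goal, toy_predictor)
```

In a real control loop only the first planned action would typically be executed before re-planning from the new observation. No task-specific training appears anywhere in the loop, which is what makes this style of planning zero-shot.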

This article is for anyone interested in JEPA, V-JEPA 2, self-supervised learning, world models, and the broader evolution of AI beyond standard generative systems. It covers vision-based world modeling, the joint embedding predictive architecture, visual prediction, embodied AI, and the shift from language-centric to vision-centric AI systems.

Developed by Meta's FAIR team, V-JEPA 2 (Video Joint Embedding Predictive Architecture 2) is described as the first world model trained on video that delivers state-of-the-art understanding and prediction while also supporting planning and robot control in unfamiliar settings. It is a scalable, open-source model designed to learn from internet-scale video and to enable robust visual understanding, future-state prediction, and zero-shot planning.
