Introducing The Roboflow Inference Widget

Inference is an open source computer vision deployment hub by Roboflow. It handles model serving, video stream management, pre- and post-processing, and GPU/CPU optimization so you can focus on building your application. We made testing inference on Roboflow Universe models easier than ever: try out the widget!

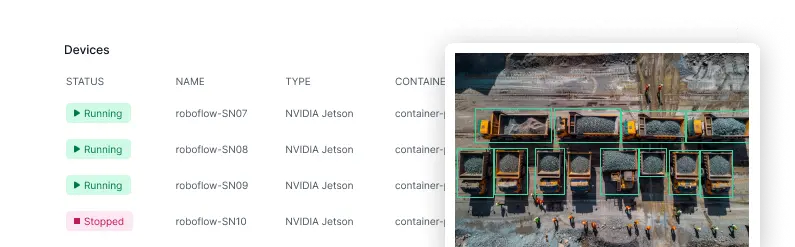

Inference is designed to run on a wide range of hardware, from beefy cloud servers to tiny edge devices. This lets you develop against your local machine or our cloud infrastructure and then switch to another device for production deployment.

Last December we introduced the Roboflow Inference Widget on Roboflow Universe, a feature that helps developers test trained models by dragging and dropping images they expect the model to detect in the wild. You can now try out any Universe project that has a trained model.

Start building with Inference: everything you need to start running models, exploring capabilities, and building intelligent workflows.
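The switch from a local machine to cloud infrastructure mentioned above can be treated as a configuration change. The sketch below is illustrative only: it assumes a local Inference server listening on `http://localhost:9001`, the hosted endpoint `https://detect.roboflow.com`, and that both accept a `/<project>/<version>?api_key=...` route. The names `TARGETS` and `build_infer_url`, the model ID, and the API key are hypothetical placeholders.

```python
from urllib.parse import quote, urlencode

# Assumed base URLs; adjust to match your own deployment.
TARGETS = {
    "local": "http://localhost:9001",
    "hosted": "https://detect.roboflow.com",
}


def build_infer_url(target, model_id, api_key):
    """Build a request URL for the chosen deployment target.

    model_id is expected in the form "<project>/<version>".
    """
    base = TARGETS[target]
    project, version = model_id.split("/")
    path = f"/{quote(project)}/{quote(version)}"
    return f"{base}{path}?{urlencode({'api_key': api_key})}"


# Same model, two deployment targets: only the target name changes.
dev_url = build_infer_url("local", "my-project/1", "YOUR_API_KEY")
prod_url = build_infer_url("hosted", "my-project/1", "YOUR_API_KEY")
```

Keeping the target in one lookup table means application code never hard-codes where the model runs.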

There are three ways to run Inference. We document every method in the "Inputs" section of the Inference documentation. Below, we talk about when you would want to use each method. You can use the Python SDK to run models on images and videos directly using the Inference code, without using Docker.

Inference can connect with Roboflow's platform to help you monitor and improve your models by uploading outlier data, accessing stats and telemetry, and tying into downstream data sinks and business intelligence suites.
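Once a model has run on an image, the result usually needs light post-processing before an application can use it. The following is a minimal sketch, not the library's own code. It assumes an object detection response shaped as a JSON object with a `predictions` list, where each entry carries a center-based `x`, `y`, `width`, and `height` plus `class` and `confidence`. The helper name `confident_boxes` and the sample values are made up for illustration.

```python
import json


def confident_boxes(response, min_confidence=0.5):
    """Keep confident predictions and convert center boxes to corners.

    Returns a list of (label, confidence, (x_min, y_min, x_max, y_max)).
    """
    boxes = []
    for pred in response.get("predictions", []):
        if pred["confidence"] < min_confidence:
            continue  # drop low-confidence detections
        half_w = pred["width"] / 2
        half_h = pred["height"] / 2
        corners = (
            pred["x"] - half_w,
            pred["y"] - half_h,
            pred["x"] + half_w,
            pred["y"] + half_h,
        )
        boxes.append((pred["class"], pred["confidence"], corners))
    return boxes


# Hypothetical response with one confident and one weak detection.
sample = json.loads("""
{
  "predictions": [
    {"x": 100, "y": 80, "width": 100, "height": 80,
     "class": "dog", "confidence": 0.9},
    {"x": 30, "y": 30, "width": 20, "height": 20,
     "class": "cat", "confidence": 0.2}
  ]
}
""")

kept = confident_boxes(sample)
```

Low-confidence detections like these are also natural candidates for the outlier data mentioned above.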

