Ingesting Data From a Local CSV and GitHub in Databricks Free Edition

This tutorial walks you through using a Databricks notebook to import data from a CSV file containing baby name data from health.data.ny.gov into your Unity Catalog volume using Python, Scala, and R. You also learn to modify a column name, visualize the data, and save to a table. Data ingestion into Databricks can be achieved in various ways, depending on the data source and the specific use case. Several common methods are described here.
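
The steps named above (read a CSV from a volume, modify a column name, save to a table) could look roughly like this in Python. This is a minimal sketch, not the tutorial's own code: the helper `sanitize_column_name`, the function `csv_to_table`, the volume path, and the table name are all hypothetical, and `spark` is assumed to be the session that a Databricks notebook provides.

```python
import re


def sanitize_column_name(name: str) -> str:
    """Replace runs of characters other than letters, digits, and underscores
    with a single underscore.

    Hypothetical helper for illustration; it is not part of any Databricks API.
    """
    cleaned = re.sub(r"[^0-9A-Za-z_]+", "_", name.strip())
    return cleaned.strip("_")


def csv_to_table(spark, csv_path: str, table_name: str):
    """Read a CSV file with a header row, tidy its column names, and save it as a table.

    `spark` is assumed to be the SparkSession available in a Databricks notebook.
    """
    df = spark.read.csv(csv_path, header=True, inferSchema=True)
    for old_name in df.columns:
        new_name = sanitize_column_name(old_name)
        if new_name != old_name:
            df = df.withColumnRenamed(old_name, new_name)
    # Overwrite is an assumption made for repeatable runs; append may suit other cases.
    df.write.mode("overwrite").saveAsTable(table_name)
    return df


# Example call inside a notebook (path and table name are placeholders):
# csv_to_table(spark, "/Volumes/<catalog>/<schema>/<volume>/babynames.csv",
#              "<catalog>.<schema>.baby_names")
```

Renaming before the write matters because a header such as `First Name` contains a space, which is awkward to query and may be rejected when the table is created.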

In this blog, we will demonstrate a method that can be used to pull GitHub data across several formats into Databricks. The alternative is to use the Databricks CLI (or REST API) and push local data to a location on DBFS, where it can be read into Spark from within a Databricks notebook. A similar idea would be to use the AWS CLI to put local data into an S3 bucket that can be accessed from Databricks.
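
One way to pull a file from a public GitHub repository is to download its raw content and write it somewhere a notebook can read. The sketch below is an illustration under stated assumptions, not the method the blog itself uses: `raw_github_url` and `download_file` are hypothetical helpers, the repository details are placeholders, and it assumes the destination (for example a Unity Catalog volume path) is writable through ordinary file APIs in your workspace.

```python
import urllib.request
from pathlib import Path


def raw_github_url(owner: str, repo: str, ref: str, file_path: str) -> str:
    """Build the raw-content URL for a file in a public GitHub repository.

    `ref` is a branch name, tag, or commit SHA.
    """
    return (
        "https://raw.githubusercontent.com/"
        f"{owner}/{repo}/{ref}/{file_path.lstrip('/')}"
    )


def download_file(url: str, destination: str) -> Path:
    """Download `url` to `destination`, creating parent directories if needed."""
    target = Path(destination)
    target.parent.mkdir(parents=True, exist_ok=True)
    with urllib.request.urlopen(url) as response:
        target.write_bytes(response.read())
    return target


# Example call (owner, repo, and paths are placeholders):
# url = raw_github_url("<owner>", "<repo>", "main", "data/names.csv")
# download_file(url, "/Volumes/<catalog>/<schema>/<volume>/names.csv")
```

Once the file is in place, it can be read with `spark.read.csv` like any other CSV. Private repositories need authentication, which this sketch does not cover.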

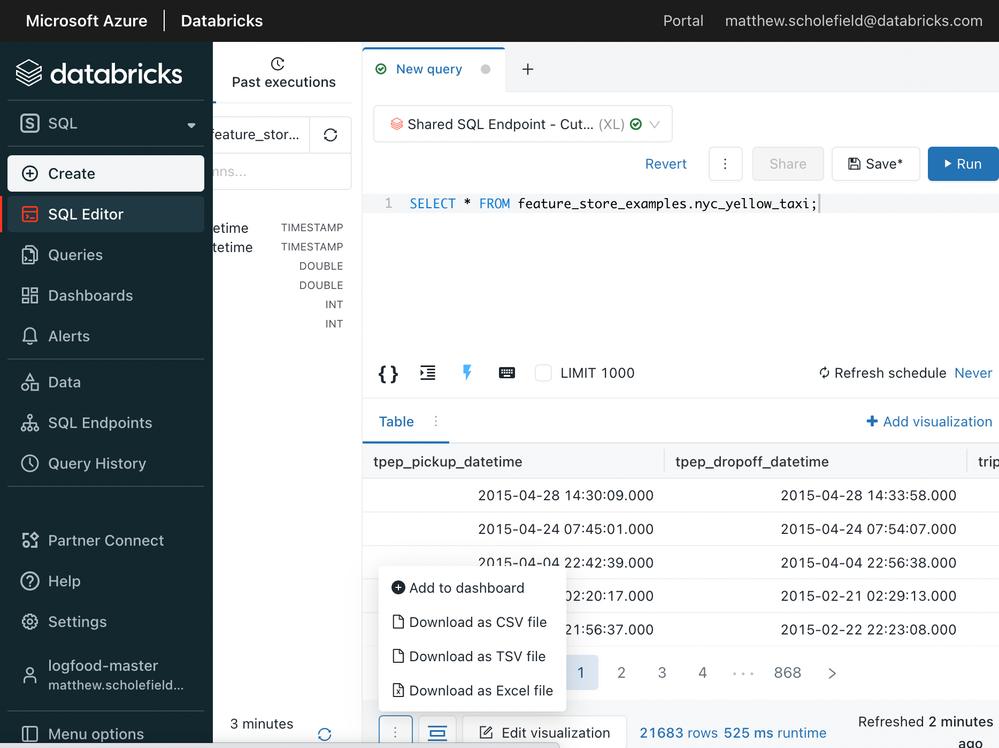

Adding CSV files to Databricks is a fundamental task for data ingestion and analysis. Whether you're importing data for machine learning, reporting, or exploratory analysis, understanding how to efficiently upload and access CSV files is essential for making full use of Databricks. By following the detailed instructions and best practices outlined on our site, you can work through importing, transforming, and persisting CSV data within Databricks. This article provides examples for reading CSV files with Azure Databricks using Python, Scala, R, and SQL. This codebase enables ingestion of CSV files into Databricks using Delta Lake tables. It provides schema enforcement, batch processing, fault tolerance, and parallel processing.
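
Schema enforcement on a CSV load into a Delta table could be sketched as follows. This is not the codebase mentioned above, whose internals are not shown here. The helper names `ddl_schema` and `load_csv_with_schema`, the column types, and the table name are assumptions for illustration, and `spark` is assumed to be the notebook's session.

```python
def ddl_schema(columns: dict) -> str:
    """Turn {"column": "TYPE"} into a DDL schema string such as "Year INT, Name STRING".

    Hypothetical helper; column order follows the dict's insertion order.
    """
    return ", ".join(f"{name} {dtype}" for name, dtype in columns.items())


def load_csv_with_schema(spark, csv_path: str, columns: dict, table_name: str):
    """Read a CSV with an explicit schema and append it to a Delta table.

    FAILFAST makes Spark raise an error on malformed rows instead of silently
    nulling them. Because reads are lazy, the error surfaces during the write.
    """
    df = (
        spark.read.format("csv")
        .option("header", "true")
        .option("mode", "FAILFAST")
        .schema(ddl_schema(columns))
        .load(csv_path)
    )
    df.write.format("delta").mode("append").saveAsTable(table_name)
    return df


# Example call (path, columns, and table name are placeholders):
# load_csv_with_schema(
#     spark,
#     "/Volumes/<catalog>/<schema>/<volume>/names.csv",
#     {"Year": "INT", "First_Name": "STRING", "Count": "INT"},
#     "<catalog>.<schema>.baby_names",
# )
```

Supplying the schema up front avoids the extra pass over the file that schema inference needs and keeps column types stable across loads.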
