Information Theory 1 Ppt

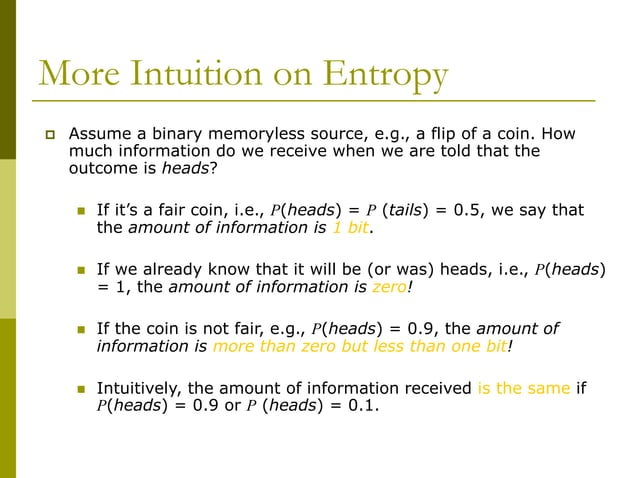

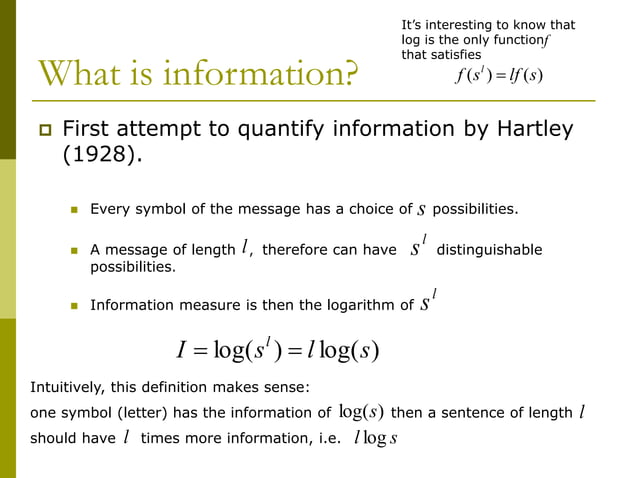

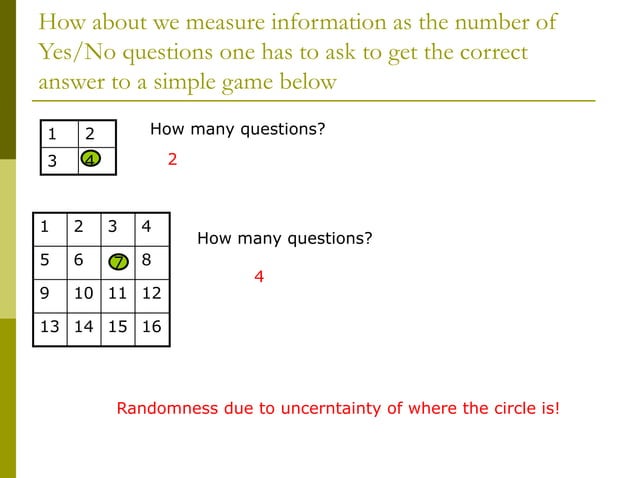

Information Theory: A Tutorial Introduction. This document discusses information theory and entropy. It first defines information in terms of the number of letters in a message, a quantity that can be measured and compared. Shannon's information theory then introduced entropy to quantify the uncertainty in a data source. The first attempt to quantify information was made by Hartley (1928): if every symbol of a message is chosen from s possibilities, a message of length n can take s^n distinguishable forms.
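Hartley's counting argument can be sketched in a few lines of Python. This is only an illustration: the names `num_symbols` (the number of possibilities per symbol, s) and `length` (the message length, n) are assumptions introduced here, and the logarithmic measure n · log(s) is the standard form of Hartley's 1928 measure rather than something quoted from the slides.

```python
import math


def message_count(num_symbols: int, length: int) -> int:
    """Number of distinguishable messages: s ** n."""
    if num_symbols < 1 or length < 0:
        raise ValueError("need num_symbols >= 1 and length >= 0")
    return num_symbols ** length


def hartley_information(num_symbols: int, length: int, base: float = 2.0) -> float:
    """Hartley information n * log(s); base 2 gives the result in bits."""
    if num_symbols < 1 or length < 0:
        raise ValueError("need num_symbols >= 1 and length >= 0")
    return length * math.log(num_symbols, base)


if __name__ == "__main__":
    # A message of 8 binary symbols: 2 ** 8 = 256 possible messages.
    print(message_count(2, 8))
    print(hartley_information(2, 8))
```

Taking the logarithm makes the measure additive: doubling the message length doubles the information, even though the number of possible messages is squared.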

This document provides an overview of information theory. It discusses how information theory deals with measuring the information from a source, the capacity of a communication channel, and coding.

1. The document introduces information theory and discusses how to measure information, exploring definitions of information as choice or uncertainty.
2. Shannon's information theory is introduced, defining information entropy as the average number of bits needed to encode the possible outcomes of a random variable. Entropy measures the uncertainty in a data source.
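As a rough illustration of the entropy definition, the sketch below computes Shannon entropy in bits, H = -Σ p · log2(p), for a discrete distribution. The function name `shannon_entropy` and the input format (a list of probabilities summing to 1) are assumptions made for this example, not something taken from the slides.

```python
import math
from typing import Sequence


def shannon_entropy(probs: Sequence[float]) -> float:
    """Entropy in bits of a discrete distribution given as probabilities."""
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with p == 0 contribute nothing (the limit of p * log p is 0).
    return -sum(p * math.log2(p) for p in probs if p > 0)


if __name__ == "__main__":
    print(shannon_entropy([0.5, 0.5]))         # fair coin
    print(shannon_entropy([0.5, 0.25, 0.25]))  # skewed three-symbol source
    print(shannon_entropy([0.25] * 4))         # four equiprobable symbols
```

A fair coin gives 1 bit, and four equiprobable symbols give 2 bits; any skew in the probabilities lowers the entropy below the equiprobable maximum.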

01 Introduction to Information Theory. Compact discs use 16-bit samples at a sampling rate of 44.1 kHz, so one second of stereo music in CD quality takes about 1.4 Mbit (16 bits × 44,100 samples per second × 2 channels = 1,411,200 bits). Data reduction in MPEG audio coding is realized by perceptual coding techniques that address how the human ear perceives sound waves. It maintains a sound quality that is significantly…

The document also discusses the foundations of information theory, including its history, definition, Shannon's theory, and Huffman coding, and outlines key measures such as entropy, mutual information, and channel capacity.

Information Theory 1. Redundancy measures how far the source probabilities are from the maximum-entropy case of equiprobable symbols.
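One common way to put a number on redundancy is the relative form R = 1 - H / H_max, where H is the source entropy and H_max = log2(N) is the entropy of N equiprobable symbols. The slides may use a different convention (for example the absolute difference H_max - H), so the sketch below is an assumption-laden illustration; the function name `redundancy` is hypothetical.

```python
import math
from typing import Sequence


def redundancy(probs: Sequence[float]) -> float:
    """Relative redundancy 1 - H / H_max of a discrete source (assumed definition)."""
    n = len(probs)
    if n < 2:
        raise ValueError("need at least two symbols so that H_max > 0")
    if any(p < 0 for p in probs) or not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probs must be non-negative and sum to 1")
    entropy = -sum(p * math.log2(p) for p in probs if p > 0)
    max_entropy = math.log2(n)  # reached when all n symbols are equiprobable
    return 1.0 - entropy / max_entropy


if __name__ == "__main__":
    print(redundancy([0.25, 0.25, 0.25, 0.25]))   # equiprobable source
    print(redundancy([0.5, 0.25, 0.125, 0.125]))  # skewed source
```

An equiprobable source has zero redundancy under this definition, while the skewed four-symbol source has entropy 1.75 bits against a maximum of 2 bits, giving a redundancy of 0.125.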

