Inference Lambda

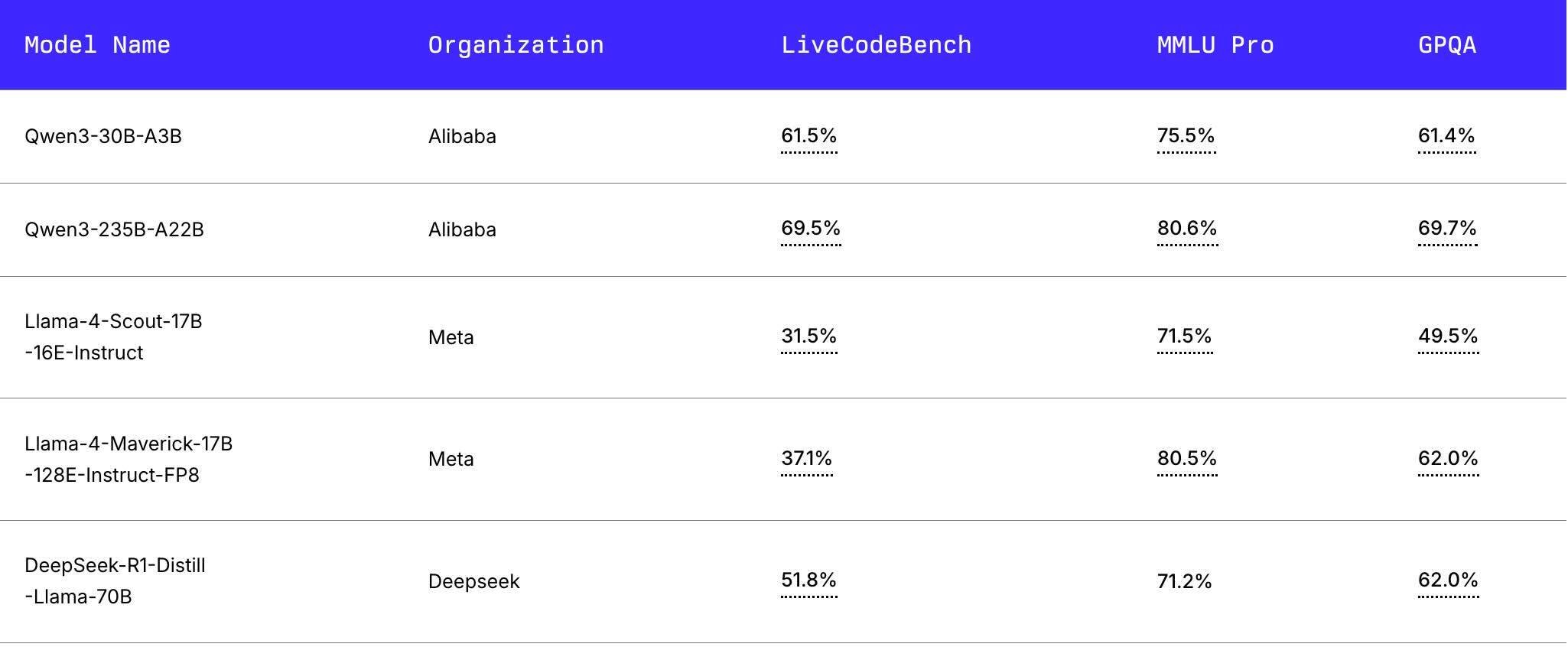

We present standardized benchmark results for top contenders such as Meta's Llama 4 series, Alibaba's Qwen3, and the latest models from DeepSeek, focusing on key performance metrics that measure everything from coding ability to general knowledge.

This article walks through the steps of performing model inference on AWS Lambda: building the Lambda AMI, loading dependencies, and testing inference.
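
The steps above end in a function that loads a model and serves predictions. A common pattern is to load the model once per execution environment, at module scope, so warm invocations skip the load and only pay for inference. The sketch below assumes the Python runtime and an API Gateway style event carrying a JSON `body`. The "model" is a hypothetical three-weight linear scorer standing in for real weights you would ship with the function. It illustrates the handler shape only and does not cover building the AMI.

```python
import json
import time

# Cached at module scope so warm invocations reuse the loaded model.
_MODEL = None


def load_model():
    """Load the model once per execution environment (hypothetical stand-in)."""
    global _MODEL
    if _MODEL is None:
        # Placeholder weights; a real function would load an artifact shipped in
        # the deployment package, a layer, or a container image.
        _MODEL = {"weights": [0.5, -0.25, 1.0], "bias": 0.1}
    return _MODEL


def predict(model, features):
    """Score one feature vector with the linear stand-in model."""
    return sum(w * x for w, x in zip(model["weights"], features)) + model["bias"]


def handler(event, context):
    """Lambda entry point: parse a JSON body, run inference, return a JSON response."""
    model = load_model()
    try:
        body = json.loads(event.get("body") or "{}")
        features = [float(x) for x in body["features"]]
    except (KeyError, TypeError, ValueError) as exc:
        return {"statusCode": 400, "body": json.dumps({"error": f"bad input: {exc!r}"})}
    if len(features) != len(model["weights"]):
        return {"statusCode": 400, "body": json.dumps({"error": "wrong feature count"})}
    start = time.perf_counter()
    score = predict(model, features)
    latency_ms = (time.perf_counter() - start) * 1000
    return {"statusCode": 200, "body": json.dumps({"score": score, "latency_ms": latency_ms})}
```

To test locally, call `handler({"body": json.dumps({"features": [2, 4, 1]})}, None)`. On AWS, configure the function's handler as `<module>.handler`, where `<module>` is the file name without `.py`.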

We are moving from the era of massive, monolithic training clusters to an era of distributed, utility-style inference. The release of efficient small language models and of AWS Lambda durable functions (late 2025) lowered many of the old barriers. This article explores how software developers can scale machine learning inference with AWS Lambda as a cost-effective serverless compute option.

Separately, the GPU cloud company Lambda (unrelated to AWS Lambda) offers its own inference service. If you've ever tried deploying an AI model, you know how involved it can be; Lambda built the Lambda Inference API to make it simple, scalable, and accessible. For over a decade, Lambda has engineered every layer of its stack (hardware, networking, and software) for AI performance and efficiency, and it used that experience to build an inference API on what it describes as an industry-leading inference stack, purpose-built for AI. Its pitch: cut costs, not corners.
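
As far as we know, the Lambda Inference API follows the OpenAI-compatible chat-completions convention, so a request is a plain HTTPS POST with a bearer token. The sketch below uses only the standard library. The base URL `https://api.lambda.ai/v1` and any model ID you pass are assumptions to verify against Lambda's current documentation. `build_chat_request` and `send` are hypothetical helper names, and the request is built separately from sending so it can be inspected offline.

```python
import json
import urllib.request

# Assumed base URL for Lambda's OpenAI-compatible Inference API; verify in the docs.
BASE_URL = "https://api.lambda.ai/v1"


def build_chat_request(api_key, model, prompt, base_url=BASE_URL, max_tokens=256):
    """Build (but do not send) a chat-completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        data = json.loads(resp.read())
    return data["choices"][0]["message"]["content"]
```

Usage, assuming a key in a hypothetical `LAMBDA_API_KEY` environment variable: `send(build_chat_request(os.environ["LAMBDA_API_KEY"], "<model-id>", "Hello"))`. If the OpenAI Python client is already a dependency, pointing its `base_url` at the same endpoint should be equivalent.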

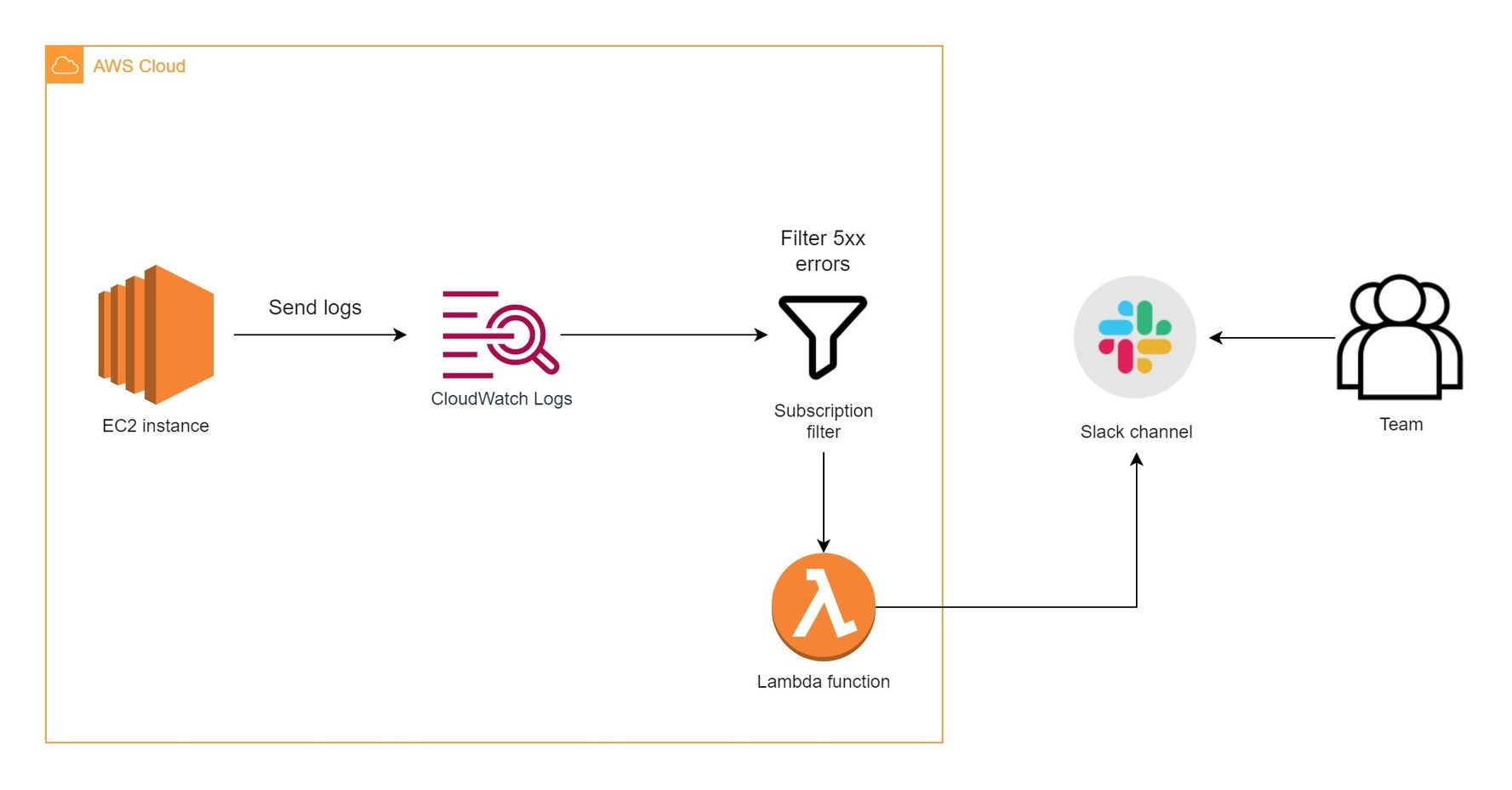

Monitor Lambda ML Inference With CloudWatch Dashboard

In this post, we implemented a model monitoring feature as an AWS Lambda extension, deployed it to an ML inference workload running on AWS Lambda, and showed how to build and deploy the solution in your own AWS account.

The GPU cloud company Lambda (unrelated to AWS Lambda) offers its Inference API for cost-effective model deployment with no rate limits and pay-as-you-go pricing, with access to leading models such as Llama and Hermes, making it a flexible, scalable choice for AI projects. Lambda presents the API as a transformative step forward for AI development: by democratizing access to advanced inference capabilities, it empowers developers and businesses to …

Lambda also provides cloud GPUs, on-demand clusters, private cloud, and hardware for AI training and inference, so teams can run B200 and H100 GPUs, deploy fast, and scale cost-effectively.
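
The monitoring feature described in this section runs as an AWS Lambda extension: a separate process that registers with the Lambda Extensions API and receives `INVOKE` and `SHUTDOWN` events. The sketch below is a hypothetical skeleton, not the post's implementation. It counts invocations and prints CloudWatch Embedded Metric Format (EMF) log lines, which CloudWatch can turn into custom metrics for a dashboard. The extension name `inference-monitor` and the namespace `InferenceMonitor` are assumptions, and it assumes the extension's stdout lands in the function's CloudWatch log group.

```python
import json
import os
import time
import urllib.request

# Hypothetical name; AWS expects it to match the extension's executable file
# name under /opt/extensions.
EXTENSION_NAME = "inference-monitor"
# Path prefix of the Lambda Extensions API.
EXTENSION_API = "2020-01-01/extension"
# Hypothetical CloudWatch namespace for the custom metrics.
NAMESPACE = "InferenceMonitor"


def api_url(path, runtime_api=None):
    """Build an Extensions API URL from the AWS_LAMBDA_RUNTIME_API host:port."""
    runtime_api = runtime_api or os.environ["AWS_LAMBDA_RUNTIME_API"]
    return f"http://{runtime_api}/{EXTENSION_API}/{path}"


def emf_record(namespace, function_name, metrics, unit="Count", now_ms=None):
    """Build an EMF record; printed as one JSON log line, CloudWatch extracts
    the values in `metrics` as custom metrics with a FunctionName dimension."""
    record = {
        "_aws": {
            "Timestamp": now_ms if now_ms is not None else int(time.time() * 1000),
            "CloudWatchMetrics": [{
                "Namespace": namespace,
                "Dimensions": [["FunctionName"]],
                "Metrics": [{"Name": name, "Unit": unit} for name in metrics],
            }],
        },
        "FunctionName": function_name,
    }
    record.update(metrics)
    return record


def register():
    """Register for INVOKE and SHUTDOWN events; return the extension ID."""
    req = urllib.request.Request(
        api_url("register"),
        data=json.dumps({"events": ["INVOKE", "SHUTDOWN"]}).encode("utf-8"),
        headers={"Lambda-Extension-Name": EXTENSION_NAME},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return resp.headers["Lambda-Extension-Identifier"]


def next_event(ext_id):
    """Block until Lambda delivers the next lifecycle event."""
    req = urllib.request.Request(
        api_url("event/next"),
        headers={"Lambda-Extension-Identifier": ext_id},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())


def main():
    ext_id = register()
    function_name = os.environ.get("AWS_LAMBDA_FUNCTION_NAME", "unknown")
    while True:
        event = next_event(ext_id)
        if event.get("eventType") == "SHUTDOWN":
            break
        # One data point per invocation; a real monitor would add model-specific
        # signals collected from the function (for example, prediction statistics).
        print(json.dumps(emf_record(NAMESPACE, function_name, {"Invocations": 1})), flush=True)


# Only start the event loop inside a Lambda execution environment.
if __name__ == "__main__" and "AWS_LAMBDA_RUNTIME_API" in os.environ:
    main()
```

Once the metric appears under the chosen namespace, it can be added to a CloudWatch dashboard like any other custom metric.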

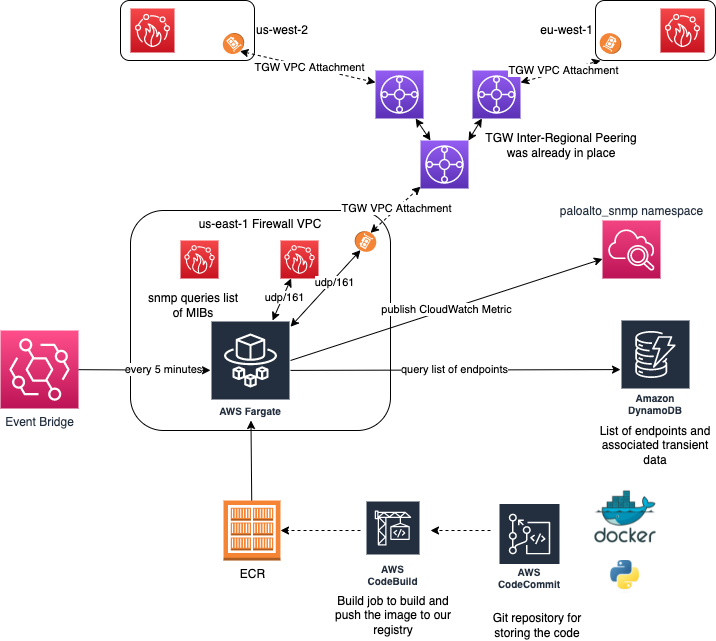

MLPerf Inference v5.0: Lambda's Clusters Prove Ready for Today and …
