Implicit Neural Representations For Image Compression Deepai

This work investigates INRs from a novel perspective, i.e., as a tool for image compression. To this end, we propose the first comprehensive compression pipeline based on INRs, including quantization, quantization-aware retraining, and entropy coding.
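The quantization and entropy coding stages of such a pipeline can be illustrated with a minimal sketch. It assumes plain uniform quantization and uses the empirical Shannon entropy of the integer codes as a rough proxy for the size an entropy coder could reach; the function names and the random stand-in weights are hypothetical, and quantization-aware retraining is not shown. It is not the pipeline from the work described above.

```python
import numpy as np

def quantize_uniform(w, bits):
    """Map float weights to integer codes on a uniform grid over [w.min(), w.max()]."""
    levels = 2 ** bits - 1
    lo, hi = float(w.min()), float(w.max())
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = np.round((w - lo) / scale).astype(np.int64)
    return codes, lo, scale

def dequantize(codes, lo, scale):
    """Recover approximate float weights from integer codes."""
    return codes * scale + lo

def empirical_entropy_bits(codes):
    """Shannon entropy of the code histogram, in bits per symbol."""
    _, counts = np.unique(codes, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())

rng = np.random.default_rng(0)
# Assumption: Gaussian noise stands in for the weights of a trained INR.
weights = rng.normal(0.0, 0.1, size=10_000)

codes, lo, scale = quantize_uniform(weights, bits=8)
restored = dequantize(codes, lo, scale)

max_err = float(np.abs(weights - restored).max())   # bounded by half a grid step
entropy = empirical_entropy_bits(codes)             # at most 8 bits per weight here
print(f"max error {max_err:.5f}, entropy {entropy:.2f} bits/weight")
```

Because the codes cluster around the centre of the grid, their entropy falls below the nominal 8 bits per weight, which is the saving an entropy coder would try to exploit.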

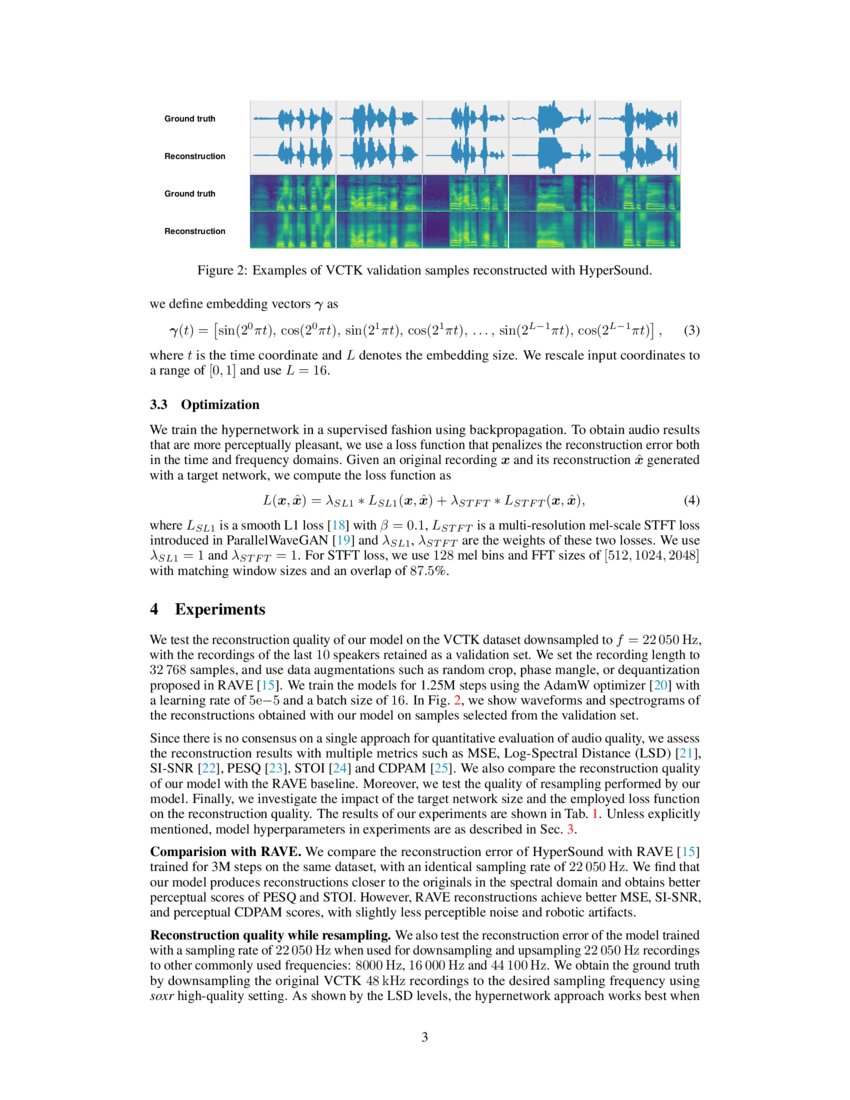

Siamese Siren Audio Compression With Implicit Neural Representations

…pression, particularly focusing on image compression. Recently, implicit neural representations (INRs) gained popularity as a flexible, multi-purpose data representation that is able to produce high-fidelity samples…

INRs are a way to represent coordinate-based data as a function. For example, an image is nothing but a function f(x, y) = (r, g, b): we assign an (r, g, b) color to the pixel at location (x, y). We can train a neural network to fit this function by optimization, and the result is an INR.

Fig. 1: Method overview. We summarize our approach to using INRs for compression, in which the model weights serve as the representation of an image. We also visualize how a meta-learned initialization θ0 is used in the encoding and decoding process so that only the weight update Δθ is compressed into the bitstream.
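The idea of an image as a function f(x, y) = (r, g, b) fitted by a network can be sketched in a few lines of NumPy. This is a toy under stated assumptions: a synthetic 16x16 image, a single sine-activated hidden layer in the spirit of SIREN, hand-written gradients, and hypothetical hyperparameters. A real INR would use a deeper network and an automatic differentiation framework.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy 16x16 RGB "image": smooth gradients standing in for real pixel data.
H, W = 16, 16
ys, xs = np.meshgrid(np.linspace(-1, 1, H), np.linspace(-1, 1, W), indexing="ij")
coords = np.stack([xs.ravel(), ys.ravel()], axis=1)              # (H*W, 2): inputs (x, y)
target = np.stack([(xs + 1) / 2, (ys + 1) / 2, (xs * ys + 1) / 2],
                  axis=-1).reshape(-1, 3)                        # (H*W, 3): outputs (r, g, b)

# One hidden layer with a sine activation, followed by a linear output layer.
hidden = 32
W1 = rng.uniform(-1, 1, size=(2, hidden)) * 3.0
b1 = np.zeros(hidden)
W2 = rng.uniform(-1, 1, size=(hidden, 3)) * np.sqrt(6 / hidden)
b2 = np.full(3, 0.5)

def forward(x):
    z = x @ W1 + b1
    h = np.sin(z)
    return z, h, h @ W2 + b2

def mse(pred):
    return float(np.mean((pred - target) ** 2))

initial_loss = mse(forward(coords)[2])

lr = 0.01
for _ in range(2000):
    z, h, pred = forward(coords)
    grad_pred = 2.0 * (pred - target) / pred.size   # dMSE/dpred
    grad_W2 = h.T @ grad_pred
    grad_b2 = grad_pred.sum(axis=0)
    grad_z = (grad_pred @ W2.T) * np.cos(z)         # backprop through sin
    grad_W1 = coords.T @ grad_z
    grad_b1 = grad_z.sum(axis=0)
    W1 -= lr * grad_W1
    b1 -= lr * grad_b1
    W2 -= lr * grad_W2
    b2 -= lr * grad_b2

pred = forward(coords)[2]
final_loss = mse(pred)
print(f"MSE before {initial_loss:.4f}, after {final_loss:.4f}")
```

After fitting, the arrays `W1`, `b1`, `W2`, and `b2` are the representation of the image: evaluating `forward` on the coordinate grid reproduces an approximation of the pixels.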

Hypersound Generating Implicit Neural Representations Of Audio Signals

We show that our INR-based compression algorithm, meta-learning combined with SIREN and positional encodings, outperforms JPEG2000 and rate-distortion autoencoders on Kodak with 2x reduced dimensionality for the first time, and closes the gap on full-resolution images.

In this paper, we propose an enhanced quantified local implicit neural representation (EQLINR) for image compression by enhancing the utilization of local relationships of INR and narrow…

Finally, given the vast number of potentially suitable hyperparameter configurations, we have noticed that there is a chance to reduce the measured performance gap between well-established image compression methods such as JPEG and SIREN-compressed models.

We propose a new, simple approach to image compression: instead of storing the RGB values for each pixel of an image, we store the weights of a neural network overfitted to the image. Specifically, to encode an image, we fit it with an MLP that maps pixel locations to RGB values.
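To see why storing weights instead of pixels can pay off, it helps to work out the bit rate. The sketch below counts the parameters of a small fully connected network and converts them to bits per pixel. The layer widths and the 16-bit weight precision are hypothetical choices for illustration, not values taken from the works above; 768x512 is the resolution of a landscape Kodak image.

```python
def mlp_param_count(layer_sizes):
    """Weights plus biases of a fully connected network with the given layer widths."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]))

def bits_per_pixel(num_params, bits_per_weight, height, width):
    """Bit rate when the stored weights are the only payload."""
    return num_params * bits_per_weight / (height * width)

# Hypothetical INR: (x, y) -> three hidden layers of width 32 -> (r, g, b).
layers = [2, 32, 32, 32, 3]
num_params = mlp_param_count(layers)

# Assumption: each weight is stored with 16 bits, with no entropy coding.
bpp_inr = bits_per_pixel(num_params, bits_per_weight=16, height=512, width=768)
bpp_raw = 3 * 8  # uncompressed 8-bit RGB

print(f"{num_params} parameters, {bpp_inr:.4f} bpp vs {bpp_raw} bpp raw")
```

The rate depends only on the network size and weight precision, not on the image content, so the open question is how much reconstruction quality a network of that size can reach.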
