Implementing Memory Pooling Techniques for GPU Resource Allocation

Whether you're working on a graphics application, a machine learning model, or any other GPU-intensive task, memory pooling is a technique worth considering. By following the steps outlined in this article, you can create a simple yet effective memory pool in CUDA. In this work, we accelerate the Python implementation of the pipeline through customized and commercial GPU-enabled software libraries, and develop a C implementation for inferencing the …
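The idea of a simple memory pool can be sketched on the host side before any GPU code is involved. The example below is a minimal illustration, not a CUDA implementation: the class name `FixedBlockPool` and its methods are hypothetical, and a `bytearray` stands in for what would be a single large device allocation (for example, one `cudaMalloc` call) in a real CUDA pool. It shows only the bookkeeping: reserve one arena up front, then hand out and recycle fixed-size blocks by offset.

```python
class FixedBlockPool:
    """Host-side simulation of a fixed-size block pool (hypothetical class)."""

    def __init__(self, block_size: int, num_blocks: int) -> None:
        if block_size <= 0 or num_blocks <= 0:
            raise ValueError("block_size and num_blocks must be positive")
        self.block_size = block_size
        self.num_blocks = num_blocks
        # One up-front allocation. Assumption: in CUDA this would be a
        # single large device allocation instead of a bytearray.
        self.arena = bytearray(block_size * num_blocks)
        # Free list of block offsets, ordered so pop() yields the lowest first.
        self._free = [i * block_size for i in reversed(range(num_blocks))]
        self._in_use = set()

    def allocate(self) -> int:
        """Return the offset of a free block, or raise if none is left."""
        if not self._free:
            raise MemoryError("pool exhausted")
        offset = self._free.pop()
        self._in_use.add(offset)
        return offset

    def release(self, offset: int) -> None:
        """Return a block to the pool; rejects unknown or double frees."""
        if offset not in self._in_use:
            raise ValueError(f"offset {offset} is not currently allocated")
        self._in_use.remove(offset)
        self._free.append(offset)

    def view(self, offset: int) -> memoryview:
        """Writable view of one block inside the arena."""
        return memoryview(self.arena)[offset:offset + self.block_size]


if __name__ == "__main__":
    pool = FixedBlockPool(block_size=256, num_blocks=4)
    first = pool.allocate()
    pool.view(first)[:5] = b"hello"
    pool.release(first)
    print("blocks free:", len(pool._free))
```

Allocation and release here are constant-time list operations, which is the main reason a pool of this kind tends to be cheaper than asking the underlying allocator for memory on every request.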

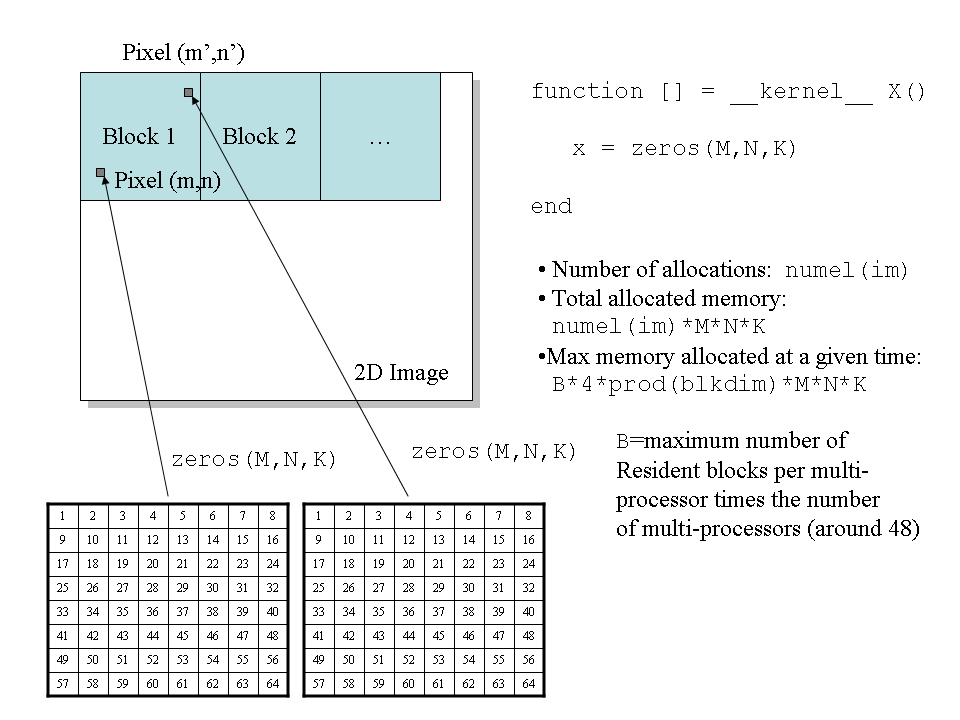

Implementing Memory Pooling Techniques for a GPU-Based Scoring Algorithm

Transform expensive GPU resources into flexible pools serving multiple workloads, with up to 90% cost savings. RMM enables pool allocation, which can improve performance considerably. One paper proposes a systematic profiling and evaluation framework that leverages NVIDIA's RMM to optimize and understand the data loading performance of the cudf.read_csv operation in GPU-accelerated environments. Another paper focuses on implementing the Sobel edge detection algorithm within the CUDA environment, emphasizing the use of shared memory to overcome the performance degradation associated with global memory accesses. Compared with traditional memory allocation methods, memory pool management can greatly improve the efficiency of memory allocation and release and reduce the generation of memory …
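The claim that pooling makes allocation and release cheaper can be illustrated by counting how often the underlying allocator is actually called. The sketch below is an assumption-laden, host-only illustration: `CountingUpstream`, `CachingPool`, and `run_workload` are hypothetical names, a `bytearray` stands in for device memory, and none of this is RMM's API. It only shows the general pattern of a pool that keeps released buffers and reuses them for later requests of the same size.

```python
from collections import defaultdict


class CountingUpstream:
    """Stand-in for an expensive allocator; counts how often it is called."""

    def __init__(self) -> None:
        self.alloc_calls = 0

    def allocate(self, nbytes: int) -> bytearray:
        self.alloc_calls += 1
        return bytearray(nbytes)


class CachingPool:
    """Keeps released buffers and reuses them for same-sized requests."""

    def __init__(self, upstream: CountingUpstream) -> None:
        self._upstream = upstream
        self._idle = defaultdict(list)  # buffer size -> list of idle buffers

    def allocate(self, nbytes: int) -> bytearray:
        idle = self._idle[nbytes]
        if idle:
            return idle.pop()  # reuse: no upstream call needed
        return self._upstream.allocate(nbytes)

    def release(self, buf: bytearray) -> None:
        self._idle[len(buf)].append(buf)


def run_workload(pool: CachingPool, iterations: int, nbytes: int) -> None:
    """Allocate and immediately release one buffer per iteration."""
    for _ in range(iterations):
        buf = pool.allocate(nbytes)
        pool.release(buf)


if __name__ == "__main__":
    upstream = CountingUpstream()
    run_workload(CachingPool(upstream), iterations=1000, nbytes=4096)
    print("upstream allocations:", upstream.alloc_calls)
```

In this toy workload every request after the first is served from the idle list, so the expensive allocator is reached once rather than once per iteration.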

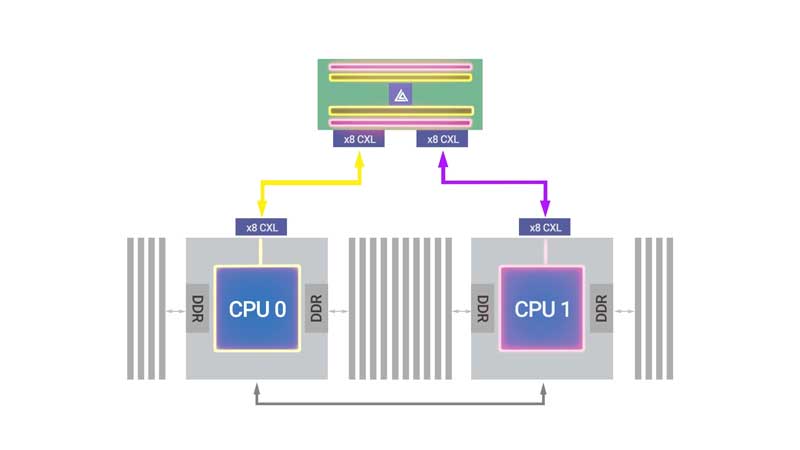

Dynamic Memory Allocation on GPU

Best practices for mitigating GPU memory fragmentation include preallocating memory pools, using stream-ordered memory allocators, and avoiding frequent small allocations. One paper presents gpooling, a novel pooling scheme that leverages device driver hijacking to optimize GPU resource allocation; its authors designed a benchmark based on real-world traces from a campus data center and deployed gpooling within a GPU cluster environment. Another replaces local HBMs and conventional electrical networking interfaces with photonic I/Os to build a unified high-bandwidth photonic communication domain that enables reconfigurable access to both memory and network resources. A further article explores various techniques to reduce GPU overhead when deploying NLP models, enabling faster processing, better resource utilization, and enhanced scalability.
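One common way to keep many small, odd-sized requests from fragmenting a pool is to round each request up to a size class, so that freed blocks are interchangeable within a class. The sketch below is illustrative only: the function names and the 256-byte minimum class are assumptions chosen for the example, not values taken from any particular allocator.

```python
def size_class(nbytes: int, min_class: int = 256) -> int:
    """Round a request up to the next power-of-two multiple of min_class.

    min_class=256 is an illustrative assumption, not a required value.
    """
    if nbytes <= 0:
        raise ValueError("nbytes must be positive")
    cls = min_class
    while cls < nbytes:
        cls *= 2
    return cls


def distinct_sizes(requests: list[int], min_class: int = 256) -> tuple[int, int]:
    """Return (distinct raw sizes, distinct size classes) for a request list."""
    raw = len(set(requests))
    binned = len({size_class(n, min_class) for n in requests})
    return raw, binned


if __name__ == "__main__":
    requests = [100, 130, 200, 260, 300, 500, 700, 900]
    raw, binned = distinct_sizes(requests)
    print(f"{raw} raw sizes collapse into {binned} size classes")
```

The trade-off is some wasted space inside each block in exchange for far fewer distinct block sizes, which makes it more likely that a freed block can satisfy a later request.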

