Image Captioning Using Encoder Decoder

GitHub Amirmshebly Image Captioning Using Encoder Decoder

In this paper, we propose a hierarchical encoder-decoder for image captioning (HierCap). The core of our work is a hierarchical encoder comprising global, regional, and grid sub-encoders, which capture multi-level semantic information from images. A related paper proposes an enhancement to image captioning that builds on the vision encoder-decoder model.

GitHub Itaishufaro Encoder Decoder Image Captioning Project For The

By combining the power of CNNs for feature extraction with the flexibility of the encoder-decoder architecture for caption generation, we aimed to develop a robust and efficient image captioning system. Another research work presents an automated optimization deep learning model for image caption generation: the input image is first pre-processed, and an encoder-decoder structure is then used to extract the visual features and generate the caption. Given the performance gains seen with the encoder-decoder architecture, we limit our focus to encoder-decoder approaches to English image captioning and how these techniques can be used for Arabic image captioning. In this blog we provide hands-on tutorials on implementing three different transformer-based encoder-decoder image captioning models using ROCm running on AMD GPUs.

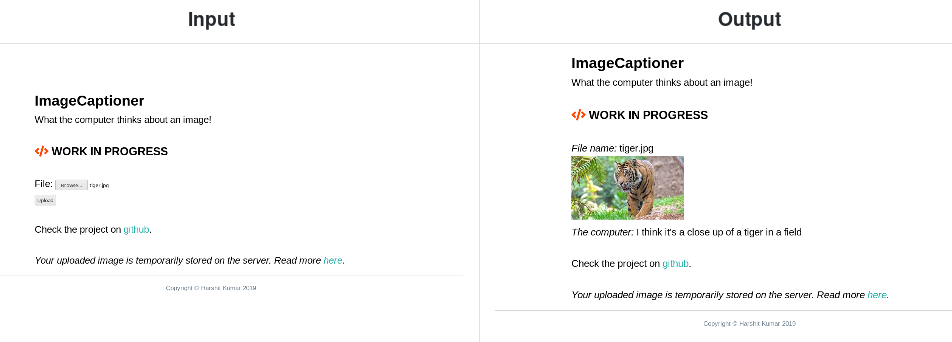

Today we will learn how to solve the well-known deep learning problem of captioning an image. Because of its wide range of uses, image captioning has attracted a lot of attention. The major challenge is bridging the semantic gap between visuals and text, which requires strong feature representations and context-aware language generation algorithms. Using its memory capabilities, the LSTM learns to map words to the specific features extracted from the images and to form a meaningful caption summarizing the scene. The "encoder-decoder pipeline" approach to image captioning has proven effective in bridging the gap between visual content and natural language descriptions.
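
To make the decoding step more concrete, here is a minimal sketch of how a decoder can turn image features into a caption one word at a time. It assumes greedy decoding (always picking the highest-scoring next word), which is one common choice and not necessarily what any of the projects above use. The names `greedy_caption`, `toy_step`, `VOCAB`, and the two-element feature vector are hypothetical; `toy_step` is a hand-written stand-in for a trained LSTM step, not a real model.

```python
from typing import Callable, Dict, List, Sequence

START, END = "<start>", "<end>"
VOCAB = ["a", "dog", "cat", "on", "grass", END]  # hypothetical toy vocabulary

# A step function sees the image features and the words generated so far,
# and returns a score for every candidate next word.
StepFn = Callable[[Sequence[float], List[str]], Dict[str, float]]


def greedy_caption(features: Sequence[float], step: StepFn,
                   max_len: int = 20) -> List[str]:
    """Build a caption by repeatedly appending the highest-scoring word."""
    words = [START]
    for _ in range(max_len):
        scores = step(features, words)
        best = max(scores, key=scores.get)
        if best == END:  # the decoder signals that the caption is complete
            break
        words.append(best)
    return words[1:]  # drop the start token


def toy_step(features: Sequence[float], words: List[str]) -> Dict[str, float]:
    """Hypothetical stand-in for one step of a trained LSTM decoder.

    Assumes features[0] > 0.5 means "dog visible" and
    features[1] > 0.5 means "grass visible".
    """
    script = [START, "a", "dog" if features[0] > 0.5 else "cat"]
    if features[1] > 0.5:
        script += ["on", "grass"]
    script.append(END)
    target = script[len(words)]  # the word that follows the current prefix
    return {w: (1.0 if w == target else 0.0) for w in VOCAB}


print(" ".join(greedy_caption([0.9, 0.9], toy_step)))  # a dog on grass
```

In a real system, `toy_step` would be replaced by a forward pass of the decoder that takes the encoder's feature vector and the previous words as input. Beam search is a frequent alternative to the greedy choice shown here.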
