ICCV 2025: Task Vector Quantization for Memory-Efficient Model Merging

Paper review: Task Vector Quantization for Memory-Efficient Model Merging. We address the memory overhead in model merging by introducing task vector quantization, which exploits the narrow weight range of task vectors for effective low-precision storage. In this paper, we propose quantizing task vectors (i.e., the difference between pre-trained and fine-tuned checkpoints) instead of quantizing fine-tuned checkpoints.
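The idea above can be illustrated with a small sketch. It is not the authors' implementation: it assumes a plain uniform asymmetric quantizer, synthetic toy weights, and hypothetical helper names (`quantize`, `dequantize`, `mean_abs_error`). It only shows why a narrow value range helps at a fixed bit width.

```python
import random

def quantize(values, bits):
    """Uniform asymmetric quantization: integer codes plus (scale, minimum)."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo

def dequantize(codes, scale, lo):
    """Map integer codes back to approximate real values."""
    return [c * scale + lo for c in codes]

def mean_abs_error(a, b):
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

random.seed(0)
# Hypothetical toy weights: pre-trained weights span a wide range,
# while fine-tuning moves each weight only slightly.
theta_pre = [random.uniform(-1.0, 1.0) for _ in range(1000)]
theta_ft = [w + random.uniform(-0.01, 0.01) for w in theta_pre]

# Task vector: difference between fine-tuned and pre-trained checkpoints.
tau = [f - p for f, p in zip(theta_ft, theta_pre)]

bits = 4
# FQ: quantize the fine-tuned checkpoint directly.
fq = dequantize(*quantize(theta_ft, bits))
# TVQ: quantize the task vector, then add the pre-trained weights back.
tau_hat = dequantize(*quantize(tau, bits))
tvq = [p + t for p, t in zip(theta_pre, tau_hat)]

err_fq = mean_abs_error(fq, theta_ft)
err_tvq = mean_abs_error(tvq, theta_ft)
print(f"FQ reconstruction error:  {err_fq:.6f}")
print(f"TVQ reconstruction error: {err_tvq:.6f}")
```

Because the quantization step is proportional to the value range, the same 4 bits cover the narrow task vector far more finely than they cover the full checkpoint, so the reconstructed fine-tuned weights are closer to the originals in the TVQ case.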

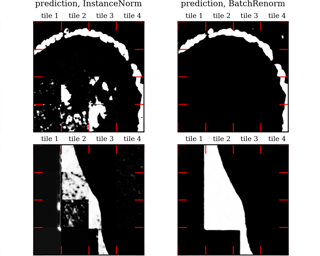

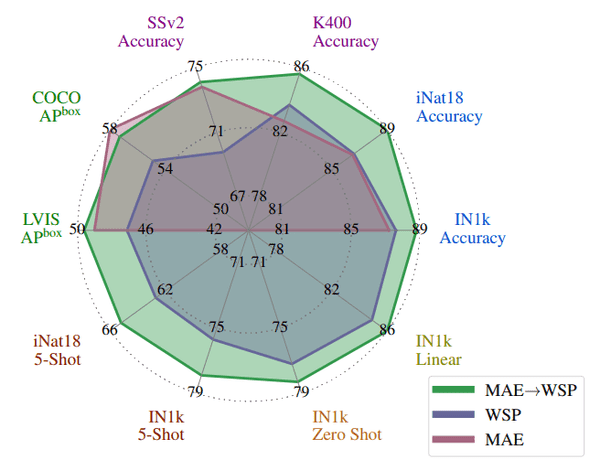

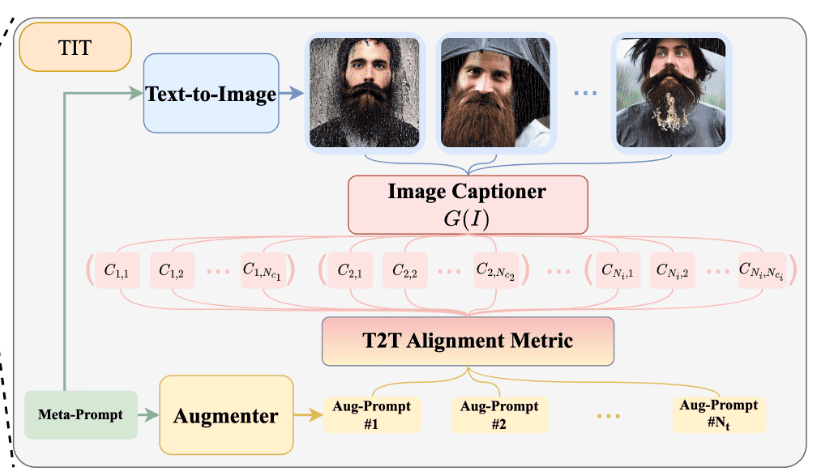

Our paper on TVQ for model merging has been accepted to ICCV 2025 (Youngeun Kim).

Model merging enables efficient multi-task models by combining task-specific fine-tuned checkpoints. We address the memory overhead of storing full-precision checkpoints in model merging by introducing task vector quantization (TVQ), which leverages the naturally narrow weight range of task vectors for effective low-precision quantization. Quantizing task vectors instead of fine-tuned checkpoints reduces memory usage while maintaining or improving model merging performance in multi-task models.

We allocate bits based on quantization sensitivity, ensuring precision while minimizing error within a memory budget. Experiments on image classification and dense prediction show that our method maintains or improves model merging performance while using only 8% of the memory required for full-precision checkpoints. To assess the impact of quantization on model merging, we first quantize fine-tuned checkpoints (FQ), task vectors (TVQ), and residual task vectors (RTVQ), then apply these weights to various merging methods. We compare all methods with their full-precision (FP32) counterparts across multiple tasks.
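The text here does not spell out how residual task vectors are formed or how bits are assigned, so the following is only a sketch under stated assumptions: a shared base vector taken as the mean of all task vectors, per-task offsets computed against the quantized base, more bits for the base than for the offsets, and synthetic data. All names (`quantize_dequantize`, `base_bits`, `offset_bits`) are hypothetical.

```python
import random

def quantize_dequantize(values, bits):
    """Uniform asymmetric quantization followed by dequantization."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    return [round((v - lo) / scale) * scale + lo for v in values]

def mean_abs_error(a, b):
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

random.seed(0)
num_tasks, num_weights = 8, 2000

# Hypothetical task vectors: a shared component plus a small task-specific part.
shared = [random.gauss(0.0, 0.01) for _ in range(num_weights)]
task_vectors = [[s + random.gauss(0.0, 0.002) for s in shared]
                for _ in range(num_tasks)]

# Assumed decomposition: base = mean of all task vectors (stored once).
base = [sum(col) / num_tasks for col in zip(*task_vectors)]
base_bits, offset_bits = 4, 2  # assumption: the shared base gets more bits
base_hat = quantize_dequantize(base, base_bits)

# Residual variant: quantize each task's offset from the quantized base.
rtvq = []
for tau in task_vectors:
    offset = [t - b for t, b in zip(tau, base_hat)]
    offset_hat = quantize_dequantize(offset, offset_bits)
    rtvq.append([b + o for b, o in zip(base_hat, offset_hat)])

# Baseline: quantize each task vector directly at the low bit width.
tvq = [quantize_dequantize(tau, offset_bits) for tau in task_vectors]

err_rtvq = sum(mean_abs_error(r, t) for r, t in zip(rtvq, task_vectors)) / num_tasks
err_tvq = sum(mean_abs_error(q, t) for q, t in zip(tvq, task_vectors)) / num_tasks

# The base is shared, so its cost is amortized over all tasks.
avg_bits = offset_bits + base_bits / num_tasks
print(f"direct {offset_bits}-bit error: {err_tvq:.6f}")
print(f"residual error:       {err_rtvq:.6f}")
print(f"average bits per weight per task: {avg_bits}")
```

In this toy setting the offsets have a narrower range than the task vectors themselves, so spending a few extra amortized bits on the shared base lowers the error at a similar memory cost. Whether real task vectors share this much structure is an empirical question that the sketch does not answer.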

Our method, task vector quantization (TVQ), leverages the narrow weight range of task vectors for low-bit quantization (≤ 4 bits) while preserving merging performance.
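To put the bit widths in terms of memory, here is a back-of-the-envelope calculation. It assumes storage is dominated by the weights themselves and ignores per-tensor metadata such as scales and offsets; `memory_fraction` is a hypothetical helper.

```python
FP32_BITS = 32

def memory_fraction(bits_per_weight):
    """Fraction of FP32 storage used, ignoring scale/offset metadata."""
    return bits_per_weight / FP32_BITS

for bits in (8, 4, 3, 2):
    print(f"{bits}-bit: {memory_fraction(bits):.1%} of FP32 storage")

# Average bits per weight implied by an 8% memory budget.
budget_bits = 0.08 * FP32_BITS
print(f"8% budget ~ {budget_bits:.2f} bits per weight")
```

Under these assumptions, 4-bit storage corresponds to 12.5% of FP32 memory, and an 8% budget corresponds to roughly 2.56 bits per weight on average, which is below 3 bits.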

