High Performance Memory for AI and HPC

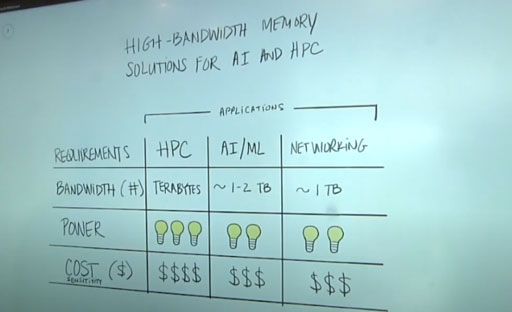

Platform for Deep Learning Acceleration by HPC-AI Tech

Memory-intensive applications such as high-performance computing (HPC), graphics processing units (GPUs), and AI accelerators, areas once dominated by DDR and GDDR memory, now rely on HBM to meet the data throughput requirements of training and inference. High-speed embedded memory is essential to enable the compute efficiency needed for the next generation of high-performance computing (HPC) chips. This paper prov.

Next-generation memory systems for HPC and AI face significant challenges. These systems must deliver increased capacity and performance within a constrained power budget. We design and evaluate memory architectures that meet the requirements of these critical applications.

JEDEC’s HBM4 and the emerging SPHBM4 standard boost bandwidth and expand packaging options, helping AI and HPC systems push past the memory and I/O walls.

Frank Ferro, senior director of product management at Rambus, examines the current performance bottlenecks in high-performance computing, drilling down into power and performance for different memory options, and explains which solutions are best for different applications and why.

At Supercomputing 2025, SK hynix showcased advanced memory technologies for AI and high-performance computing, including HBM4, DRAM, and eSSD solutions.
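The "memory wall" mentioned above can be made concrete with a roofline-style estimate: a kernel's attainable throughput is capped either by the chip's peak compute or by memory bandwidth multiplied by the kernel's arithmetic intensity (FLOPs per byte moved). The following is a minimal sketch, not a model of any specific product. The function names and the peak-compute and bandwidth figures are hypothetical values chosen for illustration.

```python
def attainable_tflops(peak_tflops: float,
                      bandwidth_tb_per_s: float,
                      flops_per_byte: float) -> float:
    """Roofline bound: the lower of the compute roof and the memory roof.

    TB/s multiplied by FLOP/byte gives TFLOP/s, so the units line up.
    """
    memory_roof = bandwidth_tb_per_s * flops_per_byte
    return min(peak_tflops, memory_roof)


def ridge_point(peak_tflops: float, bandwidth_tb_per_s: float) -> float:
    """Arithmetic intensity (FLOP/byte) above which a kernel is compute-bound."""
    return peak_tflops / bandwidth_tb_per_s


if __name__ == "__main__":
    # Hypothetical accelerator: 100 TFLOP/s peak compute, 2 TB/s memory bandwidth.
    peak, bandwidth = 100.0, 2.0
    for intensity in (1.0, 10.0, 100.0):
        bound = attainable_tflops(peak, bandwidth, intensity)
        regime = "memory-bound" if intensity < ridge_point(peak, bandwidth) else "compute-bound"
        print(f"{intensity:6.1f} FLOP/byte -> {bound:6.1f} TFLOP/s ({regime})")
```

Under these assumed numbers, any kernel below 50 FLOP/byte is limited by memory rather than compute, which is why raising memory bandwidth lifts real throughput for low-intensity workloads.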

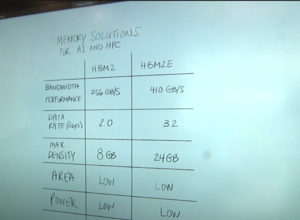

High Performance Memory for AI/ML and HPC, Part 1 (Rambus)

Innovations in high-performance memory solutions, such as high bandwidth memory (HBM) and DDR5, address the need for high bandwidth and low latency, both of which are crucial for AI workloads that process large data sets in real time.

The AMD Instinct MI300A accelerator combines a CPU and GPU for running HPC and AI workloads. It offers HBM3 as the dedicated memory, with a unified capacity of up to 128 GB. Similarly, the AMD Instinct MI300X is a GPU-only accelerator designed for low-latency AI processing.

With HBM3E, the result is a substantial 2.5× improvement in performance per watt compared to HBM2E, while maintaining backward compatibility with existing HBM3 controllers. HBM3E is already deployed in systems such as NVIDIA’s H200 and has effectively become the baseline memory technology for today’s AI training, HPC, and data center acceleration platforms.

Storage matters as well: managing the vast volumes of data involved in modern, high-fidelity HPC simulations, modeling, and AI model training requires a high-performance storage solution.
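To put the bandwidth difference between memory types into numbers, peak interface bandwidth can be approximated as bus width times per-pin data rate, divided by eight to convert bits to bytes. The sketch below applies that arithmetic. The helper name is hypothetical, and the widths and pin rates (a 1024-bit stack at 6.4 Gb/s per pin, a 64-bit channel at 6.4 Gb/s per pin) are illustrative assumptions that do not come from this article; check the relevant JEDEC specification or vendor datasheet for actual values.

```python
def peak_bandwidth_gb_per_s(bus_width_bits: int,
                            pin_rate_gbit_per_s: float,
                            devices: int = 1) -> float:
    """Theoretical peak bandwidth in GB/s for `devices` identical interfaces.

    bits * (Gbit/s per pin) / 8 gives GB/s. Sustained bandwidth in a real
    system is lower than this upper bound.
    """
    return bus_width_bits * pin_rate_gbit_per_s / 8 * devices


if __name__ == "__main__":
    # Assumed example: one wide stacked-memory interface.
    stack = peak_bandwidth_gb_per_s(1024, 6.4)
    # Assumed example: one conventional 64-bit DIMM channel at the same pin rate.
    channel = peak_bandwidth_gb_per_s(64, 6.4)
    print(f"1024-bit interface: {stack:.1f} GB/s")
    print(f"  64-bit interface: {channel:.1f} GB/s")
    print(f"ratio: {stack / channel:.0f}x")
```

At an equal pin rate the gap comes entirely from interface width, which is the main lever that stacked memory uses to raise bandwidth.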

AI-Powered HPC Market to Reach 37.4 Billion by 2028
